

Project Number

1578

Project Title

Internet Scale Overlay Hosting

a.k.a. SPP

Technical Contacts

PI: Jon Turner

Patrick Crowley pcrowley@wustl.edu

Participating Organizations

Washington University, St. Louis, MO

Scope

The objective of the project is to acquire, assemble, deploy and operate five high-performance overlay hosting platforms and make them available to the research community as part of the emerging GENI infrastructure. These systems will be hosted in the Internet2 backbone at locations to be determined. We will provide a control interface compatible with the emerging GENI control framework, allowing the network-scale control software provided by Princeton to configure the systems in response to requests from research users. The project will leverage and extend our Supercharged PlanetLab Platform (SPP) to provide an environment in which researchers can experiment with the kinds of capabilities that will ultimately be integrated into GENI. We also plan to incorporate the NetFPGA to enable experimentation with hardware packet processing in the overlay context.

Current Capabilities

Three Supercharged PlanetLab Platforms have been deployed, in Washington DC, Kansas City, and Salt Lake City.

Milestones

- SPP: Limited research available
- SPP: Component Manager ICD
- SPP: User Web Site
- SPP: S2.a geniwrapper
- SPP: S2.b rspec
- SPP: S2.c userdoc
- SPP: S2.d demo6 (a description of the demo is available)
- SPP: S2.e demo7
- SPP: S2.f demo8
- SPP: S2.g tutorial7
- SPP: S2.h tutorial8
- SPP: S2.i ops support
- SPP: S2.j secreview
- SPP: S2.k opsreview
- SPP: S2.l transition plan
- SPP: S2.m code
- SPP: S2.n openflow
- SPP: S2.o interfacedoc

Project Technical Documents

WUSTL wiki: Internet Scale Overlay Hosting

SPP System Architecture

Quarterly Status Reports

- 4Q08 Status Report
- 1Q09 Status Report
- 2Q09 Status Report
- 3Q09 Status Report
- 4Q09 Status Report
- 1Q10 Status Report

Recommended reading

- Main Project Page
- GEC Presentation (10/2008)
- SIGCOMM 2007 Paper on SPP Nodes
- ANCS 2006 Paper on a GENI Backbone Platform Architecture

Current Deployment (as of 11/2009)

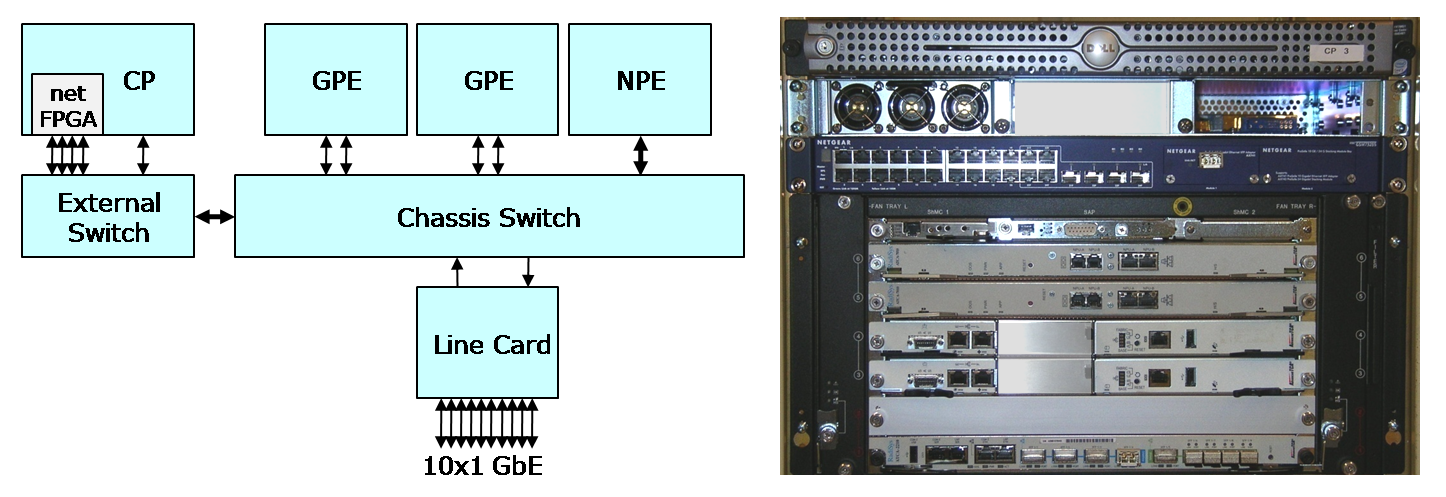

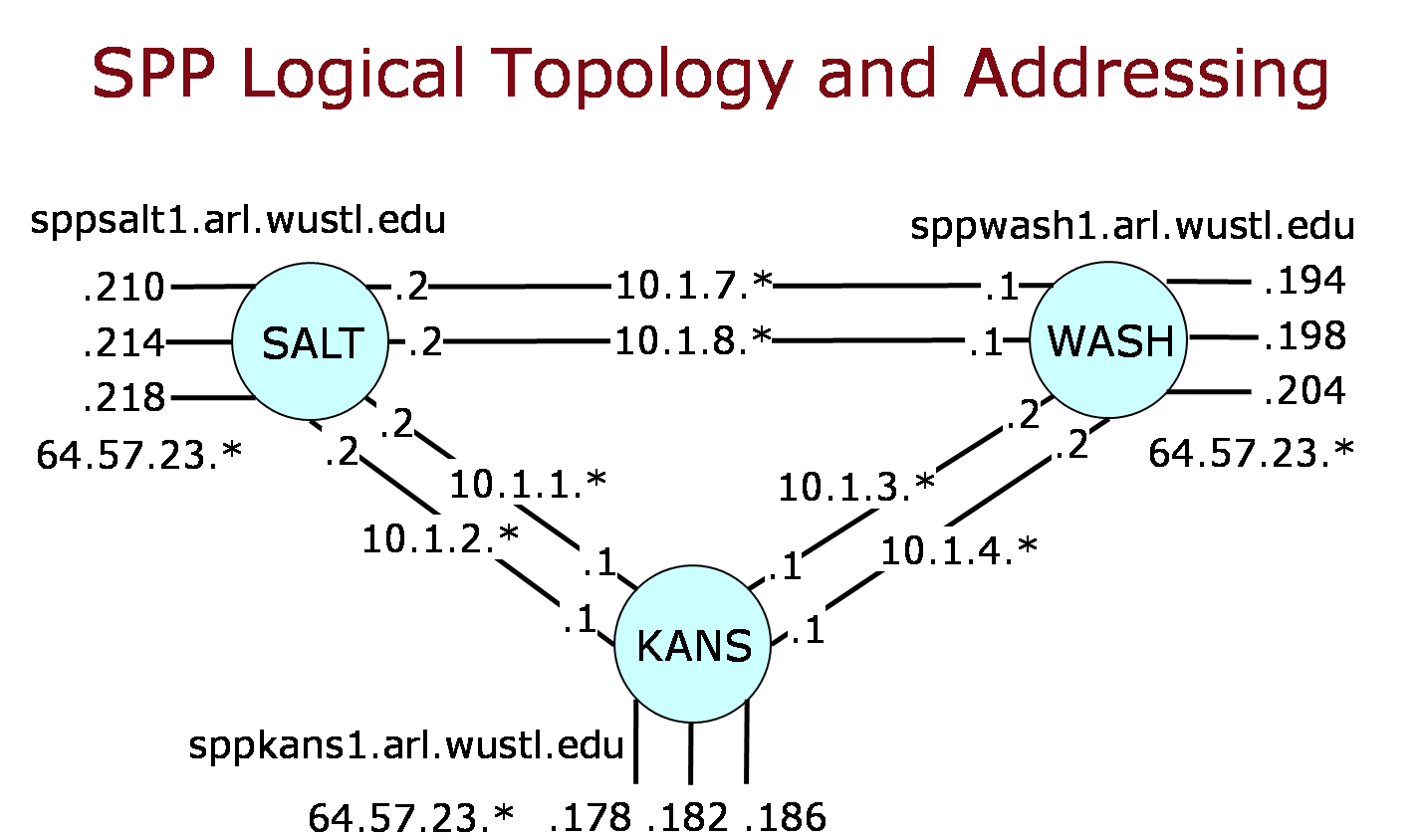

SPP nodes are currently located at three Internet2 backbone sites: Salt Lake City, Kansas City and Washington DC. Additional systems will be installed in Atlanta and Houston in the second or third quarter of 2010. Each of the three current SPPs is connected to the other SPPs by a pair of 1 Gb/s links, implemented as static point-to-point VLANs. These links are routed through the ProtoGENI switch at each site onto optical links provided by Internet2. Each of the three SPPs also has three 1 Gb/s links to the Internet2 router at its site; VLAN tags are used to route these connections through an Internet2 switch to the router. The SPP VLAN tags are used purely for routing through the connecting components and are not visible to GENI researchers. A diagram of the SPP node configuration, showing its connection requirements, is also available.

Size & Power Specifications

ATCA Chassis: 6U rack space, 1200 W max at -48 V (via two 1/4"-20 UNC studs). The chassis can use two redundant power sources, if available; each must be rated for at least 25 A at -48 V.

Power Supply: 1U rack space, one NEMA 5-15 receptacle (if -48 V is not available, this power supply will provide it)

Control Processor: 1U rack space, one NEMA 5-15 receptacle (650W max)

24-port Switch: 1U rack space, one NEMA 5-15 receptacle (240W max)

The total rack space is thus 8U or 9U, depending on whether the external power supply is required. Likewise, two or three power receptacles are needed, again depending on whether the external power supply is required. The power budget above is sufficient for any expansion we do inside the ATCA chassis. Any external addition will need its own rack space and power receptacles.
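As a quick check on these totals, the sketch below tallies rack units and NEMA 5-15 receptacles from the figures listed above, and shows that the 25 A per-feed rating matches the 1200 W chassis maximum at 48 V (I = P/V). It assumes each redundant -48 V feed must be able to carry the full chassis load on its own; the `Component` class and function names are illustrative only.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Component:
    name: str
    rack_units: int
    receptacles: int  # NEMA 5-15 receptacles required

# Figures taken from the size and power list above.
CHASSIS = Component("ATCA chassis", 6, 0)  # fed from -48 V studs, not a 5-15 receptacle
POWER_SUPPLY = Component("-48 V power supply", 1, 1)
CONTROL_PROCESSOR = Component("Control processor", 1, 1)
SWITCH = Component("24-port switch", 1, 1)

def site_requirements(needs_external_psu: bool) -> tuple[int, int]:
    """Return (total rack units, total NEMA 5-15 receptacles) for one SPP site."""
    parts = [CHASSIS, CONTROL_PROCESSOR, SWITCH]
    if needs_external_psu:
        parts.append(POWER_SUPPLY)
    return sum(p.rack_units for p in parts), sum(p.receptacles for p in parts)

def feed_current_amps(power_watts: float, volts: float = 48.0) -> float:
    """Current drawn from a single DC feed carrying the full load (I = P / V)."""
    return power_watts / volts

print(site_requirements(needs_external_psu=False))  # (8, 2)
print(site_requirements(needs_external_psu=True))   # (9, 3)
print(feed_current_amps(1200))                       # 25.0
```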

IP addresses

Each SPP has an IP address for each of its interfaces. For the interfaces connected to the I2 router, these addresses are public and routable from any I2 connected organization. They are generally not routable from arbitrary locations in the Internet. For the interfaces used to directly connect to other SPPs, the IP addresses are private and have no significance for Internet routing. However, when configuring an experiment on an SPP node, these addresses are used to identify the interface of interest. The IP addresses for the currently deployed nodes are shown in the figure below.
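A minimal sketch of how an experiment might select an interface by its address and tell the two kinds apart, assuming the private SPP-to-SPP addresses come from the RFC 1918 ranges. The `Interface` class, `find_interface` helper, interface labels and addresses are all hypothetical; the actual addresses of the deployed nodes are those given in the figure.

```python
import ipaddress
from dataclasses import dataclass

# Assumption: direct SPP-to-SPP links use RFC 1918 private ranges.
RFC1918_NETS = [ipaddress.ip_network(n) for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]

@dataclass(frozen=True)
class Interface:
    name: str                      # hypothetical interface label
    addr: ipaddress.IPv4Address

    @property
    def kind(self) -> str:
        # Private addresses identify direct SPP-to-SPP links;
        # anything else is treated as an interface facing the Internet2 router.
        if any(self.addr in net for net in RFC1918_NETS):
            return "spp-link (private)"
        return "i2-router (public)"

def find_interface(interfaces: list[Interface], addr: str) -> Interface:
    """Select the interface an experiment refers to, using its IP address."""
    wanted = ipaddress.ip_address(addr)
    for iface in interfaces:
        if iface.addr == wanted:
            return iface
    raise KeyError(f"no interface with address {addr}")

# Hypothetical addresses: 203.0.113.0/24 is a documentation range.
node = [
    Interface("pub0", ipaddress.IPv4Address("203.0.113.10")),
    Interface("spp0", ipaddress.IPv4Address("10.1.1.1")),
]
print(find_interface(node, "10.1.1.1").kind)  # spp-link (private)
```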

Each of the public IP addresses also has an associated DNS name, such as sppkans1.arl.wustl.edu, sppwash2.arl.wustl.edu, etc.

Layer 2 connections

Layer 2 virtual Ethernets between the Gigabit Ethernet switches at all deployed SPP nodes in Internet2 are required (see the SPP interface block diagram). The NetGear GSM7328S with a 10GbE module is a likely candidate for the Gigabit Ethernet switch. The SPP does terminate VLANs, but does not perform VLAN switching.
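Terminating a VLAN, as opposed to switching it, amounts to removing the IEEE 802.1Q tag from an arriving frame before it is processed further, consistent with the tags never being visible to researchers. The sketch below shows tag insertion and removal on a raw Ethernet frame using the standard 802.1Q layout (TPID 0x8100 followed by a 16-bit TCI holding priority and VLAN ID). The function names are hypothetical, and this is not the SPP's actual datapath code.

```python
import struct

TPID_8021Q = 0x8100  # EtherType marking an IEEE 802.1Q VLAN tag

def push_vlan(frame: bytes, vid: int, pcp: int = 0) -> bytes:
    """Insert an 802.1Q tag after the destination and source MAC addresses."""
    if not 1 <= vid <= 4094:
        raise ValueError("VLAN ID must be in 1..4094")
    if not 0 <= pcp <= 7:
        raise ValueError("priority must be in 0..7")
    tci = (pcp << 13) | vid  # DEI bit left at 0
    return frame[:12] + struct.pack("!HH", TPID_8021Q, tci) + frame[12:]

def pop_vlan(frame: bytes) -> tuple[int, bytes]:
    """Terminate a VLAN: return (VLAN ID, frame with the tag removed)."""
    tpid, tci = struct.unpack_from("!HH", frame, 12)
    if tpid != TPID_8021Q:
        raise ValueError("frame is not 802.1Q tagged")
    return tci & 0x0FFF, frame[:12] + frame[16:]

# Usage: untagged IPv4 frame with zeroed MAC addresses and a dummy payload.
frame = bytes(6) + bytes(6) + b"\x08\x00" + b"payload"
tagged = push_vlan(frame, vid=100)
vid, untagged = pop_vlan(tagged)
print(vid, untagged == frame)  # 100 True
```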

Like normal PlanetLab nodes, SPPs use IP to tunnel packets between adjacent elements. While experimental networks may define their own protocols and packet formats, every packet sent to or from an SPP must be encapsulated in an IP packet. The port number in the transport header that follows the IP header (e.g., UDP) is used to determine which slice a packet is associated with.
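To illustrate this demultiplexing step, here is a minimal sketch that parses an IPv4 header and the UDP header that follows it, then looks up the slice bound to the packet's destination address and port. It assumes UDP tunnels and port bindings made per interface address; the `slice_for_packet` and `build_packet` helpers and the table contents are hypothetical, not the SPP's actual implementation.

```python
import ipaddress
import struct

IPPROTO_UDP = 17

def slice_for_packet(packet: bytes, slice_table: dict) -> str:
    """Map an IPv4/UDP tunnel packet to a slice by (destination address, destination port)."""
    if packet[0] >> 4 != 4 or packet[9] != IPPROTO_UDP:
        raise ValueError("not an IPv4/UDP packet")
    ihl = (packet[0] & 0x0F) * 4                 # IPv4 header length in bytes
    dst = ipaddress.IPv4Address(packet[16:20])   # destination address field
    (dst_port,) = struct.unpack_from("!H", packet, ihl + 2)  # UDP destination port
    return slice_table[(dst, dst_port)]

def build_packet(dst: str, dst_port: int, payload: bytes = b"") -> bytes:
    """Build a minimal IPv4/UDP packet (checksums left at zero) for testing."""
    udp = struct.pack("!HHHH", 40000, dst_port, 8 + len(payload), 0) + payload
    ip = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 20 + len(udp), 0, 0, 64, IPPROTO_UDP, 0,
                     ipaddress.IPv4Address("10.0.0.2").packed,
                     ipaddress.IPv4Address(dst).packed)
    return ip + udp

# Hypothetical bindings of (interface address, UDP port) to slice names.
slices = {
    (ipaddress.IPv4Address("10.1.1.1"), 30001): "slice_a",
    (ipaddress.IPv4Address("10.1.1.1"), 30002): "slice_b",
}
print(slice_for_packet(build_packet("10.1.1.1", 30002, b"overlay data"), slices))  # slice_b
```

Keying on the address as well as the port lets the same port number be reused on different interfaces; if bindings were node-wide, the port alone would suffice.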

We expect to provide additional VLAN-based "tail circuits" to regional networks (specifically GPENI and MAX). These will connect to routers within the regional network providing a remote access point into the SPP network.

GPO Liaison System Engineer

Related Projects

Attachments (6)

- sppPic.png (764.0 KB) - added by 15 years ago.
- sppArch.pdf (773.2 KB) - added by 15 years ago.
- sppInterfaces.png (54.5 KB) - added by 14 years ago.
- GEC6-demo-description.pdf (2.7 MB) - added by 14 years ago. GEC6 demo description.
- GEC7-demo-description.pdf (539.4 KB) - added by 14 years ago. GEC7 demo description.
- project-review-8-2010.pptx (605.4 KB) - added by 14 years ago. Slides for annual project review.