
- IG-MON-3: GENI Active Experiment Inspection Test

- Test Plan Steps

- Step 1. Start a VM experiment and leave it running

- Step 2. Start a bare metal node experiment and leave it running

- Step 3. Start an OpenFlow experiment and leave it running

- Step 4. Administrator review of running experiments

- Step 5: get information about terminated experiments

- Step 6: get OpenFlow state information

- Step 7: verify MAC addresses on the rack dataplane switch

- Step 8: verify active dataplane traffic

IG-MON-3: GENI Active Experiment Inspection Test

This page captures status for the test case IG-MON-3, which verifies the ability to start, stop, and terminate experiments by experimenters and administrators. For overall status see the InstaGENI Acceptance Test Status page.

Last update: 2013-03-06

Test Status

| Step | State | Tickets | Notes |
|---|---|---|---|
| 1 | Pass | | |
| 2 | Pass | | |
| 3 | Pass | | |
| 4 | Pass | | |
| 5 | Pass: most criteria | 2013-02-28 (26, 31) terminated experiments display format is a little hard to use, but contains the requested information | |
| 6 | blocked on availability of OpenFlow functionality | | |
| 7 | ready to test non-OpenFlow functionality | | |
| 8 | ready to test non-OpenFlow functionality | | |

| State Legend | Description |
|---|---|
| Pass | Test completed and met all criteria. |
| Pass: most criteria | Test completed and met most criteria. Exceptions documented. |
| Fail | Test completed and failed to meet criteria. |
| Complete | Test completed but will require re-execution due to expected changes. |
| Blocked | Blocked by ticketed issue(s). |
| In Progress | Currently under test. |

Test Plan Steps

- An experimenter from the GPO starts up experiments to ensure there is data to look at:

  - An experimenter runs an experiment containing at least one rack OpenVZ VM, and terminates it.

  - An experimenter runs an experiment containing at least one rack OpenVZ VM, and leaves it running.

- A site administrator uses available system and experiment data sources to determine current experimental state, including:

  - How many VMs are running and which experimenters own them

  - How many physical hosts are in use by experiments, and which experimenters own them

  - How many VMs were terminated within the past day, and which experimenters owned them

  - What OpenFlow controllers the data plane switch, the rack FlowVisor, and the rack FOAM are communicating with

- A site administrator examines the switches and other rack data sources, and determines:

  - What MAC addresses are currently visible on the data plane switch and what experiments do they belong to?

  - For some experiment which was terminated within the past day, what data plane and control MAC and IP addresses did the experiment use?

  - For some experimental data path which is actively sending traffic on the data plane switch, do changes in interface counters show approximately the expected amount of traffic into and out of the switch?

Step 1. Start a VM experiment and leave it running

As an experimenter, create a two-VM experiment in the GPO InstaGENI rack. RSpec used:

<?xml version="1.0" encoding="UTF-8"?>

<!--

This example RSpec shows how to request 2 VMs at the GPO InstaGENI Rack.

-->

<rspec type="request"

xmlns="http://www.geni.net/resources/rspec/3"

xmlns:flack="http://www.protogeni.net/resources/rspec/ext/flack/1"

xmlns:planetlab="http://www.planet-lab.org/resources/sfa/ext/planetlab/1"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://www.geni.net/resources/rspec/3

http://www.geni.net/resources/rspec/3/request.xsd">

<node client_id="VM-1" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="false">

<sliver_type name="emulab-openvz"/>

<interface client_id="VM-1:if0">

<ip address="192.168.1.1" netmask="255.255.255.0" type="ipv4"/>

</interface>

</node>

<node client_id="VM-2" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="false">

<sliver_type name="emulab-openvz"/>

<interface client_id="VM-2:if0">

<ip address="192.168.1.2" netmask="255.255.255.0" type="ipv4"/>

</interface>

</node>

<link client_id="lan0">

<component_manager name="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm"/>

<interface_ref client_id="VM-1:if0"/>

<interface_ref client_id="VM-2:if0"/>

<link_type name="lan"/>

</link>

</rspec>
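
Before submitting a request like the one above, it can help to confirm what it actually asks for. The following is a minimal sketch, assuming Python's standard `xml.etree.ElementTree`; the helper name `summarize_request` and the abbreviated RSpec string are illustrative and not part of omni or the original test.

```python
import xml.etree.ElementTree as ET

# Default namespace of GENI v3 RSpecs, as declared in the request above.
NS = {"r": "http://www.geni.net/resources/rspec/3"}

# Abbreviated copy of the two-VM request (extension namespaces and
# component_manager_id attributes omitted for brevity).
REQUEST_RSPEC = """
<rspec type="request" xmlns="http://www.geni.net/resources/rspec/3">
  <node client_id="VM-1" exclusive="false">
    <sliver_type name="emulab-openvz"/>
    <interface client_id="VM-1:if0">
      <ip address="192.168.1.1" netmask="255.255.255.0" type="ipv4"/>
    </interface>
  </node>
  <node client_id="VM-2" exclusive="false">
    <sliver_type name="emulab-openvz"/>
    <interface client_id="VM-2:if0">
      <ip address="192.168.1.2" netmask="255.255.255.0" type="ipv4"/>
    </interface>
  </node>
  <link client_id="lan0">
    <interface_ref client_id="VM-1:if0"/>
    <interface_ref client_id="VM-2:if0"/>
    <link_type name="lan"/>
  </link>
</rspec>
"""


def summarize_request(rspec_xml):
    """Return ({node: (sliver_type, [ips])}, {link: [interface ids]})."""
    root = ET.fromstring(rspec_xml.strip())
    nodes = {}
    for node in root.findall("r:node", NS):
        sliver_type = node.find("r:sliver_type", NS).get("name")
        ips = [ip.get("address") for ip in node.findall("r:interface/r:ip", NS)]
        nodes[node.get("client_id")] = (sliver_type, ips)
    links = {}
    for link in root.findall("r:link", NS):
        refs = [ref.get("client_id") for ref in link.findall("r:interface_ref", NS)]
        links[link.get("client_id")] = refs
    return nodes, links


nodes, links = summarize_request(REQUEST_RSPEC)
for name, (sliver_type, ips) in sorted(nodes.items()):
    print(name, sliver_type, ips)
print(links)
```

The same function should work on the full request file, since it only looks at elements in the default RSpec namespace.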

Create a slice and sliver:

$ omni.py createslice EG-MON-3

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Created slice with Name EG-MON-3, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+EG-MON-3, Expiration 2013-03-07 15:09:24+00:00

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createslice:

Options as run:

framework: pg

Args: createslice EG-MON-3

Result Summary: Created slice with Name EG-MON-3, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+EG-MON-3, Expiration 2013-03-07 15:09:24+00:00

INFO:omni: ============================================================
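
Because the experiment must stay running for the later inspection steps, the slice expiration in the result line matters. A minimal sketch of pulling the name, URN, and expiration out of that line follows; the regular expression and the helper names `parse_createslice` and `is_expired` are assumptions made for illustration and are not omni functions.

```python
import re
from datetime import datetime, timezone

# Result line copied from the createslice output above.
LOG_LINE = (
    "INFO:omni:Created slice with Name EG-MON-3, "
    "URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+EG-MON-3, "
    "Expiration 2013-03-07 15:09:24+00:00"
)

PATTERN = re.compile(
    r"Created slice with Name (?P<name>\S+), URN (?P<urn>\S+), "
    r"Expiration (?P<exp>.+)$"
)


def parse_createslice(line):
    """Return (slice name, slice URN, timezone-aware expiration)."""
    match = PATTERN.search(line)
    if match is None:
        raise ValueError("not a createslice result line")
    # The timestamp format shown above is accepted by fromisoformat (Python 3.7+).
    expiration = datetime.fromisoformat(match.group("exp"))
    return match.group("name"), match.group("urn"), expiration


def is_expired(expiration, now):
    """True if the slice has expired at the given timezone-aware time."""
    return now >= expiration


name, urn, expiration = parse_createslice(LOG_LINE)
print(name, urn, expiration.isoformat())
```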

$ omni.py createsliver -a ig-gpo EG-MON-3 ./instageni-2vm-at-gpo.rspec

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+EG-MON-3 expires on 2013-03-07 15:09:24 UTC

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Creating sliver(s) from rspec file ./instageni-2vm-at-gpo.rspec for slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+EG-MON-3

INFO:omni: (PG log url - look here for details on any failures: https://boss.instageni.gpolab.bbn.com/spewlogfile.php3?logfile=388177e82b203e84aa3694dda7bf441d)

INFO:omni:Got return from CreateSliver for slice EG-MON-3 at https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0:

INFO:omni:<!-- Reserved resources for:

Slice: EG-MON-3

at AM:

URN: unspecified_AM_URN

URL: https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0

-->

INFO:omni:<rspec xmlns="http://www.geni.net/resources/rspec/3" xmlns:flack="http://www.protogeni.net/resources/rspec/ext/flack/1" xmlns:planetlab="http://www.planet-lab.org/resources/sfa/ext/planetlab/1" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" type="manifest" xsi:schemaLocation="http://www.geni.net/resources/rspec/3 http://www.geni.net/resources/rspec/3/manifest.xsd">

<node client_id="VM-1" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="false" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc2" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2048">

<sliver_type name="emulab-openvz"/>

<interface client_id="VM-1:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:lo0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2052" mac_address="02104639f347">

<ip address="192.168.1.1" netmask="255.255.255.0" type="ipv4"/>

</interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pcvm2-1"/><host name="VM-1.EG-MON-3.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc2.instageni.gpolab.bbn.com" port="31290" username="lnevers"/></services></node>

<node client_id="VM-2" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="false" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc2" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2049">

<sliver_type name="emulab-openvz"/>

<interface client_id="VM-2:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:lo0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2053" mac_address="020063c74361">

<ip address="192.168.1.2" netmask="255.255.255.0" type="ipv4"/>

</interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pcvm2-6"/><host name="VM-2.EG-MON-3.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc2.instageni.gpolab.bbn.com" port="31291" username="lnevers"/></services></node>

<link client_id="lan0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2051">

<component_manager name="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm"/>

<interface_ref client_id="VM-1:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:lo0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2052"/>

<interface_ref client_id="VM-2:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:lo0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2053"/>

<link_type name="lan"/>

</link>

</rspec>

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createsliver:

Options as run:

aggregate: ['ig-gpo']

framework: pg

Args: createsliver EG-MON-3 ./instageni-2vm-at-gpo.rspec

Result Summary: Got Reserved resources RSpec from instageni-gpolab-bbn-com-protogeniv2

INFO:omni: ============================================================
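
The manifest returned by createsliver already carries the login details for each VM. As a rough sketch, assuming the manifest has been saved as text, the `<login>` elements can be turned into ssh commands with the standard library; `ssh_commands` is a hypothetical helper and the manifest below is trimmed to the attributes it needs.

```python
import xml.etree.ElementTree as ET

NS = {"r": "http://www.geni.net/resources/rspec/3"}

# Trimmed copy of the manifest above: only the login services are kept.
MANIFEST = """
<rspec xmlns="http://www.geni.net/resources/rspec/3" type="manifest">
  <node client_id="VM-1">
    <services>
      <login authentication="ssh-keys" hostname="pc2.instageni.gpolab.bbn.com"
             port="31290" username="lnevers"/>
    </services>
  </node>
  <node client_id="VM-2">
    <services>
      <login authentication="ssh-keys" hostname="pc2.instageni.gpolab.bbn.com"
             port="31291" username="lnevers"/>
    </services>
  </node>
</rspec>
"""


def ssh_commands(manifest_xml):
    """Map each node's client_id to an ssh command built from its <login>."""
    root = ET.fromstring(manifest_xml.strip())
    commands = {}
    for node in root.findall("r:node", NS):
        login = node.find("r:services/r:login", NS)
        if login is None:
            continue  # node has no login service
        commands[node.get("client_id")] = "ssh -p {} {}@{}".format(
            login.get("port"), login.get("username"), login.get("hostname")
        )
    return commands


commands = ssh_commands(MANIFEST)
for node_name, command in sorted(commands.items()):
    print(node_name, "->", command)
```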

Determine login information for the assigned VM resources and confirm that the VMs are "ready" for use:

$ readyToLogin.py -a ig-gpo EG-MON-3

<...>

VM-2's geni_status is: ready (am_status:ready)

User lnevers logins to VM-2 using:

xterm -e ssh -p 31291 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com &

VM-1's geni_status is: ready (am_status:ready)

User lnevers logins to VM-1 using:

xterm -e ssh -p 31290 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com &

Log in to VM-1 and verify the hostname, address assignment, and initial ARP table entries:

$ ssh -p 31290 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com

[lnevers@VM-1 ~]$ /sbin/ifconfig mv1.12

mv1.12 Link encap:Ethernet HWaddr 02:10:46:39:F3:47

inet addr:192.168.1.1 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::10:46ff:fe39:f347/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:6 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:6 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:552 (552.0 b) TX bytes:0 (0.0 b)

[lnevers@VM-1 ~]$ /sbin/arp -a

pc2.instageni.gpolab.bbn.com (192.1.242.141) at 10:60:4b:9b:82:14 [ether] on eth999

boss.instageni.gpolab.bbn.com (192.1.242.132) at 02:9e:26:f1:52:99 [ether] on eth999
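
The manifest reports the VM-1 data plane MAC as `02104639f347`, while `ifconfig` prints `02:10:46:39:F3:47`. Comparing the two is easier after normalizing both to one form. A small sketch follows; `normalize_mac` is a hypothetical helper, not part of any GENI tool.

```python
def normalize_mac(mac):
    """Return a MAC address as lowercase, colon-separated octets."""
    digits = "".join(ch for ch in mac.lower() if ch in "0123456789abcdef")
    if len(digits) != 12:
        raise ValueError("expected 12 hex digits, got %r" % mac)
    return ":".join(digits[i:i + 2] for i in range(0, 12, 2))


manifest_mac = "02104639f347"        # mac_address attribute in the manifest
ifconfig_mac = "02:10:46:39:F3:47"   # HWaddr reported on VM-1

print(normalize_mac(manifest_mac))
print(normalize_mac(manifest_mac) == normalize_mac(ifconfig_mac))
```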

Log in to VM-2 and verify the hostname, address assignment, MAC address for the data plane interface, and initial ARP table entries:

$ ssh -p 31291 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com

[lnevers@VM-2 ~]$ /sbin/ifconfig mv6.13

mv6.13 Link encap:Ethernet HWaddr 02:00:63:C7:43:61

inet addr:192.168.1.2 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::63ff:fec7:4361/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:6 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:0 (0.0 b) TX bytes:0 (0.0 b)

[lnevers@VM-2 ~]$ /sbin/arp -a

pc2.instageni.gpolab.bbn.com (192.1.242.141) at 10:60:4b:9b:82:14 [ether] on eth999

boss.instageni.gpolab.bbn.com (192.1.242.132) at 02:9e:26:f1:52:99 [ether] on eth999
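
The `inet6 addr` lines in the `ifconfig` output follow from the hardware addresses: a link-local address is `fe80::/64` plus a modified EUI-64 interface identifier, formed by flipping the universal/local bit of the first MAC octet and inserting `ff:fe` in the middle. The sketch below reproduces both VM addresses; `link_local_from_mac` is an illustrative name.

```python
import ipaddress


def link_local_from_mac(mac):
    """Derive the IPv6 link-local address (modified EUI-64) for a MAC."""
    octets = [int(part, 16) for part in mac.split(":")]
    if len(octets) != 6:
        raise ValueError("expected six colon-separated octets")
    octets[0] ^= 0x02  # flip the universal/local bit
    eui64 = octets[:3] + [0xFF, 0xFE] + octets[3:]
    interface_id = int.from_bytes(bytes(eui64), "big")
    return ipaddress.IPv6Address((0xFE80 << 112) | interface_id)


print(link_local_from_mac("02:10:46:39:F3:47"))  # VM-1 data plane interface
print(link_local_from_mac("02:00:63:C7:43:61"))  # VM-2 data plane interface
```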

Install iperf on both VMs, send TCP traffic from the VM-1 client to the VM-2 server over the data plane interface, and check the ARP table entries:

[lnevers@VM-1 ~]$ /usr/bin/iperf -c 192.168.1.2 -t 60

------------------------------------------------------------

Client connecting to 192.168.1.2, TCP port 5001

TCP window size: 16.0 KByte (default)

------------------------------------------------------------

[ 3] local 192.168.1.1 port 55185 connected with 192.168.1.2 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0-60.0 sec 687 MBytes 96.0 Mbits/sec

[lnevers@VM-1 ~]$ /sbin/arp -a

pc2.instageni.gpolab.bbn.com (192.1.242.141) at 10:60:4b:9b:82:14 [ether] on eth999

boss.instageni.gpolab.bbn.com (192.1.242.132) at 02:9e:26:f1:52:99 [ether] on eth999

VM-2-lan0 (192.168.1.2) at 02:00:63:c7:43:61 [ether] on mv1.12
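
The reported transfer and bandwidth can be cross-checked with a little arithmetic, which also gives a byte count to compare against interface counter changes. This sketch assumes the usual iperf 2 conventions (MBytes counted as 2^20 bytes, Mbits as 10^6 bits); the function names are illustrative.

```python
def payload_bytes(mbytes):
    """Bytes transferred, assuming iperf's MBytes are 2**20 bytes each."""
    return int(mbytes * 2 ** 20)


def mbits_per_sec(mbytes, seconds):
    """Average rate in 10**6 bits per second for a transfer of `mbytes`."""
    return payload_bytes(mbytes) * 8 / seconds / 1e6


expected_bytes = payload_bytes(687)   # application payload only
rate = mbits_per_sec(687, 60.0)

print(expected_bytes)
print(round(rate, 1))
```

The result agrees with the 96.0 Mbits/sec that iperf printed. Counter deltas on an interface would be expected to be somewhat higher than the payload figure, since they also include protocol headers and acknowledgements.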

Show ARP entries on VM-2 (the iperf server):

[lnevers@VM-2 ~]$ /sbin/arp -a

pc2.instageni.gpolab.bbn.com (192.1.242.141) at 10:60:4b:9b:82:14 [ether] on eth999

boss.instageni.gpolab.bbn.com (192.1.242.132) at 02:9e:26:f1:52:99 [ether] on eth999

VM-1-lan0 (192.168.1.1) at 02:10:46:39:f3:47 [ether] on mv6.13
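
To check mechanically that each VM has learned its peer's data plane MAC, the `arp -a` output can be parsed into a lookup table. The sketch below assumes the Linux `arp -a` line format shown in this step; `parse_arp` and the regular expression are illustrative.

```python
import re

ARP_ENTRY = re.compile(
    r"^(?P<host>\S+) \((?P<ip>[0-9.]+)\) at (?P<mac>[0-9A-Fa-f:]{17}) "
    r"\[ether\] on (?P<iface>\S+)$"
)

# ARP table printed on VM-2 after the iperf run.
VM2_ARP = """\
pc2.instageni.gpolab.bbn.com (192.1.242.141) at 10:60:4b:9b:82:14 [ether] on eth999
boss.instageni.gpolab.bbn.com (192.1.242.132) at 02:9e:26:f1:52:99 [ether] on eth999
VM-1-lan0 (192.168.1.1) at 02:10:46:39:f3:47 [ether] on mv6.13
"""


def parse_arp(output):
    """Map IP address -> (lowercase MAC, interface) for each ARP entry."""
    table = {}
    for line in output.splitlines():
        match = ARP_ENTRY.match(line.strip())
        if match:
            table[match.group("ip")] = (
                match.group("mac").lower(),
                match.group("iface"),
            )
    return table


arp_table = parse_arp(VM2_ARP)
print(arp_table["192.168.1.1"])
```

Here the entry for 192.168.1.1 carries the MAC that the manifest assigned to VM-1 and is reached through VM-2's data plane interface `mv6.13`.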

Leave experiment running.

Step 2. Start a bare metal node experiment and leave it running

As an experimenter, create an experiment with two raw PCs in the GPO InstaGENI rack. RSpec used:

<?xml version="1.0" encoding="UTF-8"?>

<rspec type="request"

xmlns="http://www.geni.net/resources/rspec/3"

xmlns:flack="http://www.protogeni.net/resources/rspec/ext/flack/1"

xmlns:planetlab="http://www.planet-lab.org/resources/sfa/ext/planetlab/1"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://www.geni.net/resources/rspec/3

http://www.geni.net/resources/rspec/3/request.xsd">

<node client_id="PC-1" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc5"

component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="true">

<sliver_type name="raw-pc"/>

<interface client_id="PC-1:if0">

<ip address="192.168.1.1" netmask="255.255.255.0" type="ipv4"/>

</interface>

</node>

<node client_id="PC-2" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc4"

component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="true">

<sliver_type name="raw-pc"/>

<interface client_id="PC-2:if0">

<ip address="192.168.1.2" netmask="255.255.255.0" type="ipv4"/>

</interface>

</node>

<link client_id="lan0">

<component_manager name="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm"/>

<interface_ref client_id="PC-1:if0"/>

<interface_ref client_id="PC-2:if0"/>

<link_type name="lan"/>

</link>

</rspec>

Create a slice and sliver:

$ omni.py createslice IG-MON-3

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Created slice with Name IG-MON-3, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3, Expiration 2013-03-07 15:47:53+00:00

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createslice:

Options as run:

framework: pg

Args: createslice IG-MON-3

Result Summary: Created slice with Name IG-MON-3, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3, Expiration 2013-03-07 15:47:53+00:00

INFO:omni: ============================================================

$ omni.py createsliver -a ig-gpo IG-MON-3 ./instageni-2rawpc-at-gpo.rspec

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3 expires on 2013-03-07 15:47:53 UTC

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Creating sliver(s) from rspec file ./instageni-2rawpc-at-gpo.rspec for slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3

INFO:omni: (PG log url - look here for details on any failures: https://boss.instageni.gpolab.bbn.com/spewlogfile.php3?logfile=deb52735b826a50320d5274e8e4c6c8e)

INFO:omni:Got return from CreateSliver for slice IG-MON-3 at https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0:

INFO:omni:<!-- Reserved resources for:

Slice: IG-MON-3

at AM:

URN: unspecified_AM_URN

URL: https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0

-->

INFO:omni:<rspec xmlns="http://www.geni.net/resources/rspec/3" xmlns:flack="http://www.protogeni.net/resources/rspec/ext/flack/1" xmlns:planetlab="http://www.planet-lab.org/resources/sfa/ext/planetlab/1" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" type="manifest" xsi:schemaLocation="http://www.geni.net/resources/rspec/3 http://www.geni.net/resources/rspec/3/manifest.xsd">

<node client_id="PC-1" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc5" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="true" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2042">

<sliver_type name="raw-pc"/>

<interface client_id="PC-1:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc5:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2046" mac_address="10604B9C476A">

<ip address="192.168.1.1" netmask="255.255.255.0" type="ipv4"/>

</interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pc5"/><host name="PC-1.IG-MON-3.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc5.instageni.gpolab.bbn.com" port="22" username="lnevers"/></services></node>

<node client_id="PC-2" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc4" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="true" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2043">

<sliver_type name="raw-pc"/>

<interface client_id="PC-2:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc4:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2047" mac_address="10604B9600D6">

<ip address="192.168.1.2" netmask="255.255.255.0" type="ipv4"/>

</interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pc4"/><host name="PC-2.IG-MON-3.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc4.instageni.gpolab.bbn.com" port="22" username="lnevers"/></services></node>

<link client_id="lan0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2045" vlantag="258">

<component_manager name="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm"/>

<interface_ref client_id="PC-1:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc5:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2046"/>

<interface_ref client_id="PC-2:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc4:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2047"/>

<link_type name="lan"/>

</link>

</rspec>

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createsliver:

Options as run:

aggregate: ['ig-gpo']

framework: pg

Args: createsliver IG-MON-3 ./instageni-2rawpc-at-gpo.rspec

Result Summary: Got Reserved resources RSpec from instageni-gpolab-bbn-com-protogeniv2

INFO:omni: ============================================================
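
The `component_id` and `sliver_id` values in the manifest are GENI URNs whose fields are separated by `+`, so the physical node and interface behind each sliver can be read off directly. A minimal sketch, with `parse_urn` as a hypothetical helper:

```python
def parse_urn(urn):
    """Split a GENI URN into (authority, resource type, resource name)."""
    prefix = "urn:publicid:IDN+"
    if not urn.startswith(prefix):
        raise ValueError("not a GENI URN: %r" % urn)
    authority, kind, name = urn[len(prefix):].split("+", 2)
    return authority, kind, name


# component_id values taken from the raw PC manifest above.
print(parse_urn("urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc5"))
print(parse_urn("urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc5:eth1"))
```

For PC-1 this shows that the sliver sits on physical node `pc5` and that its data plane interface is `eth1`, which is the interface inspected with `ifconfig` in this step.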

Determine login information for the sliver resources:

$ readyToLogin.py -a ig-gpo IG-MON-3

<...>

PC-1's geni_status is: ready (am_status:ready)

User lnevers logins to PC-1 using:

xterm -e ssh -i /home/lnevers/.ssh/id_rsa lnevers@pc5.instageni.gpolab.bbn.com &

PC-2's geni_status is: ready (am_status:ready)

User lnevers logins to PC-2 using:

xterm -e ssh -i /home/lnevers/.ssh/id_rsa lnevers@pc4.instageni.gpolab.bbn.com &

Log in to PC-1 and verify the hostname, address assignment, and initial ARP table entries:

$ ssh -i /home/lnevers/.ssh/id_rsa lnevers@pc5.instageni.gpolab.bbn.com

[lnevers@pc-1 ~]$ /sbin/ifconfig eth1

eth1 Link encap:Ethernet HWaddr 10:60:4B:9C:47:6A

inet addr:192.168.1.1 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::1260:4bff:fe9c:476a/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:6 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 b) TX bytes:492 (492.0 b)

Interrupt:37 Memory:f2000000-f2012800

[lnevers@pc-1 ~]$ /sbin/arp -a

ops.instageni.gpolab.bbn.com (192.1.242.133) at 02:07:2d:51:f4:6a [ether] on eth0

boss.instageni.gpolab.bbn.com (192.1.242.132) at 02:9e:26:f1:52:99 [ether] on eth0

? (192.1.242.129) at cc:ef:48:7a:7a:a9 [ether] on eth0

Log in to PC-2 and verify the hostname, address assignment, and initial ARP table entries:

$ ssh -i /home/lnevers/.ssh/id_rsa lnevers@pc4.instageni.gpolab.bbn.com

[lnevers@pc-2 ~]$ /sbin/ifconfig eth1

eth1 Link encap:Ethernet HWaddr 10:60:4B:96:00:D6

inet addr:192.168.1.2 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::1260:4bff:fe96:d6/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:6 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 b) TX bytes:492 (492.0 b)

Interrupt:37 Memory:f2000000-f2012800

[lnevers@pc-2 ~]$ /sbin/arp -a

ops.instageni.gpolab.bbn.com (192.1.242.133) at 02:07:2d:51:f4:6a [ether] on eth0

boss.instageni.gpolab.bbn.com (192.1.242.132) at 02:9e:26:f1:52:99 [ether] on eth0

? (192.1.242.129) at cc:ef:48:7a:7a:a9 [ether] on eth0

Install iperf on both PCs, send TCP traffic from the PC-1 client to the PC-2 server over the data plane interface, and look at the ARP table entries:

[lnevers@pc-1 ~]$ /usr/bin/iperf -c 192.168.1.2 -i 1

------------------------------------------------------------

Client connecting to 192.168.1.2, TCP port 5001

TCP window size: 16.0 KByte (default)

------------------------------------------------------------

[ 3] local 192.168.1.1 port 58436 connected with 192.168.1.2 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0- 1.0 sec 113 MBytes 947 Mbits/sec

[ 3] 1.0- 2.0 sec 112 MBytes 940 Mbits/sec

[ 3] 2.0- 3.0 sec 112 MBytes 943 Mbits/sec

[ 3] 3.0- 4.0 sec 112 MBytes 942 Mbits/sec

[ 3] 4.0- 5.0 sec 112 MBytes 940 Mbits/sec
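
With `-i 1`, iperf prints one report per second, so a summary over the run has to be computed separately. The sketch below extracts the interval lines shown above and averages them; the regular expression is an assumption based on this output format, and `parse_intervals` is an illustrative name.

```python
import re

INTERVAL = re.compile(
    r"\[\s*\d+\]\s+([\d.]+)-\s*([\d.]+) sec\s+([\d.]+) MBytes\s+([\d.]+) Mbits/sec"
)

# Per-second reports from the PC-1 iperf client above.
IPERF_OUTPUT = """\
[ 3] local 192.168.1.1 port 58436 connected with 192.168.1.2 port 5001
[ ID] Interval Transfer Bandwidth
[ 3] 0.0- 1.0 sec 113 MBytes 947 Mbits/sec
[ 3] 1.0- 2.0 sec 112 MBytes 940 Mbits/sec
[ 3] 2.0- 3.0 sec 112 MBytes 943 Mbits/sec
[ 3] 3.0- 4.0 sec 112 MBytes 942 Mbits/sec
[ 3] 4.0- 5.0 sec 112 MBytes 940 Mbits/sec
"""


def parse_intervals(output):
    """Return a list of (start, end, mbytes, mbits_per_sec) tuples."""
    return [tuple(float(field) for field in match)
            for match in INTERVAL.findall(output)]


intervals = parse_intervals(IPERF_OUTPUT)
rates = [rate for _start, _end, _mbytes, rate in intervals]
total_mbytes = sum(mbytes for _start, _end, mbytes, _rate in intervals)
mean_rate = sum(rates) / len(rates)

print(len(intervals), total_mbytes, round(mean_rate, 1))
```

The header and connection lines are skipped because they do not match the interval pattern.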

Leave sliver running.

Step 3. Start an OpenFlow experiment and leave it running

As an experimenter, create a two-VM OpenFlow experiment in the GPO InstaGENI rack. RSpec used:

<?xml version="1.0" encoding="UTF-8"?>

<rspec xmlns="http://www.geni.net/resources/rspec/3"

xmlns:xs="http://www.w3.org/2001/XMLSchema-instance"

xmlns:sharedvlan="http://www.protogeni.net/resources/rspec/ext/shared-vlan/1"

xs:schemaLocation="http://www.geni.net/resources/rspec/3

http://www.geni.net/resources/rspec/3/request.xsd

http://www.protogeni.net/resources/rspec/ext/shared-vlan/1

http://www.protogeni.net/resources/rspec/ext/shared-vlan/1/request.xsd"

type="request">

<node client_id="gpo-ig" exclusive="false">

<sliver_type name="emulab-openvz" />

<interface client_id="gpo-ig:if0">

<ip address="10.42.18.43" netmask="255.255.255.0" type="ipv4" />

</interface>

</node>

<node client_id="gpo-ig2" exclusive="false">

<sliver_type name="emulab-openvz" />

<interface client_id="gpo-ig2:if0">

<ip address="10.42.18.42" netmask="255.255.255.0" type="ipv4" />

</interface>

</node>

<link client_id="openflow-mesoscale-0">

<interface_ref client_id="gpo-ig:if0" />

<sharedvlan:link_shared_vlan name="mesoscale-openflow" />

</link>

<link client_id="openflow-mesoscale-1">

<interface_ref client_id="gpo-ig2:if0" />

<sharedvlan:link_shared_vlan name="mesoscale-openflow" />

</link>

</rspec>

Create a slice and sliver for the two VMs on shared OpenFlow VLAN 1750 in the GPO InstaGENI rack:

$ omni.py createslice IG-MON-3-OF

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Created slice with Name IG-MON-3-OF, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF, Expiration 2013-03-07 16:26:04+00:00

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createslice:

Options as run:

framework: pg

Args: createslice IG-MON-3-OF

Result Summary: Created slice with Name IG-MON-3-OF, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF, Expiration 2013-03-07 16:26:04+00:00

INFO:omni: ============================================================

$ omni.py createsliver -a ig-gpo IG-MON-3-OF ./instageni-2vm-vlan1750-at-gpo.rspec

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF expires on 2013-03-07 16:26:04 UTC

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Substituting AM nickname ig-gpo with URL https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Creating sliver(s) from rspec file ./instageni-2vm-vlan1750-at-gpo.rspec for slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF

INFO:omni: (PG log url - look here for details on any failures: https://boss.instageni.gpolab.bbn.com/spewlogfile.php3?logfile=8af1230117ca9b383b6510da21d7abc7)

INFO:omni:Got return from CreateSliver for slice IG-MON-3-OF at https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0:

INFO:omni:<!-- Reserved resources for:

Slice: IG-MON-3-OF

at AM:

URN: unspecified_AM_URN

URL: https://instageni.gpolab.bbn.com:12369/protogeni/xmlrpc/am/2.0

-->

INFO:omni:<rspec xmlns="http://www.geni.net/resources/rspec/3" xmlns:xs="http://www.w3.org/2001/XMLSchema-instance" xmlns:sharedvlan="http://www.protogeni.net/resources/rspec/ext/shared-vlan/1" xs:schemaLocation="http://www.geni.net/resources/rspec/3 http://www.geni.net/resources/rspec/3/manifest.xsd http://www.protogeni.net/resources/rspec/ext/shared-vlan/1 http://www.protogeni.net/resources/rspec/ext/shared-vlan/1/request.xsd" type="manifest">

<node client_id="gpo-ig" exclusive="false" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc2" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2065">

<sliver_type name="emulab-openvz"/>

<interface client_id="gpo-ig:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2069" mac_address="02e922fceb01">

<ip address="10.42.18.43" netmask="255.255.255.0" type="ipv4"/>

</interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pcvm2-9"/><host name="gpo-ig.IG-MON-3-OF.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc2.instageni.gpolab.bbn.com" port="32058" username="lnevers"/></services></node>

<node client_id="gpo-ig2" exclusive="false" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc2" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2066">

<sliver_type name="emulab-openvz"/>

<interface client_id="gpo-ig2:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:eth2" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2071" mac_address="0240e5291a6f">

<ip address="10.42.18.42" netmask="255.255.255.0" type="ipv4"/>

</interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pcvm2-10"/><host name="gpo-ig2.IG-MON-3-OF.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc2.instageni.gpolab.bbn.com" port="32059" username="lnevers"/></services></node>

<link xmlns:sharedvlan="http://www.protogeni.net/resources/rspec/ext/shared-vlan/1" client_id="openflow-mesoscale-0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2068">

<interface_ref client_id="gpo-ig:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2069"/>

<sharedvlan:link_shared_vlan name="mesoscale-openflow"/>

</link>

<link xmlns:sharedvlan="http://www.protogeni.net/resources/rspec/ext/shared-vlan/1" client_id="openflow-mesoscale-1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2070">

<interface_ref client_id="gpo-ig2:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc2:eth2" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+2071"/>

<sharedvlan:link_shared_vlan name="mesoscale-openflow"/>

</link>

</rspec>

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createsliver:

Options as run:

aggregate: ['ig-gpo']

framework: pg

Args: createsliver IG-MON-3-OF ./instageni-2vm-vlan1750-at-gpo.rspec

Result Summary: Got Reserved resources RSpec from instageni-gpolab-bbn-com-protogeniv2

INFO:omni: ============================================================

A FOAM sliver is needed to allow the traffic exchange. The following FOAM RSpec is used:

<?xml version="1.0" encoding="UTF-8"?>

<rspec xmlns="http://www.geni.net/resources/rspec/3"

xmlns:xs="http://www.w3.org/2001/XMLSchema-instance"

xmlns:openflow="http://www.geni.net/resources/rspec/ext/openflow/3"

xs:schemaLocation="http://www.geni.net/resources/rspec/3

http://www.geni.net/resources/rspec/3/request.xsd

http://www.geni.net/resources/rspec/ext/openflow/3

http://www.geni.net/resources/rspec/ext/openflow/3/of-resv.xsd"

type="request">

<openflow:sliver description=" InstaGENI OpenFlow" email="lnevers@bbn.com">

<openflow:controller url="tcp:mallorea.gpolab.bbn.com:33018" type="primary" />

<openflow:group name="bbn-instageni-1750">

<openflow:datapath component_id="urn:publicid:IDN+openflow:foam:foam.instageni.gpolab.bbn.com+datapath+06:d6:84:34:97:c6:c9:00"

component_manager_id="urn:publicid:IDN+openflow:foam:foam.instageni.gpolab.bbn.com+authority+am" />

</openflow:group>

<openflow:match>

<openflow:use-group name="bbn-instageni-1750" />

<openflow:packet>

<openflow:dl_type value="0x800,0x806"/>

<openflow:nw_dst value="10.42.18.0/24"/>

<openflow:nw_src value="10.42.18.0/24"/>

</openflow:packet>

</openflow:match>

</openflow:sliver>

</rspec>
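
The `<openflow:match>` section above restricts the sliver's flowspace to IPv4 (0x800) and ARP (0x806) packets whose source and destination fall in 10.42.18.0/24, which covers the two VM addresses requested earlier in this step. The following is a simplified sketch of that check using the standard `ipaddress` module; it models only the three match fields in the RSpec and is not how FOAM or FlowVisor evaluates flowspace internally.

```python
import ipaddress

# Values copied from the <openflow:packet> element of the FOAM RSpec.
FLOWSPACE = ipaddress.ip_network("10.42.18.0/24")   # nw_src and nw_dst
ALLOWED_DL_TYPES = {0x800, 0x806}                    # IPv4 and ARP


def in_flowspace(src, dst, dl_type):
    """True if a packet with these fields falls inside the requested flowspace."""
    return (
        dl_type in ALLOWED_DL_TYPES
        and ipaddress.ip_address(src) in FLOWSPACE
        and ipaddress.ip_address(dst) in FLOWSPACE
    )


# Addresses of gpo-ig and gpo-ig2 from the OpenFlow VM request.
print(in_flowspace("10.42.18.43", "10.42.18.42", 0x800))
# Addresses used by the non-OpenFlow experiments in Steps 1 and 2.
print(in_flowspace("192.168.1.1", "192.168.1.2", 0x800))
```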

Create a sliver at the GPO InstaGENI rack FOAM to allow the traffic exchange:

$ omni.py createsliver -a ig-of-gpo IG-MON-3-OF ./instageni-openflow-at-gpo.rspec

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Substituting AM nickname ig-of-gpo with URL https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1, URN unspecified_AM_URN

WARNING:omni:You asked to use AM API 2, but the AM(s) you are contacting do not all speak that version.

WARNING:omni:At the URLs you are contacting, all your AMs speak AM API v1.

WARNING:omni:Switching to AM API v1. Next time call Omni with '-V1'.

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF expires on 2013-03-07 16:26:04 UTC

INFO:omni:Substituting AM nickname ig-of-gpo with URL https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1, URN unspecified_AM_URN

INFO:omni:Substituting AM nickname ig-of-gpo with URL https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1, URN unspecified_AM_URN

INFO:omni:Creating sliver(s) from rspec file ./instageni-openflow-at-gpo.rspec for slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF

INFO:omni:Got return from CreateSliver for slice IG-MON-3-OF at https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1:

INFO:omni:<?xml version="1.0" encoding="UTF-8"?>

INFO:omni: <!-- Reserved resources for:

Slice: IG-MON-3-OF

at AM:

URN: unspecified_AM_URN

URL: https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1

-->

INFO:omni:

<rspec xmlns="http://www.geni.net/resources/rspec/3"

xmlns:xs="http://www.w3.org/2001/XMLSchema-instance"

xmlns:openflow="http://www.geni.net/resources/rspec/ext/openflow/3"

xs:schemaLocation="http://www.geni.net/resources/rspec/3

http://www.geni.net/resources/rspec/3/manifest.xsd

http://www.geni.net/resources/rspec/ext/openflow/3

http://www.geni.net/resources/rspec/ext/openflow/3/of-resv.xsd"

type="manifest">

<openflow:sliver description=" InstaGENI OpenFlow" email="lnevers@bbn.com">

<openflow:controller url="tcp:mallorea.gpolab.bbn.com:33018" type="primary" />

<openflow:group name="bbn-instageni-1750">

<openflow:datapath component_id="urn:publicid:IDN+openflow:foam:foam.instageni.gpolab.bbn.com+datapath+06:d6:84:34:97:c6:c9:00"

component_manager_id="urn:publicid:IDN+openflow:foam:foam.instageni.gpolab.bbn.com+authority+am" />

</openflow:group>

<openflow:match>

<openflow:use-group name="bbn-instageni-1750" />

<openflow:packet>

<openflow:dl_type value="0x800,0x806"/>

<openflow:nw_dst value="10.42.18.0/24"/>

<openflow:nw_src value="10.42.18.0/24"/>

</openflow:packet>

</openflow:match>

</openflow:sliver>

</rspec>

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createsliver:

Options as run:

aggregate: ['ig-of-gpo']

api_version: 1

framework: pg

Args: createsliver IG-MON-3-OF ./instageni-openflow-at-gpo.rspec

Result Summary: Your AMs do not all speak requested API v2. At the URLs you are contacting, all your AMs speak AM API v1. Switching to AM API v1. Next time call Omni with '-V1'.

Got Reserved resources RSpec from foam-instageni-gpolab-bbn-com

INFO:omni: ============================================================

This sliver is auto approved. State can be confirmed with omni command:

$ omni.py sliverstatus -a ig-of-gpo IG-MON-3-OF -V1

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Substituting AM nickname ig-of-gpo with URL https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1, URN unspecified_AM_URN

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF expires on 2013-03-07 16:26:04 UTC

INFO:omni:Substituting AM nickname ig-of-gpo with URL https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1, URN unspecified_AM_URN

INFO:omni:Status of Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF:

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF at AM https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1 has overall SliverStatus: ready

INFO:omni:Sliver status for Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF at AM URL https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1

INFO:omni:{

"geni_status": "ready",

"geni_urn": "urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF:8070b745-84c7-47eb-8e04-63a764997f3a",

"foam_pend_reason": null,

"foam_expires": "2013-03-07 16:26:04+00:00",

"geni_resources": [

{

"geni_urn": "urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF:8070b745-84c7-47eb-8e04-63a764997f3a",

"geni_error": "",

"geni_status": "ready"

}

],

"foam_status": "Approved"

}

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed sliverstatus:

Options as run:

aggregate: ['ig-of-gpo']

api_version: 1

framework: pg

Args: sliverstatus IG-MON-3-OF

Result Summary: Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF expires on 2013-03-07 16:26:04 UTC

Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-MON-3-OF at AM https://foam.instageni.gpolab.bbn.com:3626/foam/gapi/1 has overall SliverStatus: ready.

Returned status of slivers on 1 of 1 possible aggregates.

INFO:omni: ============================================================
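The status check can also be done programmatically. The sketch below is a minimal example, assuming the JSON document printed by `sliverstatus` has been saved or captured as text; the helper name `sliver_is_ready` is hypothetical, and the field names are the ones visible in the output above.

```python
import json

# Status document as printed by the sliverstatus call above, abridged to the
# fields that are checked here.
STATUS_JSON = """
{
  "geni_status": "ready",
  "foam_pend_reason": null,
  "geni_resources": [
    {"geni_error": "", "geni_status": "ready"}
  ],
  "foam_status": "Approved"
}
"""


def sliver_is_ready(status):
    """Return True if the sliver and every listed resource report 'ready'.

    Hypothetical helper; it is not part of Omni or FOAM.
    """
    if status.get("geni_status") != "ready":
        return False
    if status.get("foam_status") != "Approved":
        return False
    return all(resource.get("geni_status") == "ready"
               for resource in status.get("geni_resources", []))


print(sliver_is_ready(json.loads(STATUS_JSON)))
```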

Determine login for assigned nodes:

$ readyToLogin.py -a ig-gpo IG-MON-3-OF

<...>

gpo-ig's geni_status is: ready (am_status:ready)

User lnevers logins to gpo-ig using:

xterm -e ssh -p 32058 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com &

gpo-ig2's geni_status is: ready (am_status:ready)

User lnevers logins to gpo-ig2 using:

xterm -e ssh -p 32059 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com &

Login to VM named 'gpo-ig' and send traffic to remote:

$ ssh -p 32058 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com

[lnevers@ig-gpo ~]$ ping 10.42.18.42 -c 5

PING 10.42.18.42 (10.42.18.42) 56(84) bytes of data.

64 bytes from 10.42.18.42: icmp_req=1 ttl=64 time=6.73 ms

64 bytes from 10.42.18.42: icmp_req=2 ttl=64 time=0.077 ms

64 bytes from 10.42.18.42: icmp_req=3 ttl=64 time=0.075 ms

64 bytes from 10.42.18.42: icmp_req=4 ttl=64 time=0.075 ms

64 bytes from 10.42.18.42: icmp_req=5 ttl=64 time=0.075 ms

--- 10.42.18.42 ping statistics ---

5 packets transmitted, 5 received, 0% packet loss, time 4001ms

rtt min/avg/max/mdev = 0.075/1.407/6.735/2.664 ms

Login to VM named 'gpo-ig2' and send traffic to remote:

$ ssh -p 32059 -i /home/lnevers/.ssh/id_rsa lnevers@pc2.instageni.gpolab.bbn.com

[lnevers@ig-gpo2 ~]$ ping 10.42.18.43 -c 5

PING 10.42.18.43 (10.42.18.43) 56(84) bytes of data.

64 bytes from 10.42.18.43: icmp_req=1 ttl=64 time=6.33 ms

64 bytes from 10.42.18.43: icmp_req=2 ttl=64 time=0.075 ms

64 bytes from 10.42.18.43: icmp_req=3 ttl=64 time=0.074 ms

64 bytes from 10.42.18.43: icmp_req=4 ttl=64 time=0.076 ms

64 bytes from 10.42.18.43: icmp_req=5 ttl=64 time=0.074 ms

--- 10.42.18.43 ping statistics ---

5 packets transmitted, 5 received, 0% packet loss, time 4000ms

rtt min/avg/max/mdev = 0.074/1.327/6.337/2.505 ms

[lnevers@ig-gpo2 ~]$

Leave experiment running

Step 4. Administrator review of running experiments

As an administrator, determine the status, resource assignment, and configuration of the 3 experiments that are running from the previous steps:

- EG-MON-3 - a 2 VM experiment

- IG-MON-3 - a 2 raw pc experiment

- IG-MON-3-OF - a 2 VM OpenFlow experiment

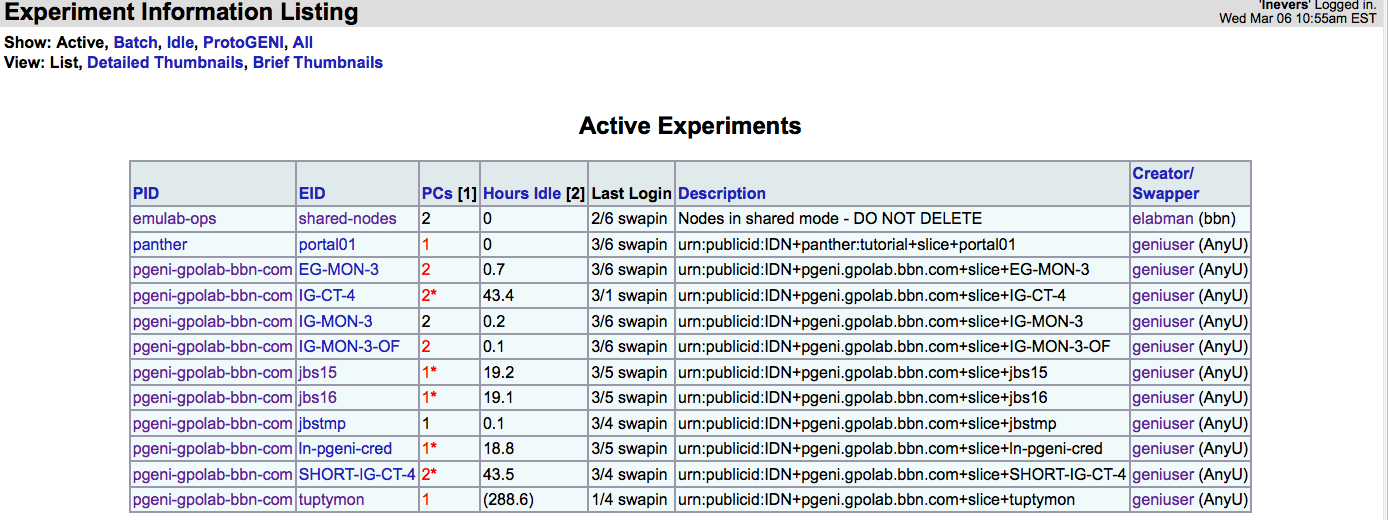

Determine the list of active experiments by selecting the "Experimentation" pulldown in "red dot" mode and choosing "Experiment list", which maps to the URL https://boss.instageni.gpolab.bbn.com/showexp_list.php3. A capture of the active experiment page shows:

Each of the 3 experiments created for this test case (EG-MON-3, IG-MON-3, and IG-MON-3-OF) is present in the list.

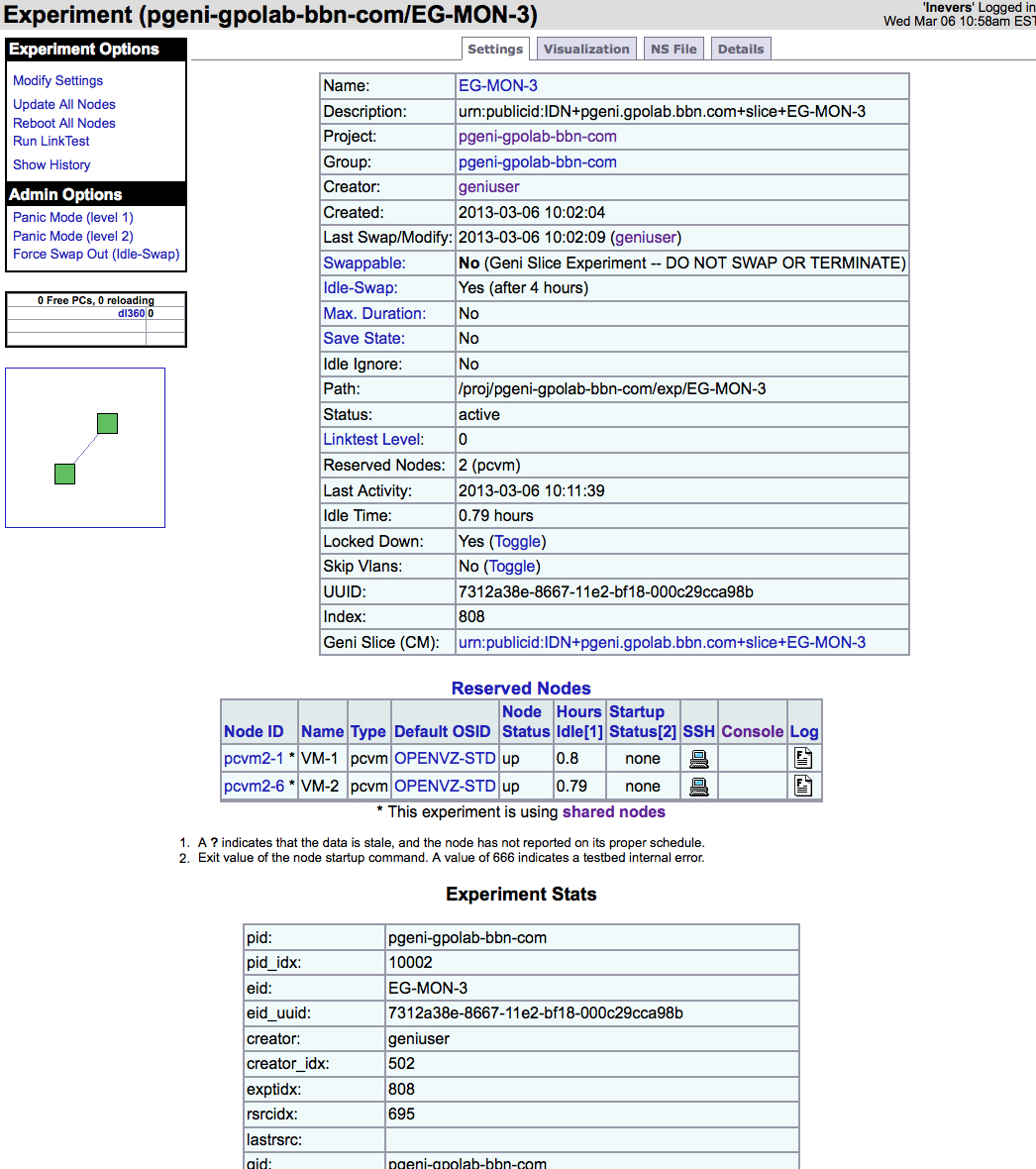

Select the experiment EG-MON-3 (2 VM experiment) to show all known details for the experiment:

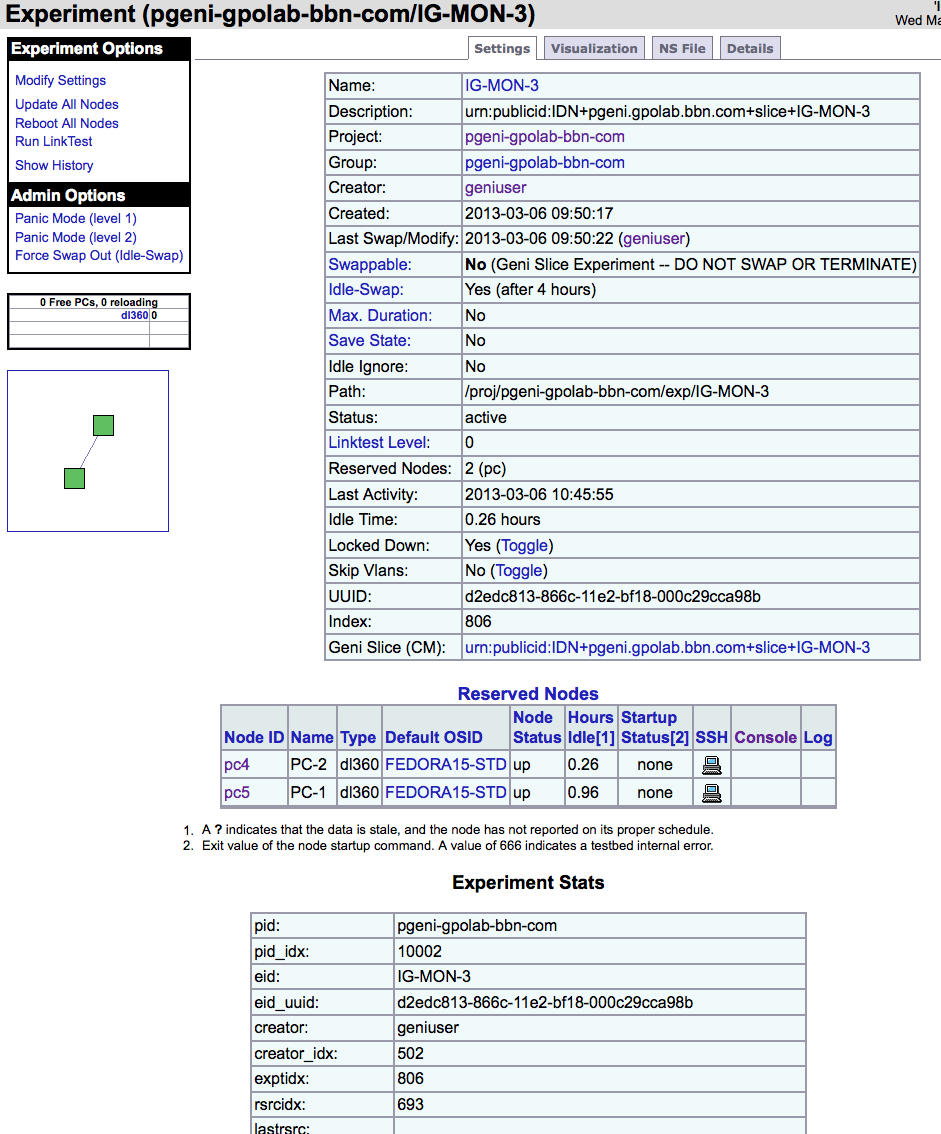

Select the experiment IG-MON-3 (2 raw pc experiment) to show all known details for the experiment:

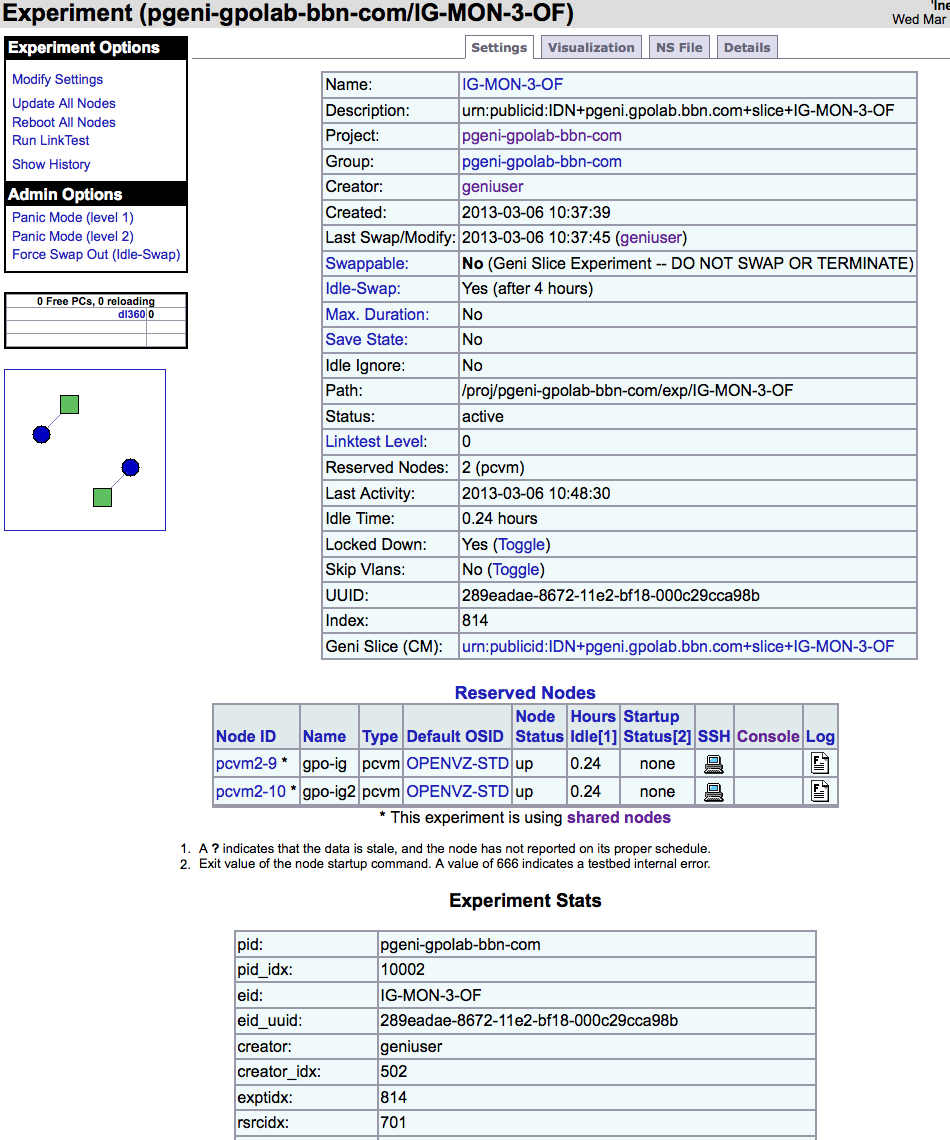

Select the experiment IG-MON-3-OF (2 VM OpenFlow experiment) to show all known details for the experiment:

For each of the above experiments, clicking on the topology diagram in the left-hand panel opens a details page that includes the specific configuration and allocation for the experiment.

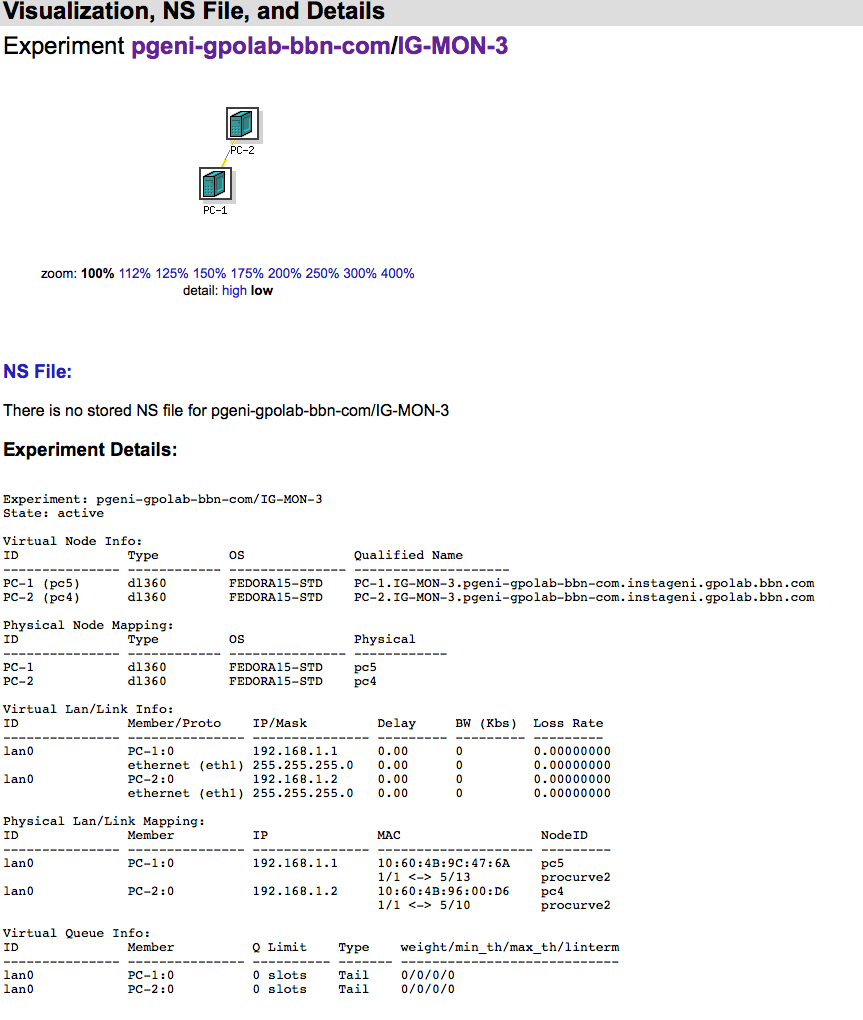

Clicking on the topology for the experiment IG-MON-3 (2 raw pc experiment) shows:

The above shows node mapping, IP Port allocation, and other useful information.

For the OpenFlow experiment, the

{ 'attributes':

{ 'client_id': 'phys1:if0',

'component_id': 'urn:publicid:IDN+utah.geniracks.net+interface+pc3:eth1',

'mac_address': 'e83935b14e8a',

...

{ 'attributes':

{ 'client_id': 'virt1:if0',

'component_id': 'urn:publicid:IDN+utah.geniracks.net+interface+pc5:eth1',

'mac_address': '00000a0a0102',

- So i think it should be possible for the admin interface to know that virtual mac address too.

- Huh, but also, that mac address reported in sliverstatus is in fact wrong. Let me summarize:

MAC addrs reported for phys1:0 == 10.10.1.1

E8:39:35:B1:4E:8A: from /sbin/ifconfig eth1 run on phys1 (authoritative)

e83935b14e8a: from sliverstatus as experimenter (correct)

e8:39:35:b1:4e:8a: from: https://boss.utah.geniracks.net/showexp.php3?experiment=363#details (correct)

MAC addrs reported for virt1:0 == 10.10.1.2

82:01:0A:0A:01:02: from /sbin/ifconfig mv1.1 run on virt1 (authoritative)

00000a0a0102: from sliverstatus as experimenter (incorrect: first four digits are wrong)

- : from https://boss.utah.geniracks.net/showexp.php3?experiment=363#details (not reported)

I opened 26 for this issue.
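The comparison above has to ignore formatting differences (case and colon separators) before it can tell whether two reports agree. A minimal sketch of that check follows; the helper names `normalize_mac` and `differing_octets` are made up for this illustration, and the addresses are the ones reported above.

```python
def normalize_mac(mac):
    """Reduce a MAC address to 12 lowercase hex digits."""
    digits = mac.replace(":", "").replace("-", "").lower()
    if len(digits) != 12 or any(c not in "0123456789abcdef" for c in digits):
        raise ValueError("not a MAC address: %r" % mac)
    return digits


def differing_octets(mac_a, mac_b):
    """Return the 0-based octet positions at which two MACs differ."""
    a, b = normalize_mac(mac_a), normalize_mac(mac_b)
    return [i for i in range(6) if a[2 * i:2 * i + 2] != b[2 * i:2 * i + 2]]


# phys1: ifconfig and sliverstatus agree once formatting is ignored.
print(differing_octets("E8:39:35:B1:4E:8A", "e83935b14e8a"))   # []
# virt1: the first two octets (first four hex digits) differ.
print(differing_octets("82:01:0A:0A:01:02", "00000a0a0102"))   # [0, 1]
```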

- Now, use the OpenVZ host itself to view activity:

- As an admin, login to pc5.utah.geniracks.net

- Poking around, i was led to a couple of prospective data sources:

- Logs in /var/emulab

- The vzctl RPM, containing a number of OpenVZ control commands

- The latter seems to give a list of running VMs easily:

vhost1,[/var/emulab],05:00(1)$ sudo vzlist -a

CTID NPROC STATUS IP_ADDR HOSTNAME

1 15 running - virt1.ecgtest.pgeni-gpolab-bbn-com.utah.geniracks.net

- I also see a command to figure out which container is running a given PID. Suppose i run top and am concerned about an sshd process chewing up all system CPU:

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

51817 20001 20 0 116m 3780 872 R 94.4 0.0 0:05.74 sshd

- Since the user is numeric, i can assume this process is probably running in a container, so find out which one:

vhost1,[/var/emulab],05:05(0)$ sudo vzpid 51766

Pid CTID Name

51766 1 sshd

chaos 51804 51163 0 05:04 pts/0 00:00:00 grep --color=auto ssh

- and then look up the container info as above.

- The files in /var/emulab give details about how each experiment was created. In particular:

Information about experiment startup attributes:

/var/emulab/boot/tmcc.pcvm5-1/

/var/emulab/boot/tmcc.pcvm5-2/

Logs of experiment progress:

/var/emulab/logs/tbvnode-pcvm5-1.log

/var/emulab/logs/tbvnode-pcvm5-2.log

/var/emulab/logs/tmccproxy.pcvm5-1.log

/var/emulab/logs/tmccproxy.pcvm5-2.log

- These may be useful for running and terminated experiments if the context IDs are unique.

Results of testing step 4: 2012-05-21

- Per-host view of current state:

- From https://boss.utah.geniracks.net/nodecontrol_list.php3?showtype=dl360 in red dot mode, i can once again see that pc4 is allocated as phys1 to pgeni-gpolab-bbn-com/ecgtest.

- I can see that pc1 and pc3 are configured as OpenVZ shared hosts, but i can't see what experiments they are running.

- Per-experiment view of current state:

- Browse to https://boss.utah.geniracks.net/genislices.php and find one slice running on the Component Manager:

ID HRN Created Expires

535 bbn-pgeni.ecgtest (ecgtest) 2012-05-21 13:19:28 2012-05-22 10:02:36

- Click (ecgtest) to view the details of that experiment at https://boss.utah.geniracks.net/showexp.php3?experiment=536#details.

- This shows what nodes it's using, including that its VM has been put on pc3:

Physical Node Mapping:

ID Type OS Physical

--------------- ------------ --------------- ------------

phys1 dl360 FEDORA15-STD pc4

virt1 pcvm OPENVZ-STD pcvm3-1 (pc3)

- Here are some other interesting things, all of which are similar to Friday's test:

IP Port allocation:

Low High

--------------- ------------

30000 30255

SSHD Port allocation ('ssh -p portnum'):

ID Port SSH command

--------------- ---------- ----------------------

Physical Lan/Link Mapping:

ID Member IP MAC NodeID

--------------- --------------- --------------- -------------------- ---------

phys1-virt1-0 phys1:0 10.10.1.1 e8:39:35:b1:ec:9e pc4

1/1 <-> 1/37 procurve2

phys1-virt1-0 virt1:0 10.10.1.2 pcvm3-1

- Now, use the OpenVZ host itself to view activity:

- As an admin, login to pc3.utah.geniracks.net

- Everything seems similar to when i looked Friday:

vhost2,[~],13:57(0)$ sudo vzlist -a

CTID NPROC STATUS IP_ADDR HOSTNAME

1 19 running - virt1.ecgtest.pgeni-gpolab-bbn-com.utah.geniracks.net

Results of testing step 4: 2012-05-26

- Per-host view of current state:

- From https://boss.utah.geniracks.net/nodecontrol_list.php3?showtype=dl360 in red dot mode, i can once again see that pc2 is allocated as phys1 to pgeni-gpolab-bbn-com/ecgtest.

- I can see that pc3 and pc5 are configured as OpenVZ shared hosts, but i can't see what experiments they are running.

- Using https://boss.utah.geniracks.net/showpool.php, i can see that pc3 is running one VM and pc5 is running zero, but not what experiments each is running. I opened 35 to ask whether a node-to-experiment mapping would be an easy modification to showpool.php.

- Per-experiment view of current state:

- Browse to https://boss.utah.geniracks.net/genislices.php and find two slices running on the Component Manager:

ID HRN Created Expires

949 bbn-pgeni.lnubuntu12b (lnubuntu12b) 2012-05-25 09:54:08 2012-05-29 18:00:00

951 bbn-pgeni.ecgtest (ecgtest) 2012-05-26 05:30:20 2012-06-29 18:00:00

- Click (ecgtest) to view the details of that experiment at https://boss.utah.geniracks.net/showexp.php3?experiment=952#details.

- This shows what nodes it's using, including that its VM has been put on pc3:

Physical Node Mapping:

ID Type OS Physical

--------------- ------------ --------------- ------------

phys1 dl360 FEDORA15-STD pc2

virt1 pcvm OPENVZ-STD pcvm3-1 (pc3)

- Here are some other interesting things:

IP Port allocation:

Low High

--------------- ------------

30000 30255

SSHD Port allocation ('ssh -p portnum'):

ID Port SSH command

--------------- ---------- ----------------------

Physical Lan/Link Mapping:

ID Member IP MAC NodeID

--------------- --------------- --------------- -------------------- ---------

phys1-virt1-0 phys1:0 10.10.1.1 e8:39:35:b1:0c:7e pc2

1/1 <-> 1/28 procurve2

phys1-virt1-0 virt1:0 10.10.1.2 00:00:0a:0a:01:02 pcvm3-1

- So, indeed, a MAC address is reported for the virtual node. However, virt1 itself still says:

mv1.1 Link encap:Ethernet HWaddr 82:01:0A:0A:01:02

inet addr:10.10.1.2 Bcast:10.10.1.255 Mask:255.255.255.0

- Now, use the OpenVZ host itself to view activity:

- As an admin, login to pc3.utah.geniracks.net

- Everything seems similar to when i looked Friday:

vhost2,[~],06:00(0)$ sudo vzlist -a

CTID NPROC STATUS IP_ADDR HOSTNAME

1 19 running - virt1.ecgtest.pgeni-gpolab-bbn-com.utah.geniracks.net

Earlier, i said:

- Using https://boss.utah.geniracks.net/showpool.php, i can see that pc3 is running one VM and pc5 is running zero, but not what experiments each is running. I opened 35 to ask whether a node-to-experiment mapping would be an easy modification to showpool.php.

- Leigh responded that this is already at the bottom of the node UI, see e.g. https://boss.utah.geniracks.net/shownode.php3?node_id=pc3 (which you can get to by clicking through from showpool.php). So this is good for our purposes.

Step 5: get information about terminated experiments

Using:

- On boss, use AM state, logs, or administrator interfaces to find evidence of the two terminated experiments.

- Determine how many other experiments were run in the past day.

- Determine which GENI user created each of the terminated experiments.

- Determine the mapping of experiments to OpenVZ or exclusive hosts for each of the terminated experiments.

- Determine the control and dataplane MAC addresses assigned to each VM in each terminated experiment.

- Determine any IP addresses assigned by InstaGENI to each VM in each terminated experiment.

- Given a control IP address which InstaGENI had assigned to a now-terminated VM, determine which experiment was given that control IP.

- Given a data plane IP address which an experimenter had requested for a now-terminated VM, determine which experiment was given that IP.

Verify:

- A site administrator can get ownership and resource allocation information for recently-terminated experiments which used OpenVZ VMs.

- A site administrator can get ownership and resource allocation information for recently-terminated experiments which used physical hosts.

- A site administrator can get information about MAC addresses and IP addresses used by recently-terminated experiments.

Results of testing step 5: 2012-05-21

- In red dot mode, at https://boss.utah.geniracks.net/genihistory.php, i can view lots of previous slivers, of which ecgtest3 and ecgtest2 are among the most recent.

- I can type:

urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest2

into the search box, and bring up all previous instances of slivers in that slice.

- Note that this is an exact match, not a regexp:

urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest

only pulls up ecgtest slivers, not ecgtest2 or ecgtest3. And just searching for ecgtest reports nothing.

- As promised by the default text in the search box, searching for:

urn:publicid:IDN+pgeni.gpolab.bbn.com+user+chaos

does appear to get all of my slivers.

- That UI shows that the following slivers were created in the past 24 hours:

| ID | Slice HRN/URN | Creator HRN/URN | Created | Destroyed | Manifest |

| 784 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+chaos | 2012-05-21 13:19:41 | | manifest |

| 778 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest3 | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+chaos | 2012-05-21 12:51:18 | 2012-05-21 12:56:36 | manifest |

| 772 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest2 | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+chaos | 2012-05-21 12:17:44 | 2012-05-21 12:40:30 | manifest |

| 760 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+chaos | 2012-05-21 09:05:11 | 2012-05-21 09:27:04 | manifest |

| 718 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+20vm | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+lnevers | 2012-05-21 08:03:37 | 2012-05-21 10:34:19 | manifest |

| 686 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+15vm | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+lnevers | 2012-05-21 07:47:56 | 2012-05-21 10:52:30 | manifest |

| 654 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+15vm | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+lnevers | 2012-05-21 07:32:17 | 2012-05-21 07:39:03 | manifest |

| 622 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+2vmubuntu | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+lnevers | 2012-05-21 07:24:50 | 2012-05-21 07:29:53 | manifest |

| 616 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+2vmubuntu | urn:publicid:IDN+pgeni.gpolab.bbn.com+user+lnevers | 2012-05-21 07:10:14 | 2012-05-21 07:23:27 | manifest |

- That display shows which GENI user created each experiment.

- The clickable manifests can be used to get the sliver-to-resource mappings. Within each manifest, <rs:vnode /> elements can be used to find the resources used by the experiment. These look like:

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pc4"><host name="phys1.ecgtest.pgeni-gpolab-bbn-com.utah.geniracks.net"><services><login authentication="ssh-keys" hostname="pc4.utah.geniracks.net" port="22" username="chaos"></login></services></host></rs:vnode></sliver_type></node>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pcvm3-1"><host name="virt1.ecgtest.pgeni-gpolab-bbn-com.utah.geniracks.net"><services><login authentication="ssh-keys" hostname="pc3.utah.geniracks.net" port="30010" username="chaos"></login></services></host></rs:vnode></sliver_type></node>

- In addition, the manifests contain dataplane IP addresses and MAC addresses for each experiment (though these are wrong or missing for VMs, per 26)

- Here is all the information i can get this way:

| Emulab ID | Sliver URN | Physical nodes | OpenVZ containers | Dataplane IPs and MACs |

| 784 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest | pc4(phys1) | pc3:pcvm3-1(virt1) | 10.10.1.1(phys1:e83935b1ec9e) 10.10.1.2(virt1:00000a0a0102) |

| 778 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest3 | pc5(phys1) pc4(phys2) | | 10.10.1.1(phys1:e4115bed1cb6) 10.10.1.2(phys2:e83935b1ec9e) |

| 772 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest2 | | pc3:pcvm3-1(virt1) pc3:pcvm3-2(virt2) | 10.10.1.1(virt1:UNKNOWN) 10.10.1.2(virt2:UNKNOWN) |

| 760 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+ecgtest | | pc5:pcvm5-21(virt01) pc5:pcvm5-22(virt02) pc5:pcvm5-23(virt03) pc5:pcvm5-24(virt04) pc5:pcvm5-25(virt05) pc5:pcvm5-26(virt06) pc5:pcvm5-27(virt07) pc5:pcvm5-28(virt08) pc5:pcvm5-29(virt09) pc5:pcvm5-30(virt10) pc1:pcvm1-1(virt11) | |

| 718 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+20vm | | pc5:pcvm5-11(VM-1) pc2:pcvm2-8(VM-2) pc5:pcvm5-16(VM-3) pc5:pcvm5-17(VM-4) pc2:pcvm2-9(VM-5) pc2:pcvm2-10(VM-6) pc5:pcvm5-18(VM-7) pc5:pcvm5-19(VM-8) pc5:pcvm5-20(VM-9) pc5:pcvm5-12(VM-10) pc5:pcvm5-13(VM-11) pc2:pcvm2-1(VM-12) pc2:pcvm2-2(VM-13) pc2:pcvm2-3(VM-14) pc2:pcvm2-4(VM-15) pc5:pcvm5-14(VM-16) pc2:pcvm2-5(VM-17) pc2:pcvm2-6(VM-18) pc2:pcvm2-7(VM-19) pc5:pcvm5-15(VM-20) | 10.10.1.1(VM-1:00000a0a0101) 10.10.1.2(VM-2:00000a0a0102) 10.10.1.3(VM-3:00000a0a0103) 10.10.1.4(VM-4:00000a0a0104) 10.10.1.5(VM-5:00000a0a0105) 10.10.1.6(VM-6:00000a0a0106) 10.10.1.7(VM-7:00000a0a0107) 10.10.1.8(VM-8:00000a0a0108) 10.10.1.9(VM-9:00000a0a0109) 10.10.1.10(VM-10:00000a0a010a) 10.10.1.20(VM-11:00000a0a0114) 10.10.1.19(VM-12:00000a0a0113) 10.10.1.11(VM-13:00000a0a010b) 10.10.1.12(VM-14:00000a0a010c) 10.10.1.13(VM-15:00000a0a010d) 10.10.1.14(VM-16:00000a0a010e) 10.10.1.15(VM-17:00000a0a010f) 10.10.1.16(VM-18:00000a0a0110) 10.10.1.17(VM-19:00000a0a0111) 10.10.1.18(VM-20:00000a0a0112) |

| 686 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+15vm | | pc5:pcvm5-1(VM-1) pc5:pcvm5-6(VM-2) pc5:pcvm5-7(VM-3) pc4:pcvm4-3(VM-4) pc5:pcvm5-8(VM-5) pc4:pcvm4-4(VM-6) pc5:pcvm5-9(VM-7) pc4:pcvm4-5(VM-8) pc5:pcvm5-10(VM-9) pc5:pcvm5-2(VM-10) pc5:pcvm5-3(VM-11) pc5:pcvm5-4(VM-12) pc4:pcvm4-1(VM-13) pc4:pcvm4-2(VM-14) pc5:pcvm5-5(VM-15) | 10.10.1.1(VM-1:00000a0a0101) 10.10.1.2(VM-2:00000a0a0102) 10.10.1.3(VM-3:00000a0a0103) 10.10.1.4(VM-4:UNKNOWN) 10.10.1.5(VM-5:00000a0a0105) 10.10.1.6(VM-6:UNKNOWN) 10.10.1.7(VM-7:00000a0a0107) 10.10.1.8(VM-8:UNKNOWN) 10.10.1.9(VM-9:00000a0a0109) 10.10.1.10(VM-10:00000a0a010a) 10.10.1.15(VM-11:00000a0a010f) 10.10.1.14(VM-12:00000a0a010e) 10.10.1.11(VM-13:UNKNOWN) 10.10.1.12(VM-14:UNKNOWN) 10.10.1.13(VM-15:00000a0a010d) |

| 654 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+15vm | | pc2:pcvm2-1(VM-1) pc5:pcvm5-5(VM-2) pc5:pcvm5-6(VM-3) pc5:pcvm5-7(VM-4) pc5:pcvm5-8(VM-5) pc2:pcvm2-4(VM-6) pc2:pcvm2-5(VM-7) pc5:pcvm5-9(VM-8) pc5:pcvm5-10(VM-9) pc2:pcvm2-2(VM-10) pc5:pcvm5-1(VM-11) pc5:pcvm5-2(VM-12) pc5:pcvm5-3(VM-13) pc2:pcvm2-3(VM-14) pc5:pcvm5-4(VM-15) | 10.10.1.2(VM-2:00000a0a0102) 10.10.1.3(VM-3:00000a0a0103) 10.10.1.4(VM-4:00000a0a0104) 10.10.1.5(VM-5:00000a0a0105) 10.10.1.8(VM-8:00000a0a0108) 10.10.1.9(VM-9:00000a0a0109) 10.10.1.15(VM-11:00000a0a010f) 10.10.1.14(VM-12:00000a0a010e) 10.10.1.11(VM-13:00000a0a010b) 10.10.1.13(VM-15:00000a0a010d) 10.10.1.1(VM-1:UNKNOWN) 10.10.1.6(VM-6:UNKNOWN) 10.10.1.7(VM-7:UNKNOWN) 10.10.1.10(VM-10:UNKNOWN) 10.10.1.12(VM-14:UNKNOWN) |

| 622 | urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+2vmubuntu | | pc5:pcvm5-1(VM-1) pc1:pcvm1-3(VM-2) pc5:pcvm5-6(VM-3) pc1:pcvm1-4(VM-4) pc5:pcvm5-7(VM-5) pc1:pcvm1-5(VM-6) pc5:pcvm5-8(VM-7) pc5:pcvm5-9(VM-8) pc5:pcvm5-10(VM-9) pc1:pcvm1-1(VM-10) pc5:pcvm5-2(VM-11) pc5:pcvm5-3(VM-12) pc5:pcvm5-4(VM-13) pc5:pcvm5-5(VM-14) pc1:pcvm1-2(VM-15) | 10.10.1.1(VM-1:00000a0a0101) 10.10.1.3(VM-3:00000a0a0103) 10.10.1.5(VM-5:00000a0a0105) 10.10.1.7(VM-7:00000a0a0107) 10.10.1.8(VM-8:00000a0a0108) 10.10.1.9(VM-9:00000a0a0109) 10.10.1.15(VM-11:00000a0a010f) 10.10.1.14(VM-12:00000a0a010e) 10.10.1.11(VM-13:00000a0a010b) 10.10.1.12(VM-14:00000a0a010c) 10.10.1.2(VM-2:UNKNOWN) 10.10.1.4(VM-4:UNKNOWN) 10.10.1.6(VM-6:UNKNOWN) 10.10.1.10(VM-10:UNKNOWN) 10.10.1.13(VM-15:UNKNOWN) |


- Note, i semi-automated getting that information from the manifest using awk, as follows:

- Download the XML data from the page (copy/paste)

- Find every line that starts with <interface, and concatenate the next line, which contains </interface>, to it

- Find the node assignments:

grep "<rs:vnode" tmpfile | awk '{print $6 " " $3 " " $4}' | awk -F= '{print $2 " " $3 " " $4}' | awk -F\" '{print $2 " " $4 " " $6}' | awk -F\. '{print $1 " " $4}' | awk '{print $1 ":" $3 "(" $4 ")"}'

- Find the interface data for interfaces which have mac addresses defined:

grep "<interface " tmpfile | grep mac_address | awk '{print $7 " " $2 " " $5}' | awk -F\" '{print $2 " " $4 " " $6}' | awk -F: '{print $1 " " $2}' | awk '{print $1 "(" $2 ":" $4 ")"}'

- Find the interface data for interfaces which don't have mac addresses defined:

grep "<interface " tmpfile | grep -v mac_address | awk '{print $6 " " $2}' | awk -F\" '{print $2 " " $4}' | awk -F: '{print $1}' | awk '{print $1 "(" $2 ":UNKNOWN)"}'
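For comparison, the node-assignment step can be sketched in Python instead of chained awk calls. This is only an illustration, assuming the manifest text contains `<rs:vnode>` elements shaped like the two shown earlier; the regular expression and the function name `node_assignments` are not from the original procedure. It collapses `host:vnode` to just `host` when the two are identical, matching how physical nodes appear in the table above.

```python
import re

# Two <rs:vnode> elements copied from the manifest excerpt above.
SAMPLE = (
    '<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" '
    'name="pc4"><host name="phys1.ecgtest.pgeni-gpolab-bbn-com.utah.geniracks.net">'
    '<services><login authentication="ssh-keys" hostname="pc4.utah.geniracks.net" '
    'port="22" username="chaos"></login></services></host></rs:vnode>\n'
    '<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" '
    'name="pcvm3-1"><host name="virt1.ecgtest.pgeni-gpolab-bbn-com.utah.geniracks.net">'
    '<services><login authentication="ssh-keys" hostname="pc3.utah.geniracks.net" '
    'port="30010" username="chaos"></login></services></host></rs:vnode>\n'
)

# vnode: Emulab node name; client: first label of the <host> name;
# host: first label of the login hostname (the physical machine).
VNODE_RE = re.compile(
    r'<rs:vnode\b[^>]*\bname="(?P<vnode>[^"]+)"[^>]*>'
    r'\s*<host\s+name="(?P<client>[^".]+)[^"]*"'
    r'.*?\bhostname="(?P<host>[^".]+)'
)


def node_assignments(manifest_text):
    """Return entries such as 'pc3:pcvm3-1(virt1)' for each <rs:vnode>."""
    results = []
    for match in VNODE_RE.finditer(manifest_text):
        vnode, client, host = match.group("vnode", "client", "host")
        if vnode == host:
            # Physical node: the vnode name is the host itself.
            results.append(f"{host}({client})")
        else:
            results.append(f"{host}:{vnode}({client})")
    return results


print(node_assignments(SAMPLE))
```

A regular expression is used rather than an XML parser because the excerpt is a fragment, not a complete well-formed manifest.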

- Incidentally, i came across this UI (https://boss.utah.geniracks.net/showpool.php), which shows the utilization of the nodes in the shared pool. That's not bad.

- I poked around regarding how to do these:

- Determine the control and dataplane MAC addresses assigned to each VM in each terminated experiment.

- Determine any IP addresses assigned by InstaGENI to each VM in each terminated experiment.

Since i couldn't figure out anything really bulletproof, i created 31 to see whether Utah has a preferred solution to this. It's possible that some of this information can be obtained from the OpenVZ hosts. However, i can't get the information for e.g. Luisa's 20 VM experiment from earlier today, because the hosts have been swapped out and back in since then. This is an unusual situation in general, but not an unheard-of one. It would be better to cache information which might be forensically relevant on boss.

This test is also blocked by 26 from being fully completed, though i expect that the relevant parts of this will succeed too, and a cursory check should be sufficient.

Step 6: get OpenFlow state information

Using:

- On the dataplane switch, get a list of controllers, and see if any additional controllers are serving experiments.

- On the flowvisor VM, get a list of active FV slices from the FlowVisor

- On the FOAM VM, get a list of active slivers from FOAM

- Use FV, FOAM, or the switch to list the flowspace of a running OpenFlow experiment.

Verify:

- A site administrator can get information about the OpenFlow resources used by running experiments.

- When an OpenFlow experiment is started by InstaGENI, a new controller is added directly to the switch.

- No new FlowVisor slices are added for new OpenFlow experiments started by InstaGENI.

- No new FOAM slivers are added for new OpenFlow experiments started by InstaGENI.

Step 7: verify MAC addresses on the rack dataplane switch

Using:

- Establish a privileged login to the dataplane switch

- Obtain a list of the full MAC address table of the switch

- On boss and the experimental hosts, use available data sources to determine which host or VM owns each MAC address.

Verify:

- It is possible to identify and classify every MAC address visible on the switch
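One way to carry out the classification, once the switch MAC table and the per-experiment MAC data have been collected by hand, is sketched below. The function name `classify_macs` and the sample inputs are hypothetical: the first two addresses and owner labels come from the Step 4 results on this page, and the third address is made up to show an unidentified entry.

```python
def classify_macs(switch_macs, known_owners):
    """Split switch MAC-table entries into identified and unidentified.

    switch_macs: iterable of MAC strings as read from the switch.
    known_owners: dict mapping MAC strings to an owner label.
    Separator and case differences between the two sources are ignored.
    """
    def key(mac):
        return mac.replace(":", "").replace("-", "").lower()

    owners = {key(mac): owner for mac, owner in known_owners.items()}
    identified, unknown = {}, []
    for mac in switch_macs:
        if key(mac) in owners:
            identified[mac] = owners[key(mac)]
        else:
            unknown.append(mac)
    return identified, unknown


known = {
    "e83935b14e8a": "phys1 (pc3 eth1)",
    "82:01:0A:0A:01:02": "virt1 (mv1.1)",
}
table = ["e8:39:35:b1:4e:8a", "82:01:0a:0a:01:02", "00:11:22:33:44:55"]
identified, unknown = classify_macs(table, known)
print(identified)
print(unknown)
```

The verification criterion is met only when the second list comes back empty.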

Step 8: verify active dataplane traffic

Using:

- Establish a privileged login to the dataplane switch

- Based on the information from Step 7, determine which interfaces are carrying traffic between the experimental VMs

- Collect interface counters for those interfaces over a period of 10 minutes

- Estimate the rate at which the experiment is sending traffic

Verify:

- The switch reports interface counters, and an administrator can obtain plausible estimates of dataplane traffic quantities by looking at them.
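The rate estimate is simple arithmetic on two counter readings. The sketch below assumes the switch exposes a per-interface octet counter that wraps at a fixed width and that it wrapped at most once during the interval; the function name `average_rate_bps` and the sample readings are made up for illustration.

```python
def average_rate_bps(octets_start, octets_end, seconds, counter_bits=64):
    """Average bit rate between two readings of an interface octet counter.

    Assumes the counter wrapped at most once during the interval.
    """
    if seconds <= 0:
        raise ValueError("interval must be positive")
    modulus = 1 << counter_bits
    delta = (octets_end - octets_start) % modulus
    return delta * 8 / seconds


# Made-up readings taken 10 minutes (600 s) apart: 75,000,000 octets sent.
print(average_rate_bps(1_000_000, 76_000_000, 600))   # 1000000.0 (1 Mbit/s)
```

With 32-bit counters on a busy link, a 10-minute interval can span more than one wrap, in which case shorter sampling intervals or 64-bit counters would be needed.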
