
IG-EXP-1: Bare Metal Support Acceptance Test

This page captures status for the test case IG-EXP-1, which verified support for bare metal nodes. For overall status see the InstaGENI Acceptance Test Status page.

Last update: 2013/02/06

Test Status

This section captures the status for each step in the acceptance test plan.

| Step | State | Ticket | Notes |
|---|---|---|---|
| Step 1 | Pass | | |
| Step 2 | Fail | instaticket:13 | Windows not supported. |
| Step 3 | Fail | | Windows not supported. |
| Step 4 | Pass | | |
| Step 5 | Pass | | |
| Step 6 | Pass | | |
| Step 7 | | | |

| State Legend | Description |
|---|---|
| Pass | Test completed and met all criteria. |
| Pass: most criteria | Test completed and met most criteria. Exceptions documented. |
| Fail | Test completed and failed to meet criteria. |
| Complete | Test completed but will require re-execution due to expected changes. |
| Blocked | Blocked by ticketed issue(s). |
| In Progress | Currently under test. |

Test Plan Steps

A nick_name alias is used for the GPO InstaGENI aggregate manager in the omni_config:

ig-gpo=,http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0
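As a minimal sketch of how the nickname entry above breaks down, the following Python splits it into nickname, URN and URL. It assumes the entry follows the nickname=URN,URL layout; the empty URN field is consistent with the "URN unspecified_AM_URN" messages in the Omni logs on this page. The helper name parse_nickname is hypothetical and is not part of Omni.

```python
def parse_nickname(line):
    """Split an entry assumed to have the form nickname=URN,URL."""
    nickname, _, value = line.partition("=")
    urn, _, url = value.partition(",")
    return nickname.strip(), urn.strip(), url.strip()


entry = "ig-gpo=,http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0"
nickname, urn, url = parse_nickname(entry)
# The URN field is empty in this entry, so only the URL identifies the AM.
print(nickname, repr(urn), url)
```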

GPO ProtoGENI user credentials for lnevers@bbn.com were used for Experimenter1.

Step 1. Determine which nodes can be used as exclusive nodes

Able to determine which nodes are exclusive and whether they are available via Omni listresources. The ListResources output was obtained with the following Omni command:

$ omni.py -a ig-gpo listresources --available -o
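The check described in this step can be sketched in Python against a saved advertisement RSpec. This is an illustration only, assuming the advertisement uses the node attributes (component_name, exclusive) and the available element that appear in the Step 4 listing on this page. The sample document is trimmed, and its pc1 entry is a hypothetical shared node added for contrast.

```python
import xml.etree.ElementTree as ET

# Trimmed, illustrative advertisement. The pc4 entry follows the Step 4
# listing; the pc1 entry is hypothetical and only shows a non-exclusive node.
SAMPLE_AD = """\
<rspec xmlns="http://www.geni.net/resources/rspec/3" type="advertisement">
  <node component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc4"
        component_name="pc4" exclusive="true">
    <available now="true"/>
  </node>
  <node component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc1"
        component_name="pc1" exclusive="false">
    <available now="true"/>
  </node>
</rspec>
"""


def local(tag):
    """Drop the '{namespace}' prefix ElementTree puts on tag names."""
    return tag.rsplit("}", 1)[-1]


def exclusive_available_nodes(rspec_xml):
    """Return component names of nodes that are exclusive and available now."""
    root = ET.fromstring(rspec_xml)
    names = []
    for node in root.iter():
        if local(node.tag) != "node" or node.get("exclusive") != "true":
            continue
        available = [c for c in node if local(c.tag) == "available"]
        if available and available[0].get("now") == "true":
            names.append(node.get("component_name"))
    return names


print(exclusive_available_nodes(SAMPLE_AD))
```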

Step 2. Obtain 2 licensed recent Microsoft OS images for physical nodes from the site (BBN)

Windows is not supported. The InstaGENI team needs to document the non-support of MS Windows and clean up the remaining Windows references (ticket 13).

Step 3. Reserve and boot 2 physical nodes using Microsoft image

MS Windows is not verified (ticket 13). According to email from Leigh, this is very time intensive and would require weeks. Windows XP instructions also existed, but have since been removed.

Step 4. Obtain a recent Linux OS image for physical nodes from the InstaGENI list

Requested the list of OS images currently supported by InstaGENI. Initially only the Standard 32-bit Fedora 15 image was available; two images have since been added to the list of available OS images in the Utah InstaGENI rack (ticket 13). Tested each image made available to experimenters by setting up, for each image, an experiment with 2 raw PCs and 1 LAN (RSpec attached):

- FreeBSD 8.2 32-bit version

- Standard 64-bit Ubuntu 12.04 image

- Standard 32-bit Fedora 15 image (default)

Command used to determine images:

$ omni.py -a ig-gpo listresources --available -o

$ egrep "node component|disk_image|available" rspec-instageni-gpolab-bbn-com-protogeniv2.xml

<...>

<node component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc4" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" component_name="pc4" exclusive="true">

<disk_image default="true" description="Standard 32-bit Fedora 15 image" name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:FEDORA15-STD" os="Fedora" version="15"/>

<disk_image description="FreeBSD 8.2 32-bit version" name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:FBSD82-STD" os="FreeBSD" version="8.2"/>

<disk_image description="Ubuntu 12.04 LTS" name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:UBUNTU12-64-STD" os="Linux" version=""/>

<disk_image default="true" description="Standard 32-bit Fedora 15 image" name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:FEDORA15-STD" os="Fedora" version="15"/>

<disk_image description="Generic osid for openvz virtual nodes." name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:OPENVZ-STD" os="Fedora" version="15"/>

<available now="true"/>
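The egrep above can also be approximated with a short Python sketch that groups disk images by node. It is an illustration under assumptions: the sample is a trimmed advertisement built from the pc4 lines shown above, and the nesting of disk_image under a sliver_type element is assumed here (the function matches on element names only, so it does not depend on that nesting).

```python
import xml.etree.ElementTree as ET

# Trimmed sample built from the pc4 lines in the listing above. The
# sliver_type wrapper is an assumption about the advertisement layout.
SAMPLE_AD = """\
<rspec xmlns="http://www.geni.net/resources/rspec/3" type="advertisement">
  <node component_name="pc4" exclusive="true">
    <sliver_type name="raw-pc">
      <disk_image default="true" description="Standard 32-bit Fedora 15 image"
        name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:FEDORA15-STD"/>
      <disk_image description="FreeBSD 8.2 32-bit version"
        name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:FBSD82-STD"/>
      <disk_image description="Ubuntu 12.04 LTS"
        name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:UBUNTU12-64-STD"/>
    </sliver_type>
    <available now="true"/>
  </node>
</rspec>
"""


def local(tag):
    """Drop the '{namespace}' prefix ElementTree puts on tag names."""
    return tag.rsplit("}", 1)[-1]


def images_by_node(rspec_xml):
    """Map each node's component_name to its image names, without repeats."""
    root = ET.fromstring(rspec_xml)
    result = {}
    for node in root.iter():
        if local(node.tag) != "node":
            continue
        images = []
        for elem in node.iter():
            if local(elem.tag) == "disk_image":
                short = elem.get("name", "").rsplit("+", 1)[-1]
                if short not in images:
                    images.append(short)
        result[node.get("component_name")] = images
    return result


print(images_by_node(SAMPLE_AD))
```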

Step 5. Reserve and boot physical nodes, each loading a different Linux OS image

The RSpec IG-EXP-1-gpo.rspec that includes 1 FreeBSD and 1 Ubuntu node was used to create a slice and sliver at the GPO InstaGENI:

$ omni.py createslice IG-EXP-1

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Created slice with Name IG-EXP-1, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-EXP-1, Expiration 2013-01-25 21:04:16+00:00

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createslice:

Options as run:

framework: pg

Args: createslice IG-EXP-1

Result Summary: Created slice with Name IG-EXP-1, URN urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-EXP-1, Expiration 2013-01-25 21:04:16+00:00

INFO:omni: ============================================================

$ omni.py createsliver -a ig-gpo IG-EXP-1 IG-EXP-1-gpo.rspec

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Substituting AM nickname ig-gpo with URL http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-EXP-1 expires on 2013-01-25 21:04:16 UTC

INFO:omni:Substituting AM nickname ig-gpo with URL http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Substituting AM nickname ig-gpo with URL http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Creating sliver(s) from rspec file IG-EXP-1-gpo.rspec for slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-EXP-1

INFO:omni:Got return from CreateSliver for slice IG-EXP-1 at http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0:

INFO:omni:<!-- Reserved resources for:

Slice: IG-EXP-1

at AM:

URN: unspecified_AM_URN

URL: http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0

-->

INFO:omni:<rspec xmlns="http://www.geni.net/resources/rspec/3" xmlns:flack="http://www.protogeni.net/resources/rspec/ext/flack/1" xmlns:planetlab="http://www.planet-lab.org/resources/sfa/ext/planetlab/1" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" type="manifest" xsi:schemaLocation="http://www.geni.net/resources/rspec/3 http://www.geni.net/resources/rspec/3/manifest.xsd">

<node client_id="host-bsd" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="true" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc4" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+847">

<sliver_type name="raw-pc">

<disk_image description="FreeBSD 8.2 32-bit version" name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:FBSD82-STD" os="FreeBSD" version="8.2"/>

</sliver_type>

<interface client_id="host-bsd:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc4:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+851" mac_address="10604B9600D6">

<ip address="10.10.1.2" type="ipv4"/></interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pc4"/><host name="host-bsd.IG-EXP-1.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc4.instageni.gpolab.bbn.com" port="22" username="lnevers"/></services></node>

<node client_id="host-ubuntu12" component_manager_id="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm" exclusive="true" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+node+pc5" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+846">

<sliver_type name="raw-pc">

<disk_image description="Ubuntu 12.04 LTS" name="urn:publicid:IDN+instageni.gpolab.bbn.com+image+emulab-ops:UBUNTU12-64-STD" os="Linux" version=""/>

</sliver_type>

<interface client_id="host-ubuntu12:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc5:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+850" mac_address="10604B9C476A">

<ip address="10.10.1.1" type="ipv4"/></interface>

<rs:vnode xmlns:rs="http://www.protogeni.net/resources/rspec/ext/emulab/1" name="pc5"/><host name="host-ubuntu12.IG-EXP-1.pgeni-gpolab-bbn-com.instageni.gpolab.bbn.com"/><services><login authentication="ssh-keys" hostname="pc5.instageni.gpolab.bbn.com" port="22" username="lnevers"/></services></node>

<link client_id="lan0" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+849" vlantag="258">

<component_manager name="urn:publicid:IDN+instageni.gpolab.bbn.com+authority+cm"/>

<interface_ref client_id="host-ubuntu12:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc5:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+850"/>

<interface_ref client_id="host-bsd:if0" component_id="urn:publicid:IDN+instageni.gpolab.bbn.com+interface+pc4:eth1" sliver_id="urn:publicid:IDN+instageni.gpolab.bbn.com+sliver+851"/>

<link_type name="lan"/>

</link>

</rspec>

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed createsliver:

Options as run:

aggregate: ['ig-gpo']

framework: pg

Args: createsliver IG-EXP-1 IG-EXP-1-gpo.rspec

Result Summary: Got Reserved resources RSpec from instageni-gpolab-bbn-com-protogeniv2

INFO:omni: ============================================================

Note: A version of the RSpec, IG-EXP-1-utah.rspec, is available for the IG Utah rack.

Once the sliver was ready, determined the login information for the sliver IG-EXP-1 nodes:

$ readyToLogin.py -a ig-gpo IG-EXP-1

<...>

host-ubuntu12's geni_status is: changing (am_status:ready)

User lnevers logins to host-ubuntu12 using:

xterm -e ssh -i /home/lnevers/.ssh/id_rsa lnevers@pc5.instageni.gpolab.bbn.com &

host-bsd's geni_status is: ready (am_status:ready)

User lnevers logins to host-bsd using:

xterm -e ssh -i /home/lnevers/.ssh/id_rsa lnevers@pc4.instageni.gpolab.bbn.com &
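The same login details are carried in the login elements of the manifest returned by createsliver. The following Python sketch, which is not how readyToLogin.py itself works, reads them from a trimmed copy of that manifest. The function name login_commands is hypothetical, and the SSH key path is left out.

```python
import xml.etree.ElementTree as ET

NS = {"r": "http://www.geni.net/resources/rspec/3"}

# Trimmed copy of the manifest returned by createsliver on this page.
SAMPLE_MANIFEST = """\
<rspec xmlns="http://www.geni.net/resources/rspec/3" type="manifest">
  <node client_id="host-bsd" exclusive="true">
    <services>
      <login authentication="ssh-keys" hostname="pc4.instageni.gpolab.bbn.com"
             port="22" username="lnevers"/>
    </services>
  </node>
  <node client_id="host-ubuntu12" exclusive="true">
    <services>
      <login authentication="ssh-keys" hostname="pc5.instageni.gpolab.bbn.com"
             port="22" username="lnevers"/>
    </services>
  </node>
</rspec>
"""


def login_commands(manifest_xml):
    """Return (client_id, ssh command) pairs for every login element."""
    root = ET.fromstring(manifest_xml)
    commands = []
    for node in root.findall("r:node", NS):
        for login in node.findall("r:services/r:login", NS):
            command = "ssh -p {} {}@{}".format(
                login.get("port"), login.get("username"), login.get("hostname")
            )
            commands.append((node.get("client_id"), command))
    return commands


for client_id, command in login_commands(SAMPLE_MANIFEST):
    print(client_id, "->", command)
```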

Verified dataplane connectivity for each host in each experiment. Checked the FreeBSD host:

$ ssh -i /home/lnevers/.ssh/id_rsa lnevers@pc4.instageni.gpolab.bbn.com

[lnevers@host-bsd ~]$ /sbin/ifconfig bce1

bce1: flags=8843<UP,BROADCAST,RUNNING,SIMPLEX,MULTICAST> metric 0 mtu 1500

options=c01bb<RXCSUM,TXCSUM,VLAN_MTU,VLAN_HWTAGGING,JUMBO_MTU,VLAN_HWCSUM,TSO4,VLAN_HWTSO,LINKSTATE>

ether 10:60:4b:96:00:d6

inet 10.10.1.2 netmask 0xffffff00 broadcast 10.10.1.255

media: Ethernet 1000baseT <full-duplex>

status: active

[lnevers@host-bsd ~]$ ping 10.10.1.1

PING 10.10.1.1 (10.10.1.1): 56 data bytes

64 bytes from 10.10.1.1: icmp_seq=0 ttl=64 time=0.233 ms

64 bytes from 10.10.1.1: icmp_seq=1 ttl=64 time=0.110 ms

Checked the Ubuntu host:

lnevers@localhost:~$ /sbin/ifconfig eth1

eth1 Link encap:Ethernet HWaddr 10:60:4b:9c:47:6a

inet addr:10.10.1.1 Bcast:10.10.1.255 Mask:255.255.255.0

inet6 addr: fe80::1260:4bff:fe9c:476a/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:6 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:492 (492.0 B)

Interrupt:37 Memory:f2000000-f2012800

lnevers@localhost:~$ ping 10.10.1.2

PING 10.10.1.2 (10.10.1.2) 56(84) bytes of data.

64 bytes from 10.10.1.2: icmp_req=1 ttl=64 time=0.103 ms

64 bytes from 10.10.1.2: icmp_req=2 ttl=64 time=0.091 ms
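The two ifconfig outputs report the netmask in different notations (0xffffff00 on FreeBSD, 255.255.255.0 on Ubuntu). As a small worked check, this Python sketch uses the standard ipaddress module to confirm that both dataplane addresses fall in the same subnet, which is why the pings succeed without routing. The variable names are illustrative.

```python
import ipaddress

# FreeBSD prints the netmask as a hex integer; convert it to dotted form.
bsd_mask = str(ipaddress.ip_address(0xFFFFFF00))

bsd = ipaddress.ip_interface("10.10.1.2/" + bsd_mask)
ubuntu = ipaddress.ip_interface("10.10.1.1/255.255.255.0")

same_lan = bsd.network == ubuntu.network
print(bsd_mask, bsd.network, bsd.network.broadcast_address, same_lan)
```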

Step 6. Release physical node resource

Released the sliver compute resources:

$ omni.py deletesliver -a ig-gpo IG-EXP-1

INFO:omni:Loading config file /home/lnevers/.gcf/omni_config

INFO:omni:Using control framework pg

INFO:omni:Substituting AM nickname ig-gpo with URL http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Slice urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-EXP-1 expires on 2013-01-25 21:04:16 UTC

INFO:omni:Substituting AM nickname ig-gpo with URL http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0, URN unspecified_AM_URN

INFO:omni:Deleted sliver urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-EXP-1 on unspecified_AM_URN at http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0

INFO:omni: ------------------------------------------------------------

INFO:omni: Completed deletesliver:

Options as run:

aggregate: ['ig-gpo']

framework: pg

Args: deletesliver IG-EXP-1

Result Summary: Deleted sliver urn:publicid:IDN+pgeni.gpolab.bbn.com+slice+IG-EXP-1 on unspecified_AM_URN at http://instageni.gpolab.bbn.com/protogeni/xmlrpc/am/2.0

INFO:omni: ============================================================

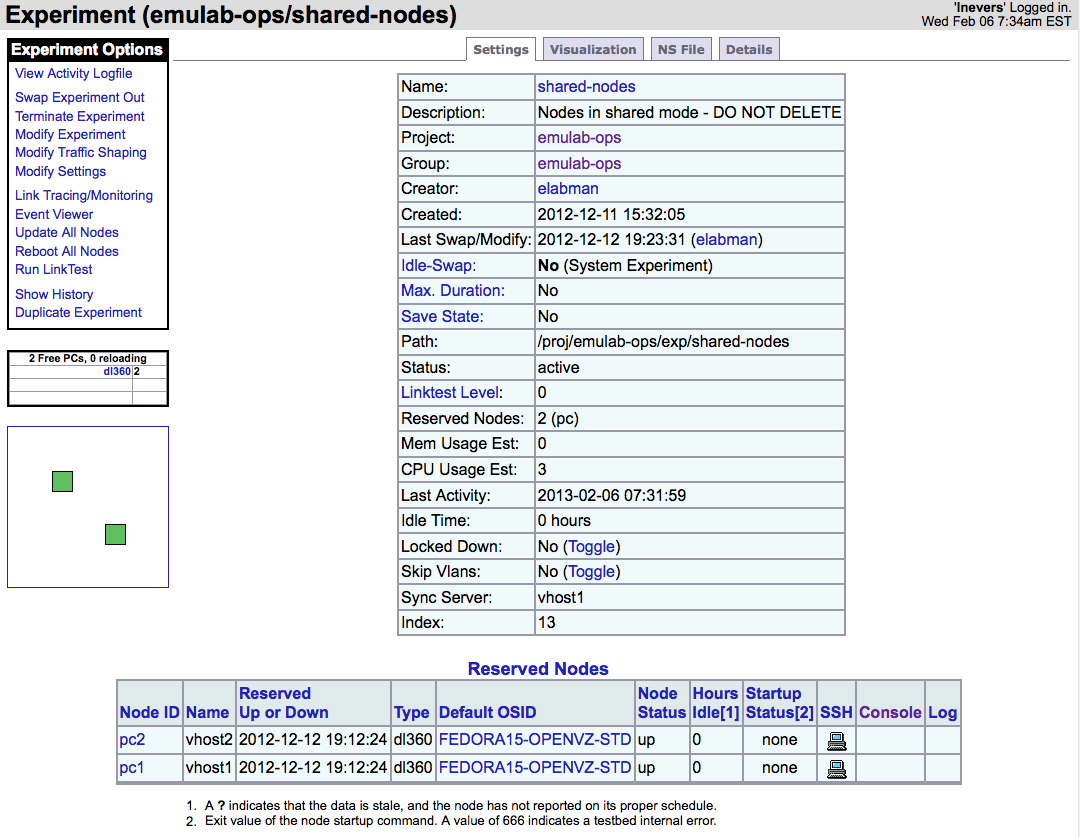

Step 7. Modify Aggregate resource allocation for the rack to add node

This step validates the ability to adjust the shared node allocation by following the instructions available in the Setup Shared Nodes page. The procedure is as follows:

- Check resource status in the InstaGENI GPO rack to find a dedicated node that is available for re-allocation.

- Follow the instructions on the Setup Shared Nodes page to add the node to the shared pool.

- Verify that the node becomes part of the shared pool by checking the "exclusive" attribute on the node's component_id entry in listresources.

- Verify that no VMs are in use on the new shared node by checking for the tag "<emulab:node_type type_slots="100"/>".

- Modify the shared-nodes experiment to remove the node (if not in use).

- Verify that the node is no longer part of the shared pool by checking the "exclusive" attribute on the node's component_id entry in listresources.
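The two verification checks in the list above can be sketched in Python against a saved advertisement. This is a hedged illustration: the sample XML is hypothetical, the placement of emulab:node_type under a hardware_type element is an assumption, and reading type_slots="100" as "no VMs in use" comes from this page rather than from the code. The function name shared_pool_status is made up for the example.

```python
import xml.etree.ElementTree as ET

# Hypothetical advertisement fragment for pc4 after it joins the shared pool.
# The hardware_type wrapper is an assumption about the layout.
SAMPLE_AD = """\
<rspec xmlns="http://www.geni.net/resources/rspec/3"
       xmlns:emulab="http://www.protogeni.net/resources/rspec/ext/emulab/1"
       type="advertisement">
  <node component_name="pc4" exclusive="false">
    <hardware_type name="pcvm">
      <emulab:node_type type_slots="100"/>
    </hardware_type>
    <available now="true"/>
  </node>
</rspec>
"""


def local(tag):
    """Drop the '{namespace}' prefix ElementTree puts on tag names."""
    return tag.rsplit("}", 1)[-1]


def shared_pool_status(rspec_xml, component_name):
    """Report the exclusive flag and type_slots values for one node."""
    root = ET.fromstring(rspec_xml)
    for node in root.iter():
        if local(node.tag) != "node":
            continue
        if node.get("component_name") != component_name:
            continue
        slots = [
            int(elem.get("type_slots"))
            for elem in node.iter()
            if local(elem.tag) == "node_type" and elem.get("type_slots")
        ]
        return {"shared": node.get("exclusive") == "false", "type_slots": slots}
    return None  # node not present in this advertisement


print(shared_pool_status(SAMPLE_AD, "pc4"))
```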

Here are details for each step that is executed:

- Checked resource status in GPO rack:

Referenced the PCs status page to determine an available node to re-allocate. The node status at that time is captured in IG-EXP-1-pre-alloc.jpg. The status shows 3 nodes in use: pc1, pc2, and pc3. The two nodes used by the shared-nodes experiment (exclusive=false) are pc1 and pc2. pc4 will be added to the shared node pool.

- Use instructions in Setup Shared Nodes page

Displayed the initial NS file for the shared-nodes experiment:

source tb_compat.tcl

set ns [new Simulator]

set vhost1 [$ns node]

tb-set-node-os $vhost1 FEDORA15-OPENVZ-STD

tb-set-node-sharingmode $vhost1 "shared_local"

set vhost2 [$ns node]

tb-set-node-os $vhost2 FEDORA15-OPENVZ-STD

tb-set-node-sharingmode $vhost2 "shared_local"

tb-fix-node $vhost1 pc1

tb-fix-node $vhost2 pc2

$ns rtproto Static

$ns run

While in "red-dot mode", selected the shared-nodes experiment:

On that page, selected the "Modify Experiment" link and used the "Edit:" feature to modify the original NS file to add pc4:

source tb_compat.tcl

set ns [new Simulator]

set vhost1 [$ns node]

tb-set-node-os $vhost1 FEDORA15-OPENVZ-STD

tb-set-node-sharingmode $vhost1 "shared_local"

set vhost2 [$ns node]

tb-set-node-os $vhost2 FEDORA15-OPENVZ-STD

tb-set-node-sharingmode $vhost2 "shared_local"

set vhost3 [$ns node]

tb-set-node-os $vhost3 FEDORA15-OPENVZ-STD

tb-set-node-sharingmode $vhost3 "shared_local"

tb-fix-node $vhost1 pc1

tb-fix-node $vhost2 pc2

tb-fix-node $vhost3 pc4

$ns rtproto Static

$ns run
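The edit above follows a regular pattern: one vhost block per shared node, then one tb-fix-node line per node. As a sketch of that pattern only, the hypothetical Python helper below generates the NS text for a list of PCs. It is not part of Emulab or InstaGENI tooling; the statement order simply follows the two NS files shown on this page.

```python
def shared_nodes_ns(pcs, image="FEDORA15-OPENVZ-STD"):
    """Build NS file text for a shared-nodes experiment pinned to the given PCs."""
    lines = ["source tb_compat.tcl", "set ns [new Simulator]"]
    # One vhost definition per physical node.
    for index in range(1, len(pcs) + 1):
        lines += [
            f"set vhost{index} [$ns node]",
            f"tb-set-node-os $vhost{index} {image}",
            f'tb-set-node-sharingmode $vhost{index} "shared_local"',
        ]
    # Pin each vhost to its physical node.
    for index, pc in enumerate(pcs, start=1):
        lines.append(f"tb-fix-node $vhost{index} {pc}")
    lines += ["$ns rtproto Static", "$ns run"]
    return "\n".join(lines)


print(shared_nodes_ns(["pc1", "pc2", "pc4"]))
```

Calling the helper with ["pc1", "pc2"] yields the statements of the initial file, and adding "pc4" yields those of the modified file.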
