
OpenFlow NAT Router

TinyUrl: http://tinyurl.com/geni-nfv-nat

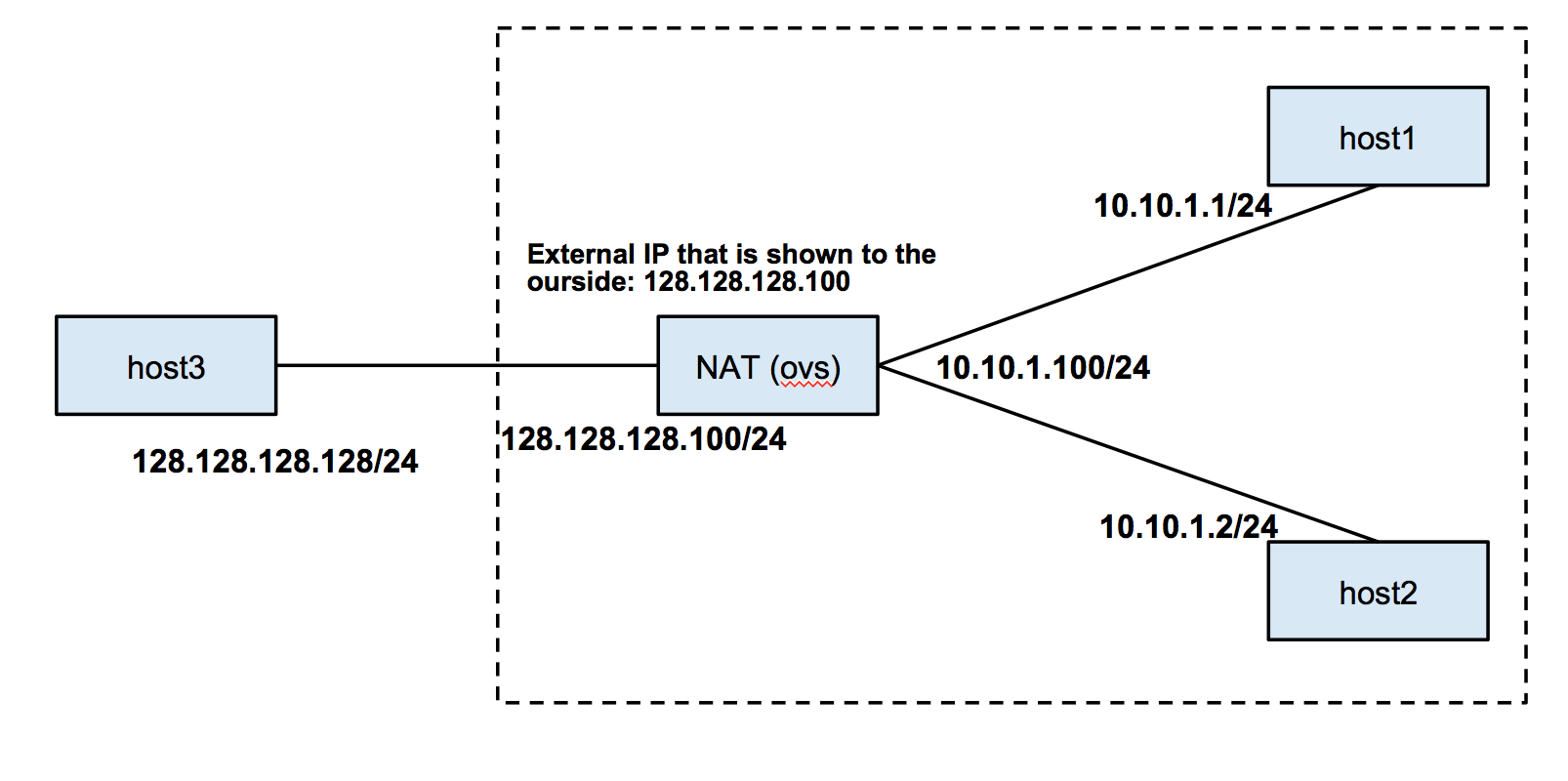

Overview: In this tutorial you will learn how to build a router for a network with a private address space that needs a one-to-many NAT (IP Masquerade) using OpenFlow. We will use the following network topology for this experiment. You will also learn how to take advantage of kernel L3 routing while using OVS.


Prerequisites: For this tutorial you need:


Tools: All the tools will already be installed on your nodes. For your reference, we are going to use a Ryu controller.


Where to get help: For any questions or problems with the tutorial, please email geni-users@googlegroups.com


If you have already reserved the topology from a previous tutorial you can move to Execute.

1. Verify your Environment Setup:

This exercise assumes you have already set up your account at the GENI Portal. In particular, ensure that:

- You can log in to the GENI Portal

- You are a member of a GENI Project (there is at least one project listed under the "Projects" tab)

- You have set up your SSH keys (there is at least one key listed under the "Profile->SSH Keys" tab)

2. Setup the Topology:

- Log in to the GENI Portal

- Reserve:

  - the topology from an InstaGENI rack using the OpenFlow OVS all XEN RSpec (In Portal: "OpenFlow OVS all XEN"; URL: https://raw.githubusercontent.com/GENI-NSF/geni-tutorials/master/OpenFlowNAT/openflowovs-all-xen.rspec.xml)

  - a XEN OpenFlow Controller RSpec at a different InstaGENI rack (In Portal: "XEN OpenFlow Controller"; URL: https://raw.githubusercontent.com/GENI-NSF/geni-tutorials/master/OpenFlowNAT/xen-openflow-controller-rspec.xml)


3. Test reachability before starting controller

3.1 Login to your hosts

To start our experiment we need to SSH into all the hosts (controller, ovs, host1, host2, host3). Depending on which tool and OS you are using, the login process differs slightly. If you don't know how to SSH to your reserved hosts, take a look at this page. Once you have logged in, follow the rest of the instructions.

3.1a Configure OVS

- Write down the interface names that correspond to the connections to your hosts (use ifconfig). The correspondence is:

  - h1_if: Interface with IP 10.10.1.11 to host1 - ethX

  - h2_if: Interface with IP 10.10.1.12 to host2 - ethY

  - h3_if: Interface with IP 10.10.1.13 to host3 - ethZ

- On the OVS node, run:

wget https://raw.githubusercontent.com/GENI-NSF/geni-tutorials/master/OpenFlowNAT/ovs-nat-conf.sh

chmod +x ovs-nat-conf.sh

sudo ./ovs-nat-conf.sh <h1_if> <h2_if> <h3_if> <controller_ip>

The controller_ip is the public IP of your controller node; see the Tips at the end for how to find the IP of a node.

- Look around to see what the script did:

sudo ovs-vsctl show

ifconfig
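Matching interface names to the 10.10.1.11, 10.10.1.12 and 10.10.1.13 addresses by eye is easy to get wrong. As a minimal sketch, the hypothetical helper below parses text shaped like the output of `ip -o -4 addr show` (an assumption; the tutorial itself uses ifconfig) and returns an address-to-interface map. The sample text and interface names are illustrative, not captured from a real node.

```python
def map_ipv4_to_interface(ip_output):
    """Map each IPv4 address to its interface name.

    Expects one address per line, shaped like `ip -o -4 addr show` output:
    "3: eth1    inet 10.10.1.11/24 brd 10.10.1.255 scope global eth1"
    """
    mapping = {}
    for line in ip_output.splitlines():
        fields = line.split()
        # Skip anything that is not "<idx>: <ifname> inet <addr>/<len> ..."
        if len(fields) < 4 or fields[2] != "inet":
            continue
        ifname = fields[1]
        address = fields[3].split("/")[0]
        mapping[address] = ifname
    return mapping


# Illustrative sample; the interface names on your OVS node will differ.
SAMPLE = """\
1: lo    inet 127.0.0.1/8 scope host lo
3: eth1    inet 10.10.1.11/24 brd 10.10.1.255 scope global eth1
4: eth2    inet 10.10.1.12/24 brd 10.10.1.255 scope global eth2
5: eth3    inet 10.10.1.13/24 brd 10.10.1.255 scope global eth3
"""

if __name__ == "__main__":
    by_ip = map_ipv4_to_interface(SAMPLE)
    # Arguments in the order ovs-nat-conf.sh expects: h1_if h2_if h3_if
    print(by_ip["10.10.1.11"], by_ip["10.10.1.12"], by_ip["10.10.1.13"])
```

Run this kind of check before the configuration script, since the script may change how the interfaces are set up.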

3.1b Configure hosts

The hosts in your topology are all in the same subnet, 10.10.1.0/24. We will move host3 to a different subnet:

- host3: Assign 128.128.128.128 to host3.

sudo ifconfig eth1 128.128.128.128/24

- host1, host2: Set up routes on host1 and host2 to the 128.128.128.0/24 subnet:

sudo route add -net 128.128.128.0 netmask 255.255.255.0 gw 10.10.1.100
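To see why host1 and host2 need this route, it can help to model the lookup a host performs before sending a packet. The sketch below is a simplified longest-prefix match using Python's `ipaddress` module; the `next_hop` helper and the two-entry table are illustrative assumptions (a real host also has other routes, such as one for its control interface).

```python
import ipaddress


def next_hop(destination, routes):
    """Return the gateway for destination using longest-prefix match.

    routes is a list of (network, gateway) pairs. A gateway of None means
    the network is directly connected. Raises LookupError if nothing matches.
    """
    dest = ipaddress.ip_address(destination)
    matches = [(net, gw) for net, gw in routes if dest in net]
    if not matches:
        raise LookupError("no route to " + destination)
    # The most specific (longest) prefix wins.
    _net, gateway = max(matches, key=lambda pair: pair[0].prefixlen)
    return gateway


# Simplified view of host1's table after the route add command above.
HOST1_ROUTES = [
    (ipaddress.ip_network("10.10.1.0/24"), None),
    (ipaddress.ip_network("128.128.128.0/24"),
     ipaddress.ip_address("10.10.1.100")),
]

if __name__ == "__main__":
    print(next_hop("10.10.1.2", HOST1_ROUTES))        # on-link, no gateway
    print(next_hop("128.128.128.128", HOST1_ROUTES))  # via 10.10.1.100
```

Without the second entry, the lookup for 128.128.128.128 finds no match in this simplified table, which mirrors what happens on a host that has no route to that subnet.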

3.2 Test reachability

- First we start a ping from host1 to host2, which should work since they are both inside the same LAN.

host1:~$ ping 10.10.1.2 -c 10

- Then we start a ping from host3 to host1, which should time out because host3 has no routing information for that subnet in its routing table. You can use route -n to verify that.

host3:~$ ping 10.10.1.1 -c 10

- Similarly, we cannot ping from host1 to host3.

- You can also use Netcat (nc) to test reachability of TCP and UDP. The behavior should be the same.
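If you prefer a scripted TCP check over an interactive nc session, the following is a minimal sketch using only the Python standard library. The `tcp_reachable` helper is a hypothetical name, and the address and port in the usage section are simply the ones this tutorial uses later for the nc server on host3.

```python
import socket


def tcp_reachable(host, port, timeout=3.0):
    """Return True if a TCP connection to (host, port) succeeds in time."""
    try:
        # create_connection resolves the host and completes the handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers timeouts, refused connections, and unreachable networks.
        return False


if __name__ == "__main__":
    # Assumes something is listening on host3, e.g. "nc -l 6666".
    print(tcp_reachable("128.128.128.128", 6666))
```

Before the controller is started this check should report False from host1, matching the ping results above.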

4. Start controller to enable NAT

4.1 Access a server from behind the NAT

You can try to write your own controller to implement NAT. However, we provide a functional controller for you.

- Download the NAT Ryu module. On your controller node, run:

cd /tmp/ryu/

wget https://github.com/GENI-NSF/geni-tutorials/raw/master/OpenFlowNAT/ryu-nat.tar.gz

tar xvfz ryu-nat.tar.gz

- Start the controller on the controller host:

cd /tmp/ryu/; ./bin/ryu-manager ryu-nat.py

You should see output similar to the following log after the switch connects to the controller:

loading app ryu-nat.py

loading app ryu.controller.dpset

loading app ryu.controller.ofp_handler

instantiating app ryu.controller.dpset of DPSet

instantiating app ryu.controller.ofp_handler of OFPHandler

instantiating app ryu-nat.py of NAT

switch connected <ryu.controller.controller.Datapath object at 0x2185210>

- On host3, we start an nc server:

host3:~$ nc -l 6666

and we start an nc client on host1 to connect to it:

host1:~$ nc 128.128.128.128 6666

- Now send messages in both directions, then try the same thing between host3 and host2.

- On the controller terminal where you started the controller, you should see a log similar to:

Created mapping 10.10.1.1 31596 to 128.128.128.100 59997

Note that there should be only one log per connection, because the rest of the communication will re-use the mapping.
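The one-log-per-connection behavior follows from how a one-to-many NAT keeps state. The sketch below is a toy mapping table, not the code in ryu-nat.py: the `PortNat` class, its method names, and the sequential port allocation starting at 50000 are all assumptions made for illustration. It omits mapping expiry and port exhaustion.

```python
import itertools


class PortNat:
    """Toy one-to-many NAT table keyed by (private IP, private port)."""

    def __init__(self, public_ip, first_port=50000):
        self.public_ip = public_ip
        self._ports = itertools.count(first_port)
        self.outbound = {}  # (private_ip, private_port) -> public_port
        self.inbound = {}   # public_port -> (private_ip, private_port)

    def translate_out(self, private_ip, private_port):
        """Return the public (ip, port) for an outgoing packet."""
        key = (private_ip, private_port)
        if key not in self.outbound:
            # First packet of a connection: allocate and log once.
            public_port = next(self._ports)
            self.outbound[key] = public_port
            self.inbound[public_port] = key
            print("Created mapping", private_ip, private_port,
                  "to", self.public_ip, public_port)
        return self.public_ip, self.outbound[key]

    def translate_in(self, public_port):
        """Return the private (ip, port) for a reply, or None if unknown."""
        return self.inbound.get(public_port)


if __name__ == "__main__":
    nat = PortNat("128.128.128.100")
    nat.translate_out("10.10.1.1", 31596)  # logs a new mapping
    nat.translate_out("10.10.1.1", 31596)  # reuses it, no new log
```

In an OpenFlow controller the reuse typically happens in the switch itself: once flow rules for a mapping are installed, later packets of that connection no longer reach the controller.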

5. Handle ARP and ICMP

One of the most common mistakes people make when writing an OpenFlow controller is forgetting to handle ARP and ICMP messages, and then finding that the controller does not work as expected.

5.1 ARP

As we mentioned before, we should insert rules into the OpenFlow switch that allow ARP packets to go through, typically as soon as the switch connects to the controller.
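Such a rule only needs to recognize ARP frames, which are identified by EtherType 0x0806. In a switch this test runs in the flow table; the sketch below performs the same classification in plain Python on a raw, untagged Ethernet frame so you can see what is being matched. The helper names are hypothetical and the frames in the usage section are hand-built.

```python
import struct

ETH_TYPE_IPV4 = 0x0800
ETH_TYPE_ARP = 0x0806


def ethertype(frame):
    """Return the EtherType of an untagged Ethernet II frame."""
    if len(frame) < 14:
        raise ValueError("frame shorter than an Ethernet header")
    # Bytes 12-13 hold the EtherType in network byte order.
    (value,) = struct.unpack_from("!H", frame, 12)
    return value


def needs_nat(frame):
    """Only IPv4 traffic is a NAT candidate; ARP passes through untouched."""
    return ethertype(frame) == ETH_TYPE_IPV4


if __name__ == "__main__":
    dst, src = b"\xff" * 6, b"\x02" + b"\x00" * 5
    arp_frame = dst + src + struct.pack("!H", ETH_TYPE_ARP) + b"\x00" * 28
    print(hex(ethertype(arp_frame)), needs_nat(arp_frame))
```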

5.2 ICMP

Handling ARP is trivial because NAT does not involve ARP. ICMP is a different matter. If you only translate TCP and UDP, you will find that you cannot ping between host3 and host1 even though nc works properly. Handling ICMP is also less straightforward than TCP/UDP, because ICMP has no port numbers to bind a mapping to. Our provided solution uses the ICMP echo identifier instead. You may come up with a different approach that involves the ICMP sequence number or other fields.
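As a rough illustration of the echo-identifier idea, the sketch below reads the identifier from an ICMP echo header and keeps a table that gives each (private IP, identifier) pair its own public identifier. This is not the code in ryu-nat.py: `parse_echo`, `EchoIdNat`, and the sequential allocation are assumptions, and the sketch ignores wrap-around of the 16-bit identifier.

```python
import struct

ICMP_ECHO_REPLY = 0
ICMP_ECHO_REQUEST = 8


def parse_echo(icmp_bytes):
    """Return (type, identifier, sequence) for an ICMP echo request/reply."""
    if len(icmp_bytes) < 8:
        raise ValueError("ICMP echo header needs at least 8 bytes")
    # Layout: type (1), code (1), checksum (2), identifier (2), sequence (2).
    icmp_type, _code, _checksum, ident, seq = struct.unpack_from(
        "!BBHHH", icmp_bytes, 0)
    if icmp_type not in (ICMP_ECHO_REQUEST, ICMP_ECHO_REPLY):
        raise ValueError("not an ICMP echo message")
    return icmp_type, ident, seq


class EchoIdNat:
    """Toy table mapping (private IP, echo id) pairs to public echo ids."""

    def __init__(self, first_id=1):
        self._next_id = first_id
        self.outbound = {}  # (private_ip, private_id) -> public_id
        self.inbound = {}   # public_id -> (private_ip, private_id)

    def translate_out(self, private_ip, private_id):
        """Return the public identifier to write into an outgoing request."""
        key = (private_ip, private_id)
        if key not in self.outbound:
            public_id = self._next_id
            self._next_id += 1  # no 16-bit wrap-around handling here
            self.outbound[key] = public_id
            self.inbound[public_id] = key
        return self.outbound[key]

    def translate_in(self, public_id):
        """Return (private_ip, private_id) for a reply, or None if unknown."""
        return self.inbound.get(public_id)
```

Because the identifier plays the role that the port number plays for TCP and UDP, two private hosts that happen to pick the same identifier still get distinct public identifiers. A real translator must also recompute the ICMP checksum after rewriting the identifier, since the checksum covers the whole ICMP message.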

- On host1, start a ping to host3.

host1:~$ ping 128.128.128.128

- Do the same thing on host2.

host2:~$ ping 128.128.128.128

You should see that both pings work.

- On host3, use tcpdump to check the packets it receives.

host3:~$ sudo tcpdump -i eth1 -n icmp

You should see that it is receiving two groups of ICMP packets, differentiated by id.

Make sure to delete the bridges, especially if you are using the same topology for another tutorial.

sudo ovs-vsctl del-br OVS1

sudo ovs-vsctl del-br OVS2


6. Cleanup

After you are done with the exercise and you have captured everything requested for the writeup, you should release your resources so that other experimenters can use them. To clean up your slice:

- In the Portal, press the Delete button at the bottom of your canvas

- Select Delete at used managers and confirm your selection.

Tips

- Remember that you can use “ifconfig” to determine which Ethernet interface (e.g., eth0) is bound to what IP address at each of the nodes.

- In order to enable IP forwarding of packets on a node you have to execute the following command:

sudo sh -c 'echo 1 > /proc/sys/net/ipv4/ip_forward'