
Project Number

1602

Project Title

Sensor Virtualization and Slivering in an Outdoor Wide-Area Wireless GENI Sensor/Actuator Network Testbed

a.k.a. ViSE, VISE (short name for tickets), Sensor/Actuator Network (obsolete)

Technical Contacts

Principal Investigator:

Prashant Shenoy

University of Massachusetts, Amherst

shenoy@cs.umass.edu

http://www.cs.umass.edu/~shenoy/

Co-Principal Investigator:

Michael Zink

University of Massachusetts, Amherst

zink@cs.umass.edu

http://www-net.cs.umass.edu/~zink/umasshome/pmwiki.php

Co-Principal Investigator:

Deepak Ganesan

University of Massachusetts, Amherst

dganesan@cs.umass.edu

http://www.cs.umass.edu/~dganesan/

Co-Principal Investigator:

Jim Kurose

University of Massachusetts, Amherst

kurose@cs.umass.edu

http://www-net.cs.umass.edu/personnel/kurose.html

Research Staff:

David Irwin

University of Massachusetts, Amherst

http://www.cs.umass.edu/~irwin/

ViSE team is pictured from left to right: Navin Sharma (graduate student), Prashant Shenoy (PI), David Irwin (Research Scientist), and Michael Zink (Co-PI). Deepak Ganesan and Jim Kurose (both Co-PIs) are not pictured.

Participating Organizations

ViSE web site

Center for Collaborative Adaptive Sensing of the Atmosphere (CASA)

UMass Amherst, Amherst, MA

Related Projects

DOME Project

Diverse Outdoor Mobile Environment (DOME) web site

GPO Liaison System Engineer

Harry Mussman hmussman@geni.net

Scope

The scope of work on this project is to extend your outdoor, wide-area sensor/actuator network testbed to support slivering and utilize a GENI candidate control framework (ORCA/Shirako), and then bring it into an environment of GENI federated testbeds. This includes: 1) Virtualization of your sensor/actuator system. 2) Integration with GENI-compliant Software Artifacts, including the use of Shirako software (part of the ORCA project) as the base for the control framework. 3) Making your testbed publicly available to GENI users, starting in year 1, and integrating it into an environment of GENI federated testbeds by the end of year 2. 4) Providing documentation for testbed users, administrators, and developers.

Operational Capabilities

During Spiral 1, we completed deployment of these sensor nodes:

ViSE Node Deployment on UMass CS Building (with Mt. Toby in background)

ViSE Node Deployment at UMass MA2 Tower

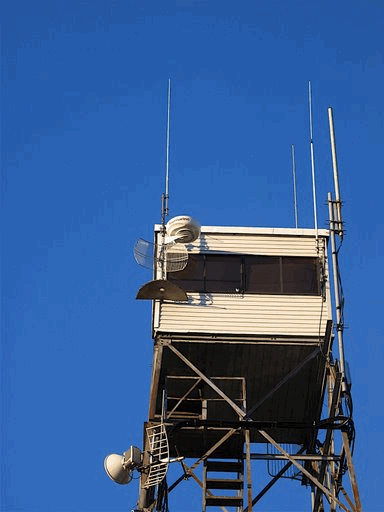

ViSE Node Deployment on Mt. Toby Fire Tower

Completed Xen sensor virtualization

We completed an initial research-grade implementation of sensor virtualization in Xen and released a technical report that applies the approach to Pan-Tilt-Zoom video cameras.

As detailed in our previous quarterly status report, we have faced challenges in applying the same techniques to the Raymarine radars in the ViSE testbed because their drivers do not operate by default inside of Xen's Domain-0 management domain. The problem affects other high-bandwidth I/O devices that use Xen, and is being actively worked on in the Xen community. As these problems are worked out, we have transitioned to using vservers as ViSE's preferred virtualization technology, and developed vserver handlers for Orca. We are also porting MultiSense to work with vservers as well as with Xen; its modular design makes this port straightforward. Our demonstration at GEC5 in Seattle showed non-slivered VM access to radar control and data using vservers; once we complete our port of MultiSense we will be able to support slivered access.

A more detailed description of Xen, vservers, and sensors in ViSE is available in the quarter 2 quarterly report for ViSE. Since releasing our technical report, we have improved the work and re-submitted an extended technical report to the NSDI conference. This improved work includes experiments with steerable radars.

Completed integration with ORCA in Cluster D

ViSE is running the latest reference implementation of the Shirako/Orca codebase. Note that Milestone 1c (completed February 1st, 2009) required ViSE to perform an initial integration of Shirako/Orca prior to an official reference implementation being released. See that milestone for specific details related to the integration. Incorporating the latest reference implementation required only minor code porting. Additionally, as a result of the Orca-fest conference call on May 28th, the GENI Project Office and Cluster D set mini-milestones that were not in the original Statement of Work. These milestones are related to Milestone 1e, since they involve the particular instantiation of Orca that Cluster D will use. In particular, by June 15th, 2009, we upgraded our ORCA actors to support secure SOAP messages.

As part of this mini-milestone, Brian Lynn of the DOME project and the ViSE project also set up a control plane server that will host the aggregate manager and portal servers for both the DOME and ViSE projects. This server has the DNS name geni.cs.umass.edu. The server includes 4 network interface cards: one connects to a gateway ViSE node on the CS department roof, one will connect to an Internet2 backbone site (via a VLAN), one connects to the public Internet, and one connects to an internal development ViSE node. During the Orca-fest and subsequent Cluster D meetings, we set the milestone for shifting to fall between August 15th, 2009 and September 1st, 2009.

While not in our official list of milestones, Harry Mussman asked our Cluster to switch to using a remote Clearinghouse by October 1st. We have made this switch. We first made use of a jail that we controlled at RENCI/Duke to set up and test this Clearinghouse in mid-August, and on September 28th, 2009 sent email to RENCI asking them to switch us over to their Clearinghouse.

Made testbed available for public use within our cluster.

Our testbed is available for limited use within our cluster. We are soliciting a select group of users to allow us to work out the bugs/kinks in the testbed and figure out what needs to be improved. The portal for our testbed is available at http://geni.cs.umass.edu/vise.

Note that while we develop our sensor virtualization technology, we are initially allowing users to safely access dedicated hardware: the sensors and the wireless NIC.

Right now, we are targeting two types of users for our testbed. The first type is users who wish to experiment with long-distance 802.11b wireless communication. Long-distance links are difficult to set up because they require access to towers and other infrastructure to provide line-of-sight. Our two 10 km links are thus useful to outside researchers working on these problems. There are a number of students at UMass-Amherst using the testbed to solve problems in this area.
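To give a rough sense of why such links need towers and clear line-of-sight, the sketch below estimates the free-space path loss and the mid-path first Fresnel zone radius for a 10 km link. The 2437 MHz carrier (802.11b channel 6) is an assumption for illustration only; the channel actually used by the ViSE links is not stated here, and the calculation ignores antenna gains, terrain, and weather.

```python
import math


def free_space_path_loss_db(distance_km: float, freq_mhz: float) -> float:
    """Free-space path loss in dB (distance in km, frequency in MHz)."""
    return 20 * math.log10(distance_km) + 20 * math.log10(freq_mhz) + 32.44


def first_fresnel_radius_m(distance_km: float, freq_ghz: float) -> float:
    """Radius of the first Fresnel zone at the midpoint of the path, in metres."""
    return 17.32 * math.sqrt(distance_km / (4 * freq_ghz))


LINK_KM = 10.0     # link length taken from the text
FREQ_MHZ = 2437.0  # assumption: 802.11b channel 6 centre frequency

loss_db = free_space_path_loss_db(LINK_KM, FREQ_MHZ)
fresnel_m = first_fresnel_radius_m(LINK_KM, FREQ_MHZ / 1000)

print(f"path loss: {loss_db:.1f} dB")
print(f"first Fresnel zone radius at mid-path: {fresnel_m:.1f} m")
```

Under these assumptions the loss is roughly 120 dB and the Fresnel zone is about 17.5 m wide at mid-path, which is consistent with the need for elevated mounting points such as towers.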

The second type of user is radar researchers who can leverage our radar deployment. We are working with students from Puerto Rico and other researchers in CASA to interpret and improve the quality of our radar data and to test it with detection algorithms. We are soliciting feedback from these users about what they need to do on these nodes, and how the testbed can satisfy their needs. Note that our testbed interacts with a remote Clearinghouse run by RENCI/Duke to facilitate resource allocation.

Milestones

ViSE: S1.a Assembly of three x86 sensor nodes

ViSE: S1.b Field deployment of three sensor nodes

ViSE: S1.c Initial Shirako/ORCA integration

ViSE: S1.d Operational web portal and testbed; Application demo

ViSE: S1.c2 Complete initial Shirako/ORCA integration

ViSE: S1.e Contingent upon availability of reference implementation of Shirako/ORCA at 6 months, import and then integrate

ViSE: S1.f Complete Xen sensor virtualization

ViSE: S1.g Contingent upon available budget, provide a VLAN connection from your testbed to the Internet2

ViSE: S1.h Virtualization of actuators; single guest VM; demo

ViSE: S1.i Testbed available for public use within our cluster

ViSE: 1out Outreach activities for year 1

ViSE: S2.a Complete Xen sensor slivering

ViSE: S2.b Complete integration with broker in clearinghouse

ViSE: S2.c Deliver first release of ViSE software

ViSE: S2.d Cluster plan for VLANs between testbeds

ViSE: S2.e Installation of x86 sensor node

ViSE: S2.f Installation of cameras

ViSE: S2.g Integration of virtualization

ViSE: S2.h Import extended ORCA v2.1

ViSE: S2.i Demo control of VLAN connections

ViSE: S2.j Import extended ORCA v2.2

ViSE: S2.k Allocation policy for sensors

ViSE: S2.l Demo experiment with another testbed

ViSE: S2.m Available to GENI users

ViSE: S2.n POC to GENI response team

ViSE: S2.o POC to GENI security team

ViSE: S2.p Contribution to GENI outreach

ViSE: S3.a Demonstration at GEC9 and Experimenter Outreach

ViSE: S3.b Documentation and Code Release

ViSE: S3.c Demonstration at GEC10 and Experimenter Outreach

ViSE: S3.d Documentation and Code Release

ViSE: S3.e Demonstration at GEC11 and Experimenter Outreach

ViSE: S3.f Final report and code release

Status Reports and Demonstrations

4Q08 Status Report

1Q09 Status Report

2Q09 Status Report

3Q09 Status Report

4Q09 Status Report

1Q10 Status Report

2Q10 Status Report

Spiral 2 Review

GEC9 Nowcasting Demo

Post-GEC9 Status Report

Post-GEC10 Status Report

Technical Documents

"Simulation of Minimal Infrastructure Short-Range Radar Networks"

OTGsim: Simulation of an Off-the-Grid Radar Network with High Sensing Energy Cost

"ViSE Substrate Description"

VSense: Virtualizing Stateful Sensors With Actuators

MultiSense: Fine-grained Multiplexing for Steerable Sensor Networks

Software Releases

We have released the setup for our GEC9 nowcasting demonstration as Amazon Machine Images (AMIs) that are stored on S3 and available for use. Each image contains short instructions on how to execute the demonstration.

Additionally, the DiCloud code used as part of the demonstration is available at the DiCloud website.

The Amazon Machine Image IDs for the two images are ami-bad621d3 (for the Amazon machine that processes Nowcasts) and ami-a4d720cd (for the Amazon machine that we use to feed data).
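As a minimal sketch of how the two images relate, the snippet below pairs each AMI ID with its role and builds the keyword arguments one would pass to an EC2 launch call such as boto3's `run_instances`. It makes no network calls. The `DemoImage` and `launch_request` names and the `m1.small` instance type are hypothetical choices for illustration; the region the images live in, and whether they are still registered, are not stated here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DemoImage:
    ami_id: str  # AMI ID as listed in the text
    role: str    # what the machine does in the demonstration


IMAGES = [
    DemoImage("ami-bad621d3", "processes Nowcasts"),
    DemoImage("ami-a4d720cd", "feeds data"),
]


def launch_request(image: DemoImage, instance_type: str = "m1.small") -> dict:
    """Build launch parameters for one instance of the given image.

    The instance type default is an assumption, not taken from the source.
    """
    return {
        "ImageId": image.ami_id,
        "InstanceType": instance_type,
        "MinCount": 1,
        "MaxCount": 1,
    }


requests = [launch_request(image) for image in IMAGES]
for image, request in zip(IMAGES, requests):
    print(f"{image.role}: {request}")
```

With valid AWS credentials and the correct region, each dictionary could be passed as `ec2_client.run_instances(**request)`; the instructions inside each image then describe how to run the demonstration.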

A full report of the demonstration is available in the post-GEC9 report that is on the Wiki.

Connectivity

Location of equipment: For Spiral 1, work will be done in a lab at UMass Amherst and at three field locations in Massachusetts.

Equipment information is found at "ViSE Substrate Description"

Layer 3 Connectivity: IP access will be through UMass Amherst's campus network, using their public IP addresses.

Layer 2 Connectivity: In cooperation with OIT at UMass-Amherst, we have provided a VLAN connection from our control plane server geni.cs.umass.edu to an Internet2 point-of-presence in Boston. An MOU was agreed upon with the UMass Office of Information Technology (OIT) regarding connecting Internet2 to the DOME and ViSE servers, along with VLAN access. The OIT contact is Rick Tuthill, tuthill email at oit.umass.edu. The agreements include:

1) CS shall order OIT-provisioned network jacks in appropriate locations in the Computer Science building using normal OIT processes. (completed)

2) OIT shall configure these jacks into a single VLAN that shall be extended over existing OIT-managed network infrastructure between the Computer Science building and the Northern Crossroads (NoX) Internet2 Gigapop located at 300 Bent St in Cambridge, MA.

3) OIT agrees to provide a single VLAN for “proof-of-concept” testing and initial GENI research activities.

4) The interconnection of the provided VLAN between the NoX termination point and other Internet2 locations remains strictly the province of the CS researchers and the GENI organization.

5) This service shall be provided by OIT at no charge to CS for the term of one year in the interest of OIT learning more about effectively supporting network-related research efforts on campus.

In an email dated September 28th, 2009 Rick Tuthill of UMass-Amherst OIT updated us on the status of this connection, as follows:

6) The two existing ports at the CS building in room 218A and room 226 and all intermediary equipment are now configured to provide layer-2 VLAN transport from these network jacks to the UMass/Northern Crossroads (NoX) handoff at 300 Bent St in Cambridge, MA.

7) The NoX folks are not doing anything with this research VLAN at this time. They need further guidance from GENI on exactly what they’re supposed to do with the VLAN.

8) Also, once IP addressing is clarified for this VLAN, we’ll need to configure some OIT network equipment to allow the selected address range(s) to pass through.

We intend this VLAN connection to service both the ViSE and the DOME testbeds. Note that Layer 2 ethernets will not extend to the DieselNet nodes, due to limitations in the existing deployed systems, but IP tunnels to the layer 2 VLAN termination points should be feasible for connecting mobile endpoints to the GENI virtual ethernets.

In the coming year, we have committed to planning with our peers in Cluster D and the GPO on how to best use this new capability. As part of this plan, and before we can send/receive traffic on this link, we will discuss the roles and capabilities of Internet2 in forwarding our traffic to its correct destination.

Attachments (26)

- tmp_2lid01gx.pdf (797.8 KB) - added 15 years ago. Simulation of Minimal Infrastructure Short-Range Radar Networks

- tmp_x26gqb9o.pdf (3.6 MB) - added 15 years ago. OTGsim: Simulation of an Off-the-Grid Radar Network with High Sensing Energy Cost

- otg_node.jpg (77.4 KB) - added 15 years ago. Photo of OTG Node

- ViSE project QSR.pdf (17.9 KB) - added 15 years ago.

- vise-catalog.pdf (79.1 KB) - added 15 years ago.

- ViSE images 2008.zip (291.3 KB) - added 15 years ago.

- pastedGraphic.jpg (146.0 KB) - added 15 years ago. VISE node on firetower (converted from .tiff original in VISE images 2008.zip)

- vise-geni-teamb.jpg (50.0 KB) - added 15 years ago.

- vise4-smaller-rotate.jpg (78.7 KB) - added 15 years ago.

- vise3-smaller-rotate.jpg (99.9 KB) - added 15 years ago.

- vise2-smaller.jpg (65.6 KB) - added 15 years ago.

- vise1-smaller-rotate.jpg (77.8 KB) - added 15 years ago.

- vise-quarterly-report2.pdf (64.1 KB) - added 15 years ago.

- vise-quarterly3.pdf (55.6 KB) - added 15 years ago.

- quarter4.pdf (61.4 KB) - added 15 years ago.

- vsense.pdf (428.8 KB) - added 15 years ago. VSense: Virtualizing Stateful Sensors With Actuators

- multisense.pdf (640.8 KB) - added 15 years ago. MultiSense: Fine-grained Multiplexing for Steerable Sensor Networks

- vise 4q09 quarter5.pdf (66.1 KB) - added 14 years ago.

- 1Q10 vise-quarterly.pdf (526.9 KB) - added 14 years ago.

- 2Q10 QSR quarter7.pdf (53.1 KB) - added 14 years ago.

- ViSE-project-review.pptx (257.0 KB) - added 14 years ago. Spiral 2 review

- ViSE-project-review.2.2.pptx (256.9 KB) - added 14 years ago. Spiral 2 review

- ViSE-project-review.2.pptx (257.0 KB) - added 14 years ago. Spiral 2 review

- quarter8.pdf (53.2 KB) - added 13 years ago. Project Report due after GEC9

- vise-progress-pdf.pdf (120.8 KB) - added 13 years ago. Post-GEC10 ViSE Project Report

- nowcasting gec9 demos.pptx (2.0 MB) - added 13 years ago.