
PrimoGENI Aggregate 1.0 Design Document

Introduction

About This Document

This document describes the design of the PrimoGENI aggregate and how it will be integrated with the ProtoGENI control framework. The document will be used as a guideline for the implementation of the PrimoGENI aggregate, and as a baseline for the validation of its functionalities.

Some of the material used in this document is drawn from the following documents:

- GENI System Overview Document (http://groups.geni.net/geni/wiki/GeniSysOvrvw).

- Lifecycle of a GENI Experiment (http://groups.geni.net/geni/wiki/ExperimentLifecycleDocument).

- GENI Control Framework Architecture (http://groups.geni.net/geni/wiki/GeniControlFrameworkArchitecture).

- ProtoGENI GENI Control Framework Overview (http://groups.geni.net/geni/wiki/ProtoGeniControlFrameworkOverview).

This document shall be reviewed by the ProtoGENI cluster, the GENI security team, the GPO, and any other interested parties. Feedback will be collected to improve the PrimoGENI design and implementation.

PrimoGENI Overview

Simulation is effective for studying the behavior of large complex systems that are otherwise intractable to closed-form mathematical/analytical solutions. Simulation can also offer scalability and flexibility beyond the capabilities of live experimentation and emulation. Real-time network simulation refers to simulation of potentially large-scale networks in real time so that the virtual network can interact with real implementations of network protocols, network services, and distributed applications. Real-time simulation supports network immersion, where a simulated network is made indistinguishable from a physical testbed in terms of conducting network traffic.

The PrimoGENI project aims to incorporate real-time network simulation capabilities into the GENI "ecosystem". We will extend our existing real-time network simulator, called the Parallel Real-time Immersive network Modeling Environment, or PRIME (https://www.primessf.net/bin/view/Public/PRIMEProject), to become part of the GENI federation. PRIME is a real-time extension to the well-known SSFNet simulator, which is a high-performance parallel simulator for large-scale networks. We will augment PRIME with the GENI aggregate interface, through which PRIME will interoperate with the rest of the GENI infrastructure to support large-scale experiments involving both physical and simulated network entities.

This document details the design of the PrimoGENI aggregate, which directs our development and prototyping activities with the objective of substantially broadening GENI’s prospect in supporting realistic, scalable, and flexible experimental networking studies. Core activities include:

- An early adoption of the ProtoGENI control framework, through which researchers will be able to remotely launch, monitor, control, and thus realize large-scale network experiments consisting of both PrimoGENI and other GENI components.

- A prototype implementation of PrimoGENI, which includes augmenting PRIME with the GENI aggregate interface, so that PrimoGENI will be able to support large-scale network experiments consisting of simulated, emulated and physical network entities.

- An exploitation of the full potential of real-time simulation capabilities, with special emphasis on the design and implementation of PrimoGENI experiment workflow (including network model construction, resource specification, allocation and sharing), experiment monitoring, instrumentation and measurement capabilities.

PrimoGENI Design Goal

Real-time simulation is aligned with the GENI concept of federating global physical/virtual resources as a shared experimental network infrastructure. Our immersive large-scale network simulator, PRIME, supports network experiments potentially with millions of simulated network entities (hosts, routers, and links) and thousands of emulated elements running unmodified network protocols and distributed applications/services.

PRIME can be readily extended to support GENI experiments. In order to interoperate with other GENI facilities, PRIME will function as a GENI aggregate (thus the name, PrimoGENI), so that GENI users, developers and administrators can use the well-defined interface to remotely control and realize network experiments consisting of physical, simulated and emulated network entities exchanging real network traffic.

The overarching goal of PrimoGENI is to provide a fully functional aggregate that allows seamless interaction with the clearinghouse(s) and research organizations as an integral part of the GENI control framework architecture. PrimoGENI will run on high-performance computing clusters capable of supporting multiple experiments (i.e., slicing), each of which may consist of physical network entities (i.e., resources from other GENI aggregates), simulated networks and emulated hosts (from this or other PrimoGENI aggregates), all connected via high-performance connections.

Our immediate goal is to allow interoperability with the ProtoGENI control framework and to provide experiment support to streamline potentially large-scale network experiments, including model configuration, resource specification, simulation deployment and execution, online monitoring and control, data collection, inspection, visualization and analysis.

PrimoGENI Design

Definitions

- Substrate: physical facility on which PrimoGENI is operating; it describes the physical resources, layout and interconnection topology. PrimoGENI will be built on the ProtoGENI/Emulab facilities extended to allow real-time simulation and emulation.

- Resources: abstraction of sharable features of a component (computation, communication, measurement and storage) that are allocated by a component manager and described by RSpec. The original definition of GENI resources is ambiguous; there are two different kinds of shareable resources defined in PrimoGENI:

- Meta resources: hosts and other resources managed by and accessible within the ProtoGENI/Emulab suite, which include the cluster nodes, the switching fabric, and the layer-2 connectivity with other GENI facilities. We call these resources "meta resources" to distinguish them from physical resources defined in the substrate, since some of these hosts and connections can be virtual machines and virtual network tunnels.

- Virtual resources: elements of the virtual network simulated and emulated by PrimoGENI for supporting GENI experiments, which include simulated hosts, routers, links, protocols, and emulated hosts. We call these resources "virtual resources" as they represent the target computing and network environment of the GENI experiments; they encompass both simulated network entities and emulated hosts (which are run on the virtual machines).

- Slice: an interconnected set of reserved resources, or slivers, on multiple, potentially heterogeneous aggregates/components; a slice is also a container into which experiments can be instantiated. Researchers can remotely discover, reserve, configure, program, debug, operate, manage, and tear down resources within a slice to complete an experiment. PrimoGENI designates two kinds of RSpec corresponding to the two types of resources one uses within a slice:

- The RSpec used to describe the meta resources, i.e., hosts and resources of the ProtoGENI/Emulab facility. This is the same RSpec currently used by ProtoGENI and will be used by PrimoGENI during resource discovery and registration.

- The RSpec used to describe both virtual resources (i.e., the virtual network) and the meta resources (i.e., cluster nodes and switches of the ProtoGENI/Emulab facility). This type of RSpec will extend ProtoGENI's current definition of RSpec, by adding a reference to a detailed and yet succinct definition/configuration of the virtual network, and will be used by PrimoGENI during slice/sliver creation.

- Aggregate manager and interface: the PrimoGENI aggregate manages all resources, including meta resources controlled by and accessible in the ProtoGENI/Emulab suite, which includes cluster nodes, switches, and other resources, and virtual resources as exported by the PrimoGENI real-time simulation and emulation framework. It exports an aggregate interface as defined by the ProtoGENI control framework. PrimoGENI provides mechanisms for instantiating the virtual network onto the ProtoGENI/Emulab facilities as defined and configured by a slice.

Major Design Decisions

PrimoGENI will be an aggregate consisting of both meta resources and virtual resources. That is, a sliver at the PrimoGENI aggregate will consist of virtual resources, i.e., the virtual network that includes both simulated elements (routers, hosts, links, and protocols) and emulated elements (hosts and routers running on virtual machines), and the associated meta resources that the virtual network is instantiated upon.

PrimoGENI will use the ProtoGENI/Emulab suite to manage, control and access the meta resources as they are instantiated from the physical facilities (substrate) defined in the ProtoGENI/Emulab environment.

PrimoGENI will use PRIME, the high-performance real-time network simulator and the associated emulation infrastructure, to create, manage, control and access the virtual resources as they are instantiated on the meta resources provided by ProtoGENI/Emulab.

PrimoGENI will export an aggregate interface for it to interact with other elements of the control framework. PrimoGENI will use the ProtoGENI Component Manager API for communicating with the clearinghouse(s) and researchers (principals) using XMLRPC, HTTPS, and SSH.

PrimoGENI will provide an experiment control tool to streamline the "life cycle" of a GENI network experiment that involves PrimoGENI elements. The experiment control tool will help researchers with model construction, configuration, resource specification and assignment. It will include other functions, such as model deployment, execution, online monitoring and control, experiment data collection, inspection, visualization and analysis, at a later design stage.

PrimoGENI System Architecture

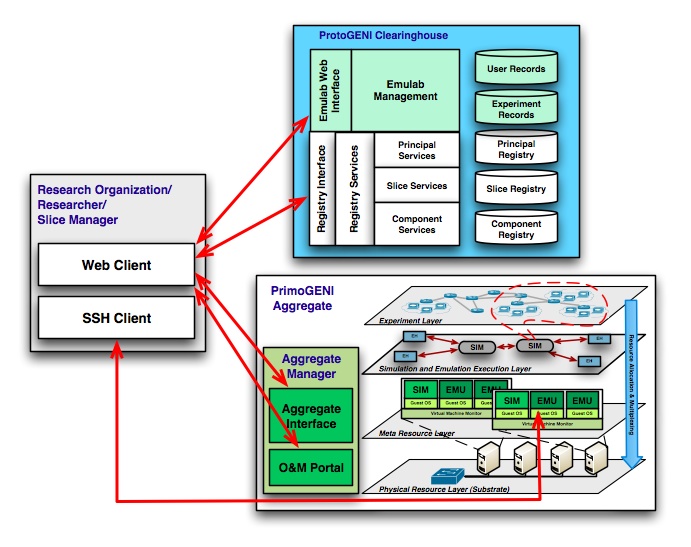

The figure above shows the PrimoGENI aggregate situated in the ProtoGENI control framework.

We list the main building blocks of the system below and highlight the aspects that concern the design of the PrimoGENI aggregate:

- Research organization: From the research organization, a researcher will communicate, using the slice manager, with the clearinghouse(s) and the aggregate managers (including PrimoGENI) using the web services model provided by ProtoGENI. Web services messages will utilize XMLRPC and HTTPS. In addition, once a sliver has been set up, the researcher can log onto individual emulated hosts in PrimoGENI (running on virtual machines) through SSH.

- Clearinghouse: A grouping of architectural elements and services for operations and management. The ProtoGENI clearinghouse includes separate registries for principal, slice, and aggregate/component records, a set of common registry services, and certain specialized services for principals, slices, and components. The (supposedly separate) Slice Authority Services are provided within the Emulab suite. PrimoGENI needs to register with the clearinghouse.

- Aggregate: The ProtoGENI aggregate manager manages hosts and other resources accessible within an Emulab suite. The PrimoGENI aggregate extends its functionality by adding virtual resources to the sliver definition, which are instantiated upon the (meta) resources managed by the Emulab suite. The aggregate manager needs to report system status and network events (e.g., failures or attacks) to the rest of the control framework via its O&M Portal. (This operation has not been defined in the current ProtoGENI implementation.)

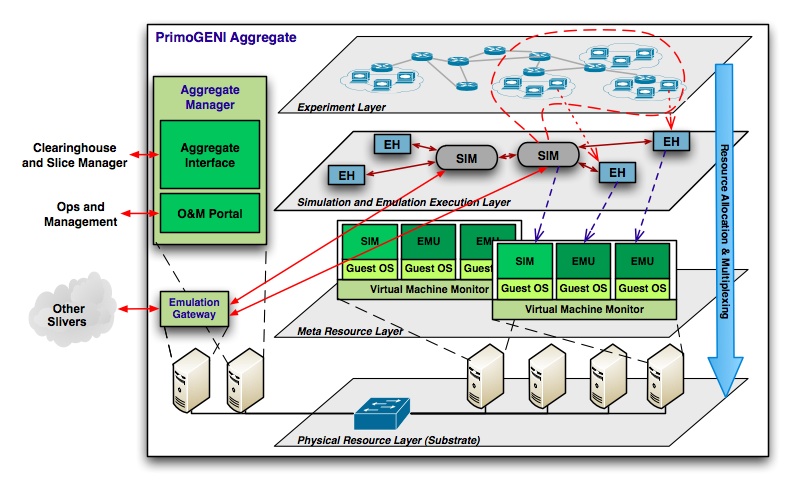

The figure above gives a detailed view of the PrimoGENI aggregate.

The PrimoGENI aggregate can be viewed as a layered system.

At the lowest layer is the physical resources layer (substrate), which is composed of cluster nodes, switches, and other resources that constitute the Emulab suite. These resources will be made known to the clearinghouse(s) and can be queried by researchers during the resource discovery process. In addition, two servers are set up to run the aggregate manager (for exporting an aggregate interface to researchers and clearinghouses) and the emulation gateway (for communicating with other slivers on other aggregates).

A meta resources layer is created upon resource assignment in a sliver. PrimoGENI uses the Emulab suite to allocate the (meta) resources (including a subset of cluster nodes, VLAN connectivity among the nodes, and possible GRE channels created for communicating with resources off site). Each physical cluster node is viewed by PrimoGENI as an independent scaling unit loaded with an operating system image that supports virtual machines (e.g., OpenVZ). Separate virtual machines are created to run the PRIME real-time simulator and the emulated hosts, respectively. In particular, the simulator runs on a (privileged) virtual machine, and the emulated hosts are mapped to the virtual machines so that they can run unmodified applications.

A simulation and emulation execution layer is created according to the virtual network specification of a sliver. The simulator instances and emulated hosts are mapped to the meta resources at the layer below. High-performance inter-VM communication channels are established between the emulated hosts and the corresponding real-time simulator instance, so that traffic generated by the emulated hosts is captured by the real-time simulator and conducted on the simulated network with appropriate delays and losses according to the simulated network conditions. Each real-time simulator instance handles a sub-partition of the virtual network; the instances communicate through VLAN channels created by the Emulab suite at the meta resources layer. They also establish connections to the emulation gateway for traffic to and from slivers on other aggregates.
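As a rough illustration of what "appropriate delays and losses" can mean for a packet captured from an emulated host, the sketch below accumulates per-link propagation and transmission delay along a simulated path and drops the packet with a per-link loss probability. This is a generic, simplified model written for this document, not PRIME's actual algorithm: it ignores queueing, and the `Link` tuple layout and the `deliver` function are hypothetical names.

```python
import random
from typing import Optional, Sequence, Tuple

# Hypothetical link description (not a PRIME data structure):
# (propagation delay in seconds, bandwidth in bits/s, loss probability)
Link = Tuple[float, float, float]


def deliver(packet_bytes: int, path: Sequence[Link],
            rng: random.Random) -> Optional[float]:
    """Return the end-to-end delay in seconds, or None if the packet is dropped."""
    total = 0.0
    for delay_s, bandwidth_bps, loss_prob in path:
        if rng.random() < loss_prob:
            return None  # dropped on this simulated link
        # Propagation delay plus the time needed to put the packet on the link.
        total += delay_s + (packet_bytes * 8) / bandwidth_bps
    return total


rng = random.Random(0)  # seeded so that repeated runs behave identically
path = [(0.010, 1e6, 0.0), (0.020, 1e6, 0.0)]
delay = deliver(1250, path, rng)
```

A real-time simulator would hold the packet for the computed delay before releasing it to the destination emulated host, and would discard it when the result is `None`.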

Once the slivers are created and the slice is operational, researchers can run experiments on the (logical) experiment layer. Researchers can log into individual emulated hosts, load software, and launch it. Traffic between the emulated hosts will be conducted on the virtual network. Traffic originating from or destined for other physical network entities will be redirected through the emulation gateway. Experiment data can be collected and viewed in real time on demand through the measurement facility (not shown). This functionality is expected to be added in the next design iteration.

Sliver

A sliver in PrimoGENI consists of both meta resources and virtual resources.

The meta resources are allocated and controlled by the Emulab suite. They can consist of local cluster nodes with VLAN links between them, and cluster nodes at different sites with GRE tunnels between them. (We do not handle PlanetLab nodes in this iteration.)

The virtual resources are instantiated and controlled by the PRIME real-time simulator. The virtual network can consist of simulated hosts, routers, links, and emulated hosts. PRIME supports parallel and distributed simulation, where multiple simulator instances can run in parallel, each handling a sub-partition of the virtual network mapped to a separate scaling unit at a different physical host. These simulator instances are synchronized to proceed in real time; they communicate via the VLAN links provided by Emulab. The emulated hosts are run on separate virtual machines and communicate with the corresponding simulator instance at the same scaling unit.
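The partitioning described above can be pictured with a small sketch. Assuming the virtual-to-meta mapping is available as a plain dictionary from virtual element name to scaling unit (a hypothetical representation chosen for illustration, not PRIME's format), the code groups elements by scaling unit and lists the virtual links whose endpoints sit on different units. Those are the links whose traffic would have to cross the VLAN links between simulator instances.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def group_by_unit(assignment: Dict[str, str]) -> Dict[str, List[str]]:
    """Group virtual elements by the scaling unit they are mapped to."""
    units = defaultdict(list)
    for element, unit in sorted(assignment.items()):
        units[unit].append(element)
    return dict(units)


def cross_unit_links(links: List[Tuple[str, str]],
                     assignment: Dict[str, str]) -> List[Tuple[str, str]]:
    """Return virtual links whose two endpoints are on different scaling units."""
    return [(a, b) for a, b in links if assignment[a] != assignment[b]]


# Hypothetical four-element virtual network split over two cluster nodes.
assignment = {"r1": "node-a", "h1": "node-a", "r2": "node-b", "h2": "node-b"}
links = [("h1", "r1"), ("r1", "r2"), ("r2", "h2")]

partitions = group_by_unit(assignment)
remote_links = cross_unit_links(links, assignment)
```

Here only the router-to-router link spans two scaling units; the host links stay local to a single simulator instance.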

Once the resources are committed and the sliver created, researchers can directly log onto the emulated hosts (through SSH) to program, configure and conduct experiments.

Identification

Each identifiable GENI element has an associated GENI Identifier (GID). In ProtoGENI, GID is an SSL certificate with a distinguished name (DN) field that includes a human readable name (HRN), a UUID (which is a random number generated per X.667), and a user email address.

While GIDs are used to identify various PrimoGENI aggregate elements as expected, we do not assign GIDs to virtual network elements, because doing so might hamper the scalability of the simulator. The entire virtual network is identified as a single GENI element. That is, the same certificate will be used to access all simulated and emulated elements. Within the virtual network, in order to identify each network element (host, router, interface, link, subnetwork, or any functional unit inside a host or router, such as a protocol), we create a separate name space, which is intrinsic to the hierarchical nature of the virtual network.
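A hierarchical name space of this kind can be sketched as a tree in which every element is named relative to its parent. The class below is a minimal illustration only: the `Element` class, the dot separator, and the example names are assumptions made for this sketch and are not taken from PRIME.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass(eq=False)
class Element:
    """One node in a hypothetical virtual-network name tree."""
    name: str
    kind: str  # e.g. "subnetwork", "host", "router", "interface", "protocol"
    parent: Optional["Element"] = field(default=None, repr=False)
    children: Dict[str, "Element"] = field(default_factory=dict)

    def add(self, name: str, kind: str) -> "Element":
        """Create a child; names must be unique within their parent."""
        if name in self.children:
            raise ValueError(f"duplicate name {name!r} under {self.path()}")
        child = Element(name, kind, parent=self)
        self.children[name] = child
        return child

    def path(self) -> str:
        """Return the fully qualified name, from the root down to this element."""
        parts = []
        node: Optional["Element"] = self
        while node is not None:
            parts.append(node.name)
            node = node.parent
        return ".".join(reversed(parts))

    def resolve(self, relative: str) -> "Element":
        """Look up a descendant by its dot-separated relative name."""
        node = self
        for part in relative.split("."):
            node = node.children[part]
        return node


top = Element("topnet", "subnetwork")
host = top.add("campus", "subnetwork").add("h1", "host")
iface = host.add("if0", "interface")
```

Because names only need to be unique within their parent, very large models can reuse short local names without any global identifier registry.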

Resource Specification

RSpec describes an aggregate/component in terms of the resources it possesses, and constraints and dependencies on the allocation of those resources. It is the mechanism for advertising, requesting, and describing the resources used by experimenters. We follow the same classification method defined by ProtoGENI, by dividing RSpec into three different closely related languages to address the three distinct purposes:

- Advertisements are used to describe the meta resources available on the PrimoGENI aggregate (during aggregate registration and resource discovery). They contain information used by clients to choose the meta resources. Here, PrimoGENI uses the same RSpec as defined by ProtoGENI.

- Requests specify which resources a client is selecting from the PrimoGENI aggregate. The resources contain both meta resources and virtual resources. For meta resources, PrimoGENI uses the same definitions as in ProtoGENI, which also contain a (perhaps incomplete) mapping between the meta resources and the physical resources (substrate), if the request is a bound request. For virtual resources, we use the network specification language defined by the PRIME real-time simulator. Currently, PRIME uses the so-called domain modeling language (DML) for describing (potentially very large) network models. We are also developing a Python extension to allow experimenters to specify network configurations programmatically. As such, we require that experimenters embed a URL in the RSpec that points to a file specifying the virtual network in the appropriate language. The file also needs to include a mapping between the virtual resources and the meta resources.

- Manifests provide useful information about the slivers actually allocated by the PrimoGENI aggregate to a client. This information may not be known until the slivers are actually created (e.g., dynamically assigned IP addresses and host names); additional configuration options can also be provided to a client. The PrimoGENI aggregate will also supply researchers with the information they need to log onto the virtual machines that run the emulated hosts.
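To make the request case concrete, the sketch below builds a request document that lists meta resources and carries a URL pointing to the virtual network definition. The element and attribute names (`rspec`, `node`, `virtual_id`, `virtual_network`, `url`) and the example URL are placeholders invented for illustration; they are not the ProtoGENI RSpec schema or the final PrimoGENI extension.

```python
import xml.etree.ElementTree as ET
from typing import Iterable


def build_request(node_ids: Iterable[str], model_url: str) -> str:
    """Build an illustrative request: meta resources plus a virtual-network URL."""
    rspec = ET.Element("rspec", {"type": "request"})
    for node_id in node_ids:
        # One entry per requested cluster node (meta resource).
        ET.SubElement(rspec, "node", {"virtual_id": node_id})
    # Hypothetical extension element: a reference to the file that defines the
    # virtual network and its mapping onto the meta resources.
    ET.SubElement(rspec, "virtual_network", {"url": model_url})
    return ET.tostring(rspec, encoding="unicode")


request_xml = build_request(["pc1", "pc2"],
                            "https://example.org/models/campus.dml")
```

Keeping the virtual network in a separate file, referenced by URL, keeps the request small even when the network model itself is very large.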

Experiment Setup

Aggregate Registration

PrimoGENI registers with the ProtoGENI clearinghouse.

Resource Discovery

Researcher gets a list of all physical resources -- whether or not they are currently available.

Researcher gets a list of resources that are currently available to her and determines the virtual network (including both simulated and emulated elements) she wants to run on PrimoGENI, through an experiment support tool provided by PrimoGENI. The experiment support tool allows the researcher to configure the virtual network and helps map the virtual network onto the available physical resources. Once completed, the experiment support tool creates a request (RSpec).

Resource Authorization and Policy Implementation

Researcher sends the request to PrimoGENI aggregate manager.

PrimoGENI aggregate manager decides whether to authorize the requested resources according to the local policy (currently admitting all) and issues a ticket to the researcher.

Resource Assignment

Researcher presents the ticket to PrimoGENI aggregate manager in an attempt to redeem the ticket.

PrimoGENI uses the Emulab facilities to allocate the meta resources according to the request. This also includes setting up (GRE) connectivity with resources that belong to other aggregates. Once completed, PrimoGENI establishes the meta resources layer.

PrimoGENI loads the operating system images, launches the virtual machines, starts the simulator instances (based on the virtual network configuration embedded in the request), and sets up the emulation infrastructure (connecting the emulated hosts with the simulator instances). This step establishes the simulation and emulation execution layer.

PrimoGENI informs the researcher (or the slice manager) of the status of the resource assignment through a manifest.

Experiment Initiation

Once the slice has been created successfully, the researcher can log into emulated hosts, load code, and run the experiment.
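The authorization and assignment steps above can be summarized as a small state machine. The sketch below is a toy, in-memory model of that sequence, assuming an admit-all policy as stated; the class, method, and state names are hypothetical and do not correspond to the ProtoGENI Component Manager API.

```python
from enum import Enum, auto
from typing import Dict


class SliverState(Enum):
    TICKETED = auto()        # request authorized, ticket issued
    META_ALLOCATED = auto()  # meta resources layer established
    RUNNING = auto()         # simulation and emulation execution layer established


class AggregateManagerSketch:
    """Toy model of the ticket workflow; not a real aggregate manager."""

    def __init__(self) -> None:
        self._tickets: Dict[str, SliverState] = {}
        self._next_id = 1

    def get_ticket(self, request: str) -> str:
        # Local policy currently admits every request.
        ticket = f"ticket-{self._next_id}"
        self._next_id += 1
        self._tickets[ticket] = SliverState.TICKETED
        return ticket

    def redeem_ticket(self, ticket: str) -> Dict[str, str]:
        if self._tickets.get(ticket) is not SliverState.TICKETED:
            raise ValueError("unknown or already redeemed ticket")
        # Step 1: allocate cluster nodes, VLANs, and GRE tunnels.
        self._tickets[ticket] = SliverState.META_ALLOCATED
        # Step 2: load OS images, launch VMs, start the simulator instances.
        self._tickets[ticket] = SliverState.RUNNING
        # The returned dictionary stands in for the manifest.
        return {"ticket": ticket, "state": self._tickets[ticket].name}


manager = AggregateManagerSketch()
ticket = manager.get_ticket("<rspec type='request'/>")
manifest = manager.redeem_ticket(ticket)
```

Separating ticket issue from ticket redemption lets the policy decision be made before any cluster nodes are committed.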
