Version 15 (modified 12 years ago)

Sample Experiment: Writing a Web Server

Purpose

The goal of this assignment is to build a functional web server. This assignment will guide you through the basics of distributed programming, client/server structures, and issues in building high-performance servers.

This assignment is based on a course assignment used at the Distributed Systems Course offered by the Computer Science Department of Williams College and taught by Professor Jeannie Albrecht.

Prerequisites

Before beginning this experiment, you should be prepared with the following.

- You have GENI credentials to obtain GENI resources. (If not, see SignMeUp).

- You are able to use Flack to request GENI resources. (If not, see the Flack tutorial).

- You are comfortable using ssh and executing basic commands using a UNIX shell.

- You are comfortable coding in C or C++.

Setup

- Download the attached rspec file and save it on your machine. (Make sure to save in raw format.)

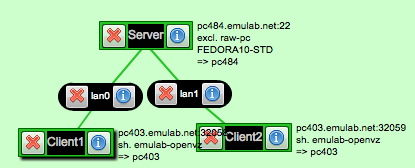

- Start Flack, create a new slice, load the rspec websrv.rspec, and submit it for sliver creation (you may also use omni if you prefer). Your sliver should look similar to the attached WebsrvExampleSliver.png.

In this setup, one host acts as the web server. To test that the web server is up, visit the web page of the Server host in one of two ways:

- either press the (i) button in Flack and then press the Visit button

- or open a web browser and go to http://<pcname>.emulab.net; in the example above this would be http://pc484.emulab.net.

If the installation was successful, you should see a page similar to the attached WebsrvIndex.png.

Techniques

You will use the following techniques during this experiment.

Start and stop the web server

In the original setup of your sliver, a web server is already installed and running on the Server host. As you implement your own web server, you may need to stop or start the installed one.

- To stop the web server, run:

sudo /sbin/service httpd stop

To verify that you have stopped the web server, try to visit the above web page; you should get an error.

- To start the web server, run:

sudo /sbin/service httpd start

Command Line Web Transfers

Besides using a web browser, you can also use command-line tools for web transfers. To do this, follow these steps:

- Log in to Client1.

- Download the web page using this command:

[inki@Client1 ~]$ wget http://server

--2012-07-06 04:59:09--  http://server/

Resolving server... 10.10.1.1

Connecting to server|10.10.1.1|:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: 548 [text/html]

Saving to: “index.html.1”

100%[======================================>] 548  --.-K/s  in 0s

2012-07-06 04:59:09 (120 MB/s) - “index.html.1” saved [548/548]

Note: In the above command we used http://server instead of http://pc484.emulab.net so that we contact the web server over the private connection we created, instead of over the server's public interface. The private connections are the ones represented by lines between hosts in Flack.

- The above command downloads only the index.html file from the web server. As we will see later, a web page may consist of multiple HTML pages and other objects such as pictures and videos. To make wget download a page together with everything it depends on, use the following options (-m mirrors the site recursively; -p also fetches the page requisites, such as images):

[inki@Client1 ~]$ wget -m -p http://server

This produces a directory named after the host. To see its structure, run:

[inki@Client1 ~]$ ls server/

home.html  index.html  links.html  media  top.html

Build your own Server

At a high level, a web server listens for connections on a socket (bound to a specific port on a host machine). Clients connect to this socket and use a simple text-based protocol to retrieve files from the server. For example, you might try the following command on Client1:

% telnet server 80

GET /index.html HTTP/1.0

(Press Enter twice after typing the GET line.) This returns, on the command line, the HTML of the "front page" of the web server running on the Server host.
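The request you type into telnet is ordinary text. As a minimal sketch, the hypothetical helper `format_get_request` below builds the same bytes a C client would write() to a connected socket. It assumes HTTP's CRLF line endings; the empty line at the end marks the end of the request, which is why you press Enter twice.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: write a minimal HTTP/1.0 GET request for `path`
 * into `buf`. Each line ends in CRLF, and the empty line at the end
 * tells the server that the request is complete.
 * Returns the request length, or -1 on a bad path or a too-small buffer. */
int format_get_request(char *buf, size_t len, const char *path)
{
    /* A space, CR or LF inside the path would break the request line. */
    if (path[0] != '/' || strpbrk(path, " \r\n") != NULL)
        return -1;
    int n = snprintf(buf, len, "GET %s HTTP/1.0\r\n\r\n", path);
    if (n < 0 || (size_t)n >= len)
        return -1;                 /* output would have been truncated */
    return n;
}
```

A client would then send the result with `write(sock, buf, n)` and read the reply until the server closes the connection.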

One of the key things to keep in mind in building your web server is that the server translates relative filenames (such as index.html) to absolute filenames in a local filesystem. For example, you might decide to keep all the files for your server in ~10abc/cs339/server/files/, which we call the document root. When your server gets a request for index.html (the default web page if no file is specified), it prepends the document root to the requested file name and determines whether the file exists and whether the proper permissions are set on it (typically the file has to be world readable). If the file does not exist, a "404 Not Found" error is returned. If the file is present but the proper permissions are not set, a "403 Forbidden" (permission denied) error is returned. Otherwise, an HTTP "200 OK" message is returned along with the contents of the file.

In our setup we are using the Apache web server. On a host running Fedora 10, Apache's default document root is /var/www/html. To see what it contains:

- Log in to the Server host.

- Run:

[inki@server ~]$ ls /var/www/html/*

You should also note that since index.html is the default file, web servers typically translate "GET /" to "GET /index.html". That way index.html is assumed to be the filename if no explicit filename is present. This is also why the two URLs http://www.cs.williams.edu and http://www.cs.williams.edu/index.html return equivalent results.
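A minimal sketch of the translation from request path to file name, including the "GET /" to "GET /index.html" rule. `map_uri` is a hypothetical name, and rejecting any path containing ".." is an added safeguard (an assumption, not part of the assignment text) so that a request cannot escape the document root.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: build the absolute file name for `uri` under
 * `docroot`. A URI ending in '/' (including "/" itself) gets index.html
 * appended. Returns 0 on success, -1 for a rejected URI or if the
 * result does not fit in `out`. */
int map_uri(const char *docroot, const char *uri, char *out, size_t len)
{
    /* Must be absolute. Refusing ".." anywhere is conservative: it also
     * rejects harmless names such as "a..b". */
    if (uri[0] != '/' || strstr(uri, "..") != NULL)
        return -1;
    const char *suffix = (uri[strlen(uri) - 1] == '/') ? "index.html" : "";
    int n = snprintf(out, len, "%s%s%s", docroot, uri, suffix);
    return (n < 0 || (size_t)n >= len) ? -1 : 0;
}
```

For example, `map_uri("/var/www/html", "/", out, sizeof out)` yields "/var/www/html/index.html".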

When you type a URL into a web browser, the browser requests the file and the server returns its contents. If the file is of type text/html and HTTP/1.0 is being used, the browser parses the HTML for embedded objects (such as images) and then makes separate connections to the web server to retrieve them. If a web page contains four images, a total of five separate connections are made to the web server: one for the HTML and one for each of the four image files.

Using HTTP/1.0, a separate connection is used for each requested file. Because these connections are short-lived, most of them never get out of TCP's slow start phase. HTTP/1.1 attempts to address this limitation. With HTTP/1.1, the server keeps connections to clients open, allowing for "persistent" connections and pipelining of client requests. That is, after the results of a single request are returned (e.g., index.html), the server should by default leave the connection open for some period of time, allowing the client to reuse that connection for subsequent requests. One key issue is determining how long to keep the connection open. This timeout needs to be configured in the server and ideally should adapt to the number of other active connections the server is currently supporting. If the server is idle, it can afford to leave the connection open for a relatively long time. If the server is busy servicing several clients at once, it may not be able to afford to have an idle connection sitting around (consuming kernel/thread resources) for very long. You should develop a simple heuristic to determine this timeout in your server.
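One possible heuristic, shown only as a starting point: scale the keep-alive timeout down linearly with the number of active connections. The constants below (15 s when idle, 1 s at 100 or more connections) are hypothetical values for illustration, not recommendations; measure your own server before choosing them.

```c
/* Hypothetical tuning constants, for illustration only. */
#define KEEPALIVE_MAX_SEC 15   /* timeout when the server is idle          */
#define KEEPALIVE_MIN_SEC 1    /* timeout once the server is busy          */
#define BUSY_CONNECTIONS  100  /* active connections at which MIN applies  */

/* Shrink the keep-alive timeout linearly as load grows: an idle server
 * keeps connections open for a long time, a busy one reclaims them fast. */
int keepalive_timeout(int active_connections)
{
    if (active_connections <= 0)
        return KEEPALIVE_MAX_SEC;
    if (active_connections >= BUSY_CONNECTIONS)
        return KEEPALIVE_MIN_SEC;
    int range = KEEPALIVE_MAX_SEC - KEEPALIVE_MIN_SEC;
    return KEEPALIVE_MAX_SEC - range * active_connections / BUSY_CONNECTIONS;
}
```

With these constants, 50 active connections give 15 - 14 * 50 / 100 = 8 seconds. A server would recompute the value each time a connection becomes idle.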

For this assignment, you will need to support enough of the HTTP/1.0 and HTTP/1.1 protocols to allow an existing web browser (Firefox) to connect to your web server and retrieve the contents of the Williams CS front page from your server. (Of course, this requires that you copy the appropriate files to your server's document root.) Note that you DO NOT have to support script parsing (PHP, JavaScript), and you do not have to support HTTP POST requests. You should support images, and you should return appropriate HTTP error messages as needed.
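Supporting images mostly means sending the right Content-Type, and returning errors means pairing each status code with its reason phrase. The sketch below assumes a small extension table and the hypothetical helpers `content_type`, `reason_phrase` and `format_response_header`; like the request, the response header ends with an empty line.

```c
#include <stdio.h>
#include <string.h>
#include <strings.h>

/* Hypothetical extension table; extend it for the files you serve. */
static const struct { const char *ext, *type; } mime_types[] = {
    { ".html", "text/html" },  { ".htm",  "text/html" },
    { ".css",  "text/css" },   { ".txt",  "text/plain" },
    { ".jpg",  "image/jpeg" }, { ".jpeg", "image/jpeg" },
    { ".png",  "image/png" },  { ".gif",  "image/gif" },
};

/* Pick a Content-Type from the file extension (case-insensitive). */
const char *content_type(const char *path)
{
    const char *dot = strrchr(path, '.');
    if (dot != NULL)
        for (size_t i = 0; i < sizeof mime_types / sizeof mime_types[0]; i++)
            if (strcasecmp(dot, mime_types[i].ext) == 0)
                return mime_types[i].type;
    return "application/octet-stream";      /* unknown: send raw bytes */
}

/* Reason phrases for the status codes this server is likely to send. */
const char *reason_phrase(int status)
{
    switch (status) {
    case 200: return "OK";
    case 400: return "Bad Request";
    case 403: return "Forbidden";
    case 404: return "Not Found";
    case 500: return "Internal Server Error";
    case 501: return "Not Implemented";
    case 505: return "HTTP Version Not Supported";
    default:  return "Unknown";
    }
}

/* Build the status line and headers. Returns the length, or -1. */
int format_response_header(char *buf, size_t len, const char *version,
                           int status, const char *type, long length)
{
    int n = snprintf(buf, len,
                     "%s %d %s\r\nContent-Type: %s\r\nContent-Length: %ld\r\n\r\n",
                     version, status, reason_phrase(status), type, length);
    return (n < 0 || (size_t)n >= len) ? -1 : n;
}
```

Sending Content-Length lets an HTTP/1.1 client tell where one response ends on a persistent connection; the file bytes follow the header directly.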

At a high level, your web server will be structured something like the following:

Forever loop:

- Listen for connections
- Accept a new connection from an incoming client
- Parse the HTTP request
- Ensure the request is well formed (return an error otherwise)
- Determine whether the target file exists and whether its permissions are set properly (return an error otherwise)
- Transmit the contents of the file to the client (by reading from the file and writing to the socket)
- Close the connection (if HTTP/1.0)
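The "Parse HTTP request" and "Ensure well-formed request" steps might look like this for the request line. This is a sketch with a hypothetical `parse_request_line` helper: it accepts only GET (POST is not required) and answers 400, 501 or 505 for requests it cannot serve. Header lines such as Connection: still need separate handling.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: split a request line such as
 * "GET /index.html HTTP/1.1" into its parts and validate them.
 * `path` must have room for 1024 bytes. Returns 0 if the request can be
 * served, otherwise the status code to send back. */
int parse_request_line(const char *line, char *path, int *minor_version)
{
    char method[16], version[16];
    /* Field widths leave room for the terminating '\0' in each buffer. */
    if (sscanf(line, "%15s %1023s %15s", method, path, version) != 3)
        return 400;                    /* Bad Request: missing fields */
    if (strcmp(method, "GET") != 0)
        return 501;                    /* Not Implemented (e.g. POST) */
    if (strcmp(version, "HTTP/1.0") == 0)
        *minor_version = 0;
    else if (strcmp(version, "HTTP/1.1") == 0)
        *minor_version = 1;
    else
        return 505;                    /* HTTP Version Not Supported */
    return 0;
}
```

The minor version tells the rest of the loop whether to close the connection after the response (HTTP/1.0) or to keep it open by default (HTTP/1.1).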

You will have three main choices in how you structure your web server in the context of the above simple structure:

1) A multi-threaded approach will spawn a new thread for each incoming connection. That is, once the server accepts a connection, it will spawn a thread to parse the request, transmit the file, etc.

2) A multi-process approach maintains a worker pool of active processes to which the main server hands off requests. This approach is attractive largely because of its portability (it does not assume that a given threads package is available across multiple hardware/software platforms). However, it incurs higher context-switch overhead than a multi-threaded approach.

3) An event-driven architecture keeps a list of active connections and loops over them, performing a little bit of work on behalf of each connection. For example, there might be a loop that first checks whether any new connections are pending (performing the appropriate bookkeeping if so) and then loops over all existing client connections, sending a "block" of file data to each (e.g., 4096 or 8192 bytes, matching the granularity of the disk block size). The primary advantage of this architecture is that it avoids the synchronization issues associated with a multi-threaded model (though synchronization effects should be limited in your simple web server), as well as the overhead of context switching among many threads.
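For the event-driven design, each connection needs a little state so the loop can resume where it left off. A minimal sketch, assuming a hypothetical `struct conn` and a caller that uses select() to find writable sockets: `send_next_block` sends at most one 4096-byte block per call, as described above.

```c
#include <errno.h>
#include <fcntl.h>
#include <sys/types.h>
#include <unistd.h>

#define BLOCK_SIZE 4096   /* bytes sent per connection per loop pass */

/* Hypothetical per-connection state kept by the event loop. */
struct conn {
    int   sock_fd;   /* client socket                   */
    int   file_fd;   /* file being served               */
    off_t offset;    /* bytes of the file already sent  */
};

/* Begin serving `path` on `sock_fd`. Returns 0, or -1 if open() fails. */
int conn_open(struct conn *c, int sock_fd, const char *path)
{
    c->sock_fd = sock_fd;
    c->file_fd = open(path, O_RDONLY);
    c->offset  = 0;
    return c->file_fd < 0 ? -1 : 0;
}

/* One unit of work for one connection: send at most BLOCK_SIZE bytes
 * starting at the saved offset. Returns 1 if data was sent (or the socket
 * was momentarily full), 0 once the whole file has been sent, -1 on error. */
int send_next_block(struct conn *c)
{
    char buf[BLOCK_SIZE];
    ssize_t n = pread(c->file_fd, buf, sizeof buf, c->offset);
    if (n < 0)
        return -1;
    if (n == 0)
        return 0;                                  /* end of file */
    ssize_t w = write(c->sock_fd, buf, (size_t)n);
    if (w < 0)
        return (errno == EAGAIN || errno == EWOULDBLOCK) ? 1 : -1;
    c->offset += w;          /* a short write is resumed on the next pass */
    return 1;
}
```

In the main loop, select() reports which client sockets are writable; for each one, call `send_next_block` and close the connection (or, for HTTP/1.1, wait for the next request) when it returns 0, and close it when it returns -1.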

You may use either C or C++ to build your web server, but you must develop it on Linux (although the code should run on any Unix system). In C/C++, you will want to become familiar with how the following system calls interact: socket(), bind(), listen(), accept(), select(), and connect(). We outline a number of resources below with additional information on these system calls. A good book is also available on this topic (there is a reference copy of this in the lab).
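On the server side, these calls are typically combined as socket(), bind() and listen() once, followed by accept() in a loop. The sketch below, with the hypothetical name `open_listen_socket`, binds to all local interfaces; setting SO_REUSEADDR is a common convenience so the server can be restarted immediately, not something the assignment requires.

```c
#include <arpa/inet.h>
#include <netinet/in.h>
#include <string.h>
#include <sys/socket.h>
#include <unistd.h>

/* Create a TCP socket, bind it to `port` on all local interfaces and
 * start listening. Returns the listening descriptor, or -1 on error.
 * Ports below 1024, such as 80, normally require root privileges. */
int open_listen_socket(unsigned short port, int backlog)
{
    int fd = socket(AF_INET, SOCK_STREAM, 0);
    if (fd < 0)
        return -1;

    /* Best effort: allow rebinding while old connections sit in TIME_WAIT. */
    int on = 1;
    setsockopt(fd, SOL_SOCKET, SO_REUSEADDR, &on, sizeof on);

    struct sockaddr_in addr;
    memset(&addr, 0, sizeof addr);
    addr.sin_family      = AF_INET;
    addr.sin_addr.s_addr = htonl(INADDR_ANY);
    addr.sin_port        = htons(port);

    if (bind(fd, (struct sockaddr *)&addr, sizeof addr) < 0 ||
        listen(fd, backlog) < 0) {
        close(fd);
        return -1;
    }
    return fd;
}
```

A caller would then loop on `accept(fd, NULL, NULL)` and hand each returned descriptor to one of the three designs described above.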

In this experiment, you'll be changing the characteristics of the link and measuring how they affect UDT and TCP performance.

- Log into your delay node as you do with any other node. Then, on your delay node, use this command:

sudo ipfw pipe show

You'll get something like this:

60111: 100.000 Mbit/s 1 ms 50 sl. 1 queues (1 buckets) droptail

mask: 0x00 0x00000000/0x0000 -> 0x00000000/0x0000

BKT Prot ___Source IP/port____ ____Dest. IP/port____ Tot_pkt/bytes Pkt/Byte Drp

0 ip 207.167.175.72/0 195.123.216.8/6 7 1060 0 0 0

60121: 100.000 Mbit/s 1 ms 50 sl. 1 queues (1 buckets) droptail

mask: 0x00 0x00000000/0x0000 -> 0x00000000/0x0000

BKT Prot ___Source IP/port____ ____Dest. IP/port____ Tot_pkt/bytes Pkt/Byte Drp

0 ip 207.167.176.224/0 195.124.8.8/6 8 1138 0 0 0

This information shows the internal configuration of the "pipes" used to emulate network characteristics. (Your output may look different, depending on the version of ipfw installed on your delay node. In any case, the information you need is on the first line of output for each pipe.)

You'll want to make note of the two pipe numbers, one for each direction of traffic along your link. In the example above, they are 60111 and 60121.

There are three link characteristics we'll manipulate in this experiment: bandwidth, delay, and packet loss rate. You'll find their values listed in the ipfw output above. The link bandwidth appears on the first line immediately after the pipe number. It's 100Mbps in the example shown above. The next value shown is the delay, 1 ms in the example above. The packet loss rate (PLR) is omitted if it's zero, as shown above. If non-zero, you'll see something like plr 0.000100 immediately after the "50 sl." on the first output line.

It is possible to adjust the parameters of the two directions of your link separately, to emulate asymmetric links. In this experiment, however, we are looking at symmetric links, so we'll always change the settings on both pipes together.

Here are the command sequences you'll need to change your link parameters. In each case, you'll need to provide the correct pipe numbers, if they're different from the example.

- To change bandwidth (100M means 100 Mbit/s):

sudo ipfw pipe 60111 config bw 100M

sudo ipfw pipe 60121 config bw 100M

- Request a bandwidth of zero to use the full capacity of the link (unlimited):

sudo ipfw pipe 60111 config bw 0

sudo ipfw pipe 60121 config bw 0

- To change link delay (delays are measured in ms):

sudo ipfw pipe 60111 config delay 10

sudo ipfw pipe 60121 config delay 10

- To change packet loss rate (the rate is a probability, so 0.0001 means 0.01% packet loss):

sudo ipfw pipe 60111 config plr .0001

sudo ipfw pipe 60121 config plr .0001

- You can combine settings for bandwidth, delay, and loss by specifying more than one in a single ipfw command. We'll use this form in the procedure below.
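Because both pipes must always be changed together, it can help to generate the command pair from one set of values. A sketch with a hypothetical `format_pipe_config` helper that produces the combined form used in the procedure below; the bandwidth string is passed through unchanged (for example "10M", or "0" for unlimited).

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: write the two ipfw commands that apply the same
 * bandwidth, delay and loss rate to both directions of the link.
 * Returns the text length, or -1 on bad input or a too-small buffer. */
int format_pipe_config(char *buf, size_t len, int pipe_a, int pipe_b,
                       const char *bw, int delay_ms, double plr)
{
    /* Keep bw a single command-line argument; plr is a probability. */
    if (bw[0] == '\0' || strchr(bw, ' ') != NULL || plr < 0 || plr > 1)
        return -1;
    int n = snprintf(buf, len,
                     "sudo ipfw pipe %d config bw %s delay %d plr %g\n"
                     "sudo ipfw pipe %d config bw %s delay %d plr %g\n",
                     pipe_a, bw, delay_ms, plr,
                     pipe_b, bw, delay_ms, plr);
    return (n < 0 || (size_t)n >= len) ? -1 : n;
}
```

Printing the result for each trial setting and pasting it into the delay node's shell keeps the two directions of the link symmetric.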

Experiment Procedure

- Set your link parameters to use maximum bandwidth, no delay, no packet loss:

sudo ipfw pipe 60111 config bw 0 delay 0 plr 0

sudo ipfw pipe 60121 config bw 0 delay 0 plr 0

- Verify with

sudo ipfw pipe show

60111: unlimited 0 ms 50 sl. 1 queues (1 buckets) droptail

mask: 0x00 0x00000000/0x0000 -> 0x00000000/0x0000

BKT Prot ___Source IP/port____ ____Dest. IP/port____ Tot_pkt/bytes Pkt/Byte Drp

0 ip 207.167.175.72/0 195.123.216.8/6 7 1060 0 0 0

60121: unlimited 0 ms 50 sl. 1 queues (1 buckets) droptail

mask: 0x00 0x00000000/0x0000 -> 0x00000000/0x0000

BKT Prot ___Source IP/port____ ____Dest. IP/port____ Tot_pkt/bytes Pkt/Byte Drp

0 ip 207.167.176.224/0 195.124.8.8/6 8 1138 0 0 0

Note that bandwidth is set to unlimited, delay to 0 ms, and no PLR is shown.

- Using this initial setting, try a few UDT transfers, including the larger files. Now try FTP transfers. Record the transfer sizes and rates.

- Now change the link parameters to reduce the available bandwidth to 10Mbps:

sudo ipfw pipe 60111 config bw 10M delay 0 plr 0

sudo ipfw pipe 60121 config bw 10M delay 0 plr 0

- Repeat your file transfers with the new settings. As before, note the transfer sizes and rates, as well as the link settings.

- Continue with additional trials, varying each of the three link parameters over a range sufficient to observe meaningful performance differences. Record your data.

What to hand in

- Your raw data and appropriate graphs illustrating changes in performance for the two transfer protocols with differing link parameters.

- Your analysis. Here are some questions to consider.

- Does one protocol outperform the other?

- Under what conditions are performance differences most clearly seen? Why?

- What shortcomings in the experiment design may affect your results? How might you improve the experiment design?

- What interesting characteristics of the transfer protocols are not measured in this experiment? How might you design an experiment to investigate these?

Attachments (3)

- websrv.rspec (2.4 KB), added 12 years ago.

- WebsrvIndex.png (153.9 KB), added 12 years ago.

- WebsrvExampleSliver.png (27.5 KB), added 12 years ago.
