- ExoGENI Acceptance Test Plan

- Acceptance Tests Descriptions

- Administration Prerequisite Tests

- Monitoring/Rack Inspection Prerequisite Tests

- Experimenter Acceptance Tests

- EG-EXP-1: Bare Metal Support Acceptance Test

- EG-EXP-2: ExoGENI Single Site Acceptance Test

- EG-EXP-3: ExoGENI Single Site 100 VM Test

- EG-EXP-4: ExoGENI Multi-site Acceptance Test

- EG-EXP-5: ExoGENI OpenFlow Network Resources Acceptance Test

- EG-EXP-6: ExoGENI and Meso-scale Multi-site OpenFlow Acceptance Test

- Additional Administration Acceptance Tests

- Additional Monitoring Acceptance Tests

- Test Methodology and Reporting

- Requirements Validation

- Glossary

ExoGENI Acceptance Test Plan

This page captures the GENI Racks Acceptance Test Plan to be executed for the ExoGENI project. Tests in this plan are based on the ExoGENI Use Cases, and each test provides a mapping to the GENI Rack Requirements that it validates. This plan defines tests that cover the following types of requirements: Integration (C), Monitoring (D), Experimenter (G), and Local Aggregate (F). The GENI AM API Acceptance Test suite covers the Software (A and B) requirements, which are not covered in this plan. This plan covers high-priority functional tests; ExoGENI racks support more functions than those highlighted here.

The GPO infrastructure team will perform the tests described in this page and the GPO software team will run the software acceptance tests in cooperation with the ExoGENI rack team. The GPO will execute all of this test plan for the first two ExoGENI racks delivered on the GENI network (GPO and RENCI). The ExoGENI acceptance test effort will capture findings and unresolved issues, which will be published in an Acceptance Test Report. GENI operations (beginning with the GPO) will run a shorter version of the acceptance tests for each subsequent site installed. Issues from site installation tests will be tracked in operations tickets. See the ExoGENI Acceptance Test Status page for details about the current status.

Assumptions and Dependencies

The following assumptions are made for all tests described in this plan:

- GPO ProtoGENI credentials from https://pgeni.gpolab.bbn.com are used for all tests.

- GPO ProtoGENI is the Slice Authority for all tests.

- Resources for each test will be requested from the local broker whenever possible.

- Compute resources are VMs unless otherwise stated.

- All Service Manager (SM) requests MUST be made via the Omni command line tool which uses the GENI AM API.

- In all scenarios, one experiment is always equal to one slice.

- ORCA will be used as the OpenFlow aggregate manager for ExoGENI resources in the OpenFlow test cases.

- FOAM will be used as the OpenFlow aggregate manager for Meso-scale resources in the OpenFlow test cases.

- Because we expect NLR Layer 2 Static VLANs to be available at early test sites first, we will start testing with NLR.

The following technical dependencies will be verified before test cases are executed:

- ORCA RSpec/NDL conversion service is available to convert GENI requests.

It is expected that bare metal nodes may not be available initially at BBN and RENCI. If so, VMs will substitute for bare metal nodes in each of the topologies for the initial evaluation. These tests will be re-executed when the bare metal solution becomes available.

Test Traffic Profile:

- Experiment traffic includes UDP and TCP data streams at low rates to ensure end-to-end delivery

- Traffic exchange is used to verify that the appropriate data paths are used and that traffic is delivered successfully for each test described.

- Performance measurement is not a goal of these acceptance tests.
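As a rough illustration of the low-rate delivery check described in the traffic profile, the sketch below sends a few numbered UDP datagrams and reports which ones arrive. It is only a sketch under stated assumptions: the function name `udp_delivery_check` is hypothetical, the loopback address stands in for a data plane address, and sender and receiver share one process here, whereas in an experiment they would run on different compute resources.

```python
import socket
import time


def udp_delivery_check(count=20, interval=0.01, timeout=1.0):
    """Send `count` numbered datagrams at a low rate; return the sequence numbers received."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        # Assumption: loopback stands in for the experiment's data plane address.
        receiver.bind(("127.0.0.1", 0))
        receiver.settimeout(timeout)
        target = receiver.getsockname()
        received = set()
        for seq in range(count):
            sender.sendto(seq.to_bytes(4, "big"), target)
            try:
                data, _ = receiver.recvfrom(64)
                received.add(int.from_bytes(data[:4], "big"))
            except socket.timeout:
                pass  # treated as lost; the caller compares against `count`
            time.sleep(interval)  # keep the sending rate low
        return received
    finally:
        sender.close()
        receiver.close()
```

Comparing the returned set against `set(range(count))` shows whether every datagram was delivered; no throughput figure is derived, in keeping with the plan's statement that performance measurement is not a goal.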

Acceptance Tests Descriptions

This section describes each acceptance test by defining its goals, topology, and outline test procedure. Test cases are listed by priority in sections below. The cases that verify the largest number of requirement criteria are typically listed at a higher priority. The prerequisite tests will be executed first to verify that baseline monitoring and administrative functions are available. This will allow the execution of the experimenter test cases. Additional monitoring and administrative tests described in later sections will also run before the completion of the acceptance test effort.

Administration Prerequisite Tests

Administrative acceptance tests verify support for administrative management tasks and focus on priority functions for each of the rack components. The set of administrative features described in this section will be verified first. Additional administrative tests, described in a later section, will be executed before the acceptance test effort is complete.

EG-ADM-1: Rack Receipt and Inventory Test

This "test" uses BBN as an example site and verifies that we can do everything needed to integrate the rack into our standard local procedures for systems we host.

Procedure

- ExoGENI and GPO power and wire the BBN rack

- GPO configures the exogeni.gpolab.bbn.com DNS namespace and 192.1.242.0/25 IP space, and enters all public IP addresses for the BBN rack into DNS.

- GPO requests and receives administrator accounts on the rack and read access to ExoGENI Nagios for GPO sysadmins.

- GPO inventories the physical rack contents, network connections and VLAN configuration, and power connectivity, using our standard operational inventories.

- GPO, ExoGENI, and GMOC exchange contact information and change control procedures. ExoGENI operators subscribe to GENI operations mailing lists and submit their contact information to GMOC.

EG-ADM-2: Rack Administrator Access Test

This test verifies local and remote administrative access to rack devices.

Procedure

- For each type of rack infrastructure node, including the head node and a worker node configured for OpenStack, use a site administrator account to test:

- Login to the node using public-key SSH.

- Verify that you cannot login to the node using password-based SSH, nor via any unencrypted login protocol.

- When logged in, run a command via sudo to verify root privileges.

- For each rack infrastructure device (switches, remote PDUs if any), use a site administrator account to test:

- Login via SSH.

- Login via a serial console (if the device has one).

- Verify that you cannot login to the device via an unencrypted login protocol.

- Use the "enable" command or equivalent to verify privileged access.

- Test that IMM (the ExoGENI remote console solution for rack hosts) can be used to access the consoles of the head node and a worker node:

- Login via SSH or other encrypted protocol.

- Verify that you cannot login via an unencrypted login protocol.
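The procedure above verifies access by attempting logins. As a complementary check, an administrator could also inspect the SSH server configuration. The following is a minimal sketch, assuming the nodes run OpenSSH and that the text of `sshd_config` has already been read; the function names are hypothetical, and `Match` blocks and the `keyword=value` form are deliberately not handled.

```python
def effective_sshd_option(config_text, keyword, default):
    """Return the effective value of `keyword` in the global section of sshd_config text."""
    for raw in config_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        if parts[0].lower() == "match":
            break  # Match blocks are conditional; this sketch stops at the first one
        if parts[0].lower() == keyword.lower() and len(parts) == 2:
            return parts[1].strip().lower()  # sshd uses the first value it obtains
    return default


def password_login_disabled(config_text):
    """True if password authentication is explicitly disabled in the global section."""
    # OpenSSH allows password authentication unless it is set to "no".
    return effective_sshd_option(config_text, "PasswordAuthentication", "yes") == "no"
```

A configuration check of this kind does not replace the login attempts in the procedure, since it says nothing about other login services that may be listening on the node.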

Monitoring/Rack Inspection Prerequisite Tests

These tests verify that GPO can locate and access information which may be needed to determine rack state and debug problems during experimental testing, and also verify by observation that rack components are ready to be tested. Additional monitoring tests are defined in a later section to complete the validation in this section.

EG-MON-1: Control Network Software and VLAN Inspection Test

This test inspects the state of the rack control network, infrastructure nodes, and system software.

Procedure

- A site administrator enumerates the processes on the head node and on an OpenStack worker node that listen for network connections from other nodes, identifies the software package and version in use for each, and verifies that we know the source of each piece of software and could get access to its source code.

- A site administrator reviews the configuration of the rack management switch and verifies that each worker node's control interfaces are on the expected VLANs for that worker node's function (OpenStack or bare metal).

- A site administrator reviews the MAC address table on the management switch, and verifies that all entries are identifiable and expected.
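The MAC address table review in the last step can be sketched as a comparison between the addresses seen on the switch and a site inventory. This is an illustrative sketch only: the function names and the inventory layout (a mapping from MAC address to a description) are assumptions, and how the table is exported from the switch is left to the administrator.

```python
import re


def normalize_mac(mac):
    """Reduce a MAC in colon, hyphen, or dotted notation to 12 lowercase hex digits."""
    digits = re.sub(r"[^0-9a-fA-F]", "", mac).lower()
    if len(digits) != 12:
        raise ValueError(f"not a MAC address: {mac!r}")
    return digits


def unexpected_macs(observed, inventory):
    """Return the observed MACs (normalized, sorted) that are missing from the inventory."""
    known = {normalize_mac(m) for m in inventory}
    return sorted({normalize_mac(m) for m in observed} - known)
```

An empty result means every entry in the table is identifiable; any address returned needs to be traced to a device before the check passes.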

EG-MON-2: GENI Software Configuration Inspection Test

This test inspects the state of the GENI AM software in use on the rack.

Procedure

- A site administrator uses available system data sources (process listings, monitoring output, system logs, etc) and/or AM administrative interfaces to determine the configuration of ExoGENI resources:

- How many VMs are assigned to each of the BBN rack SM and the global ExoSM

- How many bare metal nodes are configured on the rack and whether they are controlled by the BBN rack SM or by ExoSM.

- How many unbound VLANs are in the rack's available pool and whether they are controlled by the BBN rack SM or by ExoSM.

- Whether the BBN ExoGENI AM, the RENCI ExoGENI AM, and ExoSM trust the pgeni.gpolab.bbn.com slice authority, which will be used for testing.

- A site administrator uses available system data sources to determine the configuration of OpenFlow resources according to FOAM, ExoGENI, and FlowVisor.

EG-MON-3: GENI Active Experiment Inspection Test

This test inspects the state of the rack data plane and control networks when experiments are running, and verifies that a site administrator can find information about running experiments.

Procedure

- An experimenter from the GPO starts up experiments to ensure there is data to look at:

- An experimenter runs an experiment containing at least one rack VM, and terminates it.

- An experimenter runs an experiment containing at least one rack VM, and leaves it running.

- A site administrator uses available system and experiment data sources to determine current experimental state, including:

- How many VMs are running and which experimenters own them

- How many VMs were terminated within the past day, and which experimenters owned them

- What OpenFlow controllers the data plane switch, the rack FlowVisor, and the rack FOAM are communicating with

- A site administrator examines the switches and other rack data sources, and determines:

- What MAC addresses are currently visible on the data plane switch, and which experiments do they belong to?

- For some experiment which was terminated within the past day, what data plane and control MAC and IP addresses did the experiment use?

- For some experimental data path which is actively sending traffic on the data plane switch, do changes in interface counters show approximately the expected amount of traffic into and out of the switch?
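The interface counter check in the last item amounts to comparing a counter delta with the amount of traffic the experiment is known to have sent. A minimal sketch follows, assuming byte counters that only increase and may wrap once between readings; the function names and the 10% default tolerance are illustrative choices, not values taken from this plan.

```python
def counter_delta(before, after, width_bits=64):
    """Difference between two readings of an increasing counter, allowing one wrap."""
    return (after - before) % (1 << width_bits)


def traffic_matches(before, after, expected_bytes, tolerance=0.10, width_bits=64):
    """True if the observed byte delta is within `tolerance` (a fraction) of the expected amount."""
    observed = counter_delta(before, after, width_bits)
    return abs(observed - expected_bytes) <= tolerance * expected_bytes
```

Observed counts are usually somewhat higher than the application payload because of protocol headers and unrelated background frames, which is why the comparison is approximate rather than exact.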

Experimenter Acceptance Tests

EG-EXP-1: Bare Metal Support Acceptance Test

Bare metal nodes are exclusive nodes that are used throughout the experimenter test cases. This section outlines features to be verified which are not explicitly validated in other scenarios. It is currently expected that Microsoft Windows support will be initially tested on VMs, and on bare metal when available.

- Determine which nodes can be used as bare metal (exclusive) nodes.

- Obtain a list of OS images which can be loaded on bare metal nodes from the ExoGENI team. (List should be based on successful bare metal loads by ExoGENI team or others in GENI community and should be available on a public web page.)

- Obtain 2 licensed recent Microsoft OS images for bare metal nodes from the site (BBN).

- Reserve and boot 2 bare metal nodes using the Microsoft images.

- Obtain a recent Linux OS image for bare metal nodes from the ExoGENI team's successful test list.

- Reserve and boot a bare metal node using this Linux OS image.

- Release bare metal resource.

- Modify Aggregate resource allocation for the rack to add 1 additional bare metal node (2 total) for use in experimenter test cases.

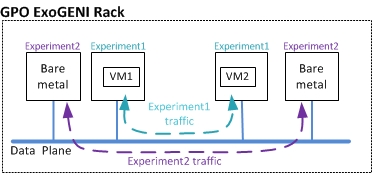

EG-EXP-2: ExoGENI Single Site Acceptance Test

This one-site test, run on the BBN ExoGENI rack, includes two experiments. Each experiment requests local compute resources, which generate bidirectional traffic over a Layer 2 data plane network connection. The goal of this test is to verify basic operations of VMs and data flows within one rack.

Test Topology

This test uses this topology:

Note: The diagram shows the logical end-points for each experiment traffic exchange. The VMs may or may not be on different worker nodes.

Prerequisites

This test has these prerequisites:

- Two GPO Ubuntu images have been tested in the ExoGENI image playpen environment and have been uploaded to the RENCI VM image repository using available ExoGENI documentation. One Ubuntu image is for the VM and one Ubuntu image is for the bare metal node in this test.

- Traffic generation tools may be part of image or may be installed at experiment runtime.

- Administrative accounts have been created for GPO staff on the BBN ExoGENI rack.

- GENI Experimenter1 and Experimenter2 accounts exist.

- Baseline Monitoring is in place for the entire BBN site, to ensure that any problems are quickly identified.

Procedure

Do the following:

- As Experimenter1, request ListResources from BBN ExoGENI.

- Review advertisement RSpec for a list of OS images which can be loaded, and identify available resources.

- Verify that the GPO Ubuntu image is available.

- Define a request RSpec for two VMs, each with a GPO Ubuntu image.

- Create the first slice.

- Create a sliver in the first slice, using the RSpec defined in step 4.

- Log in to each of the systems, and send traffic to the other system sharing a VLAN.

- Using root privileges on one of the VMs, load a kernel module.

- As Experimenter2, request ListResources from BBN ExoGENI.

- Define a request RSpec for two bare metal nodes, both using the uploaded GPO Ubuntu images.

- Create the second slice.

- Create a sliver in the second slice, using the RSpec defined in step 10.

- Log in to each of the systems, and send traffic to the other system.

- Verify that Experimenter1 and Experimenter2 cannot use the control plane to access each other's resources (e.g. via unauthenticated SSH or a shared writable filesystem mount).

- Review system statistics and VM isolation and network isolation on data plane.

- Verify that each VM has a distinct MAC address for that interface.

- Verify that VMs' MAC addresses are learned on the data plane switch.

- Stop traffic and delete slivers.
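Since all requests in this plan go through the Omni command line tool, the slice and sliver lifecycle in the procedure above can be sketched as a sequence of Omni invocations. The helper below only builds the argument lists and does not run anything. It is a sketch: the function name is hypothetical, and the aggregate nickname, slice name, and RSpec file name shown in the usage note are placeholders that would come from the tester's own Omni configuration.

```python
def omni_workflow(aggregate, slice_name, rspec_file):
    """Return the Omni command lines for one slice and sliver lifecycle as argv lists."""
    omni = ["omni.py", "-a", aggregate]
    return [
        omni + ["listresources"],                        # review the advertisement RSpec
        ["omni.py", "createslice", slice_name],          # slice is created at the Slice Authority
        omni + ["createsliver", slice_name, rspec_file],
        omni + ["sliverstatus", slice_name],             # confirm resources are ready before login
        omni + ["deletesliver", slice_name],             # cleanup at the end of the test
    ]
```

For example, `omni_workflow("bbn-eg", "egexp2-slice1", "two-vms.rspec")` yields five command lines that could be passed to `subprocess.run` one at a time, with the traffic and isolation checks performed between the `sliverstatus` and `deletesliver` steps.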

EG-EXP-3: ExoGENI Single Site 100 VM Test

This one-site test runs on the BBN ExoGENI rack and includes one experiment to validate compute resource requirements for VMs. The goal of this test is not to validate the ExoGENI limits, but simply to verify that the ExoGENI rack can provide 100 VMs with its worker nodes. This test will evenly distribute the VM requests across the available worker nodes.

Test Topology

This test uses this topology:

Prerequisites

This test has these prerequisites:

- Traffic generation tools may be part of image or installed at experiment runtime.

- Administrative accounts exist for GPO staff on the GPO rack.

- GENI Experimenter1 account exists.

- Baseline Monitoring is in place for the entire GPO site, to ensure that any problems are quickly identified.

Procedure

Do the following:

- Request ListResources from BBN ExoGENI.

- Review ListResources output, and identify available resources.

- Write an RSpec that requests 100 VMs evenly distributed across the worker nodes using the default image.

- Create a slice.

- Create a sliver in the slice, using the RSpec defined in step 3.

- Log into several of the VMs, and send traffic to several other systems.

- Step up traffic rates to verify VMs continue to operate with realistic traffic loads.

- Review system statistics and VM isolation (does not include network isolation)

- Review monitoring statistics and check for resource status for CPU, disk, memory utilization, interface counters, uptime, process counts, and active user counts.

- Verify that several VMs running on the same worker node each have a distinct MAC address on their data plane interface.

- Verify that the MAC addresses of several VMs running on the same worker node are learned on the data plane switch.

- Review monitoring statistics and check for resource status for CPU, disk, memory utilization, interface counters, uptime, process counts, and active user counts.

- Stop traffic and delete sliver.
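The even distribution of 100 VMs across worker nodes called for in this test is simple arithmetic, sketched below. The number of worker nodes is not stated in this plan, so the worker list is a parameter; the function name and worker names are hypothetical.

```python
def distribute_vms(total_vms, workers):
    """Split total_vms across workers so that per-worker counts differ by at most one."""
    if not workers:
        raise ValueError("at least one worker node is required")
    base, extra = divmod(total_vms, len(workers))
    # The first `extra` workers take one additional VM to absorb the remainder.
    return {w: base + (1 if i < extra else 0) for i, w in enumerate(workers)}
```

With a worker count that does not divide 100, some workers carry one more VM than others; the resulting per-worker counts can then be used when writing the request RSpec.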

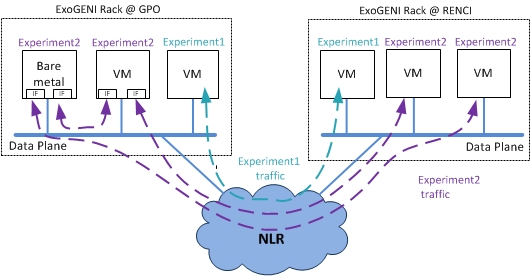

EG-EXP-4: ExoGENI Multi-site Acceptance Test

This test includes two sites and two experiments, using resources in the BBN and RENCI ExoGENI racks. Each of the compute resources will exchange traffic over the GENI core network. In addition, the BBN VM and bare metal resources in Experiment2 will use multiple data interfaces. All site-to-site experiments will take place over a wide-area Layer 2 data plane network connection via NLR using VLANs allocated by the RENCI ExoSM. The goal of this test is to verify basic operations of VMs and data flows between the two racks.

Test Topology

This test uses this topology:

Note: Each of the Experiment2 traffic dashed lines equals a VLAN. The VMs shown may or may not be on different worker nodes.

Prerequisites

This test has these prerequisites:

- BBN ExoGENI connectivity statistics will be monitored at the BBN ExoGENI Monitoring site.

- This test will be scheduled at a time when site contacts are available to address any problems.

- Administrative accounts have been created for GPO staff at each rack.

- The VLANs used will be allocated by the RENCI ExoSM.

- Baseline Monitoring is in place at each site, to ensure that any problems are quickly identified.

- ExoGENI manages private address allocation for the endpoints in this test.

Procedure

Do the following:

- As Experimenter1, request ListResources from RENCI ExoGENI.

- Review ListResources output.

- Define a request RSpec for a VM at BBN ExoGENI, a VM at RENCI ExoGENI and an unbound exclusive non-OpenFlow VLAN to connect the 2 endpoints.

- Create the first slice.

- Create a sliver at the RENCI ExoGENI aggregate using the RSpecs defined above.

- Log in to each of the systems and send traffic to the other system; leave traffic running.

- As Experimenter2, request ListResources from RENCI ExoGENI.

- Define a request RSpec for one VM and one bare metal node each with two interfaces in the BBN ExoGENI rack, two VMs at RENCI, and two VLANs to connect the BBN ExoGENI to the RENCI ExoGENI.

- Create a second slice.

- In the second slice, create a sliver at the RENCI ExoGENI aggregate using the RSpecs defined above.

- Log in to each of the end-point systems, and send traffic to the other end-point system which shares the same VLAN.

- Verify traffic handling per experiment, VM isolation, and MAC address assignment.

- Construct and send a non-IP Ethernet packet over the data plane interface.

- Review baseline monitoring statistics.

- Run test for at least 4 hours.

- Review baseline monitoring statistics.

- Stop traffic and delete slivers.

Note: After a successful test run, this test will be revisited and the procedure will be re-executed as a longevity test for a minimum of 24 hours rather than 4 hours.
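One way to construct the non-IP Ethernet packet mentioned in the procedure is to assemble the frame bytes directly. The sketch below builds an untagged Ethernet II frame using one of the EtherType values that IEEE reserves for local experimental use. The function names and the MAC addresses in the usage note are illustrative assumptions; actually transmitting the frame is platform-specific (on Linux it would typically use a raw `AF_PACKET` socket bound to the data plane interface, which requires root) and is not shown.

```python
import struct

LOCAL_EXPERIMENTAL_ETHERTYPE = 0x88B5  # IEEE 802 Local Experimental EtherType 1


def mac_bytes(mac):
    """Convert a colon-separated MAC string to its 6-byte form."""
    return bytes(int(part, 16) for part in mac.split(":"))


def build_frame(dst_mac, src_mac, payload, ethertype=LOCAL_EXPERIMENTAL_ETHERTYPE):
    """Build an untagged Ethernet II frame (without FCS), padding the payload to 46 bytes."""
    header = mac_bytes(dst_mac) + mac_bytes(src_mac) + struct.pack("!H", ethertype)
    return header + payload.ljust(46, b"\x00")
```

For example, `build_frame("ff:ff:ff:ff:ff:ff", "02:00:00:00:00:01", b"hello")` produces a 60-byte broadcast frame that a packet capture on the receiving endpoint can recognize by its EtherType.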

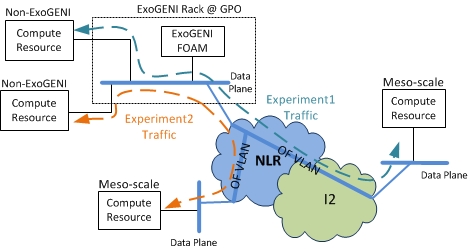

EG-EXP-5: ExoGENI OpenFlow Network Resources Acceptance Test

This is a three-site experiment that uses ExoGENI OpenFlow network resources (no ExoGENI compute resources) and two non-ExoGENI compute resources. The experiment will use the ExoGENI FOAM to configure the ExoGENI site OpenFlow switch. The goal of this test is to verify OpenFlow operations and integration with meso-scale compute resources and other compute resources external to the ExoGENI rack.

Test Topology

Note: The NLR and Internet2 OpenFlow VLANs are the GENI Network Core static VLANs.

Prerequisites

- A GPO site network is connected to the ExoGENI OpenFlow switch

- ExoGENI FOAM server is running and can manage the ExoGENI OpenFlow switch

- Two meso-scale remote sites make compute resources and OpenFlow meso-scale resources available for this test

- This test is scheduled at a time when site contacts are available to address any problems.

- All sites' OpenFlow VLANs are implemented and recorded on the GENI meso-scale network pages for this test. (Current example is meso-scale VLAN 1750.)

- GMOC data collection for the meso-scale and ExoGENI rack resources is functioning for the OpenFlow and traffic measurements required in this test. (This requires only a subset of the Additional Monitoring GMOC Data Collection tests to be successful.)

Procedure

The following operations are to be executed:

- As Experimenter1, determine GPO compute resources and define RSpec.

- Determine remote meso-scale compute resources and define RSpec.

- Define a request RSpec for OF network resources at the BBN ExoGENI FOAM.

- Define a request RSpec for OF network resources at the remote I2 meso-scale site.

- Define a request RSpec for the OpenFlow Core resources

- Create the first slice

- Create a sliver for the GPO compute resources.

- Create a sliver at the I2 meso-scale site using FOAM at site.

- Create a sliver at the BBN ExoGENI FOAM aggregate.

- Create a sliver for the OpenFlow resources in the core.

- Create a sliver for the meso-scale compute resources.

- Log in to each of the compute resources and send traffic to the other end-point.

- Verify that traffic is delivered to target.

- Review baseline, GMOC, and meso-scale monitoring statistics.

- As Experimenter2, determine GPO compute resources and define RSpec.

- Determine remote meso-scale compute resources and define RSpec.

- Define a request RSpec for OF network resources at the BBN ExoGENI FOAM.

- Define a request RSpec for OF network resources at the remote NLR meso-scale site.

- Define a request RSpec for the OpenFlow Core resources

- Create the second slice

- Create a sliver for the GPO compute resources.

- Create a sliver at the meso-scale site using FOAM at site.

- Create a sliver at the BBN ExoGENI FOAM aggregate.

- Create a sliver for the OpenFlow resources in the core.

- Create a sliver for the meso-scale compute resources.

- Log in to each of the compute resources and send traffic to the other endpoint.

- As Experimenter2, insert flowmods and send packet-outs only for traffic assigned to the slivers.

- Verify that traffic is delivered to target according to the flowmods settings.

- Review baseline, GMOC, and monitoring statistics.

- Stop traffic and delete slivers.
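The step in which Experimenter2 inserts flowmods only for traffic assigned to the slivers relies on the notion of a sliver's flowspace. The sketch below is a conceptual model of that membership test, not the FOAM or FlowVisor API: the `FlowSpace` class, the function name, and the example subnet are hypothetical, while VLAN 1750 is the example meso-scale VLAN cited in the prerequisites.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowSpace:
    vlan: int
    subnet: str  # IPv4 subnet assigned to the sliver (hypothetical example: "10.42.11.0/24")


def in_flowspace(space, vlan, src_ip, dst_ip):
    """True if a packet's VLAN and both IP endpoints fall inside the sliver's flowspace."""
    net = ipaddress.ip_network(space.subnet)
    return (
        vlan == space.vlan
        and ipaddress.ip_address(src_ip) in net
        and ipaddress.ip_address(dst_ip) in net
    )
```

A tester could use a check like this when choosing test packets: traffic inside the flowspace should follow the experimenter's flowmods, while traffic outside it should be unaffected by them.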

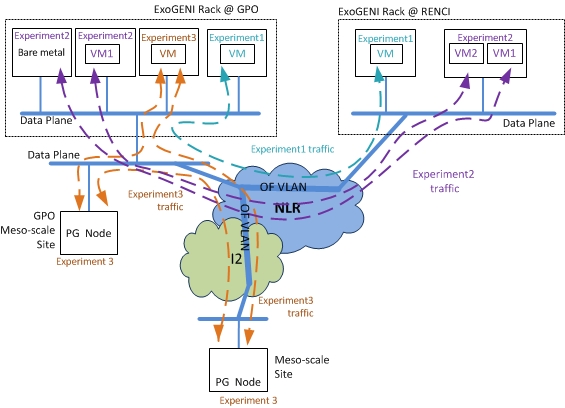

EG-EXP-6: ExoGENI and Meso-scale Multi-site OpenFlow Acceptance Test

This test includes three sites and three experiments, using resources in the GPO and RENCI ExoGENI racks as well as meso-scale resources, where the network resources are the core OpenFlow-controlled VLANs. Each of the compute resources will exchange traffic with the others in its slice, over a wide-area Layer 2 data plane network connection, using NLR and Internet2 VLANs. In particular, the following slices will be set up for this test:

- Slice 1: One ExoGENI VM at each of BBN and RENCI.

- Slice 2: One bare metal ExoGENI node and one ExoGENI VM at BBN; two ExoGENI VMs at RENCI.

- Slice 3: An ExoGENI VM at BBN, a PG node at BBN, and a meso-scale Wide-Area ProtoGENI (WAPG) node.

The goal of this test is to verify ExoGENI rack interoperability with other meso-scale GENI sites.

Test Topology

This test uses this topology:

Note: The NLR and Internet2 OpenFlow VLANs are the GENI Network Core static VLANs.

Note: The two RENCI VMs in Experiment2 must be on the same worker node. This is not the case for other experiments.

Prerequisites

This test has these prerequisites:

- One Meso-scale site with a PG node is available for testing

- BBN ExoGENI connectivity statistics are monitored at the GPO ExoGENI Monitoring site.

- GENI Experimenter1, Experimenter2 and Experimenter3 accounts exist.

- This test is scheduled at a time when site contacts are available to address any problems.

- Both ExoGENI aggregates can link to static VLANs.

- All sites' OpenFlow VLANs are implemented and recorded on the GENI meso-scale network pages for this test. (Current example is meso-scale VLAN 1750.)

- Baseline Monitoring is in place at each site, to ensure that any problems are quickly identified.

- GMOC data collection for the meso-scale and ExoGENI rack resources is functioning for the OpenFlow and traffic measurements required in this test. (This requires only a subset of the Additional Monitoring GMOC Data Collection tests to be successful.)

- An OpenFlow controller is run by the experimenter and is accessible by FlowVisor via DNS hostname (or IP address) and TCP port.

Note: If Utah PG access to OpenFlow is available when this test is executed, a PG node will be added to the third slice. This node is not shown in the diagram above. (This is an optional part of the test.)

Procedure

Do the following:

- As Experimenter1, request ListResources from BBN ExoGENI, RENCI ExoGENI, and FOAM at NLR Site. (The NLR "site" is actually the FOAM for the NLR core meso-scale OpenFlow network, not an actual physical site.)

- Review ListResources output from all AMs.

- Define a request RSpec for a VM at the BBN ExoGENI.

- Define a request RSpec for a VM at the RENCI ExoGENI.

- Define request RSpecs for OpenFlow resources from BBN FOAM to access GENI OpenFlow core resources.

- Define request RSpecs for OpenFlow core resources at NLR FOAM.

- Create the first slice.

- Create a sliver in the first slice at each AM, using the RSpecs defined above.

- Log in to each of the systems and verify IP address assignment. Send traffic to the other system; leave traffic running.

- As Experimenter2, define a request RSpec for one VM and one bare metal node at BBN ExoGENI.

- Define a request RSpec for two VMs on the same worker node at RENCI ExoGENI.

- Define request RSpecs for OpenFlow resources from GPO FOAM to access GENI OpenFlow core resources.

- Define request RSpecs for OpenFlow core resources at NLR FOAM.

- Create a second slice.

- Create a sliver in the second slice at each AM, using the RSpecs defined above.

- Log in to each of the systems in the slice, and send traffic to each of the other systems; leave traffic running.

- As Experimenter3, request ListResources from BBN ExoGENI, GPO FOAM, and FOAM at Meso-scale Site (NLR Site GPO and Internet2 site TBD).

- Review ListResources output from all AMs.

- Define a request RSpec for a VM at the BBN ExoGENI.

- Define a request RSpec for a compute resource at the GPO Meso-scale site.

- Define a request RSpec for a compute resource at a Meso-scale site.

- Define request RSpecs for OpenFlow resources to allow connection from OF BBN ExoGENI to Meso-scale OF sites (GPO and second site TBD) (NLR and I2).

- Create a third slice.

- Create a sliver that connects the Internet2 Meso-scale OpenFlow site to the BBN ExoGENI Site, and the GPO Meso-scale site.

- Log in to each of the compute resources in the slice, configure data plane network interfaces on any non-ExoGENI resources as necessary, and send traffic to each of the other systems; leave traffic running.

- Verify that all three experiments continue to run without impacting each other's traffic, and that data is exchanged over the path along which data is supposed to flow.

- Review baseline, GMOC, and monitoring statistics.

- As site administrator, identify all controllers that the BBN ExoGENI OpenFlow switch is connected to

- As Experimenter3, verify that traffic only flows on the network resources assigned to slivers as specified by the controller.

- Verify that no default controller, switch fail-open behavior, or other resource other than experimenters' controllers, can control how traffic flows on network resources assigned to experimenters' slivers.

- Set the hard and soft timeout of flowtable entries

- Get switch statistics and flowtable entries for slivers from the OpenFlow switch.

- Get layer 2 topology information about slivers in each slice.

- Install flows that match on layer 2 fields and/or layer 3 fields.

- Run test for at least 4 hours.

- Review monitoring statistics and checks as above.

- Stop traffic and delete slivers.
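The step that sets hard and soft timeouts on flowtable entries can be checked against the expected expiry behavior. The sketch below models the timeout semantics of OpenFlow 1.0, assuming that the "soft" timeout in this plan corresponds to the protocol's idle timeout; times are in seconds, a timeout of 0 disables that check, and the function name is hypothetical.

```python
def flow_expired(now, installed_at, last_matched_at, idle_timeout, hard_timeout):
    """Apply OpenFlow 1.0 timeout semantics; a timeout of 0 disables that check."""
    # Hard timeout: the entry is removed a fixed time after installation.
    if hard_timeout and now - installed_at >= hard_timeout:
        return True
    # Idle (soft) timeout: the entry is removed after a period with no matching packets.
    if idle_timeout and now - last_matched_at >= idle_timeout:
        return True
    return False
```

During the test, an entry that still appears in the flowtable statistics after `flow_expired` would return true for it suggests that the switch is not honoring the configured timeouts.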

Documentation check:

- Verify public access to documentation about which OpenFlow match actions can be performed in hardware for the ExoGENI switches.

Additional Administration Acceptance Tests

These tests will be performed as needed after the administration baseline tests complete successfully. For example, the Software Update Test will be performed at least once when the rack team provides new software for testing. We expect these tests to be interspersed with other tests in this plan at times that are agreeable to the GPO and the participants, not just run in a block at the end of testing. The goal of these tests is to verify that sites have adequate documentation, procedures, and tools to satisfy all GENI site requirements.

EG-ADM-3: Full Rack Reboot Test

In this test, a full rack reboot is performed as a drill of a procedure which a site administrator may need to perform for site maintenance.

Procedure

- Review relevant rack documentation about shutdown options and make a plan for the order in which to shut down each component.

- Cleanly shut down and/or hard-power-off all devices in the rack, and verify that everything in the rack is powered down.

- Power on all devices, bring all logical components back online, and use monitoring and comprehensive health tests to verify that the rack is healthy again.

EG-ADM-4: Emergency Stop Test

In this test, an Emergency Stop drill is performed on a sliver in the rack.

Prerequisites

- GMOC's updated Emergency Stop procedure is approved and published on a public wiki.

- ExoGENI's procedure for performing a shutdown operation on any type of sliver in an ExoGENI rack is published on a public wiki or on a protected wiki that all ExoGENI site administrators (including GPO) can access.

- An Emergency Stop test is scheduled at a convenient time for all participants and documented in GMOC ticket(s).

- A test experiment is running that involves a slice with connections to at least one ExoGENI rack compute resource.

Procedure

- A site administrator reviews the Emergency Stop and sliver shutdown procedures, and verifies that these two documents combined fully document the campus side of the Emergency Stop procedure.

- A second administrator (or the GPO) submits an Emergency Stop request to GMOC, referencing activity from a public IP address assigned to a compute sliver in the rack that is part of the test experiment.

- GMOC and the first site administrator perform an Emergency Stop drill in which the site administrator successfully shuts down the sliver in coordination with GMOC.

- GMOC completes the Emergency Stop workflow, including updating/closing GMOC tickets.

EG-ADM-5: Software Update Test

In this test, we update software on the rack as a test of the software update procedure.

Prerequisites

Minor updates of system RPM packages, ExoGENI local AM software, and FOAM are available to be installed on the rack. This test may need to be scheduled to take advantage of a time when these updates are available.

Procedure

- A BBN site administrator reviews the procedure for performing software updates of GENI and non-GENI software on the rack. If there is a procedure for updating any version tracking documentation (e.g. a wiki page) or checking any version tracking tools, the administrator reviews that as well.

- Following that procedure, the administrator performs minor software updates on rack components, including as many as possible of the following (depending on availability of updates):

- At least one update of a standard (non-GENI) RPM package on each of the head node and a worker node

- An update of ExoGENI local AM software

- An update of FOAM software

- The admin confirms that the software updates completed successfully

- The admin updates any appropriate version tracking documentation or runs appropriate tool checks indicated by the version tracking procedure.
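To confirm that the updates completed, an administrator might compare package inventories taken before and after the update. The sketch below assumes each inventory is text with one `name version-release` pair per line, for example as produced by `rpm -qa --queryformat '%{NAME} %{VERSION}-%{RELEASE}\n'`. The function names are hypothetical, and packages installed in several versions at once (such as kernels) are collapsed to a single entry.

```python
def parse_inventory(text):
    """Parse lines of 'name version-release' into a dict keyed by package name."""
    packages = {}
    for line in text.splitlines():
        if line.strip():
            name, version = line.split(None, 1)
            packages[name] = version.strip()
    return packages


def updated_packages(before_text, after_text):
    """Map each new or changed package to a (old_version, new_version) pair."""
    before, after = parse_inventory(before_text), parse_inventory(after_text)
    return {
        name: (before.get(name), ver)
        for name, ver in after.items()
        if before.get(name) != ver
    }
```

The resulting mapping can be pasted into whatever version tracking documentation the site maintains; an old version of `None` indicates a newly installed package.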

EG-ADM-6: Control Network Disconnection Test

In this test, we disconnect parts of the rack control network or its dependencies to test partial rack functionality in an outage situation.

Procedure

- Simulate an outage of geni.renci.org by inserting a firewall rule on the GPO router blocking the rack from reaching it. Verify that an administrator can still access the rack, that rack monitoring to GMOC continues through the outage, and that some experimenter operations still succeed.

- Simulate an outage of each of the rack head node and management switch by disabling their respective interfaces on the GPO's control network switch. Verify that GPO, ExoGENI, and GMOC monitoring all see the outage.

EG-ADM-7: Documentation Review Test

Although this is not a single test per se, this section lists required documents that the rack teams will write. Draft documents should be delivered prior to testing of the functional areas to which they apply. Final documents must be delivered before Spiral 4 site installations at non-developer sites. Final documents will be public, unless there is some specific reason a particular document cannot be public (e.g. a security concern from a GENI rack site).

Procedure

Review each required document listed below, and verify that:

- The document has been provided in a public location (e.g. the GENI wiki, or any other public website)

- The document contains the required information.

- The documented information appears to be accurate.

Note: this tests only the documentation, not the rack behavior which is documented. Rack behavior related to any or all of these documents may be tested elsewhere in this plan.

Documents to review:

- Pre-installation document that lists specific minimum requirements for all site-provided services for potential rack sites (e.g. space, number and type of power plugs, number and type of power circuits, cooling load, public addresses, NLR or Internet2 layer2 connections, etc.). This document should also list all standard expected rack interfaces (e.g. 10GBE links to at least one research network).

- Summary GENI Rack parts list, including vendor part numbers for "standard" equipment intended for all sites (e.g. a VM server) and per-site equipment options (e.g. transceivers, PDUs etc.), if any. This document should also indicate approximately how much headroom, if any, remains in the standard rack PDUs' power budget to support other equipment that sites may add to the rack.

- Procedure for identifying the software versions and system file configurations running on a rack, and how to get information about recent changes to the rack software and configuration.

- Explanation of how and when software and OS updates can be performed on a rack, including plans for notification and update if important security vulnerabilities in rack software are discovered.

- Description of the GENI software running on a standard rack, and explanation of how to get access to the source code of each piece of standard GENI software.

- Description of all the GENI experimental resources within the rack, and what policy options exist for each, including: how to configure rack nodes as bare metal vs. VM server, what options exist for configuring automated approval of compute and network resource requests and how to set them, how to configure rack aggregates to trust additional GENI slice authorities, and whether it is possible to trust local users within the rack.

- Description of the expected state of all the GENI experimental resources in the rack, including how to determine the state of an experimental resource and what state is expected for an unallocated bare metal node.

- Procedure for creating new site administrator and operator accounts.

- Procedure for changing IP addresses for all rack components.

- Procedure for cleanly shutting down an entire rack in case of a scheduled site outage.

- Procedure for performing a shutdown operation on any type of sliver on a rack, in support of an Emergency Stop request.

- Procedure for performing comprehensive health checks for a rack (or, if those health checks are being run automatically, how to view the current/recent results).

- Technical plan for handing off primary rack operations to site operators at all sites.

- Per-site documentation. This documentation should be prepared before sites are installed and kept updated after installation to reflect any changes or upgrades after delivery. Text, network diagrams, wiring diagrams and labeled photos are all acceptable for site documents. Per-site documentation should include the following items for each site:

- Part numbers and quantities of PDUs, with NEMA input power connector types, and an inventory of which equipment connects to which PDU.

- Physical network interfaces for each control and data plane port that connects to the site's existing network(s), including type, part numbers, maximum speed etc. (e.g. 10-GB-SR fiber).

- Public IP addresses allocated to the rack, including: number of distinct IP ranges and size of each range, hostname to IP mappings which should be placed in site DNS, whether the last-hop routers for public IP ranges sit within the rack or elsewhere on the site, and what firewall configuration is desired for the control network.

- Data plane network connectivity and procedures for each rack, including core backbone connectivity and documentation, switch configuration options to set for compatibility with the L2 core, and the site and rack procedures for connecting non-rack-controlled VLANs and resources to the rack data plane. A network diagram is highly recommended (see existing OpenFlow meso-scale network diagrams on the GENI wiki for examples).

Additional Monitoring Acceptance Tests

These tests will be performed as needed after the monitoring baseline tests complete successfully. For example, the GMOC data collection test will be performed during the ExoGENI Network Resources Acceptance test, where we already use the GMOC for meso-scale OpenFlow monitoring. We expect these tests to be interspersed with other tests in this plan at times that are agreeable to the GPO and the participants, not just run in a block at the end of testing. The goal of these tests is to verify that sites have adequate tools to view and share GENI rack data that satisfies all GENI monitoring requirements.

EG-MON-4: Infrastructure Device Performance Test

This test verifies that the rack head node performs well enough to run all the services it needs to run.

Procedure

While experiments involving FOAM-controlled OpenFlow slivers and compute slivers are running:

- View OpenFlow control monitoring at GMOC and verify that no monitoring data is missing

- View VLAN 1750 data plane monitoring, which pings the rack's interface on VLAN 1750, and verify that packets are not being dropped

- Verify that the CPU idle percentage on the head node is nonzero.
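The CPU idle check can be made concrete on a Linux head node by sampling the aggregate `cpu` line of `/proc/stat` twice and comparing the counters. The sketch below is one possible approach, not the method prescribed by this plan. It assumes the standard field order (user, nice, system, idle, iowait, irq, softirq, steal) and counts only the `idle` field as idle time. The function names are hypothetical.

```python
def read_cpu_line(path="/proc/stat"):
    """Return the aggregate 'cpu' line from /proc/stat (Linux only)."""
    with open(path) as stat_file:
        return stat_file.readline()


def parse_cpu_line(line):
    """Return the first eight counters of an aggregate 'cpu' line as ints."""
    fields = line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line")
    # Guest time is already included in user time, so stop after 'steal'.
    return [int(value) for value in fields[1:9]]


def idle_percentage(first_sample, second_sample):
    """Percentage of CPU time spent idle between two 'cpu' line samples."""
    first = parse_cpu_line(first_sample)
    second = parse_cpu_line(second_sample)
    total = sum(second) - sum(first)
    if total <= 0:
        raise ValueError("samples must be taken at different times, in order")
    idle = second[3] - first[3]  # index 3 is the 'idle' counter
    return 100.0 * idle / total
```

In practice the two samples would be taken some seconds apart with `read_cpu_line()` while the experiments are running, and the test step passes if the returned value is greater than zero.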

EG-MON-5: GMOC Data Collection Test

This test verifies the rack's submission of monitoring data to GMOC.

Note: This test relies on a GMOC API and data definitions which are under development. Availability of the appropriate API and definitions is a prerequisite for submitting each type of data. We expect ExoGENI will be able to submit a small number of operational data items by GEC14.

Procedure

View the dataset collected at GMOC for the BBN and RENCI ExoGENI racks. For each piece of required data, attempt to verify that:

- The data is being collected and accepted by GMOC and can be viewed at gmoc-db.grnoc.iu.edu

- The data's "site" tag indicates that it is being reported for the rack located at the gpolab or RENCI site (as appropriate for that rack).

- The data has been reported within the past 10 minutes.

- For each piece of data, either verify that it is being collected at least once a minute, or verify that it requires more complicated processing than a simple file read to collect, and thus can be collected less often.
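The two timing criteria above (reported within the past 10 minutes, collected at least once a minute) can be checked mechanically once the collection timestamps for a data item are available. The following is a minimal sketch that assumes the timestamps have already been retrieved from GMOC as Python `datetime` objects. How they are retrieved is outside the sketch, and the function names are hypothetical.

```python
from datetime import datetime, timedelta

MAX_REPORT_AGE = timedelta(minutes=10)  # "reported within the past 10 minutes"
MAX_SAMPLE_GAP = timedelta(minutes=1)   # "collected at least once a minute"


def is_fresh(timestamps, now):
    """True if the most recent sample is no older than MAX_REPORT_AGE."""
    return bool(timestamps) and now - max(timestamps) <= MAX_REPORT_AGE


def collected_every_minute(timestamps):
    """True if no two consecutive samples are more than MAX_SAMPLE_GAP apart.

    Fewer than two samples cannot demonstrate a collection interval,
    so that case is reported as False.
    """
    ordered = sorted(timestamps)
    if len(ordered) < 2:
        return False
    return all(later - earlier <= MAX_SAMPLE_GAP
               for earlier, later in zip(ordered, ordered[1:]))
```

A data item that fails `collected_every_minute` is not automatically a test failure: the procedure allows less frequent collection for data that needs more than a simple file read to gather.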

Verify that the following pieces of data are being reported:

- Is each of the rack ExoGENI and FOAM AMs reachable via the GENI AM API right now?

- Is each compute or unbound VLAN resource at each rack AM online? Is it available or in use?

- Sliver count and percentage of compute and unbound VLAN resources in use for the rack SM.

- Identities of current slivers on each rack AM, including creation time for each.

- Per-sliver interface counters for compute and VLAN resources (where these values can be easily collected).

- Is the rack data plane switch online?

- Interface counters and VLAN memberships for each rack data plane switch interface

- MAC address table contents for shared VLANs which appear on rack data plane switches

- Is each rack worker node online?

- For each rack worker node configured as an OpenStack VM server, overall CPU, disk, and memory utilization for the host, current VM count and total VM capacity of the host.

- For each rack worker node configured as an OpenStack VM server, interface counters for each data plane interface.

- Results of at least one end-to-end health check which simulates an experimenter reserving and using at least one resource in the rack.

Verify that per-rack or per-aggregate summaries are collected of the count of distinct users who have been active on the rack, either by providing raw sliver data containing sliver users to GMOC, or by collecting data locally and producing trending summaries on demand.

Test Methodology and Reporting

Test Case Execution

- All test procedure steps will be executed until there is a blocking issue.

- If a blocking issue is found for a test case, testing will be stopped for that test case.

- Testing focus will shift to another test case while waiting for a solution to a blocking issue.

- If a non-blocking issue is found, testing will continue toward completion of the procedure.

- When a software resolution or workaround is available for a blocking issue, the test impacted by the issue is re-executed until it can be completed successfully.

- Supporting documentation will be used whenever available.

- Questions that are not answered by existing documentation will be gathered during the acceptance testing and published on the GENI wiki for followup.

Issue Tracking

- All issues discovered in acceptance testing regardless of priority are to be tracked in a bug tracking system.

- The ExoGENI rack team should propose a bug tracking system that the team, the GPO, and the GMOC can access to enter or respond to bugs. Viewing for GENI bugs should be public. (We will use the groups.geni.net ticket system if there is no better proposal from the ExoGENI team.)

- All types of issues encountered (documentation error, software bug, missing features, missing documentation, etc.) will be tracked.

- All unresolved issues will be reviewed and published at the end of the acceptance test as part of the acceptance test report.

- An initial response to a logged bug should happen within 24 hours (excepting weekend and holiday hours). An initial response does not require resolution, but should at minimum include a comment/question and indicate who is working on the bug.
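The 24-hour initial response window can be turned into a concrete deadline for each logged bug. The sketch below reads "excepting weekend and holiday hours" as meaning the clock pauses on Saturdays and Sundays, which is an interpretation rather than something this plan spells out. Holidays are not modeled at all, and the function name is hypothetical.

```python
from datetime import datetime, timedelta


def response_deadline(logged_at, allowance=timedelta(hours=24)):
    """Return when the initial response is due, pausing the clock on weekends.

    Assumption: Saturday and Sunday hours do not count toward the allowance.
    Holidays are ignored in this sketch.
    """
    current = logged_at
    remaining = allowance
    while remaining > timedelta(0):
        next_midnight = datetime.combine(
            current.date() + timedelta(days=1), datetime.min.time())
        if current.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
            current = next_midnight  # skip the rest of the weekend day
            continue
        step = min(remaining, next_midnight - current)
        current += step
        remaining -= step
    return current
```

For a bug logged on a Friday afternoon, this yields a deadline at the same time on the following Monday.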

Status Updates and Reporting

- A periodic status update will be generated as the acceptance test plan is being executed.

- A periodic (once per business day) status update will be posted to the rack team mail list (exogeni-design@geni.net).

- Upon acceptance test completion, all findings and unresolved issues will be captured in an acceptance test report.

- Supporting configuration and RSpecs used in testing will be part of the public acceptance test report, except where site security or privacy concerns require holding back that information.

Test Case Naming

The test cases in this plan follow the naming convention EG-XXX-Y, where EG stands for ExoGENI and XXX is one of the following: ADM for Administrative, EXP for Experimenter, or MON for Monitoring. The final component, Y, is the test case number.
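The naming convention is regular enough to validate automatically, for example when cross-referencing test identifiers in reports or tickets. The following is a small sketch. The pattern, the lookup table, and the function name are illustrative only, and the assumption that test case numbers are positive integers without leading zeros is inferred from the identifiers used in this plan.

```python
import re

# EG-<area>-<number>, where the area is ADM, EXP, or MON.
TEST_ID_PATTERN = re.compile(r"EG-(ADM|EXP|MON)-([1-9][0-9]*)")

AREA_NAMES = {
    "ADM": "Administrative",
    "EXP": "Experimenter",
    "MON": "Monitoring",
}


def parse_test_id(test_id):
    """Split an identifier such as 'EG-ADM-5' into (area name, number)."""
    match = TEST_ID_PATTERN.fullmatch(test_id)
    if match is None:
        raise ValueError("not a valid test case name: %r" % test_id)
    return AREA_NAMES[match.group(1)], int(match.group(2))
```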

Requirements Validation

This acceptance test plan verifies Integration (C), Monitoring (D), Experimenter (G) and Local Aggregate (F) requirements. As part of the test planning process, the GPO Infrastructure group mapped each of the GENI Rack Requirements to a set of validation criteria. For a detailed look at the validation criteria see the GENI Racks Acceptance Criteria page.

This plan does not validate any Software (B) requirements, as they are validated by the GPO Software team's GENI AM API Acceptance tests suite.

Some requirements are not verified in this test plan:

- C.2.a "Support at least 100 simultaneous active (e.g. actually passing data) layer 2 Ethernet VLAN connections to the rack. For this purpose, VLAN paths must terminate on separate rack VMs, not on the rack switch."

- Production Aggregate Requirements (E)

Glossary

The following is a glossary of terminology used in this plan. For additional terminology definitions, see the GENI Glossary page.

- Local Broker - An ORCA Broker provides the coordinating function needed to create slices. The rack's ORCA AM delegates a portion of the local resources to one or more brokers. Each rack has an ORCA AM that delegates resources to a local broker (for coordinating intra-rack resource allocations of compute resources and VLANs) and to the global broker.

- ORCA Actors - ExoGENI Site Authorities and Brokers which can communicate with each other. An actor requires ExoGENI Operations staff approval in order to start communications with other actors.

- ORCA Actor Registry - A secure service that allows distributed ExoGENI ORCA Actors to recognize each other and create security associations in order for them to communicate. It runs at the Actor Registry web page, which lists all active ORCA Actors.

- ORCA Aggregate Manager (AM) - An ORCA resource provider that handles requests for resources via the ORCA SM and coordinates brokers to delegate resources. The ORCA Aggregate Manager is not the same as the GENI Aggregate Manager.

- Site or Rack Service Manager (SM) - Exposes the ExoGENI Rack GENI AM API interface and the native XMLRPC interface to handle experimenter resource requests. The site SM receives requests from brokers (tickets) and redeems tickets with the ORCA AM. All Acceptance tests defined in this plan interact with a Service Manager (Site SM or the global ExoSM) using the GENI AM API interface via the Omni tool.

- ExoSM - A global ExoGENI Service Manager that provides access to resources from multiple ExoGENI racks and intermediate network providers. The ExoSM supports GENI AM API interactions.

- ORCA RSpec/NDL conversion service - A service running at RENCI which is used by all ORCA SMs to convert RSpec requests to NDL and NDL Manifests to RSpec.

- People:

- Experimenter: A person accessing the rack using a GENI credential and the GENI AM API.

- Administrator: A person who has fully-privileged access to, and responsibility for, the rack infrastructure (servers, network devices, etc) at a given location.

- Operator: A person who has unprivileged/partially-privileged access to the rack infrastructure at a given location, and has responsibility for one or a few particular functions.

- Baseline Monitoring: Set of monitoring functions which show aggregate health for VMs and switches and their interface status, and traffic counts for interfaces and VLANs. Baseline monitoring includes resource availability and utilization.

- Experimental compute resources:

- VM: An experimental compute resource which is a virtual machine located on a physical machine in the rack.

- Bare metal Node: An experimental exclusive compute resource which is a physical machine usable by experimenters without virtualization.

- Compute Resource: Either a VM or a bare metal node.

- Experimental compute resource components:

- logical interface: A network interface seen by a compute resource (e.g. a distinct listing in ifconfig output). May be provided by a physical interface, or by virtualization of an interface.

- Experimental network resources:

- VLAN: A data plane VLAN, which may or may not be OpenFlow-controlled.

- Bound VLAN: A VLAN which an experimenter requests by specifying the desired VLAN ID. (If the aggregate is unable to provide access to that numbered VLAN or to another VLAN which is bridged to the numbered VLAN, the experimenter's request will fail.)

- Unbound VLAN: A VLAN which an experimenter requests without specifying a VLAN ID. (The aggregate may provide any available VLAN to the experimenter.)

- Exclusive VLAN: A VLAN which is provided for the exclusive use of one experimenter.

- Shared VLAN: A VLAN which is shared among multiple experimenters.

We make the following assumptions about experimental network resources for these tests:

- Unbound VLANs are always exclusive.

- Bound VLANs may be either exclusive or shared, and this is determined on a per-VLAN basis and configured by operators, including in some cases operators outside GENI.

- Shared VLANs are always OpenFlow-controlled, with OpenFlow providing the slicing between experimenters who have access to the VLAN.

- If a VLAN provides an end-to-end path between multiple aggregates or organizations, it is considered "shared" if it is shared anywhere along its L2 path, even if only one experimenter can access the VLAN at some particular aggregate or organization (for whatever reason).
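These assumptions can be written down as a small data model, which makes it easy to spot a VLAN description that contradicts them. The sketch below is illustrative only. The class, field, and function names are hypothetical and do not correspond to any GENI or ORCA data structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VlanSegment:
    """One aggregate's or organization's view of a data plane VLAN."""
    bound: bool                 # requested by specifying a VLAN ID
    shared: bool                # shared among multiple experimenters
    openflow_controlled: bool


def assumption_violations(segment):
    """Return the assumptions from this plan that the segment contradicts."""
    problems = []
    if not segment.bound and segment.shared:
        problems.append("unbound VLANs are always exclusive")
    if segment.shared and not segment.openflow_controlled:
        problems.append("shared VLANs are always OpenFlow-controlled")
    return problems


def path_is_shared(segments):
    """An end-to-end VLAN is 'shared' if any segment along its path is."""
    return any(segment.shared for segment in segments)
```

For example, a path made of one exclusive segment and one shared OpenFlow-controlled segment is treated as shared end to end.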
