Part I: Obtain Resources: create a slice and reserve resources

Instructions

1. Create a slice

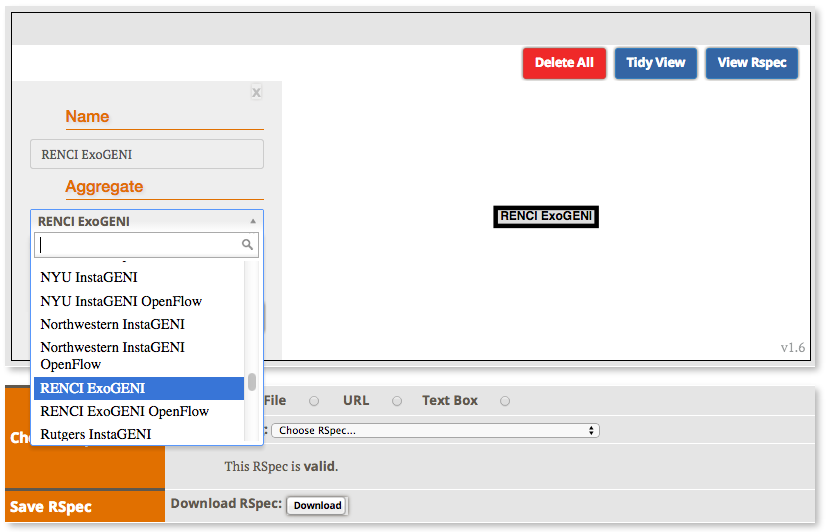

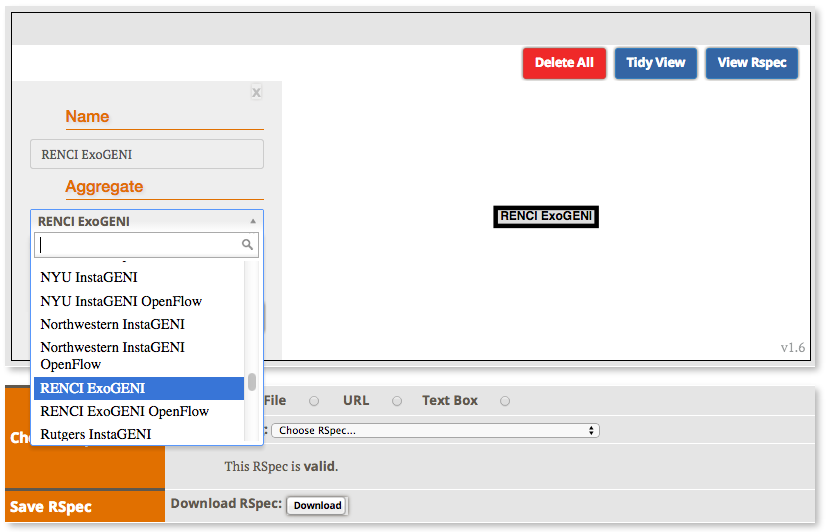

2. Bind the Slice

- Click "Site 0" in the Jacks window.

- Select an ExoGENI site. (If you are participating in an organized tutorial, bind the VMs to the rack assigned to you.)

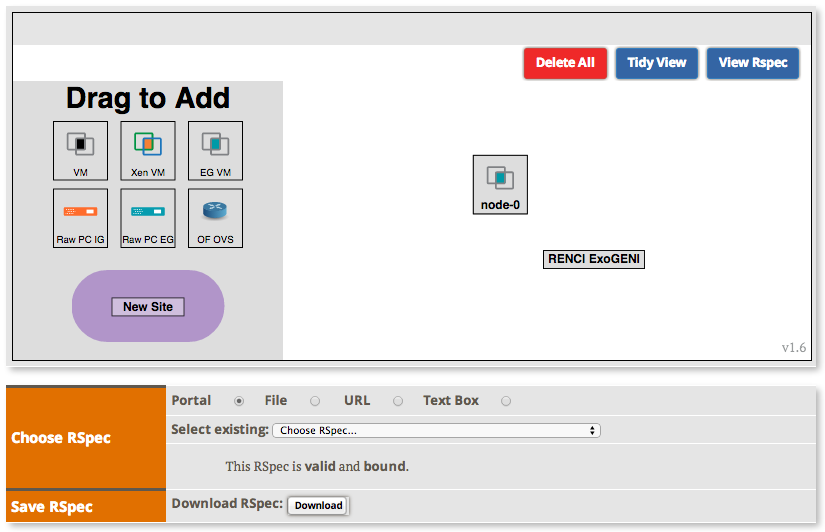

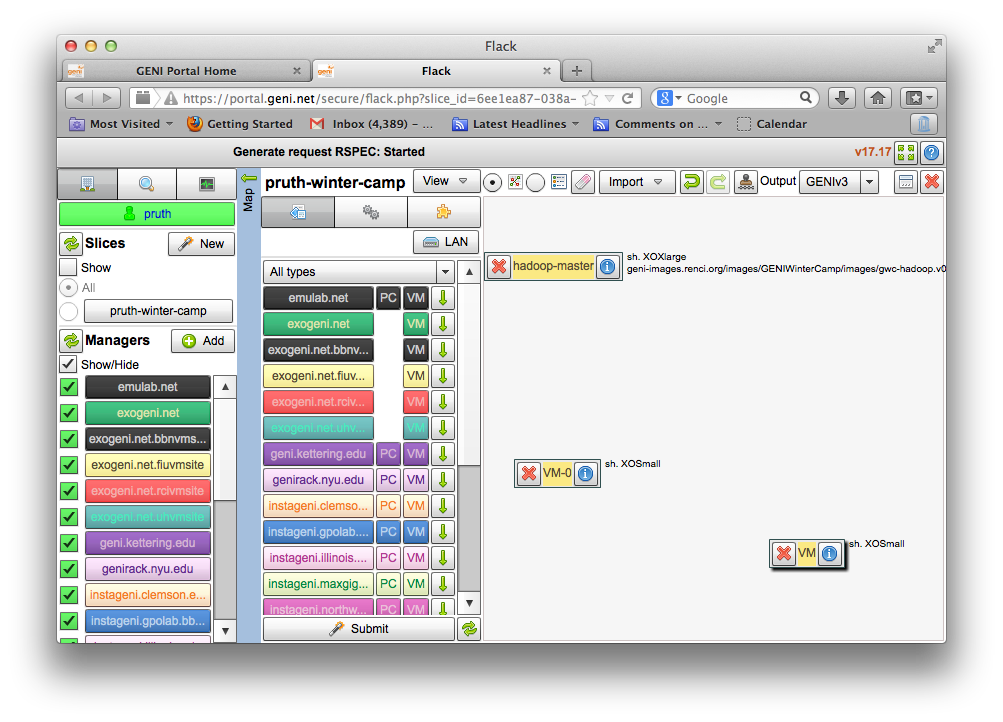

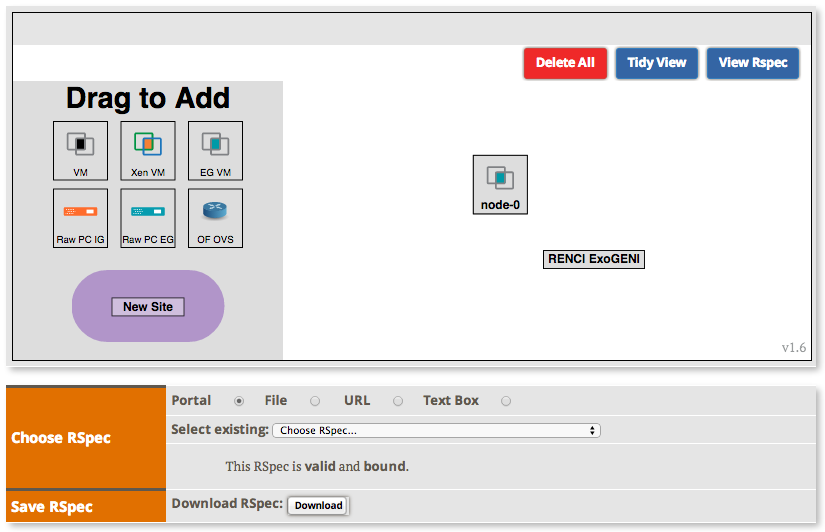

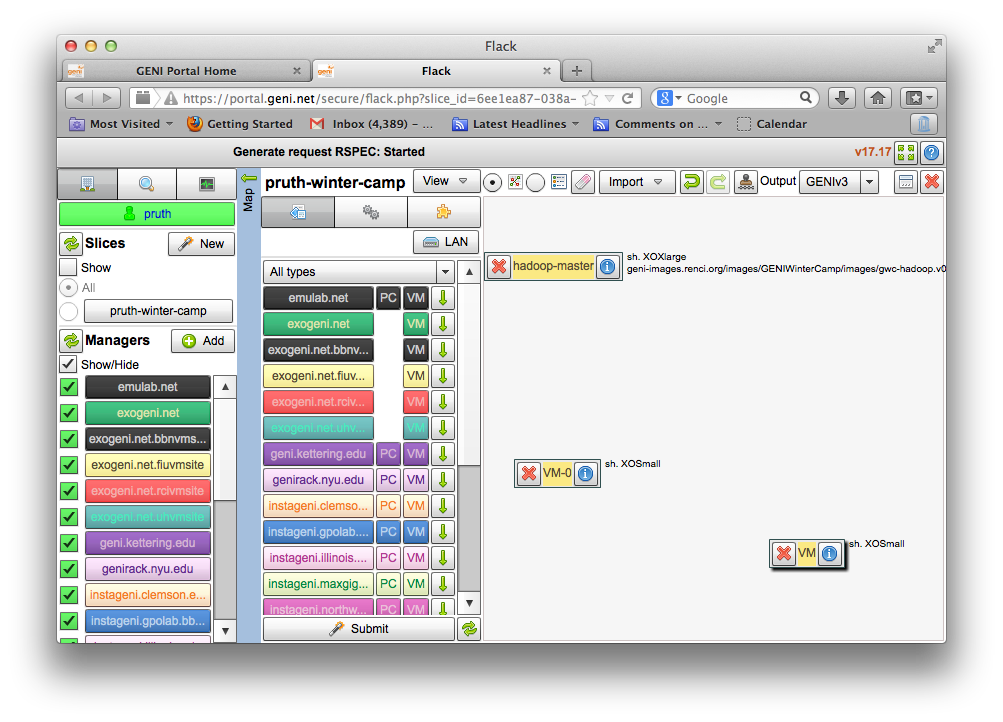

3. Create the Hadoop Master

- Add an ExoGENI VM to your slice.

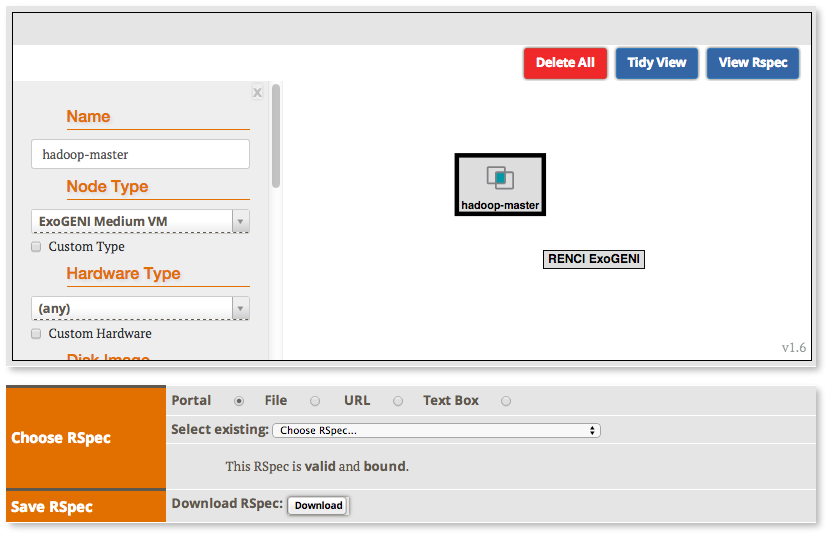

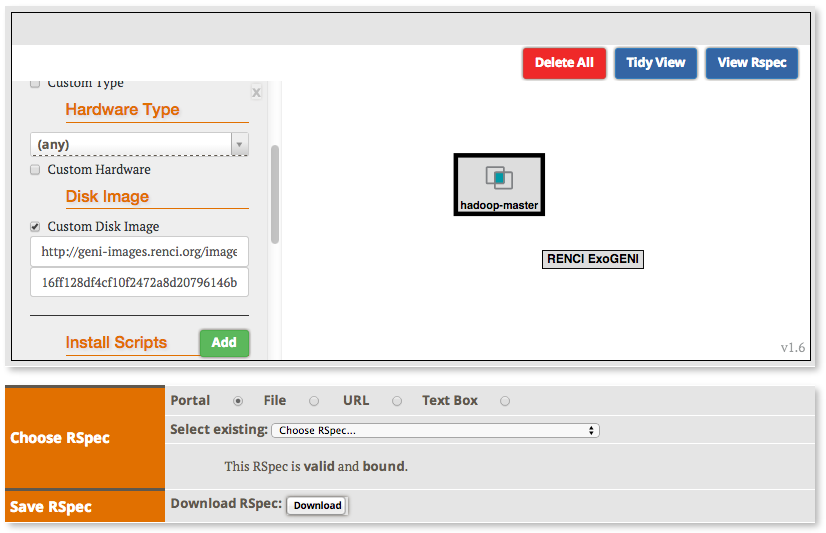

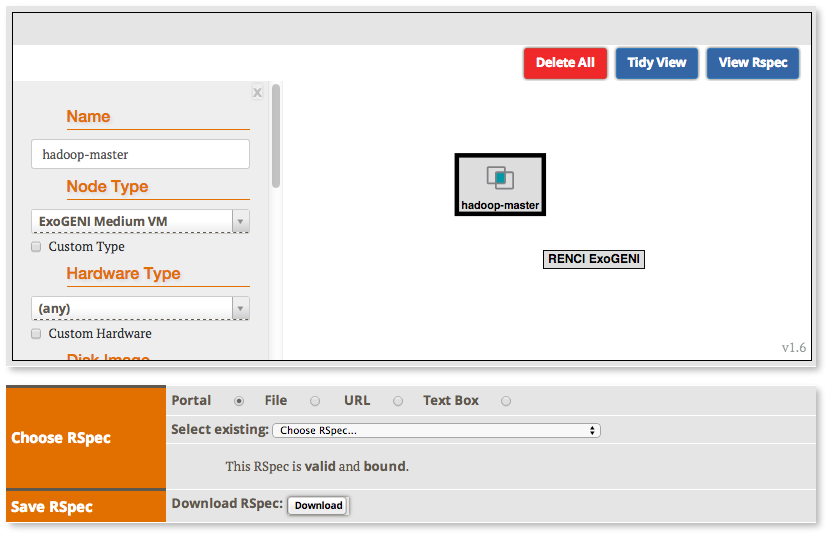

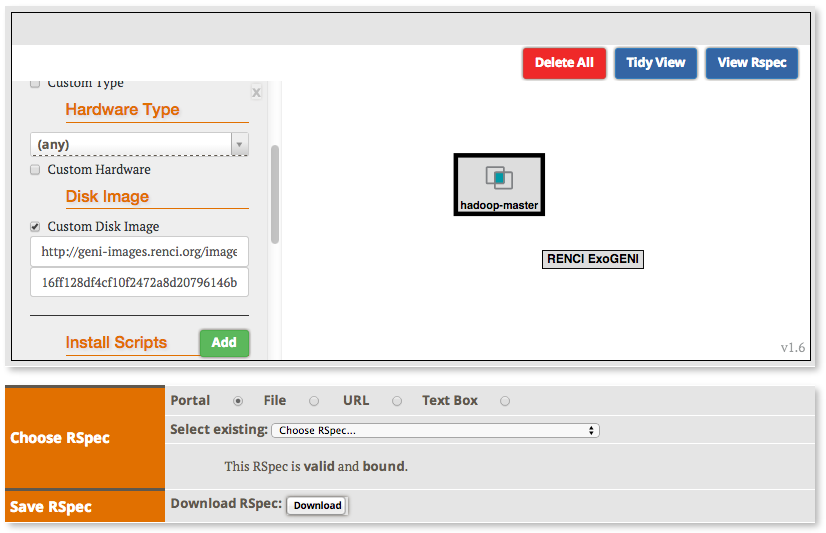

- Select the VM to set its properties:

- Name: hadoop-master

- Node Type: ExoGENI Medium

- Custom Disk Image Name: http://geni-images.renci.org/images/GENIWinterCamp/images/gwc-hadoop.v0.4a.xml

- Disk Image Version: 16ff128df4cf10f2472a8d20796146bcd5a5ddc3
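The Disk Image Version value has the shape of a SHA-1 digest (40 hexadecimal characters). The sketch below assumes, without confirmation from this page, that the value is the SHA-1 hash of the XML image descriptor named in Custom Disk Image Name. The helper names `looks_like_sha1` and `descriptor_matches` are hypothetical and only illustrate how a copied value could be sanity-checked before pasting it into the form.

```python
import hashlib
import string

# Value copied from the step above.
EXPECTED_VERSION = "16ff128df4cf10f2472a8d20796146bcd5a5ddc3"


def looks_like_sha1(value: str) -> bool:
    """Return True if the value is 40 hexadecimal characters."""
    return len(value) == 40 and all(c in string.hexdigits for c in value)


def descriptor_matches(descriptor_bytes: bytes, expected: str = EXPECTED_VERSION) -> bool:
    """Compare the SHA-1 of the descriptor contents with the expected version.

    Assumption: the version string is the SHA-1 of the XML descriptor file.
    """
    return hashlib.sha1(descriptor_bytes).hexdigest() == expected.lower()


if __name__ == "__main__":
    print("well-formed:", looks_like_sha1(EXPECTED_VERSION))
```

To use `descriptor_matches`, pass it the raw bytes of the descriptor file after downloading it yourself; the sketch deliberately performs no network access.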

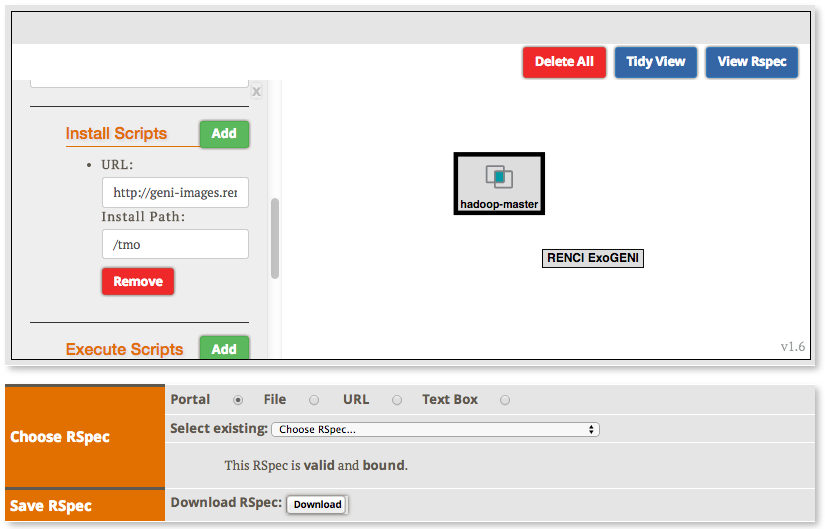

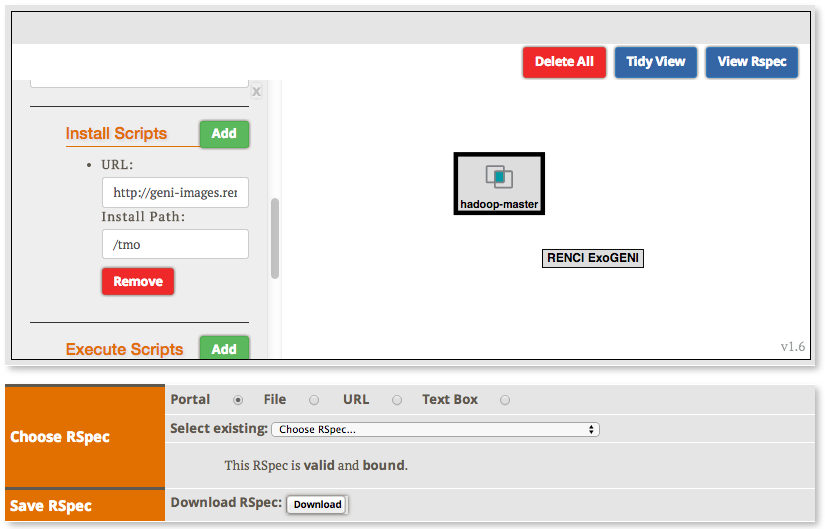

- Add an Install Script:

URL: http://geni-images.renci.org/images/GENIWinterCamp/master.sh

Path: /tmp

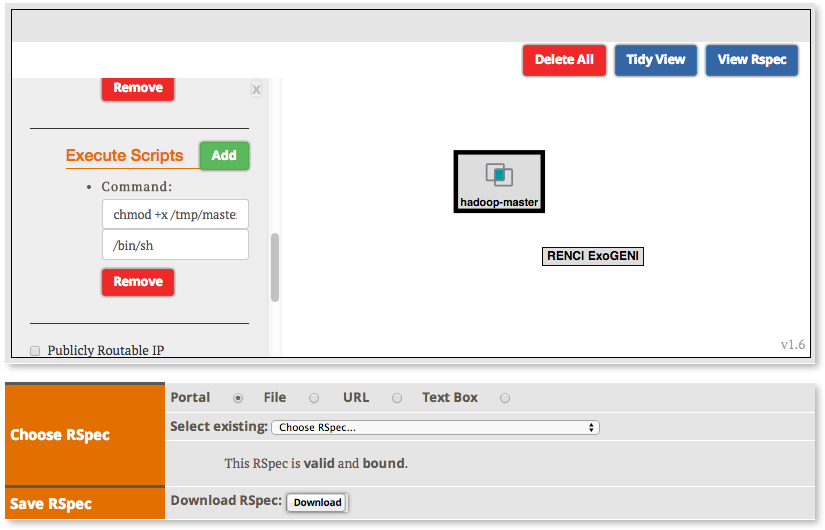

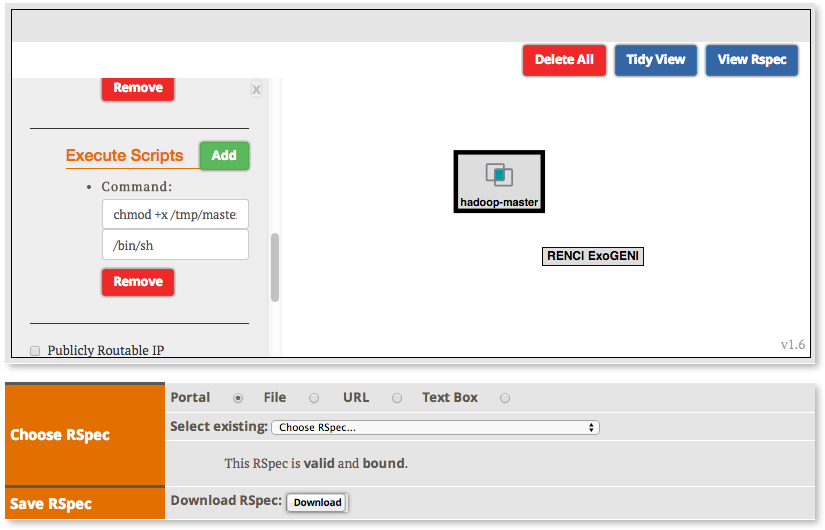

- Add an execute service to execute the script at boot time:

chmod +x /tmp/master.sh; /tmp/master.sh

3. Create the Hadoop Workers

- Add 2 more VMs to the same rack as the first VM.

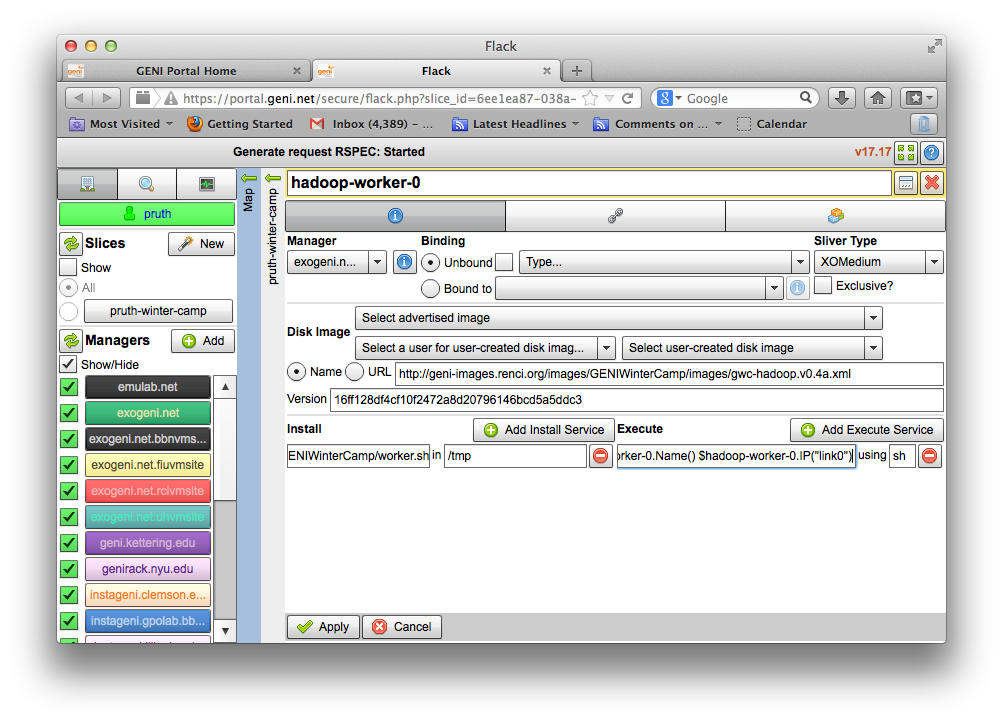

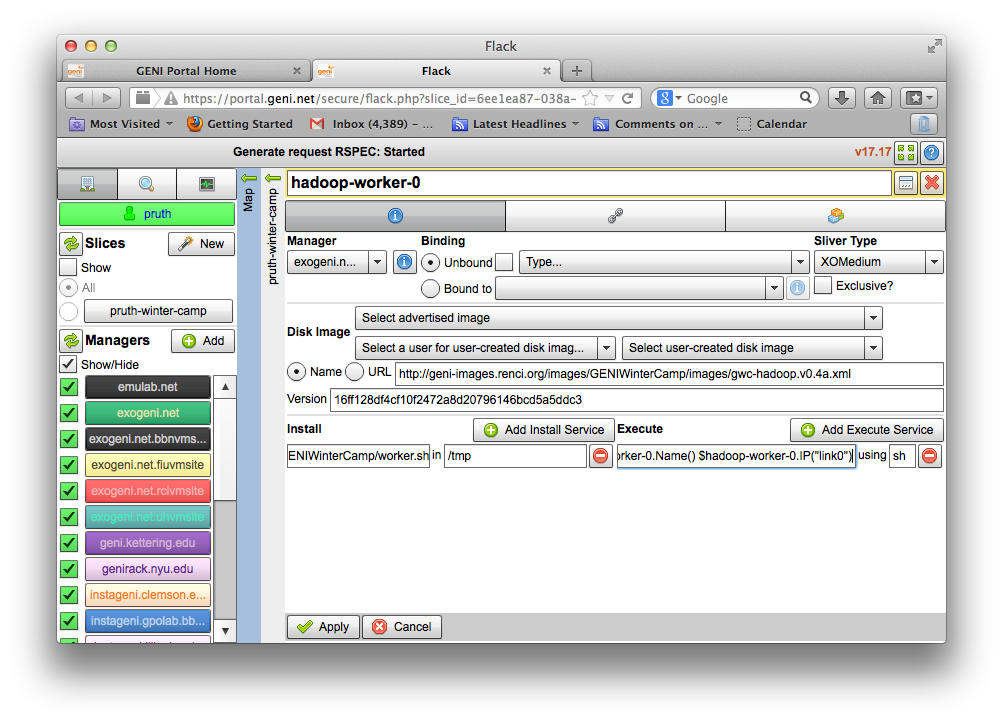

- Edit each worker’s attributes:

- Names: hadoop-worker-0 and hadoop-worker-1

- Sliver Type: XOMedium

- Disk Image Name: http://geni-images.renci.org/images/GENIWinterCamp/images/gwc-hadoop.v0.4a.xml

- Disk Image Version: 16ff128df4cf10f2472a8d20796146bcd5a5ddc3

- Add an install service to install the following script in /tmp:

http://geni-images.renci.org/images/GENIWinterCamp/worker.sh

- Add an execute service to execute the script at boot time. For each VM, substitute the VM’s name wherever the following command uses “hadoop-worker-0”. (Note: the command must be entered on a single line.)

chmod +x /tmp/worker.sh; /tmp/worker.sh $hadoop-master.Name() $hadoop-master.IP("link0") $hadoop-worker-0.Name() $hadoop-worker-0.IP("link0")
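Because the execute command must be repeated for every worker with only the VM name changed, it is easy to mistype. The following is a minimal sketch that builds the single-line command for each worker from the template above. The names `TEMPLATE`, `worker_command`, and `WORKERS` are illustrative and not part of any GENI tool.

```python
# Command from the step above; "{worker}" marks where the VM name is substituted.
TEMPLATE = (
    "chmod +x /tmp/worker.sh; /tmp/worker.sh "
    '$hadoop-master.Name() $hadoop-master.IP("link0") '
    '${worker}.Name() ${worker}.IP("link0")'
)

# Worker names used in this tutorial.
WORKERS = ["hadoop-worker-0", "hadoop-worker-1"]


def worker_command(worker_name: str) -> str:
    """Return the one-line execute command for the given worker VM."""
    return TEMPLATE.format(worker=worker_name)


if __name__ == "__main__":
    for name in WORKERS:
        print(f"{name}:")
        print(f"  {worker_command(name)}")
```

Each printed command is a single line, ready to paste into the execute service field of the corresponding worker.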

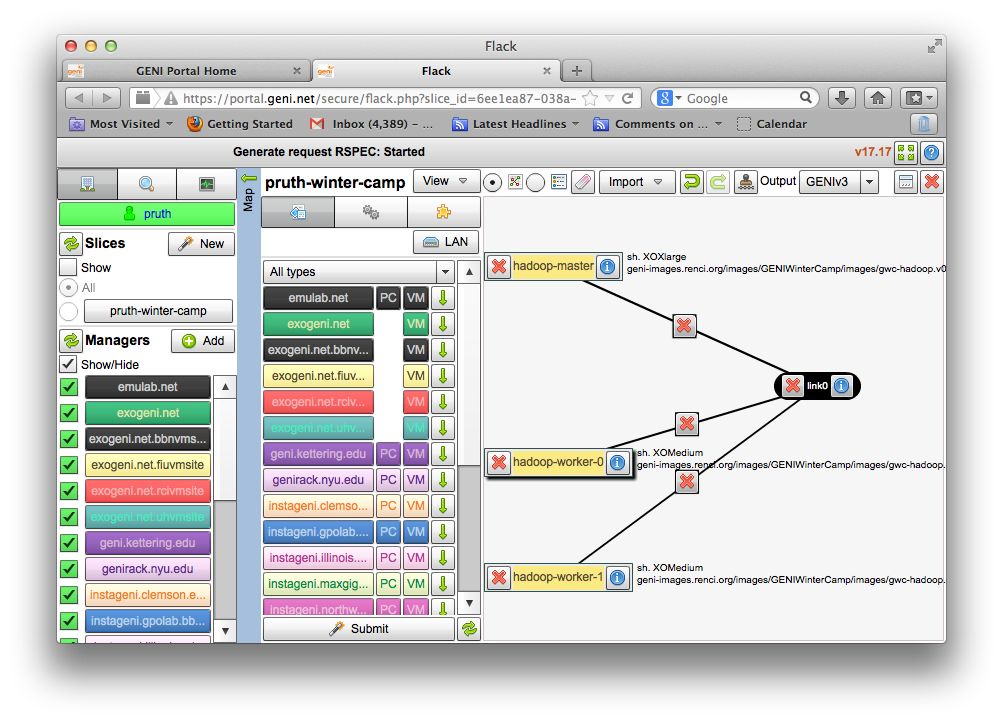

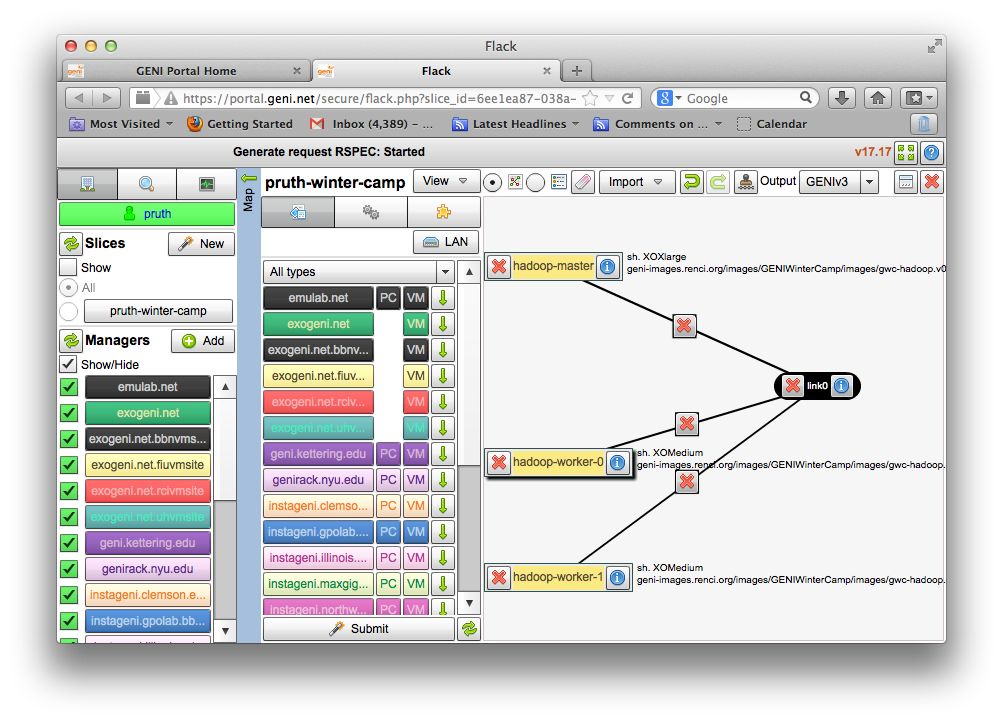

4. Create the Network

- Link all three VMs with a broadcast network

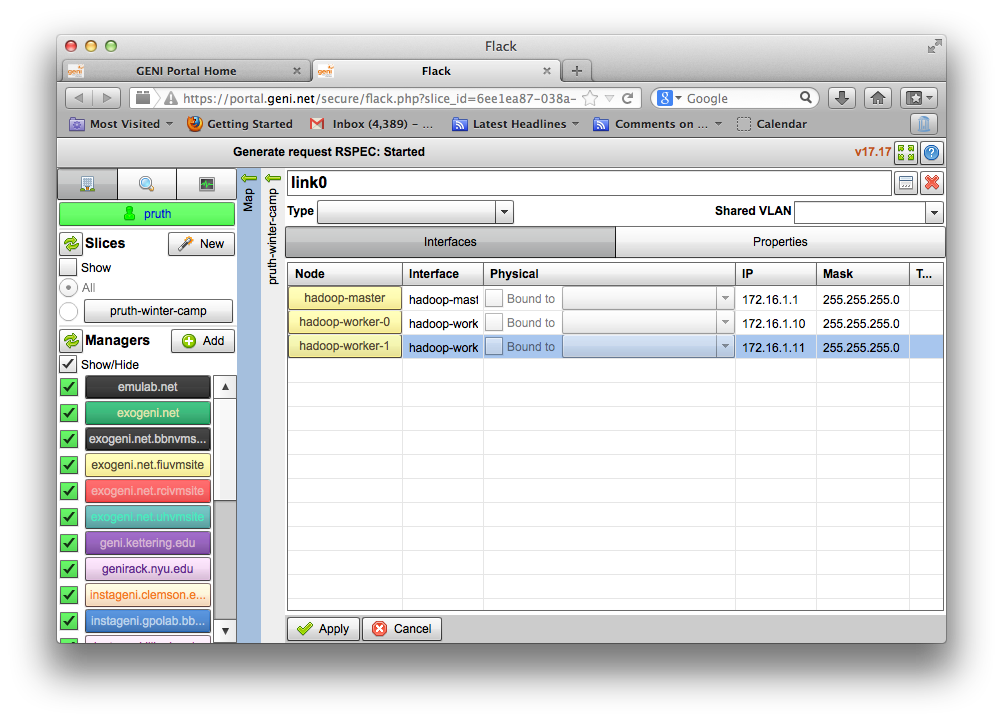

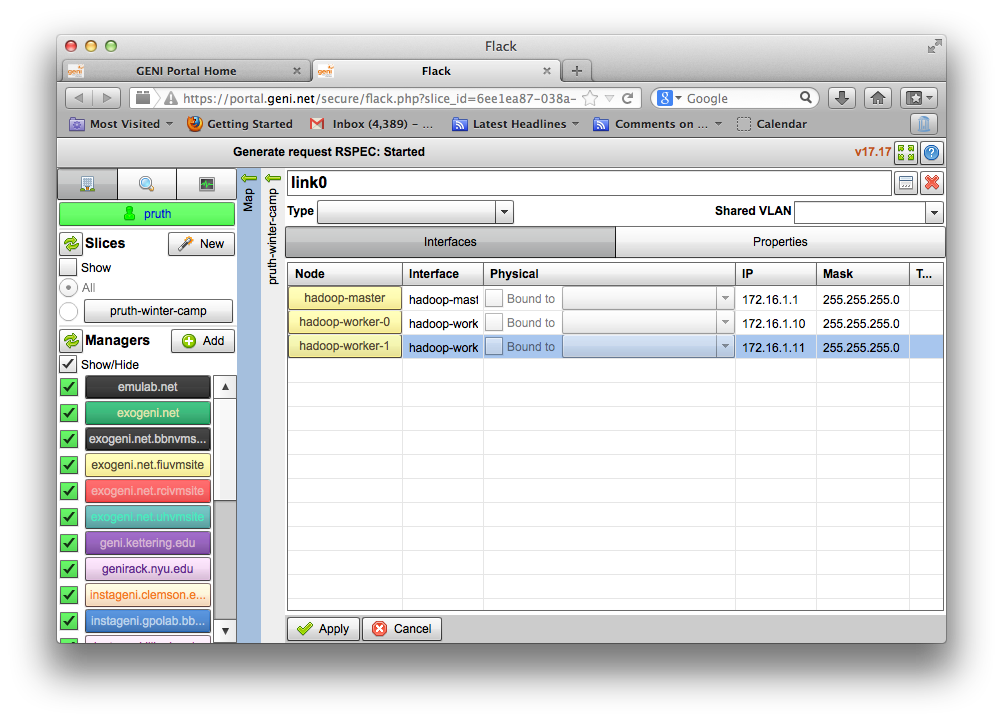

- Click the i button on the link to edit its properties.

- Name the link link0

- Under the Interfaces tab, add an IP address and subnet mask to each VM’s interface:

hadoop-master: 172.16.1.1 and 255.255.255.0

hadoop-worker-0: 172.16.1.10 and 255.255.255.0

hadoop-worker-1: 172.16.1.11 and 255.255.255.0
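All three interfaces need to sit on the same subnet with distinct addresses for the broadcast link to work as intended. As an optional sanity check before entering the values, the sketch below uses Python's standard `ipaddress` module. The function name `check_addressing` and the `INTERFACES` table are illustrative only.

```python
import ipaddress

# Address and netmask for each VM, as listed in the step above.
INTERFACES = {
    "hadoop-master": ("172.16.1.1", "255.255.255.0"),
    "hadoop-worker-0": ("172.16.1.10", "255.255.255.0"),
    "hadoop-worker-1": ("172.16.1.11", "255.255.255.0"),
}


def check_addressing(interfaces):
    """Return the shared network if every interface is on it with a unique IP.

    Raises ValueError if the interfaces span more than one subnet or if two
    VMs share an address.
    """
    parsed = [
        ipaddress.ip_interface(f"{ip}/{mask}") for ip, mask in interfaces.values()
    ]
    networks = {iface.network for iface in parsed}
    if len(networks) != 1:
        raise ValueError(f"interfaces span several subnets: {sorted(map(str, networks))}")
    addresses = [iface.ip for iface in parsed]
    if len(set(addresses)) != len(addresses):
        raise ValueError("two interfaces share the same IP address")
    return networks.pop()


if __name__ == "__main__":
    print("shared network:", check_addressing(INTERFACES))
```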

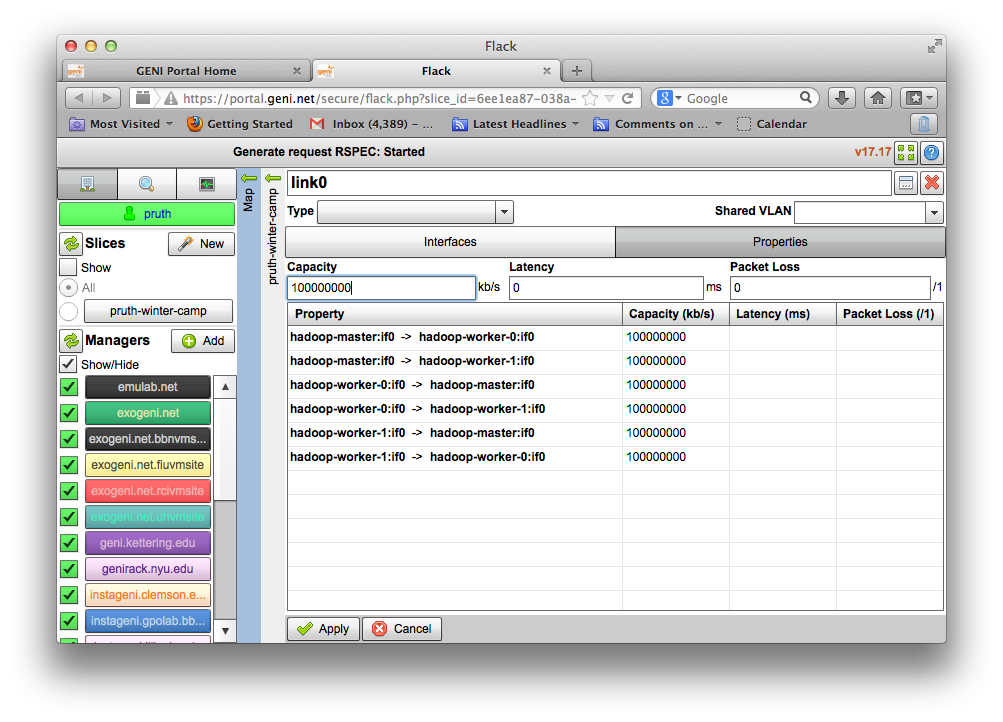

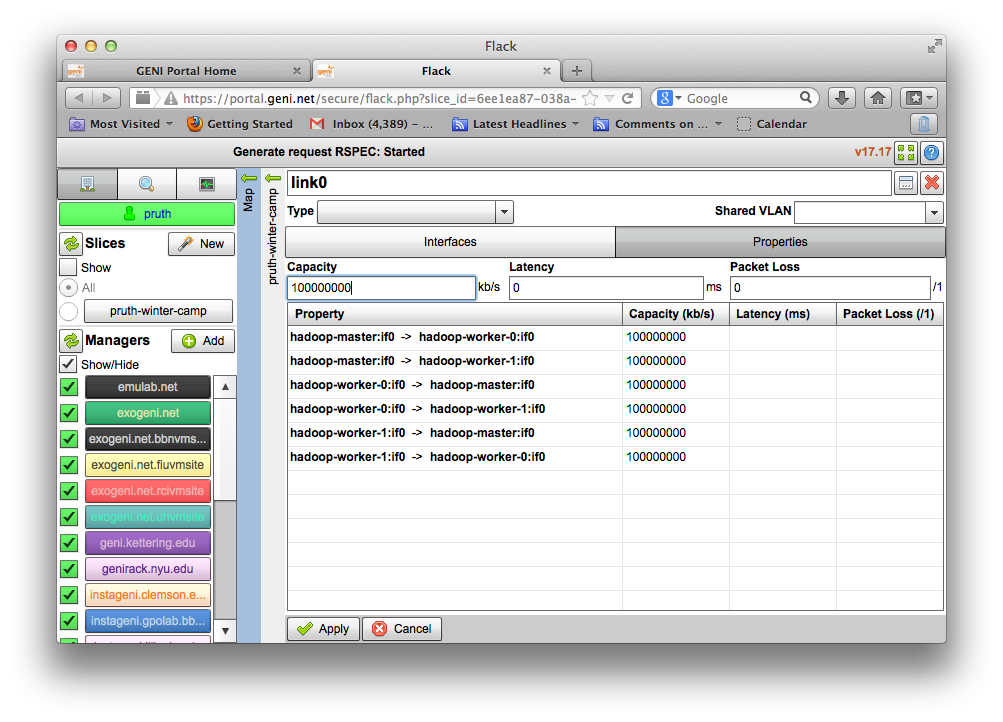

- Switch to the Properties tab and set the link’s bandwidth to 100 Mb/s.

Set capacity: 100000000 (Note: Flack will report this as 100 Gb/s, but it is actually 100 Mb/s.)
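The arithmetic behind the capacity value can be sketched as follows. It assumes the value is interpreted in bits per second, which is consistent with 100000000 meaning 100 Mb/s. It further assumes that the 100 Gb/s reading comes from a tool treating the same number as kilobits per second; that explanation is a guess, not something stated here. The function names are illustrative.

```python
def mbps_to_capacity(mbps: int) -> int:
    """Convert megabits per second to bits per second (the assumed unit)."""
    return mbps * 1_000_000


def label_if_read_as_kbps(capacity: int) -> str:
    """Show how the value would be labelled if read as kilobits per second."""
    gbps = capacity / 1_000_000  # 1 Gb/s = 1,000,000 kb/s
    return f"{gbps:g} Gb/s"


if __name__ == "__main__":
    capacity = mbps_to_capacity(100)
    print("capacity field:", capacity)
    print("label if read as kb/s:", label_if_read_as_kbps(capacity))
```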

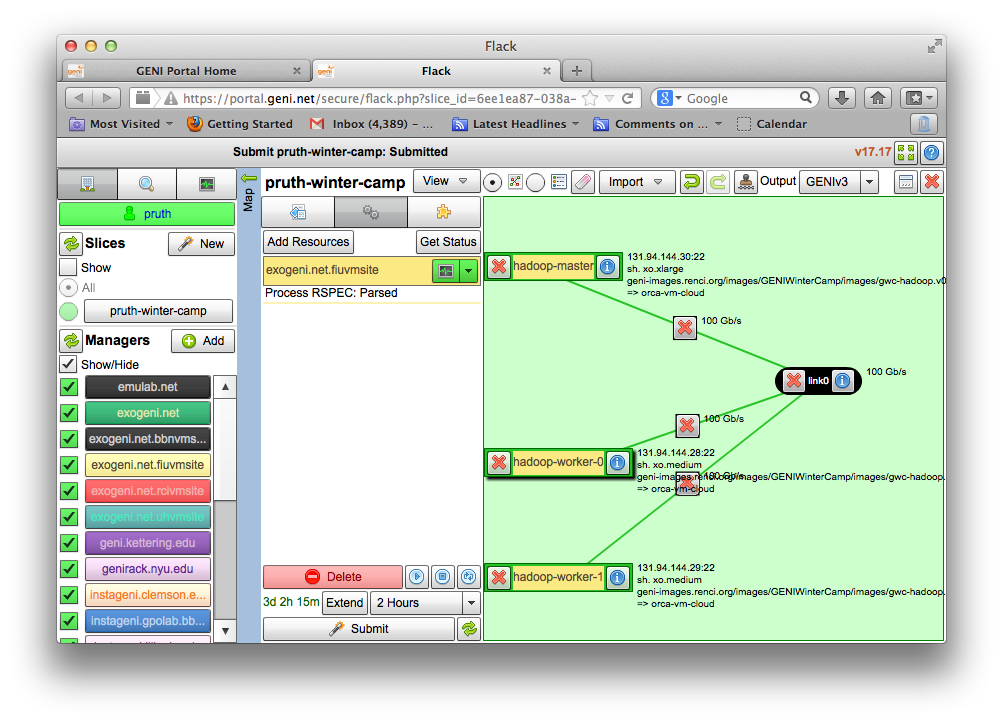

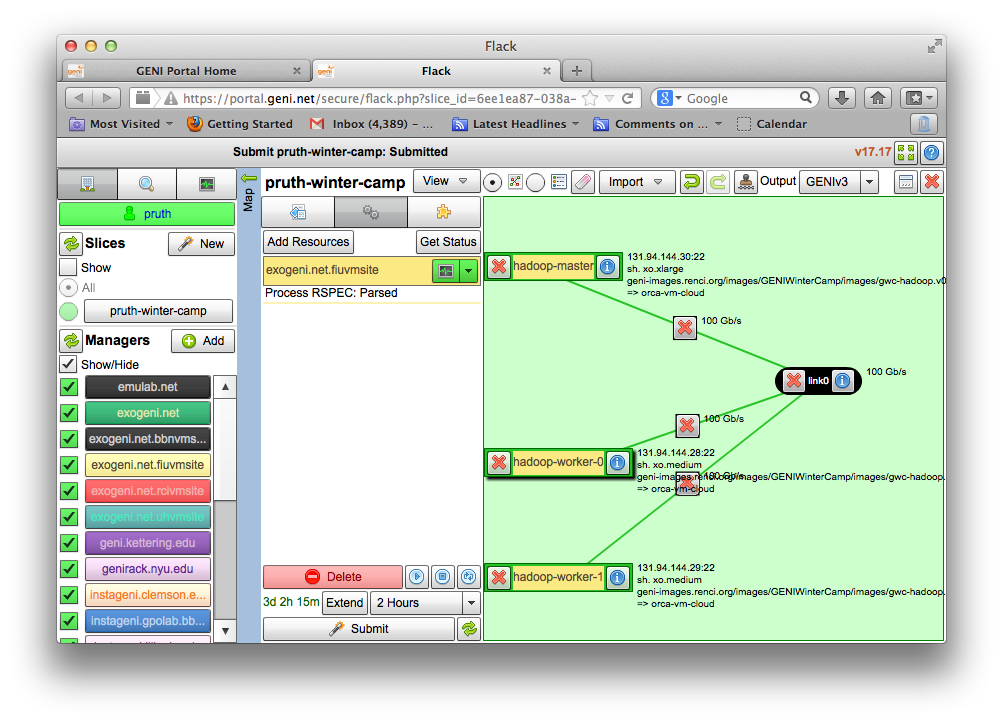

5. Instantiate the Slice

- Submit the request

- Wait until the slice is up
