
OpenFlow Load Balancer Tutorial

2. Configure and Initialize Services

2.1 Login to Nodes Switch, Aggregator, Inside and Outside

2.1.1 Get your reservation details

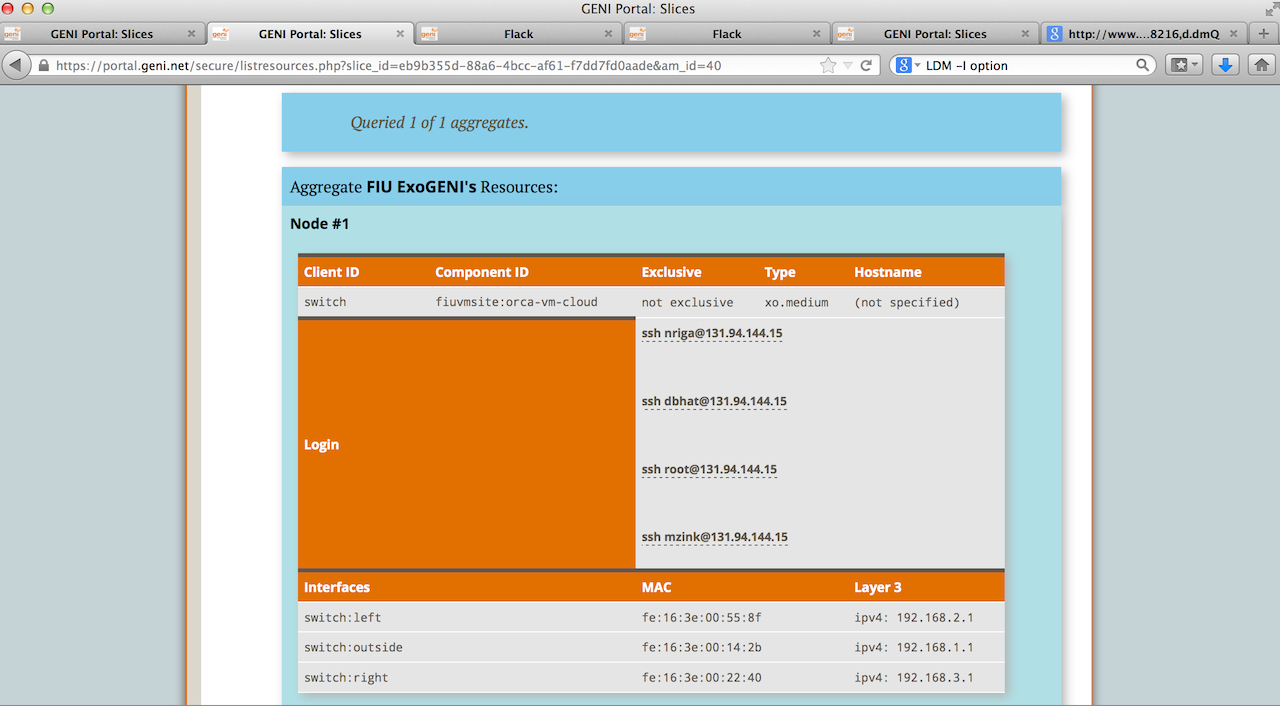

Click on your Slice name in the GENI Portal, scroll to the aggregate where you reserved your resources and click on "Details" next to it. You will see a page as below.

Use the login information obtained here to log in to any node using any SSH client. Please log in from 4 different SSH windows to: switch, aggregator, inside, outside.

2.2 Set up your nodes

- 2.2.1 Check that all interfaces on the switch and on the aggregator are configured: issue /sbin/ifconfig and make sure eth1, eth2, eth3 are up and assigned valid IP addresses.

You may not be able to see all interfaces up immediately when node "Switch" is ready; wait a little longer (about 1 min), then try "ifconfig" again. On the switch node, note which interfaces have the following IP addresses:

192.168.2.1 (left)

192.168.3.1 (right)
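If you prefer not to read the ifconfig output by eye, the lookup can be sketched in a few lines of Ruby. This is only an illustration: the helper name `interfaces_by_ip` and the sample text are hypothetical, and the sample assumes the older net-tools output layout ("inet addr:..."), which may differ on your node.

```ruby
# Map each IPv4 address found in ifconfig-style output to its interface name.
# Hypothetical helper for illustration; not part of the tutorial scripts.
def interfaces_by_ip(ifconfig_text)
  mapping = {}
  current = nil
  ifconfig_text.each_line do |line|
    # An interface stanza starts in column 0 with the interface name.
    if (m = line.match(/\A([A-Za-z0-9._-]+)/))
      current = m[1]
    end
    # Older net-tools prints "inet addr:1.2.3.4", newer ones "inet 1.2.3.4".
    if current && (m = line.match(/inet (?:addr:)?(\d+\.\d+\.\d+\.\d+)/))
      mapping[m[1]] = current
    end
  end
  mapping
end

# Made-up sample output (assumption); on a real node, pass `/sbin/ifconfig` output.
SAMPLE_IFCONFIG = <<~OUT
  eth1      Link encap:Ethernet  HWaddr 02:00:00:00:00:01
            inet addr:192.168.2.1  Bcast:192.168.2.255  Mask:255.255.255.0
  eth2      Link encap:Ethernet  HWaddr 02:00:00:00:00:02
            inet addr:192.168.3.1  Bcast:192.168.3.255  Mask:255.255.255.0
OUT

by_ip = interfaces_by_ip(SAMPLE_IFCONFIG)
puts "left:  #{by_ip['192.168.2.1']}"
puts "right: #{by_ip['192.168.3.1']}"
```

On the switch node you could replace the sample text with the real command output and note the two interface names for step 3.1.2.2.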

- 2.2.2 Set up the switch and the aggregator

On the Aggregator Node run

sudo bash

source /etc/profile.d/rvm.sh

trema run /tmp/aggregator/aggregator.rb >& /tmp/trema.run &

On the Switch Node run

sudo bash

source /etc/profile.d/rvm.sh

We have already installed and configured the OVS switch. If you want to look at the configuration and understand it better, check the output of these commands:

ovs-vsctl list-br

ovs-vsctl list-ports br0

ovs-vsctl show

ovs-ofctl show br0

2.3 Configure LabWiki to orchestrate and monitor your experiment

- 2.3.1 Log on to LabWiki at http://emmy9.casa.umass.edu:<port>, using the port given to you.

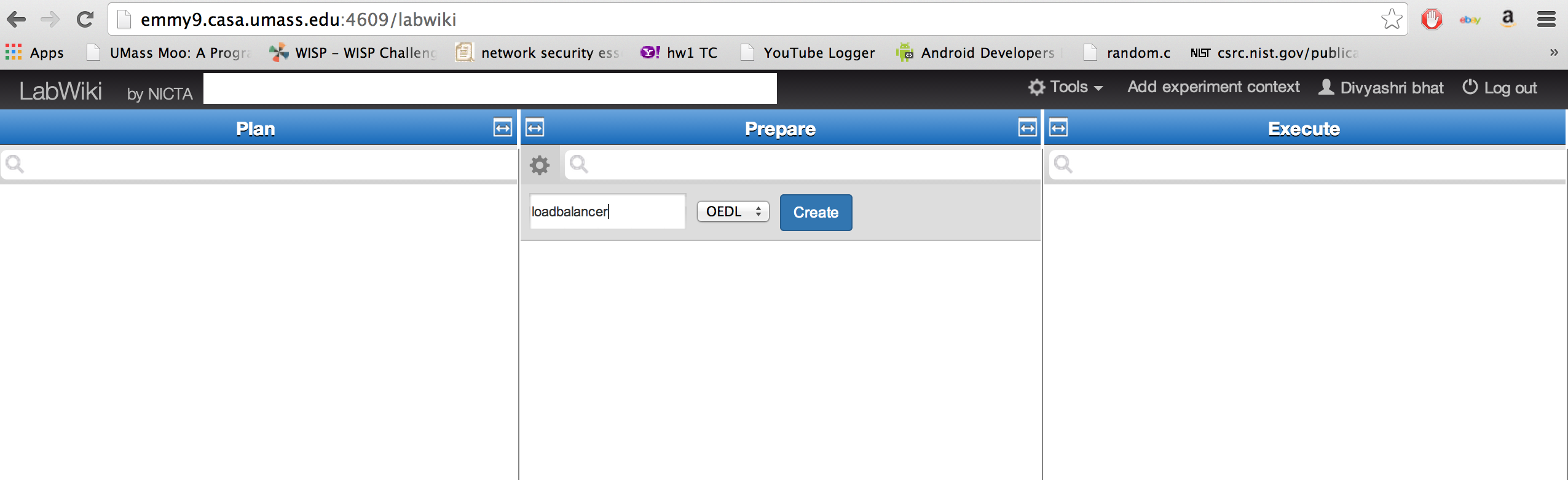

For this tutorial, we have a preloaded script (loadbalancer.rb). Type its name in the Prepare column; your script should appear. Click on it. If the script does not appear, create one yourself:

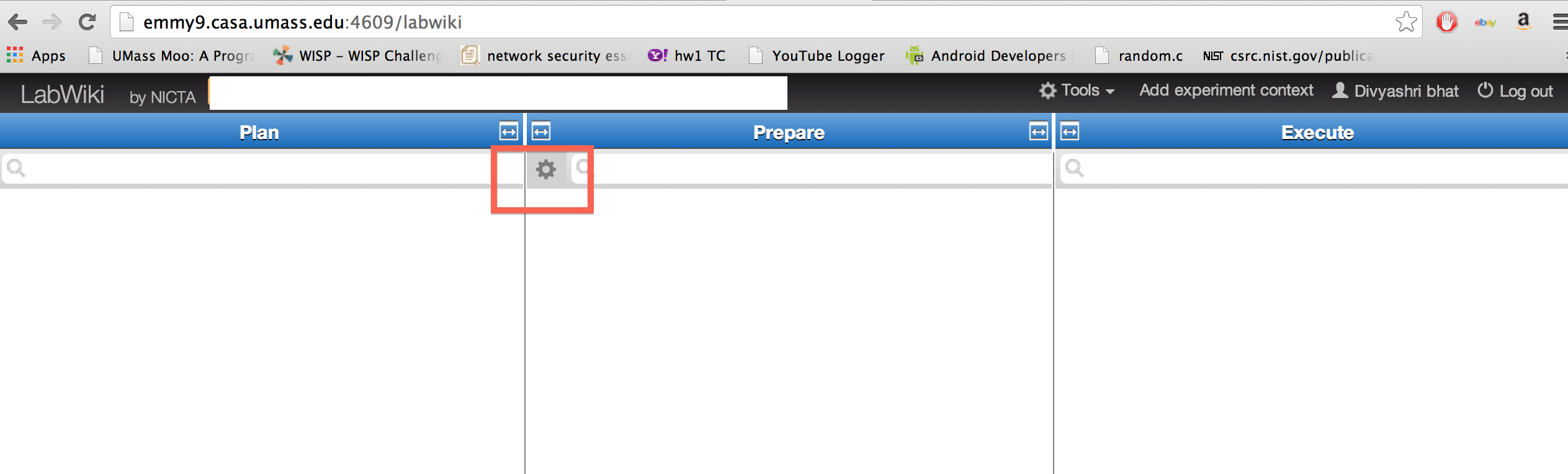

- In the Prepare column, create a new Ruby script by clicking on the "*" at the top-left of the column.

Type a name for the script, e.g., loadbalancer, and save it as an OEDL file. Enter the name of the script you just created in the Prepare column. It is now ready for editing.

Copy the contents of the script from Appendix A, paste it in LabWiki and save it.

Make sure that the save icon is deactivated after you press save. If it is not, the script has not been saved successfully; try again.

3. Run your experiment

3.1 Start your experiment

- 3.1.1 Start the controller on switch.

An example OpenFlow Controller that assigns incoming TCP connections to alternating paths based on total number of flows (round robin) is already downloaded for you. You can find it (load-balancer.rb) in the home directory on node "Switch".

trema run /root/load-balancer.rb

After you start your Load Balancer, you should see the following (the Switch id may vary):

OpenFlow Load Balancer Conltroller Started!

Switch is Ready! Switch id: 196242264273477

This means the OpenFlow Switch is connected to your controller and you can start testing your OpenFlow Load Balancer now.
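To make the round-robin idea concrete, here is a minimal sketch in plain Ruby of how a controller could alternate paths based on the total number of flows seen so far. It is not the code in load-balancer.rb: the class name `RoundRobinPicker`, the path symbols and the choice of starting with the right path are assumptions made for illustration.

```ruby
# Minimal round-robin path picker (illustrative sketch, not the tutorial's controller).
class RoundRobinPicker
  # Assumption: two paths, and the right path is tried first.
  PATHS = [:right, :left].freeze

  def initialize
    @total_flows = 0
  end

  # Return the path for the next new TCP flow and count the flow.
  def next_path
    path = PATHS[@total_flows % PATHS.size]
    @total_flows += 1
    path
  end
end

picker = RoundRobinPicker.new
4.times { |i| puts "flow #{i + 1} -> #{picker.next_path}" }
```

Because the decision depends only on the flow counter, the two paths receive the same number of flows (give or take one), regardless of how much bandwidth each path has.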

- 3.1.2 Start your experiment in LabWiki.

- 3.1.2.1 Drag the file icon at the top-left corner of your LabWiki page from the Prepare column and drop it onto the Execute column.

- 3.1.2.2 Fill in the experiment properties in the Execute column:

- name of your LabWiki experiment (this can be anything that does not contain spaces; it is just to help you track the experiments you run)

- select your project from the drop-down list

- select your slice from the list

- change theSender, theReceiver and theSwitch to include your slice name, e.g., outside-loadbaltest should be outside-<yourslicename>

- fill in the left and right interfaces for the switch node as you gathered them at step 2.2.1

If you want, instead of changing them in the Execute pane every time you run an experiment, you can change them once in the script. Just modify these lines to the appropriate values:

- 3.1.2.3 Press the "Start Experiment" button.

- 3.1.3 When your experiment is finished:

- Kill the controller at the switch node by pressing Ctrl-c

- Kill the iperf processes on the outside and inside nodes. On both nodes run: ps aux | grep "iperf". The output should look like:

root 4728 0.0 1.1 273368 6044 ? Ssl 19:13 0:00 /usr/bin/iperf_oml2 -s -p 6001 --oml-config /tmp/51449a37-ab3e-43a3-a872-37931c7785ee-1389294789.xml

Execute kill -9 <process_id>; for the above case <process_id> would be 4728
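The process id is the second column of the ps output. As a small sketch, the Ruby snippet below extracts it from text in that layout and prints the matching kill command instead of running it. The helper name `iperf_pids` is hypothetical, and the second sample line (the grep process itself) is made up for illustration.

```ruby
# Extract the PIDs of iperf processes from `ps aux`-style text.
# Hypothetical helper; it only parses text and does not kill anything.
def iperf_pids(ps_output)
  ps_output.each_line.map do |line|
    next unless line.include?("iperf")
    next if line.include?("grep") # skip the grep process itself
    pid = line.split[1]           # second column of `ps aux` is the PID
    pid.to_i if pid && pid.match?(/\A\d+\z/)
  end.compact
end

# First line is the example from the tutorial; the grep line is an assumed extra.
SAMPLE_PS = <<~OUT
  root 4728 0.0 1.1 273368 6044 ? Ssl 19:13 0:00 /usr/bin/iperf_oml2 -s -p 6001 --oml-config /tmp/51449a37-ab3e-43a3-a872-37931c7785ee-1389294789.xml
  root 4801 0.0 0.1 7836 876 pts/0 S+ 19:20 0:00 grep iperf
OUT

iperf_pids(SAMPLE_PS).each { |pid| puts "kill -9 #{pid}" }
```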

3.2 Run the experiment in paths with different bandwidth

- 3.2.1 Log on to node "left" (use the readyToLogin.py script) and change the link capacity for the interface with IP address "192.168.2.2" (use "ifconfig" to find the correct interface; here we assume eth1 is the interface connecting to node "Switch"):

ovs-vsctl set Interface eth1 ingress_policing_rate=10000

The above will rate-limit the connection from node "Switch" to node "left" to a bandwidth of 10 Mbps.

- Other ways to, e.g., change link delay and loss rate using "tc qdisc netem" can be found in Appendix D.
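Open vSwitch expresses ingress_policing_rate in kbps, which is why 10000 corresponds to 10 Mbps. The short Ruby sketch below does that conversion and prints the command for a given target rate; the helper name `policing_command` is hypothetical, and it only builds the command string without running it.

```ruby
# Build the ovs-vsctl rate-limiting command for a target rate given in Mbps.
# Hypothetical helper for illustration; it does not execute anything.
def policing_command(interface, mbps)
  kbps = (mbps * 1000).round # ingress_policing_rate is expressed in kbps
  "ovs-vsctl set Interface #{interface} ingress_policing_rate=#{kbps}"
end

puts policing_command("eth1", 10)
# prints: ovs-vsctl set Interface eth1 ingress_policing_rate=10000
```

Changing the second argument lets you try other capacities, e.g. `policing_command("eth1", 2.5)` for 2.5 Mbps.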

- 3.2.2 Re-run your experiment with the new setting following the same instructions as in 3.1.

Questions

- Did you see any difference in the graphs plotted on LabWiki, compared with the graphs plotted in the first experiment? Why?

- Check the output of the Load Balancer on node "Switch" and tell how many flows are directed to the left path and how many to the right path. Why?

- To answer the above question, you need to understand the Load Balancing controller. Check out the "load-balancer.rb" file in your home directory on node "Switch". Check Appendix B for hints/explanations about this OpenFlow Controller.

3.3 Modify the OpenFlow Controller to balance throughput among all the TCP flows

- You need to calculate the average per-flow throughput observed from both left and right paths. The modifications need to happen in the function "stats_reply" in load-balancer.rb

- In function "decide_path", change the path decision based on the calculated average per-flow throughput: forward the flow onto the path with more average per-flow throughput. (Why? TCP tries its best to consume the whole bandwidth so more throughput means network is not congested)

- Helpful tips about debugging your OpenFlow controller can be found in Appendix E

- If you really do not know where to start, the answer can be found on node "Switch", at /tmp/load-balancer/load-balancer-solution.rb

- Copy the above solution into your home directory then re-do the experiment on LabWiki.

You need to change your script to use the correct Load Balancing controller (e.g., if your controller is "load-balancer-solution.rb", you should run "/opt/trema-trema-f995284/trema run /root/load-balancer-solution.rb")


- Rerun the experiment using your new OpenFlow Controller following the steps of Section 3.1, check the graphs plotted on LabWiki as well as the controller's log on node "Switch" and see the difference.
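The decision rule described in this section can be sketched outside of Trema in plain Ruby. This is one possible reading, not the contents of load-balancer-solution.rb: the `FlowStat` struct is a hypothetical stand-in for the per-flow statistics a controller receives, and treating a path with no measurable flows as the preferred one is an assumption.

```ruby
# Stand-in for per-flow statistics (hypothetical; a real controller would
# fill these from the flow stats reply it receives from the switch).
FlowStat = Struct.new(:byte_count, :duration_sec)

# Average per-flow throughput in bits per second, or nil if nothing is measurable.
def average_throughput_bps(flows)
  active = flows.select { |f| f.duration_sec > 0 }
  return nil if active.empty?
  active.sum { |f| f.byte_count * 8.0 / f.duration_sec } / active.size
end

# Pick the path whose flows currently achieve the higher average throughput.
# Assumption: a path with no measurable flows is considered uncongested.
def decide_path(left_flows, right_flows)
  left = average_throughput_bps(left_flows)
  right = average_throughput_bps(right_flows)
  return :left if left.nil?
  return :right if right.nil?
  left >= right ? :left : :right
end

left_flows  = [FlowStat.new(1_000_000, 10), FlowStat.new(500_000, 10)]
right_flows = [FlowStat.new(2_500_000, 10)]

puts average_throughput_bps(left_flows)   # 600000.0
puts average_throughput_bps(right_flows)  # 2000000.0
puts decide_path(left_flows, right_flows) # right
```

In the controller itself, the averaging would belong in "stats_reply" and the comparison in "decide_path", as the steps above describe.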

3.4 Automate your experiment using LabWiki

- 3.4.1 Add code in your LabWiki script to automate starting and stopping your OpenFlow Controller:

- 3.4.1.1 Go back to your LabWiki page, un-comment the script from line 184 to line 189 to start your OpenFlow Controller automatically on LabWiki

You might need to change line 185 to use the correct load balancer controller


- 3.4.1.2 In your script, uncomment lines 205 to 209 to stop your OpenFlow Controller automatically on LabWiki


- 3.4.2 On your LabWiki web page, drag and drop the file icon and repeat the experiment, as described in section 3.1, using a different experiment name (the slice name should stay the same).

- If you have more time or are interested in trying things out, go ahead and try section 3.5. The tutorial is over now; feel free to ask questions :-)

3.5 (Optional) Try different kinds of OpenFlow Load Balancers

- You can find more load balancers under /tmp/load-balancer/ on node "Switch"

- To try out any one of them, follow the steps:

- At the home directory on node "Switch", copy the load balancer you want to try out, e.g.,

cp /tmp/load-balancer/load-balancer-random.rb /root/

- Change your LabWiki code at line 185 to use the correct OpenFlow controller.

- On LabWiki, drag and drop the "File" icon and re-do the experiment as described in section 3.1


- Some explanations about the different load balancers:

- "load-balancer-random.rb" is the load balancer that picks a path randomly: each path has a 50% chance of being picked

- "load-balancer-roundrobin.rb" is the load balancer that picks a path in a round-robin fashion: the right path is picked first, then the left path, and so on

- Load balancers that begin with "load-balancer-bytes" pick a path based on the total number of bytes sent out to each path: the one with fewer bytes sent out is picked

- "load-balancer-bytes-thread.rb" sends out flow stats request in function "packet_in" upon the arrival of a new TCP flow and waits until flow stats reply is received in function "stats_reply" before a decision is made. As a result, this balancer gets the most up-to-date flow stats to make a decision. However, it needs to wait for at least the round-trip time from the controller to the switch (for the flow stats reply) before a decision can be made.

- "load-balancer-bytes-auto-thread.rb" sends out flow stats request once every 5 seconds in a separate thread, and makes path decisions based on the most recently received flow stats reply. As a result, this balancer makes path decisions based on some old statistics (up to 5 seconds) but reacts fast upon the arrival of a new TCP flow (i.e., no need to wait for flow stats reply)

- Load balancers that begin with "load-balancer-flows" pick a path based on the total number of flows sent out to each path: the one with fewer flows sent out is picked

- Load balancers that begin with "load-balancer-throughput" pick a path based on the total throughput sent out to each path: the one with more throughput is picked
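The differences between these policies are easiest to see side by side. The sketch below, in plain Ruby, applies three of the selection rules to the same made-up counters; the `PathStats` struct, the function names and the numbers are all hypothetical and are not taken from the files under /tmp/load-balancer/.

```ruby
# Hypothetical per-path counters used only for this illustration.
PathStats = Struct.new(:name, :bytes, :flows)

# Random policy: each path is equally likely to be picked.
def pick_random(paths, rng = Random.new)
  paths.sample(random: rng)
end

# Bytes policy: pick the path with fewer bytes sent out so far.
def pick_fewest_bytes(paths)
  paths.min_by(&:bytes)
end

# Flows policy: pick the path with fewer flows sent out so far.
def pick_fewest_flows(paths)
  paths.min_by(&:flows)
end

paths = [
  PathStats.new(:left, 5_000, 2),
  PathStats.new(:right, 1_200, 3)
]

puts "fewest bytes -> #{pick_fewest_bytes(paths).name}" # right
puts "fewest flows -> #{pick_fewest_flows(paths).name}" # left
puts "random       -> #{pick_random(paths).name}"
```

With these counters the bytes and flows policies disagree, which is the kind of situation where the choice of balancer changes the graphs you see on LabWiki.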

