Thin Client Performance Benchmarking based Resource Adaptation in Virtual Desktop Clouds

Popular applications such as email, image/video galleries, and file storage are increasingly being supported by cloud platforms in residential, academic, and industry communities. The next frontier for these user communities will be to transition 'traditional desktops', which have dedicated hardware and software configurations, into 'virtual desktop clouds' that are accessible via thin clients.

This project aims to develop optimal resource allocation frameworks and performance benchmarking tools that can enable building and managing thin-client based virtual desktop clouds at Internet-scale. Virtual desktop cloud experiments under realistic user and system loads are being conducted by leveraging multiple kinds of GENI resources such as aggregates, measurement services and experimenter workflow tools.

Project outcomes will help minimize costly over-provisioning of cloud resources and avoid guesswork in thin-client protocol configuration, while delivering optimal user experience.

Project Team

Institutions

- The Ohio State University

- Ohio Supercomputer Center

- OARnet

Team Members

- PI: Prasad Calyam

- Rohit Patali

- Aishwarya Venkataraman

- Alex Berryman

- Mukundan Sridharan

- Rajiv Ramnath

Experiments

Investigation of resource management schemes for thin-client based virtual desktop clouds at Internet-scale

To allocate and manage virtual desktop cloud (VDC) resources for Internet-scale desktop delivery, existing works focus mainly on managing server-side resources based on utility functions of CPU and memory loads, and do not consider network health or thin-client user experience. Resource allocation in VDC platforms without combined utility-directed information about system loads, network health, and thin-client user experience inevitably results in costly guesswork and over-provisioning of resources.

To address this issue, we developed a utility-directed resource allocation model (U-RAM) that uses utility functions of system, network, and human components, obtained through offline benchmarking, to dynamically (i.e., online) create and place virtual desktops (VDs) in resource pools at distributed data centers, while optimizing resource allocations along the timeliness and coding-efficiency quality dimensions.
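To make the idea concrete, here is a minimal Python sketch of utility-directed sizing and placement. It is not the U-RAM algorithm from the COMNET paper: the utility tables are made-up stand-ins for offline benchmarking results, combining the component utilities by taking their minimum is an assumption, and placement is a simple first-fit over resource pools. The names `CPU_UTILITY`, `RAM_UTILITY`, `NET_UTILITY`, `Pool`, `min_level`, and `place_vd` are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical offline benchmarking results: resource level -> utility in [0, 1].
# These numbers are made up for illustration, not taken from VMLab profiling.
CPU_UTILITY = {1: 0.50, 2: 0.85, 4: 0.95}        # vCPUs per VD
RAM_UTILITY = {1: 0.40, 2: 0.90, 4: 0.95}        # GB of memory per VD
NET_UTILITY = {0.5: 0.60, 1.0: 0.90, 2.0: 0.97}  # Mbps per VD


@dataclass
class Pool:
    """Remaining capacity of one data-center resource pool (hypothetical units)."""
    name: str
    cpu: int
    ram: int
    net: float
    vds: list = field(default_factory=list)


def min_level(table, target):
    """Smallest benchmarked resource level whose utility meets the target."""
    for level in sorted(table):
        if table[level] >= target:
            return level
    return None  # target not reachable with the benchmarked levels


def place_vd(pools, vd_id, target):
    """Size a VD from the utility tables, then first-fit it into a pool.

    Assumes the combined utility is the minimum of the component utilities,
    so each resource can be sized independently.
    """
    alloc = [min_level(t, target) for t in (CPU_UTILITY, RAM_UTILITY, NET_UTILITY)]
    if None in alloc:
        return None
    cpu, ram, net = alloc
    for pool in pools:
        if pool.cpu >= cpu and pool.ram >= ram and pool.net >= net:
            pool.cpu -= cpu
            pool.ram -= ram
            pool.net -= net
            pool.vds.append(vd_id)
            return pool.name
    return None  # no pool has enough headroom left


# Usage: each VD at target 0.85 needs 2 vCPUs, 2 GB, and 1.0 Mbps.
pools = [Pool("osu", cpu=8, ram=16, net=10.0), Pool("utah", cpu=8, ram=16, net=10.0)]
placements = [place_vd(pools, f"vd{i}", target=0.85) for i in range(5)]
print(placements)  # ['osu', 'osu', 'osu', 'osu', 'utah']
```

Taking the minimum lets each resource be sized independently, since the combined utility meets the target only if every component does; a weighted or multiplicative combination would instead require searching over joint allocations.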

To assess the VDC scalability achievable with U-RAM, we conducted experiments guided by realistic utility functions of desktop pools obtained from a real-world VDC testbed, VMLab. We compared the performance of U-RAM with the following resource allocation models:

- Fixed RAM (F-RAM): each VD is over-provisioned, which is common in today’s cloud platforms due to a lack of system and network awareness.

- Network-aware RAM (N-RAM): allocation is aware of the required network resources, but over-provisions system (memory and CPU) resources due to a lack of system awareness.

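- System-aware RAM (S-RAM): the opposite of N-RAM; allocation is aware of the required system resources, but over-provisions network resources due to a lack of network awareness.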

- Greedy RAM (G-RAM): allocation is aware of both system and network resource requirements, but bases them purely on conservative rule-of-thumb information rather than on objective profiling as in U-RAM.
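The difference between these models comes down to how each VD is sized on the system side and on the network side. The sketch below, with purely hypothetical capacities and per-VD sizings (`CAPACITY`, `OVERPROVISIONED`, `RULE_OF_THUMB`, `PROFILED`), counts how many VDs fit in one data center under each model, assuming the scarcest resource sets the limit.

```python
import math

# Hypothetical capacity of one data center.
CAPACITY = {"cpu": 32, "ram_gb": 64, "net_mbps": 100.0}

# Hypothetical per-VD sizings (illustrative, not measured values).
OVERPROVISIONED = {"cpu": 4, "ram_gb": 8, "net_mbps": 10.0}  # generous fixed sizing
RULE_OF_THUMB = {"cpu": 2, "ram_gb": 4, "net_mbps": 5.0}     # conservative heuristic
PROFILED = {"cpu": 1, "ram_gb": 2, "net_mbps": 2.0}          # from offline benchmarking


def per_vd(system, network):
    """Combine a system-side sizing with a network-side sizing."""
    return {"cpu": system["cpu"], "ram_gb": system["ram_gb"],
            "net_mbps": network["net_mbps"]}


MODELS = {
    "F-RAM": per_vd(OVERPROVISIONED, OVERPROVISIONED),  # no awareness
    "N-RAM": per_vd(OVERPROVISIONED, PROFILED),         # network-aware only
    "S-RAM": per_vd(PROFILED, OVERPROVISIONED),         # system-aware only
    "G-RAM": per_vd(RULE_OF_THUMB, RULE_OF_THUMB),      # both, by rule of thumb
    "U-RAM": per_vd(PROFILED, PROFILED),                # both, by profiling
}


def max_vds(demand, capacity=CAPACITY):
    """Number of VDs that fit: the scarcest resource sets the limit."""
    return min(math.floor(capacity[r] / demand[r]) for r in capacity)


for name, demand in MODELS.items():
    print(f"{name}: {max_vds(demand)} VDs")
```

With these made-up numbers the system side is the bottleneck, so N-RAM admits no more VDs than F-RAM (8 each), while S-RAM, G-RAM, and U-RAM admit 10, 16, and 32. The ordering depends entirely on the assumed values and is not a reproduction of the published results.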

Accomplishments

We were able to set up our virtual desktop cloud experiment in the GENI facility using ProtoGENI system and network resources, the OnTimeMeasure measurement service, and the Gush experimenter-workflow tool. With very helpful assistance from the ProtoGENI team, we overcame several challenges in setting up a data center environment in ProtoGENI. These challenges related primarily to installing our data center hypervisor image on ProtoGENI hardware in a manner consistent with the procedures ProtoGENI supports for custom OS image installations.

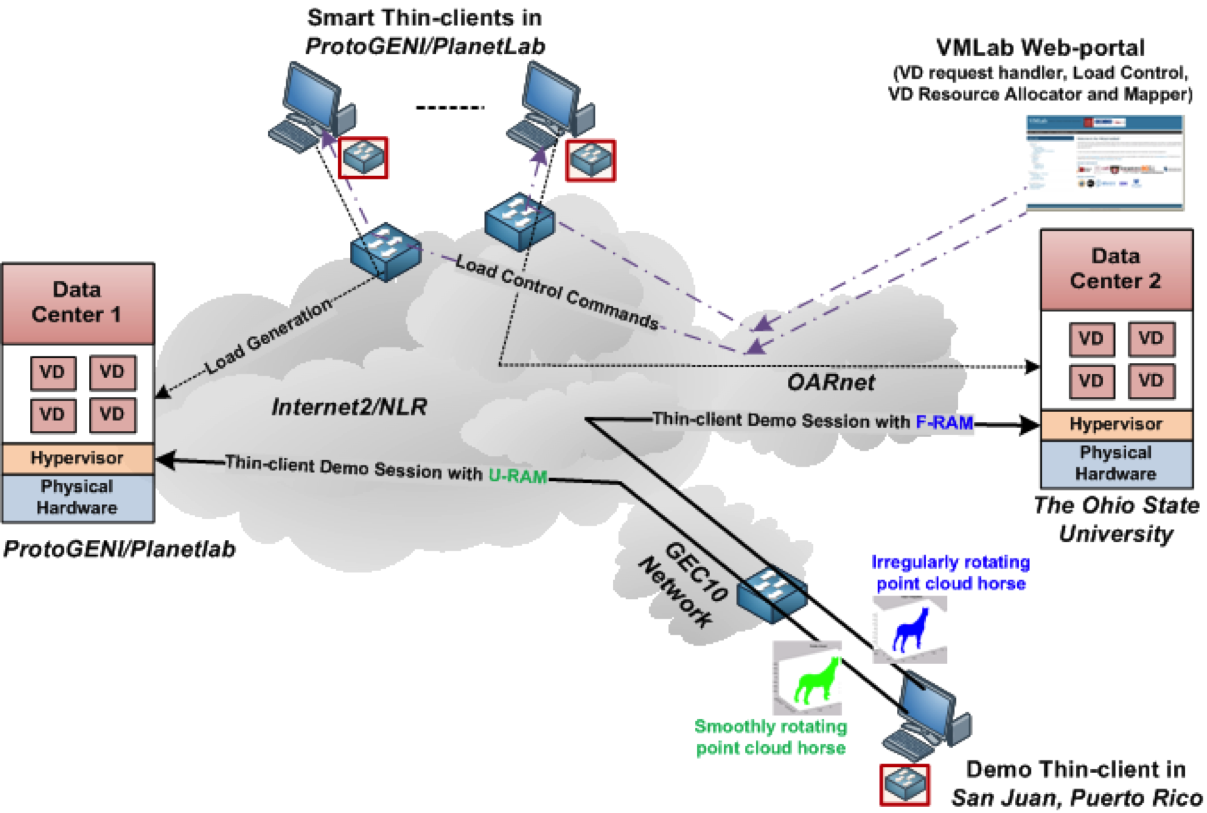

We gave a live demonstration of our virtual desktop cloud experiment in GENI at the GEC10 Networking Reception. We set up two data centers, one at OSU and one at Utah Emulab, and reserved several other nodes to act as thin-client VD connectors. On the reserved nodes, we installed the hypervisor, OnTimeMeasure, and VMware tools. We also installed several measurement automation scripts on the reserved nodes; these used the other installed software to control the load generation of thin-client VD connections and the corresponding performance measurements of network and host resources. OnTimeMeasure was instrumented with the Gush experiment XML files to control measurements within the experiment slice. To create a realistic data center networking environment for ~15 VD users, we used network emulators to rate-limit the data centers to 10 Mbps of network bandwidth. The OnTimeMeasure Root Beacon was installed at the OSU data center, and several OnTimeMeasure Node Beacons were installed at the thin-client VD nodes to collect end-to-end network path measurements.

The OSU data center ran the F-RAM scripts and the Utah Emulab data center ran the U-RAM scripts. We developed a web portal to demonstrate, live, the increasing system and network loads at the experiment’s data centers as thin-client connections belonging to different user desktop pools were generated. Using a MATLAB-based animation of a horse point cloud as the thin-client application, we demonstrated that U-RAM provides improved performance and increased scalability compared to F-RAM.
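As a quick check on the demo's network sizing, splitting the 10 Mbps data center rate limit evenly among ~15 VD users leaves roughly 0.67 Mbps per user. The sketch below does that arithmetic; the even split is an idealization, and the 0.5 Mbps per-session demand in the usage line is a hypothetical figure, not a measured thin-client requirement. The function names are illustrative.

```python
import math

LINK_MBPS = 10.0  # emulated data-center rate limit used in the demo
USERS = 15        # approximate number of VD users the limit was sized for


def fair_share_mbps(link_mbps, users):
    """Bandwidth per user if the link is split evenly (an idealization)."""
    return link_mbps / users


def max_sessions(link_mbps, per_session_mbps):
    """Concurrent sessions that fit if each needs per_session_mbps."""
    return math.floor(link_mbps / per_session_mbps)


share = fair_share_mbps(LINK_MBPS, USERS)
print(f"fair share: {share:.2f} Mbps per user")  # fair share: 0.67 Mbps per user

# Hypothetical per-session demand; real thin-client protocol usage varies widely.
print(max_sessions(LINK_MBPS, 0.5))  # 20
```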

GEC10-VDC-Horse-Demo-1 GEC10-VDC-Horse-Demo-2

Publications

- Prasad Calyam, Aishwarya Venkataraman, Alex Berryman, Marcio Faerman, "Experiences from Virtual Desktop Cloud Experiments in GENI", First GENI Research and Educational Experiment Workshop (GREE), 2012.

- Prasad Calyam, Rohit Patali, Alex Berryman, Albert Lai, Rajiv Ramnath, "Utility-directed Resource Allocation in Virtual Desktop Clouds", Elsevier Computer Networks Journal (COMNET), 2011. Slides: pdf

- Prasad Calyam, Mukundan Sridharan, Ying Xiao, K. Zhu, A. Berryman, R. Patali, "Enabling Performance Intelligence for Application Adaptation in the Future Internet", Journal of Communications and Networks (JCN), 2011.

- Mukundan Sridharan, Prasad Calyam, Aishwarya Venkataraman, Alex Berryman, "Defragmentation of Resources in Virtual Desktop Clouds for Cost-Aware Utility-Optimal Allocation", IEEE Conference on Utility and Cloud Computing (UCC), 2011. Slides: ppt

- Alex Berryman, Prasad Calyam, Albert Lai, Matthew Honigford, "VDBench: A Benchmarking Toolkit for Thin-client based Virtual Desktop Environments", IEEE Conference on Cloud Computing Technology and Science (CloudCom), 2010. Slides: pdf

Posters

Degrees

- Rohit Patali, MS, 2011, Thesis: 'Utility-Directed Resource Allocation in Virtual Desktop Clouds'.

- Aishwarya Venkataraman, MS, 2012, Thesis: 'Defragmentation of Resources in Virtual Desktop Clouds for Cost-Aware Utility-Maximal Allocation'.

Acknowledgements

This material is based upon work supported by the National Science Foundation under award number CNS-1050225, and by VMware and Dell. Any opinions, findings, and conclusions or recommendations expressed in this publication are those of the author(s) and do not necessarily reflect the views of the National Science Foundation, VMware, or Dell.

Attachments (8)

- gec10-vdc-demo.png (374.7 KB): GEC10 VDC Experiment Demonstration Setup

- VDC-GENI-Expt-GEC13.pdf (1.0 MB): GREE12 Paper on Virtual Desktop Cloud Experiments in GENI

- gec15-vdc-demo.png (496.0 KB): GEC15 VDC Demo Setup

- vdc-defrag-ucc11.pdf (662.5 KB): IEEE UCC Paper on Defragmentation of VDCs

- fi-ontimemeasure-vdcloud_jcn11.pdf (1.7 MB): JCN paper on OnTimeMeasure and VDCloud

- vdcloud_comnet11.pdf (2.3 MB): COMNET U-RAM paper

- vmlab_vtj12.pdf (518.3 KB): VMware Journal paper on VMLab

- OpenFlow-VDC-LB-IM13.pdf (798.2 KB): OpenFlow VDCloud OnTimeMeasure I&M Paper