Connectivity Overview

Summary

This document provides a high-level overview of various connectivity options for a campus to participate in inter-campus GENI network experiments. There are multiple options for connecting experimental networks in GENI; the choice depends on your experiment needs, existing networks, and possibilities for new connections.

Figure 1 - Overview: a generic single experimental network shared between two campuses through regional and national networks.

Requirements

Each campus participates in experiments using any combination of network nodes and compute resources. Many GENI experiments require only commercial Internet access for the experimenter and the compute resources. The experimenter must register with one of the GENI clearinghouses to obtain the GENI credentials needed to run a GENI slice (reference URLs here). The experimenter may also add resources to GENI for the experiment by registering them with a GENI aggregate manager, or may run a new GENI aggregate manager if no existing aggregate manager is appropriate for those resources. No additional network arrangements are needed for this type of experiment.

Many other experiments in GENI investigate new protocols and systems for building networks. These experiments may require more control over network configurations and connections than the commercial Internet provides. This overview summarizes common options for connecting campuses in such a network experiment. GENI network engineers in the GENI Project Office work with campus IT professionals to engineer appropriate connections for new experiments.

For the purpose of this summary, we assume wired campus networks, but GENI also supports many wireless networks. The GENI wireless aggregates manage and control the additional capabilities of attached wireless networks.

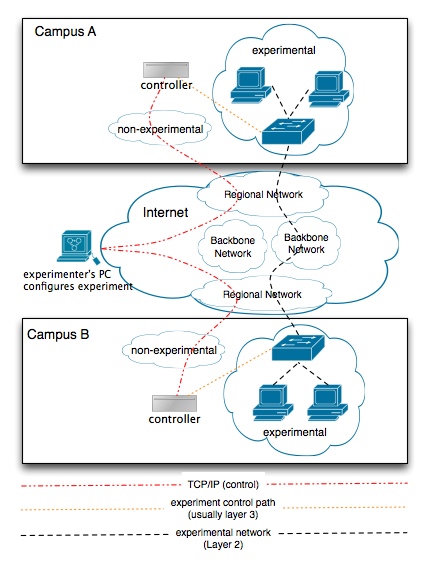

Figure 2 - Paths: simplified typical network connection topology.

- This is a high-level diagram. GENI services such as Clearinghouses or Aggregate Managers are not shown.

- For clarity, the experimenter’s laptop is not shown inside a campus, though it may be located on one. The experimenter does not produce any experimental traffic in this example.

- The controller may or may not be present for a given experiment. For OpenFlow, the controller represents dedicated hardware running OpenFlow FlowVisor and OpenFlow Controller software (e.g. NOX).

- The experimental control path carries any out-of-band experimental traffic, such as control and monitoring data. For OpenFlow, this path represents the OpenFlow protocol carried over TCP/IP and SSL.

- Paths through multiple backbones would require multiple Layer 2 paths.

Inter-campus Connectivity Options

As shown in Figure 2, the GENI data plane can include both layer 3 and layer 2 options, using each campus’s existing connections to regional and national research networks. Layer 2 data planes in particular allow experimenters to work with protocols other than IP in their experiments. In addition to the data plane, most GENI experiments require layer 3 IP connectivity for out-of-band control and monitoring, and this control path is separate from the experimental data path. For example, OpenFlow may require TCP/IP connections from an experimenter’s laptop to configure OpenFlow Controller software, and TCP/IP connections between the OpenFlow Controllers and OpenFlow-capable switches. GENI aggregate managers also typically communicate with GENI clearinghouses using TCP/IP. This section lists some common layer 2 data plane options.

Single VLAN

This option is the most restrictive, though also the most straightforward. GENI participants negotiate a single VLAN ID that is used on every participating campus and on each intermediate regional and national network.
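The negotiation amounts to finding a VLAN ID that is still unused in every network along the path. The following is a minimal sketch of that idea, not a GENI tool; the network names and free-ID lists are hypothetical and chosen only for illustration.

```python
def common_vlan_ids(free_by_network):
    """Return the sorted VLAN IDs that are free in every network on the path."""
    pools = [set(ids) for ids in free_by_network.values()]
    if not pools:
        return []
    shared = set.intersection(*pools)
    # 802.1Q VLAN IDs are 12 bits wide; 0 and 4095 are reserved.
    return sorted(v for v in shared if 1 <= v <= 4094)


# Hypothetical free VLAN IDs reported by each network operator.
free = {
    "campus_a": range(3700, 3720),
    "regional": [3705, 3706, 3715],
    "backbone": range(3000, 3710),
    "campus_b": [3706, 3715, 3716],
}

candidates = common_vlan_ids(free)
print(candidates)  # [3706]
```

An empty result means no single VLAN ID works end to end, which is the situation the options below are meant to address.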

VLAN Translation

There are often conflicts in VLAN numbering across different networks. With VLAN translation, each campus, regional, or backbone network may use a different VLAN ID to represent the experimental GENI layer 2 connection. The experiment’s VLAN ID is translated at the edge of each network. This approach allows campuses to participate in the same experiment without reserving the same VLAN ID across all participating campuses. VLAN translation is not usually available in less expensive network equipment.
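Translation at each edge can be pictured as a small lookup table per network boundary. The sketch below models that behavior in plain Python; the network names, VLAN IDs, and helper functions are hypothetical, and real switches implement the rewrite in hardware configuration rather than in code like this.

```python
# Hypothetical VLAN IDs that each network assigned to the same experiment.
PATH = [
    ("campus_a", 1750),
    ("regional_a", 3706),
    ("backbone", 920),
    ("regional_b", 3706),
    ("campus_b", 412),
]


def build_tables(path):
    """Map each edge (src, dst) to an {incoming_vid: outgoing_vid} table."""
    return {
        (src, dst): {old_vid: new_vid}
        for (src, old_vid), (dst, new_vid) in zip(path, path[1:])
    }


def forward(path, vid):
    """Return the VLAN ID a frame carries in each network along the path."""
    tables = build_tables(path)
    seen = [vid]
    for (src, _), (dst, _) in zip(path, path[1:]):
        # A KeyError here would mean the edge has no rule for this VLAN ID.
        vid = tables[(src, dst)][vid]
        seen.append(vid)
    return seen


print(forward(PATH, 1750))  # [1750, 3706, 920, 3706, 412]
```

Each network only needs to agree on the ID used at its own boundaries, which is why no end-to-end reservation is required.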

Layer 2 Tunneling

QinQ allows campuses to tunnel an agreed-upon VLAN ID through an intermediate network. The intermediate network can then use a different VLAN ID to control the topology. The regional or backbone networks may use Provider Backbone Bridging in addition to QinQ. Other layer 2 tunneling alternatives, such as MPLS, may be available through a regional network. Both Internet2 and NLR GENI core networks support QinQ tunneling, as do most regional research networks.
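To make the double tagging concrete, the sketch below builds an Ethernet frame with the campus VLAN tag and then pushes an outer tag as an intermediate network might. It assumes the standard tag protocol identifiers (0x8100 for the inner 802.1Q tag, 0x88A8 for the outer 802.1ad tag); some equipment is configured with other outer values. The MAC addresses, VLAN IDs, and function names are hypothetical.

```python
import struct

TPID_CTAG = 0x8100  # 802.1Q customer (inner) tag
TPID_STAG = 0x88A8  # 802.1ad service (outer) tag


def vlan_tag(tpid, vid, pcp=0, dei=0):
    """Build a 4-byte VLAN tag: 16-bit TPID, then PCP(3) | DEI(1) | VID(12)."""
    if not 0 <= vid <= 0xFFF:
        raise ValueError("VLAN ID must fit in 12 bits")
    tci = (pcp << 13) | (dei << 12) | vid
    return struct.pack("!HH", tpid, tci)


def qinq_push(frame, outer_vid):
    """Insert an outer tag right after the two 6-byte MAC addresses."""
    return frame[:12] + vlan_tag(TPID_STAG, outer_vid) + frame[12:]


def qinq_pop(frame):
    """Remove the outer tag, restoring the frame the campus sent."""
    (tpid,) = struct.unpack("!H", frame[12:14])
    if tpid != TPID_STAG:
        raise ValueError("frame has no outer 802.1ad tag")
    return frame[:12] + frame[16:]


def vlan_ids(frame):
    """Return the VLAN IDs found in the frame, outermost first."""
    vids, offset = [], 12
    while offset + 4 <= len(frame):
        tpid, tci = struct.unpack("!HH", frame[offset:offset + 4])
        if tpid not in (TPID_CTAG, TPID_STAG):
            break
        vids.append(tci & 0x0FFF)
        offset += 4
    return vids


dst = bytes.fromhex("ffffffffffff")
src = bytes.fromhex("020000000001")
# 0x88B5 is an EtherType reserved for local experimental use (non-IP payload).
campus_frame = dst + src + vlan_tag(TPID_CTAG, 1750) + struct.pack("!H", 0x88B5) + b"experiment"

tunneled = qinq_push(campus_frame, 920)
print(vlan_ids(campus_frame))  # [1750]
print(vlan_ids(tunneled))      # [920, 1750]
```

The intermediate network forwards on the outer ID only, so the campus VLAN ID passes through unchanged and is visible again once the outer tag is popped.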

Direct Fiber Connection

Internet2 and NLR can also support direct fiber connections to their equipment at some GENI locations, which generally allows for higher layer 2 throughput and more flexibility for experimenters. For example, campuses may arrange for direct fiber connections to Internet2 or NLR’s OpenFlow core networks. Dedicated fiber connections generally have higher costs and longer lead times than other network options.

Higher Layer Tunneling

Experimenters without layer 2 data connections to GENI can use tunneling protocols such as GRE to interoperate with each other and with layer 2 experimenters. OpenVPN software is also used in GENI to manage connections across diverse networks.
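As an illustration of how a layer 2 frame can ride over a layer 3 path, the sketch below wraps an Ethernet frame in a base GRE header (as described in RFC 2784) using protocol type 0x6558, Transparent Ethernet Bridging. It is a simplified model: the outer IP header (IP protocol 47) that an operating system or router would add is omitted, optional GRE fields are not handled, and the function names and sample frame are hypothetical.

```python
import struct

GRE_PROTO_TEB = 0x6558  # Transparent Ethernet Bridging: payload is an Ethernet frame


def gre_encap(ethernet_frame):
    """Prefix the frame with a 4-byte base GRE header (no optional fields)."""
    flags_and_version = 0  # no checksum, key, or sequence number; version 0
    return struct.pack("!HH", flags_and_version, GRE_PROTO_TEB) + ethernet_frame


def gre_decap(packet):
    """Strip the base GRE header and return the inner Ethernet frame."""
    flags_and_version, proto = struct.unpack("!HH", packet[:4])
    if flags_and_version != 0:
        raise ValueError("optional GRE fields are not handled in this sketch")
    if proto != GRE_PROTO_TEB:
        raise ValueError("payload is not an Ethernet frame")
    return packet[4:]


# Hypothetical inner frame: destination MAC, source MAC, EtherType, payload.
inner = (
    bytes.fromhex("ffffffffffff")
    + bytes.fromhex("020000000001")
    + struct.pack("!H", 0x88B5)  # local experimental EtherType
    + b"experiment"
)

packet = gre_encap(inner)
print(packet[:4].hex())  # 00006558
```

Because the inner payload is a complete Ethernet frame, the far end of the tunnel can hand it to a layer 2 experiment as if it had arrived over a VLAN.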

References

GENI

- http://www.geni.net

- http://groups.geni.net/geni/wiki/GeniNewcomers

- http://groups.geni.net/geni/wiki/GeniSysOvrvw

- http://groups.geni.net/geni/wiki/CampusConnectivity

OpenFlow

- http://www.openflowswitch.org/

- http://www.openflowswitch.org/foswiki/bin/view/OpenFlow/Deployment/ (getting started)

- http://openflowswitch.org/wk/index.php/Tunneling (documents various tunneling approaches)

- http://www.openflowswitch.org/foswiki/bin/view/OpenFlow/Deployment/HOWTO/LabSetup

- http://www.openflowswitch.org/foswiki/bin/view/OpenFlow/Deployment/HOWTO/ProductionSetup/ExampleNetwork

Networking

- QinQ: http://en.wikipedia.org/wiki/QinQ

- Provider Bridges: http://en.wikipedia.org/wiki/802.1ad

- Provider Backbone Bridges: http://en.wikipedia.org/wiki/IEEE_802.1ah-2008

- MPLS: http://en.wikipedia.org/wiki/Multiprotocol_Label_Switching
