Version 37 (modified 12 years ago)

Project Number

1794

Project Title

Integrating a CRON (Cyberinfrastructure of Reconfigurable Optical Network) Testbed into ProtoGENI

a.k.a. CRON

Technical Contacts

Principal Investigator: Seung-Jong Park sjpark@csc.lsu.edu

Co-PI: Rajgopal Kannan rkannan@csc.lsu.edu

PoC for escalation group:

PI: Seung‐Jong Park

Computer Science and Center for Computation & Technology

Louisiana State University

289 Coates Hall

Baton Rouge, LA 70803

Seung-Jong Park

Participating Organizations

Computer Science

Louisiana State University

289 Coates Hall

Baton Rouge, LA 70803

GPO Liaison System Engineer

Vic Thomas vthomas@geni.net

Scope

This effort provides a reconfigurable optical network emulator aggregate (CRON) connected to the GENI backbone over the Louisiana Optical Network Initiative (LONI). Optical network emulation plays two roles in GENI. It provides a predictable environment for repeatable experiments, and it allows networking research experiments to be tested early, before real network resources are acquired. The tools and services developed by this project will integrate with the ProtoGENI suite of tools. The aggregate manager and the network connections between LONI and GENI built for this project will also allow other LONI sites to participate in GENI.

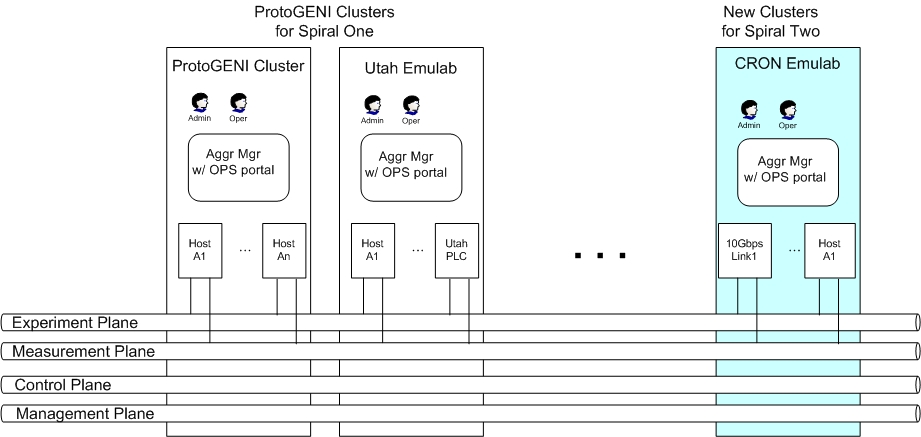

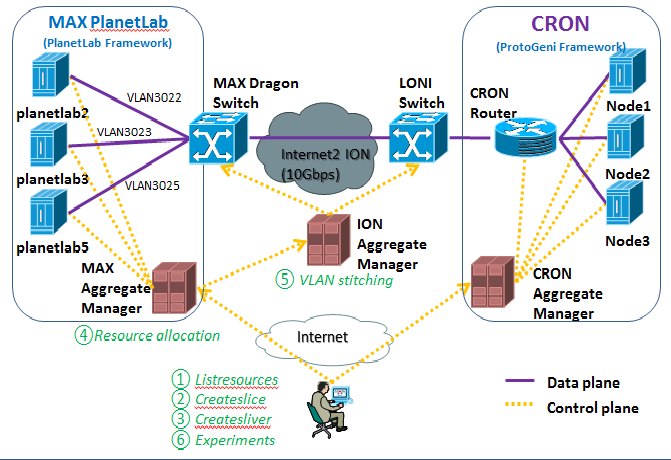

The basic workflow for integrating CRON with ProtoGENI is similar to that of the current federations between ProtoGENI and the other clusters shown in Figure 1. This is because the CRON testbed adopts the architectures and protocols of Emulab and ProtoGENI. It modifies them to fit the characteristics of CRON's resources, such as hardware emulators, a high-capacity 10Gbps switch, and user opt-in control suited to scientific application users.

All of CRON's resources will be defined using resource specifications (RSpecs). These include 10Gbps paths, virtual built-in optical networks (NLR, LONI, Internet2, etc.), and computing resources. The defined resources will be reported to the ProtoGENI Clearinghouse so that any user at another federated site can share them within an assigned slice. The resources to be reported to the ProtoGENI Clearinghouse are as follows:

(1) four 10Gbps virtual optical networking and computing environments

(2) four 5Gbps virtual optical networking and computing environments
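The resource list above can be pictured as an advertisement RSpec. The following is a minimal sketch under several assumptions. The namespace is loosely modeled on GENI RSpec v3, and the `capacity` and `emulator` child elements are hypothetical simplifications that have not been validated against any real schema. The authority name is a placeholder. The actual CRON RSpec is documented in the 1Q2010 status report and is not reproduced here.

```python
import xml.etree.ElementTree as ET

# Assumption: namespace loosely modeled on GENI RSpec v3; the element layout
# below is simplified and NOT validated against the real schema.
NS = "http://www.geni.net/resources/rspec/3"
AUTHORITY = "cron.example.edu"  # hypothetical authority name


def advertise(paths):
    """Build an advertisement RSpec from (name, capacity_gbps, emulator) tuples."""
    ET.register_namespace("", NS)  # serialize with a default xmlns
    rspec = ET.Element(f"{{{NS}}}rspec", type="advertisement")
    for name, capacity_gbps, emulator in paths:
        link = ET.SubElement(
            rspec,
            f"{{{NS}}}link",
            component_id=f"urn:publicid:IDN+{AUTHORITY}+link+{name}",
        )
        # Hypothetical per-path metadata: capacity in kbps, and which kind of
        # emulator (hardware or software) backs the path.
        ET.SubElement(link, f"{{{NS}}}capacity").text = str(capacity_gbps * 1_000_000)
        ET.SubElement(link, f"{{{NS}}}emulator").text = emulator
    return ET.tostring(rspec, encoding="unicode")


# The two resource groups listed above: four 10Gbps and four 5Gbps paths.
CRON_PATHS = [(f"hw{i}", 10, "hardware") for i in range(1, 5)] + [
    (f"sw{i}", 5, "software") for i in range(1, 5)
]

if __name__ == "__main__":
    print(advertise(CRON_PATHS))
```

An aggregate would return a document of this kind when asked which resources it offers. A slice request would use a similar structure with `type="request"`.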

CRON will host two servers to manage and exchange information about the defined resources, along with management and control data. These are an aggregate manager and a component manager, which administer resources and slices using common global identifiers (GIDs).
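Resources and slices are named with global identifiers. In ProtoGENI, a GID is an X.509 certificate that carries a URN of the form `urn:publicid:IDN+<authority>+<type>+<name>`. The minimal sketch below builds and splits only that URN string. It ignores the certificate itself and the full escaping rules for publicid URNs. The authority `cron.example.edu` and the helper names are hypothetical.

```python
from typing import NamedTuple

# GENI-style URN prefix; the authority used in examples is hypothetical.
URN_PREFIX = "urn:publicid:IDN+"


class Urn(NamedTuple):
    authority: str  # site authority that issued the identifier
    kind: str       # resource type, e.g. "user", "slice", "node"
    name: str       # name unique within the authority and type


def make_urn(authority: str, kind: str, name: str) -> str:
    """Build a URN string; '+' separates fields, so it may not appear in them."""
    for part in (authority, kind, name):
        if not part or "+" in part:
            raise ValueError(f"invalid URN field: {part!r}")
    return f"{URN_PREFIX}{authority}+{kind}+{name}"


def parse_urn(urn: str) -> Urn:
    """Split a URN produced by make_urn back into its three fields."""
    if not urn.startswith(URN_PREFIX):
        raise ValueError(f"not a GENI-style URN: {urn!r}")
    fields = urn[len(URN_PREFIX):].split("+")
    if len(fields) != 3:
        raise ValueError(f"expected authority+type+name: {urn!r}")
    return Urn(*fields)
```

For example, `make_urn("cron.example.edu", "node", "pc1")` yields `urn:publicid:IDN+cron.example.edu+node+pc1`. Using one identifier format lets the aggregate manager and component manager refer to the same node or slice unambiguously.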

The figure below shows how CRON is federated into ProtoGENI Cluster C in Spiral 2.

Current Capabilities

1. Introduction

CRON is a project funded by the NSF MRI (Major Research Instrumentation) program that builds a virtual high-speed optical networking and computing testbed. The CRON testbed can simultaneously provide up to four 10Gbps hardware-emulated optical paths, four 5Gbps software-emulated optical paths, and 16 high-end workstations attached to those paths. Many application developers and networking researchers can share these virtually created high-speed networking and computing environments. They need no technical knowledge of networks and communications, and they do not interfere with one another. This virtualization feature of CRON matches GENI's concept of "Virtualization and Other Forms of Resource Sharing."

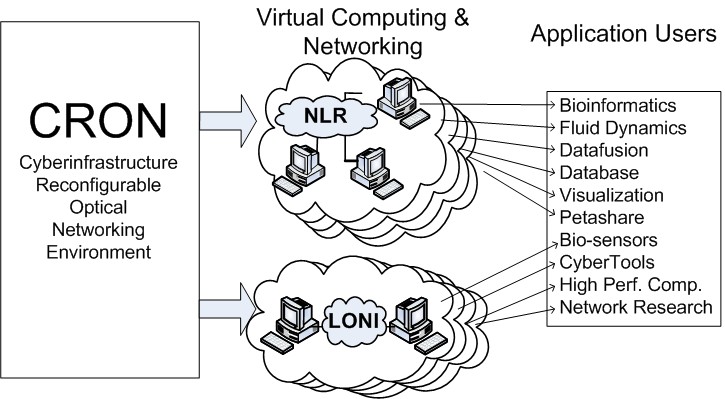

The cyberinfrastructure, CRON, provides integrated and automated access to a wide range of high-speed networking configurations. Figure 1 shows how CRON can be reconfigured to emulate optical networks such as NLR (National LambdaRail), Internet2, and LONI (Louisiana Optical Network Initiative). It can also emulate purely user-defined networks with different characteristics, such as bandwidth, latency, and data loss rate. Moreover, users can dynamically reconfigure entire computing environments, such as operating systems, middleware, and applications, based on their specific demands. Because CRON is automated and reconfigurable, all types of experiments over CRON will be repeatable and controllable. This reconfigurable feature of CRON corresponds to GENI's concept of "Programmability."

3. Architecture of CRON

The figure above shows the architecture of CRON, which consists of two main parts:

(i) hardware (H/W) components, including a 10Gbps switch, a 1Gbps switch, optical fibers, network emulators, and workstations;

(ii) software (S/W) components, namely an automatic configuration server that integrates all the H/W components to create virtual networking and computing environments based on users' requirements.

All components are connected on two networking planes: a control plane using 100/1000 Mbps Ethernet links and a data plane using 10 Gbps optical links. The data-plane switch has four external 10 Gbps optical connections. These allow access from outside networks such as Internet2, NLR, and LONI, and connect CRON to external compute resources. They extend the capacity of CRON and integrate it with existing networks and projects for the cooperative scenarios shown in the figure.

Because hardware network emulators are expensive, we will connect them to only four optical links. These links will be allocated to demands for more than 5 Gbps of bandwidth. The remaining links will be connected to machines running software emulators; specifically, we use the NIST Net emulator. NIST Net supports only up to 5 Gbps of bandwidth because of the computing overhead on the CPUs of the machines it runs on.
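The allocation rule above can be sketched as a small placement function. Only the 10 Gbps and 5 Gbps limits and the count of four paths of each kind come from this page. The function name and the rest of the policy are our assumptions for illustration, not the CRON implementation. The assumed policy places the largest requests first and lets small requests spill over onto idle hardware paths.

```python
# Limits taken from the text: hardware-emulated paths run at up to 10 Gbps,
# NIST Net software-emulated paths at up to 5 Gbps.
HW_MAX_GBPS = 10.0
SW_MAX_GBPS = 5.0


def assign_emulators(requests, hw_paths=4, sw_paths=4):
    """Map each requested path (name -> Gbps) to 'hardware' or 'software'.

    Hypothetical policy: requests above the software limit must use a
    hardware emulator; smaller requests use software paths first and fall
    back to a spare hardware path only when the software paths run out.
    """
    plan = {}
    hw_free, sw_free = hw_paths, sw_paths
    # Place the largest requests first so they are not crowded out of the
    # scarce hardware paths by small requests spilling over.
    for name, gbps in sorted(requests.items(), key=lambda kv: kv[1], reverse=True):
        if gbps > HW_MAX_GBPS:
            raise ValueError(f"{name}: {gbps} Gbps exceeds a 10 Gbps path")
        if gbps > SW_MAX_GBPS:
            if hw_free == 0:
                raise RuntimeError(f"{name}: no hardware-emulated path left")
            plan[name] = "hardware"
            hw_free -= 1
        elif sw_free > 0:
            plan[name] = "software"
            sw_free -= 1
        elif hw_free > 0:
            plan[name] = "hardware"
            hw_free -= 1
        else:
            raise RuntimeError(f"{name}: no emulated path left")
    return plan
```

For example, requests of 8, 5, and 2 Gbps map the 8 Gbps path to a hardware emulator and the other two to NIST Net machines. A fifth request above 5 Gbps would be rejected because only four hardware-emulated paths exist.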

All components of CRON, except the remote access clients, are located at LSU, which already has established 10 Gbps optical paths to LONI and Internet2. Although all computing and networking resources are physically at LSU, CRON provides virtual networking and computing environments to users at other locations.

Major Accomplishments

- Sep 2009 - Finished purchasing hardware, including servers, racks, 10Gbps network cards, a 1Gbps control switch, a 10Gbps data switch, and connection cables.

- Oct 2009 - Finished the OPS server, including the user file service and mail service. Set up a mail relay with CCT through CCT's mail exchange server.

- Nov 2009 - Finished the testbed control network, including configuration of the Cisco 3560E switch.

- Dec 2009 - Finished the BOSS server, including the web service, account management, DNS service, and PXE/DHCP configuration.

- Dec 2009 - Moved the CRON testbed cluster from the testing machine cabinet to the first-floor machine room of the Frey building.

- Jan 2010 - Finished the Memory File System (MFS), both FreeBSD-based and Linux-based, and completed the database and webpage configuration. Updated the FreeBSD-based MFS from version 6.2 to 6.4 and then to the latest FreeBSD 7.2 MFS. Customized a Fedora 8 image to be compatible with the CRON project hardware. However, the generic Emulab FreeBSD 6.x image cannot be used because of the advanced SAS disk bus and RAID controllers of the Sun Fire X4240 hardware.

- Jan 2010 - Finished network access for the BOSS and OPS servers, both within LSU (including LSU wireless access) and from the outside Internet.

- Feb 2010 - Finished the serial-line control network. The serial cables had to be tested because the Sun Fire X4240 has a special serial-port pinout; Comtrol's official technical support provided the correct cable pinout design. The serial control network currently works but has some display errors. We have asked CCT IT support to help make such serial cables for testing.

- Mar 2010 - Upgraded the CRON system from the Utah Emulab stable source branch.

Project participants

Professor Seung‐Jong Park: PI

Cheng Cui: CRON system lead developer

Mohammed Abul Monzur Azad: CRON system developer

Praveenkumar Shivappa Kondikoppa: CRON system developer

Lin Xue: CRON system developer

[Cheng, Mohammed, Praveenkumar and Lin are Ph.D. students at LSU.]

Outreach activities

The CRON project already envisages close cooperation between LSU and Southern University via a shared testbed. This unites two institutions in an EPSCoR state that struggles to overcome historic disadvantages related to education and poverty levels. Due in part to the large African American population of its state, LSU has a history of minority recruitment (one of the co-PI's Ph.D. students is in fact African American). We expect to hire several more minority graduate students to work on networking research problems using the CRON infrastructure.

The two PIs regularly teach the graduate High-Speed Networking course (CSC 7502) and the primary undergraduate computer networks course in the LSU computer science department (CSC 4501). We plan to add a CRON laboratory component to these courses. Hands-on experiments in CRON's virtual networking and computing environment will give students a better understanding of the benefits of virtualization and network reconfigurability. We plan to cross-list these courses with Southern University so that students there can also take part in virtualization research through class experiments. Through this collaboration, the two institutions will be better equipped to train the next generation of engineers for the network computing industry, which is expected to grow rapidly over the next several years.

The LSU computer science department also hosts an annual summer workshop for incoming undergraduates called Computer Science Intensive Orientation for Students (CIOS). We plan to use the workshop to introduce freshman computer science students to networking research through CRON presentations and on-site demos.

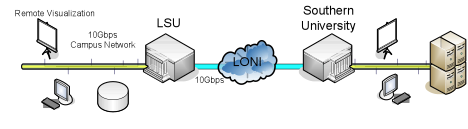

Other Contributions

Our goal is to actively involve minority institutions and underrepresented students in this project. As a preliminary step toward this broader outreach, we have already established an experimental testbed connection between LSU and Southern University through LONI for networking experiments, as shown in Figure 4. Southern University is one of the nation's largest Historically Black Colleges and Universities. Many underrepresented minority institutions suffer from the last-mile problem and cannot access high-speed networking resources. The broader impact of CRON at LSU will therefore be to enable these institutions to perform experiments and research.

We also plan to publicize research opportunities in CRON at our annual departmental workshop for incoming freshman undergraduates and interested high-school students. This workshop, in which the PIs participated in 2008, is called the Computer Science Intensive Orientation for Students (CIOS). It covers basic introductions to key aspects of computer science as a discipline and a profession. In 2010, we plan to use the workshop to introduce freshman computer science students to networking research through CRON presentations and demos. We also hope to increase undergraduate and high-school participation in the project through summer internships offered under the LSU Chancellor's undergraduate research program, which mentors honors undergraduate students.

Federation of CRON

The goal of CRON federation is to connect CRON with other ProtoGENI and PlanetLab sites through the GENI framework. Federation between CRON and the BBN GPO Lab within ProtoGENI was demonstrated at GEC9. Federation between CRON and PlanetLab MAX was demonstrated at GEC12.

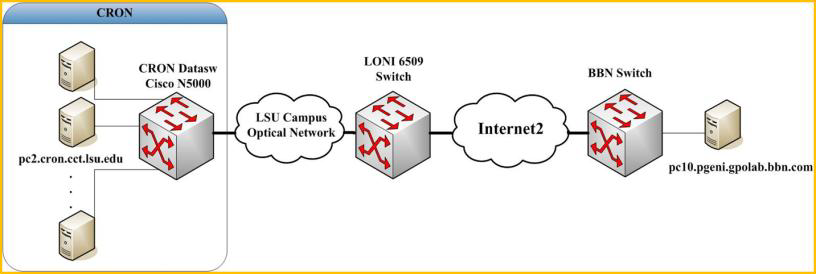

Federating CRON with ProtoGENI@BBN GPOLab

We federated the CRON testbed with ProtoGENI by connecting one server at the CRON testbed to one server at the GPO Lab at BBN. Using the ProtoGENI package on Emulab, we can reserve external resources from other ProtoGENI sites. The data interfaces on both sides are connected to Internet2 through ION layer-2 services.
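A layer-2 path between the two servers only works if every hop can carry the traffic on an agreed VLAN. As an illustration, the sketch below selects a VLAN ID that is free at every hop. It is a simplified model rather than the ION algorithm: a real layer-2 service may also translate tags between segments, and this sketch ignores that. The hop names and VLAN ranges in the example are invented, and the helper names are hypothetical.

```python
from typing import Iterable, Optional, Set, Tuple

# Assumption: valid 802.1Q VLAN IDs are 1..4094.
VLAN_MIN, VLAN_MAX = 1, 4094


def vlan_range(lo: int, hi: int) -> Set[int]:
    """Inclusive range of VLAN IDs offered by one hop."""
    return set(range(lo, hi + 1))


def pick_common_vlan(hops: Iterable[Tuple[str, Set[int]]]) -> Optional[int]:
    """Return the lowest VLAN ID available on every hop, or None if none is."""
    hops = list(hops)
    if not hops:
        raise ValueError("need at least one hop")
    # Intersect the per-hop availability sets; the first set acts as `self`.
    common = set.intersection(*(set(vlans) for _, vlans in hops))
    usable = {v for v in common if VLAN_MIN <= v <= VLAN_MAX}
    return min(usable) if usable else None
```

With hypothetical availability CRON 3000-3010, ION 3005-3100, and GPOLab {3007, 3008, 3500}, the common tag is 3007. A `None` result means the request must use different VLANs or rely on tag translation along the path.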

Federating CRON with PlanetLab@MAX

In GENI aggregation, a GENI clearinghouse first authenticates experimenters and issues them the credentials needed to obtain GENI resources for experimentation. GENI aggregates then provide resources to experimenters who hold GENI credentials. A GENI slice holds a collection of computing and communications resources capable of running an experiment or a wide-area service. An RSpec is the mechanism for advertising, requesting, and describing the resources used by a slice.

The Internet2 ION Aggregate Manager performs VLAN stitching to connect CRON and MAX as a coherent network. CRON will use the GENI Aggregate Manager API, together with tools such as Flack and Omni. The GENI Aggregate Manager API provides a common interface to Aggregate Managers, including those of PlanetLab, ProtoGENI, and OpenFlow. Network stitching will also be provided so that CRON can connect into GENI through Internet2. GENI network stitching constructs a topology of substrates as represented by their Aggregate Managers. Each Aggregate Manager has its own RSpec, a topology description that defines the resources of its individual substrate. More technical information is available in the 4Q 2011 Status Report.
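The clearinghouse and aggregate workflow above ends in XML-RPC calls to an aggregate manager. The sketch below only marshals two such calls with Python's standard `xmlrpc.client`, so that their shape can be inspected without a server. It assumes our reading of version 2 of the GENI AM API, where `ListResources` takes `(credentials, options)` and `CreateSliver` takes `(slice_urn, credentials, rspec, users, options)`. The slice URN, credential, RSpec, and user values are placeholders. `call_am` is a hypothetical helper that would send a call over SSL with a client certificate; it is not exercised here. In practice, tools such as Omni issue these calls for the experimenter.

```python
import xmlrpc.client

# Placeholders only: a real call needs a signed credential from the
# clearinghouse and an SSL client certificate, neither of which is shown.
SLICE_URN = "urn:publicid:IDN+example.org+slice+demo"
CREDENTIAL = "<credential>placeholder</credential>"
REQUEST_RSPEC = '<rspec type="request"/>'
USERS = [{"urn": "urn:publicid:IDN+example.org+user+alice",
          "keys": ["ssh-rsa <public-key> alice"]}]


def list_resources_request(credentials):
    """Marshal a ListResources call (assumed AM API v2 signature)."""
    options = {
        "geni_available": True,  # only advertise currently free resources
        "geni_rspec_version": {"type": "GENI", "version": "3"},
    }
    return xmlrpc.client.dumps((credentials, options), methodname="ListResources")


def create_sliver_request(slice_urn, credentials, request_rspec, users):
    """Marshal a CreateSliver call with an empty options struct."""
    params = (slice_urn, credentials, request_rspec, users, {})
    return xmlrpc.client.dumps(params, methodname="CreateSliver")


def call_am(url, ssl_context, method, *params):
    """Hypothetical helper: send one AM API call to a real aggregate manager."""
    proxy = xmlrpc.client.ServerProxy(url, context=ssl_context)
    return getattr(proxy, method)(*params)


if __name__ == "__main__":
    print(list_resources_request([CREDENTIAL]))
```

Marshalling the requests separately from sending them keeps the request structure testable offline. The same parameters would be passed unchanged to `call_am` against a live aggregate.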

Milestones

CRON: S2.a - Identify POC for GENI Prototype Response and Escalation Group.

CRON: S2.b - Establish a 10Gbps connection from LONI to ProtoGENI through Internet2.

CRON: S2.c - Establish a 10Gbps connection from CRON to ProtoGENI through LONI and Internet2.

CRON: S2.d - CRON RSpec definition completed and documented. See the 1Q2010 status report for details.

CRON: S2.e - Site authority and component manager ready.

CRON: S2.f - Report on experiment over the federation among CRON, LONI, and ProtoGENI sites.

CRON: S3.a - Demonstration and outreach at GEC9

CRON: S3.b - CRON aggregate manager is functional

CRON: S3.c - Demonstration and outreach at GEC10

CRON: S3.d - Outreach to current CRON user community

CRON: S3.e - GENI API on aggregate manager supports slices over I2

CRON: S3.f - Demonstration and outreach at GEC11

CRON: S3.g - Deliver software, documentation, user guides

Project Technical Documents

Links to wiki pages for the project's technical documents go here. List should include any document in the working groups, as well as other useful documents. Projects may have a full tree of wiki pages here.

Quarterly Status Reports

Spiral 2 Connectivity

Links to wiki pages about details of the infrastructure that the project is using (if any). Examples include IP addresses, hostnames, URLs, DNS servers, local site network maps, VLAN IDs (if permanent VLANs are used), and pointers to public keys. The GPO may write first drafts of any of these and have the PI correct them to bootstrap. This section may also include ticket links for pending or known connectivity issues. Many projects will have a full tree of wiki pages here.

Related Projects

Includes non-GENI projects.

Attachments (18)

- milestone 2b- Draft.docx (151.2 KB) - added 14 years ago. CRON Milestone S2.b

- WeatherMap.png (189.4 KB) - added 14 years ago.

- Contribution.png (32.6 KB) - added 14 years ago.

- CRON-GENI.png (118.4 KB) - added 14 years ago.

- data-plane.jpg (52.0 KB) - added 14 years ago.

- control.jpg (46.8 KB) - added 14 years ago.

- CRON.jpg (78.0 KB) - added 14 years ago.

- CRON-QSR-1Q2010.pdf (321.7 KB) - added 14 years ago. QSR Jan-Mar 2010

- CRON-QSR-1Q2010.2.pdf (321.7 KB) - added 14 years ago. QSR Jan-Mar 2010

- CRON-Spiral2-Project-Review.pptx (653.7 KB) - added 14 years ago. CRON project review PowerPoint file for Spiral 2

- 1Quarter2011_Report.pdf (709.7 KB) - added 13 years ago. 1Q2011 Report

- cron_bbn.png (108.1 KB) - added 12 years ago. CRON to BBN

- cron_max.png (57.9 KB) - added 12 years ago. CRON to MAX

- 1794-IntegratingCRONintoProtoGENI-Report-31June-2011.pdf (403.9 KB) - added 12 years ago. 2Q2011 Status Report

- 1794-IntegratingCRONintoProtoGENI-Report-05Oct-2011.pdf (804.5 KB) - added 12 years ago. 3Q2011 Status Report

- 1794-IntegratingCRONintoProtoGENI-Report-03Jan-2012.pdf (714.0 KB) - added 12 years ago. 4Q2011 Status Report

- 1794-IntegratingCRONintoProtoGENI-Report-31Mar-2012.pdf (412.9 KB) - added 12 years ago.

- CFI2012-Short-Network-Aware_Scheduling.pdf (337.6 KB) - added 12 years ago. Network Aware Scheduling