This is an example topology of a campus OpenFlow network, designed to allow experimenters to access the GENI network core in a variety of ways depending on their needs. In particular, it describes a single topology for connecting resources at campuses to a local OpenFlow network, which then offers three options for experimenters to link those resources to inter-campus VLANs.

Note that this page assumes that your campus is already connected to the GENI network core. The GENI Connectivity page has more information about how to get connected to the GENI core, how to get connected to other campuses through the core, etc.

Options

The three options for experimenters are:

- Link directly to one or more pre-provisioned core VLANs, without using any campus OpenFlow resources. This is a very simple option for experiments that don't need to use OpenFlow campus resources at all, and merely want to access the GENI network core.

- Use OpenFlow to link to one or more pre-provisioned core VLANs, via a cross-connect cable that translates from a campus OpenFlow VLAN onto the core VLANs. This is a fairly simple option for experiments that want to use OpenFlow campus resources, and can use existing core VLANs.

- Use OpenFlow to link to any core VLANs, by having OpenFlow configure the switch to do VLAN translation. This is a more complicated option for experiments that want to use OpenFlow campus resources, and need to use VLANs that aren't provisioned with a physical cross-connect for whatever reason (e.g. large numbers of VLANs, dynamically provisioned VLANs, etc).

Most OpenFlow experiments will probably be able to use the second option, which offers a good combination of performance, features, and ease of use.

Operations

Once you have this set up, experimenters will be able to create OpenFlow slivers to use in their experiments. Before they can actually use those slivers, though, you'll need to approve them (or configure FOAM to approve them automatically). Here are some things to think about when doing that:

- Does the sliver seem to accomplish what the experimenter is trying to accomplish? For example, if they tell you that they want traffic to and from a particular IP subnet for your MyPLC plnodes, you should confirm that this is actually what they've requested. If you aren't familiar with what the experimenter is trying to do, you should probably ask them for more information about their experiment, since it's easier to evaluate their request if you know what their intended effect is.

- Is the experimenter requesting a safe topology? In particular, if they request a topology that includes more than one of the ports that cross-connect VLAN 1750 and the inter-campus VLANs, this can cause traffic leaks and broadcast storms. Some experimenters have good reasons for wanting a topology with multiple paths into the core, but you should confirm that they understand what they're doing, and that they know how to ensure that the controller for their sliver won't leak traffic or cause storms.

- Does their sliver include an IP subnet? If so, check the network core subnet reservations page and confirm that they've reserved the subnet they've requested.

- Does their sliver include an entire experimental (non-standard) ethertype? If so, check the network core ethertype reservations page and confirm that they've reserved the ethertype they've requested.

- Does their sliver include an entire port on a switch? If so, confirm that they have an exclusive reservation for the entire device (or interface) connected to that port. (If the device is a MyPLC plnode, for example, they don't have an exclusive reservation for it; they should be requesting only an IP subnet or ethertype or some such, not all traffic to the entire port. If it's a Wide-Area ProtoGENI node (wapgnode), they might -- although you may need a Utah ProtoGENI admin to confirm that.)

When in doubt, don't hesitate to ask the experimenter for more information about what they're trying to do. They're using your campus's resources, and it's important that you understand how they're going to use them -- and it makes it easier for you to help them if they have questions or run into problems.
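Parts of the checklist above can be mechanized before a human looks at the request. The following is a minimal sketch, not part of FOAM: the `SliverRequest` fields, the reservation sets, and the `review` function are all hypothetical, and the cross-connect port numbers simply mirror the example topology on this page.

```python
from dataclasses import dataclass, field

# Assumption: ports 3 and 5 are the VLAN 1750 cross-connect ports, as in the
# example topology on this page.
CROSS_CONNECT_PORTS = {3, 5}


@dataclass
class SliverRequest:
    """Hypothetical summary of what an experimenter asked for."""
    experimenter: str
    ports: set = field(default_factory=set)        # every port in the topology
    ip_subnets: set = field(default_factory=set)   # e.g. {"10.42.11.0/24"}
    ethertypes: set = field(default_factory=set)   # e.g. {0x88B5}
    whole_ports: set = field(default_factory=set)  # ports requested with all traffic


def review(request, reserved_subnets, reserved_ethertypes, exclusive_ports):
    """Return a list of concerns to raise with the experimenter (empty if none)."""
    concerns = []
    used = request.ports & CROSS_CONNECT_PORTS
    if len(used) > 1:
        concerns.append(f"topology includes multiple cross-connect ports {sorted(used)}")
    for subnet in sorted(request.ip_subnets - reserved_subnets):
        concerns.append(f"subnet {subnet} is not reserved")
    for ethertype in sorted(request.ethertypes - reserved_ethertypes):
        concerns.append(f"ethertype {ethertype:#06x} is not reserved")
    for port in sorted(request.whole_ports - exclusive_ports):
        concerns.append(f"entire port {port} requested without an exclusive reservation")
    return concerns
```

An empty result does not mean the sliver should be approved; it only means none of these mechanical checks fired. Whether the sliver matches what the experimenter intends still needs a conversation.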

Diagram

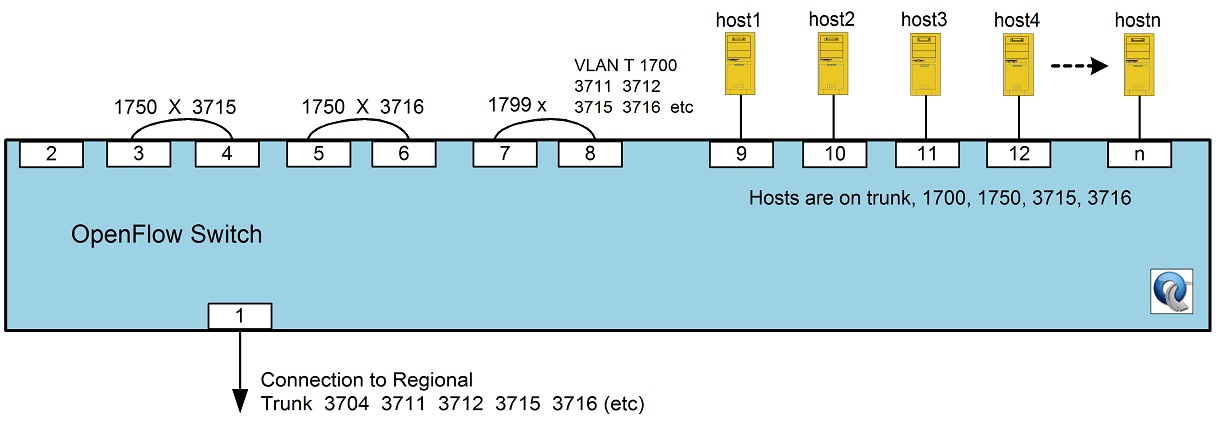

Here's a minimalist diagram of a single OpenFlow switch configuration that implements the infrastructure to support all three of these options.

In text form:

| Port | Connects to | VLAN(s) |
| --- | --- | --- |
| 1 | regional | T 3704 3711 3712 3715 3716 (etc) |
| 2 | nothing | this port intentionally left blank |
| 3 | port 4 | 1750 |
| 4 | port 3 | 3715 |
| 5 | port 6 | 1750 |
| 6 | port 5 | 3716 |
| 7 | port 8 | T 1700 3704 3711 3712 3715 3716 (etc) |
| 8 | port 7 | 1799 |
| 9 | a host | T 1700 1750 3715 3716 |
| 10 | another host | T 1700 1750 3715 3716 |
| 11 | another host | T 1700 1750 3715 3716 |
| 12+ | more hosts | T 1700 1750 3715 3716 |

Visually, this is the layout shown in the diagram above.

Each of the following sections refers to this diagram to explain how the various options are implemented. The precise port numbers aren't actually important; they're just selected for ease of explanation here.

VLANs starting with "17" are local to each campus, and are OpenFlow-controlled; the ones starting with "37" are inter-campus VLANs, which may be OpenFlow-controlled on campuses, or not. (This is up to each campus, and doesn't materially affect the topology.)
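For scripting against this topology, the port table and the numbering convention can be captured as data. This is only a sketch: the names `PORT_VLANS`, `classify_vlan`, and `ports_carrying` are made up for illustration, and the ranges 1700-1799 and 3700-3799 are one reading of "starting with 17" and "starting with 37" that assumes four-digit VLAN IDs.

```python
# Port table from the example topology on this page: port -> VLANs it carries.
# Ports 10 and up are omitted because they are configured like port 9.
PORT_VLANS = {
    1: [3704, 3711, 3712, 3715, 3716],
    3: [1750],
    4: [3715],
    5: [1750],
    6: [3716],
    7: [1700, 3704, 3711, 3712, 3715, 3716],
    8: [1799],
    9: [1700, 1750, 3715, 3716],
}


def classify_vlan(vlan_id):
    """Classify a VLAN ID by the convention above (assumes four-digit IDs)."""
    if 1700 <= vlan_id <= 1799:
        return "campus-local"
    if 3700 <= vlan_id <= 3799:
        return "inter-campus"
    return "other"


def ports_carrying(kind):
    """List the ports that carry at least one VLAN of the given kind."""
    return sorted(
        port
        for port, vlans in PORT_VLANS.items()
        if any(classify_vlan(vlan) == kind for vlan in vlans)
    )


print(ports_carrying("inter-campus"))  # [1, 4, 6, 7, 9]
print(ports_carrying("campus-local"))  # [3, 5, 7, 8, 9]
```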

See below for examples of how to configure the interfaces on NEC and HP switches to implement the above topology. (But note that the HP example isn't fully functional -- in particular, the configuration for ports 7 and 8 doesn't correctly implement option 3 above.)

Uplink

Port 1 is the uplink port from the campus OpenFlow switch to the regional network. It might connect directly to the regional, or it might go through other non-OpenFlow campus switches. It's a trunk port, configured to carry all of the VLANs that will be used between the campus and the GENI network core through the regional. All traffic between the campus and the core uses this port.

Port 2 is intentionally left blank. The only reason is cosmetic: if you implement this using exactly these port numbers, the next few pairs of ports (3 and 4, 5 and 6, 7 and 8) will be neatly stacked vertically on a typical switch.

Port-based physical VLAN translation

Ports 3 and 4 are a pair of ports that are directly connected to each other by a short cross-connect cable, to effectively implement VLAN translation at Layer 1 (aka "physical VLAN translation"). Traffic from VLAN 1750 that exits the switch on port 3 will re-enter the switch on port 4, but the switch will now consider that traffic to be on VLAN 3715. Ports 5 and 6 do the same thing, but for VLAN 1750 and VLAN 3716.

Note that the two ports don't have to be on the same switch. In particular, if there were already another campus switch in the path between this OpenFlow switch and the regional, you could connect port 3 on this switch (still on VLAN 1750) to a port on that other campus switch (still on VLAN 3715), and accomplish the same effect. This would free up a port on this OpenFlow switch, but use up a port on the other switch, so campuses should decide where to put the cross-connects based on where ports are scarce.

VLAN 1750 is an OpenFlow-controlled VLAN, shared by multiple experimenters via the FlowVisor. In addition to whatever other OpenFlow programming each experimenter wishes to do with their sliver, the experimenter also uses OpenFlow to direct outbound traffic to a physical port; the port they choose controls which inter-campus VLAN will be used for the outbound traffic. For example, an experiment that wanted to send inter-campus traffic via VLAN 3715 would use OpenFlow to send that traffic out port 3.
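The port selection described above amounts to a lookup from inter-campus VLAN to cross-connect port. The sketch below illustrates that lookup; the dictionary layout of the rule is a made-up description for illustration, not the message format of any particular OpenFlow controller library, and the port numbers are the ones from the example topology.

```python
# Inter-campus VLAN -> port on VLAN 1750 whose cross-connect leads to it
# (values taken from the example topology on this page).
CROSS_CONNECTS = {3715: 3, 3716: 5}


def output_port_for(core_vlan):
    """Return the VLAN 1750 port that leads to the given inter-campus VLAN."""
    try:
        return CROSS_CONNECTS[core_vlan]
    except KeyError:
        raise LookupError(
            f"no physical cross-connect provisioned for VLAN {core_vlan}"
        ) from None


def outbound_rule(host_port, core_vlan):
    """Describe a rule sending traffic from a host port toward one core VLAN.

    The dict shape is hypothetical; a real controller would build the
    equivalent match and output action with its own API.
    """
    return {
        "match": {"in_port": host_port},
        "actions": [{"output": output_port_for(core_vlan)}],
    }


print(outbound_rule(9, 3715))
# {'match': {'in_port': 9}, 'actions': [{'output': 3}]}
```

A VLAN with no cross-connect, such as 3704 in the example topology, raises `LookupError` here.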

Note that since VLAN 1750 is cross-connected to multiple intercampus VLANs, care must be taken to ensure that e.g. broadcast packets aren't flooded out to both VLAN 3715 and 3716. The simplest way to prevent this is for experimenters to never reserve a topology that includes more than one cross-connect port, and for campus FOAM admins to carefully check experimenter requests to make sure that they don't. If a particular experimenter wanted to do a particular experiment that did use multiple inter-campus VLANs, they would need to expressly confirm that they understand the risks and are confident that they won't accidentally flood broadcast traffic, ideally by testing and demonstrating their experiment in a staging environment first.
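To see why a topology with more than one cross-connect port is risky, it helps to model what a flood action does. The sketch below is a deliberately simplified model, not a switch or FlowVisor simulation: it assumes a flood sends a packet out every port in the sliver except the one it arrived on, and it uses the port numbers from the example topology.

```python
# Port on VLAN 1750 -> inter-campus VLAN reached through its cross-connect
# (values taken from the example topology on this page).
CROSS_CONNECTS = {3: 3715, 5: 3716}


def flooded_core_vlans(sliver_ports, in_port):
    """Return the inter-campus VLANs reached when a packet is flooded.

    Simplified model: a flood goes out every sliver port except the ingress.
    """
    out_ports = set(sliver_ports) - {in_port}
    return sorted(CROSS_CONNECTS[p] for p in out_ports if p in CROSS_CONNECTS)


# One cross-connect port: a broadcast from the host on port 9 reaches one VLAN.
print(flooded_core_vlans({3, 9, 10}, in_port=9))  # [3715]

# Two cross-connect ports: the same broadcast reaches both VLANs.
print(flooded_core_vlans({3, 5, 9}, in_port=9))   # [3715, 3716]

# A broadcast arriving from VLAN 3715 (via port 3) leaks into VLAN 3716.
print(flooded_core_vlans({3, 5, 9}, in_port=3))   # [3716]
```

The last case is the one that joins two inter-campus VLANs together: with both cross-connect ports in the sliver, a naive flooding controller forwards broadcasts from one core VLAN into the other.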

Additional VLANs can be set up for physical translation, but each one uses two ports and needs to be physically connected by a campus network admin. This can be done if needed, but should generally be minimized.

OpenFlow-based software VLAN translation

Ports 7 and 8 are a pair of ports that are directly connected to each other by a short cross-connect cable, but unlike the previous pairs, port 7 is a trunk port, carrying any VLANs that the campus network admin wants to allow experimenters to translate between. Port 8 is on VLAN 1799, which is OpenFlow-controlled, using a controller that can rewrite VLAN tags (such as transvl). When a packet from VLAN 1700 goes out port 7, the switch tags it (because port 7 is a trunk port), and the packet arrives on port 8 still tagged, where the transvl controller receives it. The controller can then remove the tag and add a new one (for VLAN 3704, say), and put the packet back out port 8 with the new tag, at which point port 7 receives the tagged packet, strips off the tag, and the switch then handles it in whatever way VLAN 3704 is normally handled.

This approach effectively implements VLAN translation in OpenFlow. It has a few limitations:

- The transvl controller can insert a flow rule to handle the translation, so every packet doesn't have to flow to the controller; but this sort of rewriting operation is typically done in the slow path on the switch, rather than at line speed. This can have a significant performance impact, so this approach is more suitable for experiments that don't have high performance requirements.

- Some switch firmware will reject the tagged packets coming in on port 8, before the transvl controller sees them. In particular, the HP OpenFlow firmware doesn't seem to permit this configuration; the NEC firmware does (both Product and Prototype versions).

The main advantage of this approach is that experimenters can translate between any VLANs carried on port 7, without requiring any physical provisioning from campus network admins. This will matter, for example, once GENI tools become able to provision new inter-campus VLANs all the way to port 7.
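At the packet level, the translation described in this section is a rewrite of the 12-bit VLAN ID inside the 802.1Q tag. The sketch below shows that rewrite on a raw Ethernet frame. It is an illustration of the operation only, not the transvl implementation: a controller would normally install a flow rule with a set-VLAN action rather than edit bytes itself, and the function names here are made up.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q tag


def read_tag(frame):
    """Return (tpid, tci) from bytes 12-15 of an Ethernet frame."""
    if len(frame) < 16:
        raise ValueError("frame too short to carry an 802.1Q tag")
    return struct.unpack("!HH", frame[12:16])


def vlan_id(frame):
    """Return the VLAN ID of an 802.1Q-tagged frame."""
    tpid, tci = read_tag(frame)
    if tpid != TPID_8021Q:
        raise ValueError("frame is not 802.1Q tagged")
    return tci & 0x0FFF


def rewrite_vlan_id(frame, new_vid):
    """Return a copy of the frame with its VLAN ID replaced.

    The priority and drop-eligible bits (top 4 bits of the TCI) are kept.
    """
    if not 1 <= new_vid <= 4094:
        raise ValueError("VLAN ID must be in the range 1-4094")
    tpid, tci = read_tag(frame)
    if tpid != TPID_8021Q:
        raise ValueError("frame is not 802.1Q tagged")
    new_tci = (tci & 0xF000) | new_vid
    return frame[:12] + struct.pack("!HH", tpid, new_tci) + frame[16:]


# A dummy frame tagged for VLAN 1700: zeroed MAC addresses, tag, IPv4 EtherType.
frame = bytes(12) + struct.pack("!HH", TPID_8021Q, 1700) + b"\x08\x00" + b"payload"
translated = rewrite_vlan_id(frame, 3704)
print(vlan_id(frame), "->", vlan_id(translated))  # 1700 -> 3704
```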

Hosts

Ports 9 and 10 (and so on) are connected to the dataplane interfaces on hosts (e.g. MyPLC, ProtoGENI, etc). Their key unusual feature is that they're trunk ports, i.e. they carry multiple tagged VLANs; this requires the hosts that you connect to them to speak 802.1q, aka "VLAN-based subinterfacing". Modern Linux distributions, like Ubuntu and Fedora / Red Hat, do this just fine, with interface names like eth1.1700, eth1.3715, etc. Configuring the hosts' dataplane interfaces with 802.1q, and connecting them as trunk ports, is the key ingredient that allows experimenters to control which VLANs their compute slivers actually connect to. We're working on detailed guidelines for how campus resource operators can enable this on their hosts, and how experimenters can take advantage of it.
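On a Linux host, one way to create such subinterfaces is with the iproute2 `ip link` command. The sketch below only generates the command lines so they can be reviewed before being run as root; the parent interface name `eth1` and the helper name `vlan_subinterface_commands` are assumptions for illustration, and your distribution may prefer its own persistent network configuration instead.

```python
def vlan_subinterface_commands(parent, vlan_ids):
    """Return iproute2 commands that create one 802.1q subinterface per VLAN."""
    commands = []
    for vid in vlan_ids:
        name = f"{parent}.{vid}"
        # Create the tagged subinterface on top of the parent interface.
        commands.append(f"ip link add link {parent} name {name} type vlan id {vid}")
        # Bring it up; the parent interface must also be up.
        commands.append(f"ip link set dev {name} up")
    return commands


# VLANs carried on the host ports in the example topology.
for command in vlan_subinterface_commands("eth1", [1700, 1750, 3715, 3716]):
    print(command)
```

The first two lines printed are `ip link add link eth1 name eth1.1700 type vlan id 1700` and `ip link set dev eth1.1700 up`, which yields the `eth1.1700` naming mentioned above.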

Example configurations

The following configuration, on an NEC switch (running the Product firmware), will implement the topology in the diagram above:

    interface gigabitethernet 0/1
      switchport mode trunk
      switchport trunk allowed vlan 3704,3711,3712,3715,3716
    interface gigabitethernet 0/3
      switchport mode access
      switchport access vlan 1750
    interface gigabitethernet 0/4
      switchport mode access
      switchport access vlan 3715
    interface gigabitethernet 0/5
      switchport mode access
      switchport access vlan 1750
    interface gigabitethernet 0/6
      switchport mode access
      switchport access vlan 3716
    interface gigabitethernet 0/7
      switchport mode trunk
      switchport trunk allowed vlan 1700,3704,3711,3712,3715,3716
    interface gigabitethernet 0/8
      switchport mode dot1q-tunnel
      switchport access vlan 1799
    interface gigabitethernet 0/9
      switchport mode trunk
      switchport trunk allowed vlan 1700,1750,3715,3716
    interface gigabitethernet 0/10
      switchport mode trunk
      switchport trunk allowed vlan 1700,1750,3715,3716
    interface gigabitethernet 0/11
      switchport mode trunk
      switchport trunk allowed vlan 1700,1750,3715,3716
    interface gigabitethernet 0/12
      switchport mode trunk
      switchport trunk allowed vlan 1700,1750,3715,3716

The following configuration, on an HP switch, will implement the topology in the diagram above (although note again that the configuration for ports 7 and 8 doesn't actually have the desired effect):

    vlan 1700
      tagged 7,9-12
    vlan 1750
      tagged 9-12
      untagged 3,5
    vlan 1799
      tagged 8
    vlan 3704
      tagged 1,7
    vlan 3711
      tagged 1,7
    vlan 3712
      tagged 1,7
    vlan 3715
      tagged 1,7,9-12
      untagged 4
    vlan 3716
      tagged 1,7,9-12
      untagged 6
