GRAM Network and Software Architecture Description

Introduction

This document describes the network and software architectural layers and components comprising the GRAM Aggregate Manager.

Network Architecture

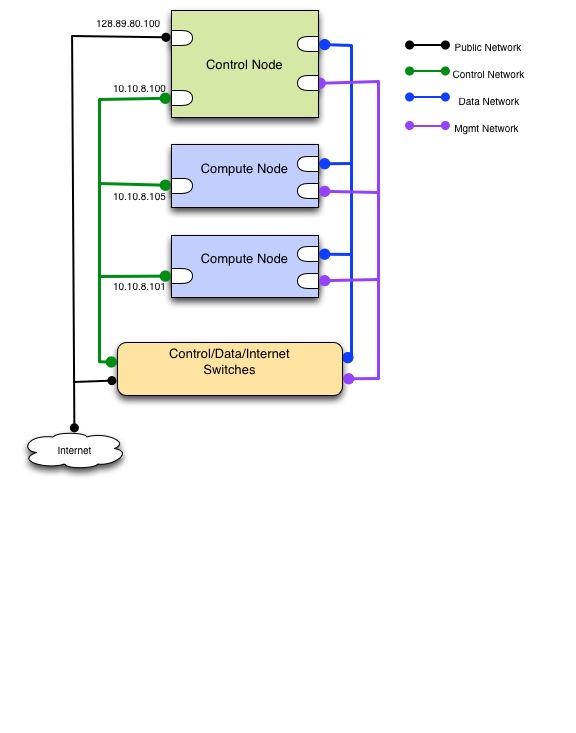

OpenStack and GRAM present software layers on top of rack hardware. It is expected that rack compute nodes are broken into two categories:

- Controller Node: The central management and coordination point for OpenStack and GRAM operations and services. There is one of these per rack.

- Compute Node: The resource from which VMs and network connections are sliced and allocated on request. There are many of these per rack.

OpenStack and GRAM require establishing four distinct networks among the different nodes of the rack:

- Control Network: The network over which OpenFlow and GRAM commands flow between control and compute nodes. This network is NOT OpenFlow controlled and has internal IP addresses for all nodes.

- Data Network: The allocated network and associated interfaces between created VMs representing the requested compute/network resource topology. This network IS OpenFlow controlled.

- External Network: The network connecting the controller node to the external internet. The compute nodes may or may not also have externally visible addresses on this network, for convenience.

- Management Network: Enables SSH entry into and between created VMs. This network is NOT OpenFlow controlled.

The mapping of the networks to interfaces is arbitrary and can be changed by the installer. For this document we assume the following convention:

- eth0: Control network

- eth1: Data network

- eth2: External network

- eth3: Management network

The Controller node will have four interfaces, one for each of the above networks. The Compute nodes will have three (Control, Data and Management) with one (External) optional.
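
The convention above can be written down as a small lookup, which is sometimes handy when checking an installation. This is only an illustrative sketch: the names `NETWORK_INTERFACES` and `required_interfaces` are hypothetical and are not part of GRAM, and an installer may choose a different mapping.

```python
# Interface convention assumed in this document; installers may remap it.
NETWORK_INTERFACES = {
    "control": "eth0",
    "data": "eth1",
    "external": "eth2",
    "management": "eth3",
}


def required_interfaces(node_type, external_on_compute=False):
    """Return {network: interface} for a 'controller' or 'compute' node.

    Compute nodes need Control, Data and Management; External is optional.
    The controller node needs all four.
    """
    if node_type not in ("controller", "compute"):
        raise ValueError("unknown node type: %r" % (node_type,))
    networks = ["control", "data", "management"]
    if node_type == "controller" or external_on_compute:
        networks.append("external")
    return {name: NETWORK_INTERFACES[name] for name in networks}


if __name__ == "__main__":
    print(required_interfaces("controller"))
    print(required_interfaces("compute"))
```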


OpenFlow

OpenFlow is a standard (http://www.openflow.org/documents/openflow-spec-v1.0.0.pdf) for enabling software-defined networking (SDN) at the switch level. That is, OpenFlow-enabled switches allow administrators to control the behavior of switches programmatically.

OpenFlow is based on the interaction of a series of entities:

- Switch: An OpenFlow-enabled switch that speaks the switch side of the OpenFlow protocol

- Controller: A software service to which the OpenFlow switch delegates decisions about traffic routing

- Interfaces: The set of network interfaces connected to and by the switch.

The essential OpenFlow protocol works as follows:

- The switch receives incoming packet traffic.

- The switch looks in its set of "flow mod" rules that tell it how traffic should be dispatched.

- The flow mod table contains "match" rules and "action" rules. If the packet matches the "match" (e.g. same VLAN, same MAC src address, same IP dest address), then the actions are taken.

- If there are no flow mods matching the packet, the packet is sent to the controller. The controller can then take one of several actions, including:

  - Drop the packet

  - Modify the packet and return it to the switch with routing instructions

  - Install a new flow mod to tell the switch how to handle similar traffic in the future without intervention by the controller.
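
The match/action loop described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the OpenFlow wire protocol and not any real controller API: packets and match rules are plain dictionaries, actions are strings, and `Switch`, `matches` and `example_controller` are hypothetical names chosen for illustration.

```python
def matches(match, packet):
    """A match rule is a dict of header fields; every listed field must be equal."""
    return all(packet.get(field) == value for field, value in match.items())


class Switch:
    """Toy switch holding a flow table of (match, actions) pairs."""

    def __init__(self, controller):
        self.flow_table = []
        self.controller = controller  # callable(switch, packet) -> actions

    def install_flow(self, match, actions):
        self.flow_table.append((match, actions))

    def receive(self, packet):
        # First, look for a flow mod whose match portion fits the packet.
        for match, actions in self.flow_table:
            if matches(match, packet):
                return actions
        # Table miss: delegate the decision to the controller.
        return self.controller(self, packet)


def example_controller(switch, packet):
    """Hypothetical policy: drop VLAN 999, otherwise forward and install a flow."""
    if packet.get("vlan") == 999:
        return ["drop"]
    actions = ["output:1"]
    # Install a flow mod so similar traffic no longer reaches the controller.
    switch.install_flow({"vlan": packet["vlan"], "dst_mac": packet["dst_mac"]}, actions)
    return actions


if __name__ == "__main__":
    sw = Switch(example_controller)
    pkt = {"vlan": 100, "src_mac": "aa", "dst_mac": "bb"}
    print(sw.receive(pkt))     # handled by the controller, flow installed
    print(sw.receive(pkt))     # handled by the switch from its flow table
    print(len(sw.flow_table))
```

The second call never reaches the controller, which is the point of installing a flow mod.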

Details on GRAM's particular relationship with OpenFlow are provided in the VMOC section below.

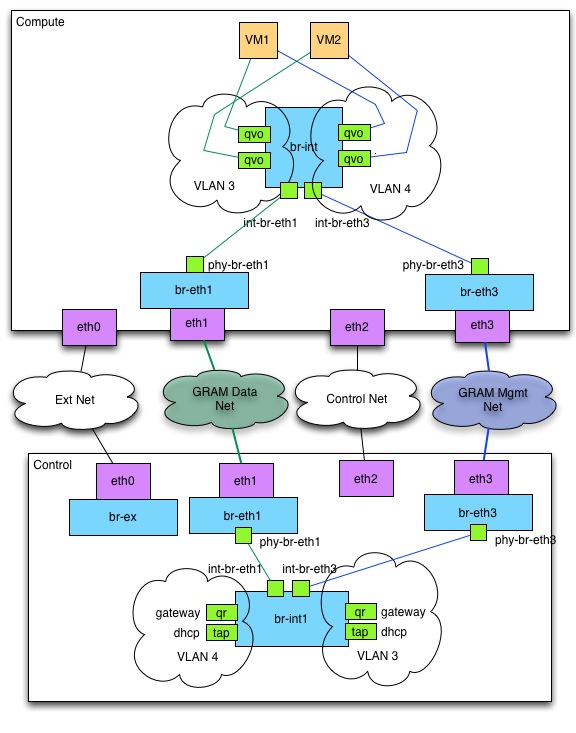

Quantum Bridge Interfaces

GRAM uses the Quantum OVS plugin to manage network traffic between the nodes. Quantum requires some OVS bridges to be created on both the control node and the compute nodes for managing this network traffic. These bridges will be created and configured during GRAM installation.

On the control node, four bridges are required:

- br-ex: external network; external interface (eth2) must be added as a port on this bridge

- br-eth1: data network, data interface (eth1) must be added as a port on this bridge

- br-eth3: management network; management interface (eth3) must be added as a port on this bridge

- br-int: internal bridge, will manage traffic to and from the compute nodes and the gateway for each network

The ovs-vsctl commands executed during GRAM installation are:

    ovs-vsctl add-br br-ex
    ovs-vsctl br-set-external-id br-ex bridge-id br-ex
    ovs-vsctl add-port br-ex eth2
    ovs-vsctl add-br br-int
    ovs-vsctl add-br br-eth1
    ovs-vsctl add-port br-eth1 eth1
    ovs-vsctl add-br br-eth3
    ovs-vsctl add-port br-eth3 eth3

On the compute nodes, three bridges are required:

- br-eth1: data network, data interface (eth1) must be added as a port on this bridge

- br-eth3: management network; management interface (eth3) must be added as a port on this bridge

- br-int: internal bridge, will manage traffic to and from the compute nodes and the gateway for each network

The ovs-vsctl commands executed during GRAM installation are:

    ovs-vsctl add-br br-int
    ovs-vsctl add-br br-eth1
    ovs-vsctl add-port br-eth1 eth1
    ovs-vsctl add-br br-eth3
    ovs-vsctl add-port br-eth3 eth3
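
The two command lists differ only in the br-ex setup, so they can be generated from the node type. The sketch below builds the same commands as argument lists and does not execute anything. The function name `bridge_commands` and its parameters are hypothetical; this is not how the GRAM installer is known to be written.

```python
def bridge_commands(node_type, data_if="eth1", external_if="eth2", mgmt_if="eth3"):
    """Build the ovs-vsctl commands listed above for a 'control' or 'compute' node.

    Returns a list of argument lists; nothing is run.
    """
    if node_type not in ("control", "compute"):
        raise ValueError("unknown node type: %r" % (node_type,))
    cmds = []
    if node_type == "control":
        # Only the control node has a bridge to the external network.
        cmds += [
            ["ovs-vsctl", "add-br", "br-ex"],
            ["ovs-vsctl", "br-set-external-id", "br-ex", "bridge-id", "br-ex"],
            ["ovs-vsctl", "add-port", "br-ex", external_if],
        ]
    cmds.append(["ovs-vsctl", "add-br", "br-int"])
    for iface in (data_if, mgmt_if):
        bridge = "br-" + iface
        cmds.append(["ovs-vsctl", "add-br", bridge])
        cmds.append(["ovs-vsctl", "add-port", bridge, iface])
    return cmds


if __name__ == "__main__":
    for cmd in bridge_commands("compute"):
        print(" ".join(cmd))
```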

Upon initial start-up, the quantum OpenVSwitch plugins will add a few ports to these bridges.

On both the control and compute nodes, four ports will be added and connections will be made between these ports:

- int-br-eth1 and int-br-eth3 will be added to br-int

- phy-br-eth1 will be added to br-eth1

- phy-br-eth3 will be added to br-eth3

- int-br-eth1 will be connected to phy-br-eth1 to allow data network traffic to flow to and from br-int

- int-br-eth3 will be connected to phy-br-eth3 to allow management network traffic to flow to and from br-int

The resulting OVS configuration on the control node after initial installation should look something like this:

    $ sudo ovs-vsctl show
    107352c3-a0bb-4598-a3a3-776c5da0b62b
        Bridge "br-eth1"
            Port "phy-br-eth1"
                Interface "phy-br-eth1"
            Port "eth1"
                Interface "eth1"
            Port "br-eth1"
                Interface "br-eth1"
                    type: internal
        Bridge "br-eth3"
            Port "phy-br-eth3"
                Interface "phy-br-eth3"
            Port "eth3"
                Interface "eth3"
            Port "br-eth3"
                Interface "br-eth3"
                    type: internal
        Bridge br-ex
            Port br-ex
                Interface br-ex
                    type: internal
            Port "eth2"
                Interface "eth2"
            Port "qg-9816149f-9c"
                Interface "qg-9816149f-9c"
                    type: internal
        Bridge br-int
            Port "int-br-eth1"
                Interface "int-br-eth1"
            Port "int-br-eth3"
                Interface "int-br-eth3"
            Port br-int
                Interface br-int
                    type: internal
        ovs_version: "1.4.0+build0"

The "qg-" interface is an interface created by quantum that represents the interface on the quantum router connected to the external network.

The resulting OVS configuration on the compute nodes after initial installation should look something like this:

    $ sudo ovs-vsctl show
    4ec3588c-5c8f-4d7f-8626-49909e0e4e02
        Bridge br-int
            Port br-int
                Interface br-int
                    type: internal
            Port "int-br-eth1"
                Interface "int-br-eth1"
        Bridge "br-eth1"
            Port "phy-br-eth1"
                Interface "phy-br-eth1"
            Port "br-eth1"
                Interface "br-eth1"
                    type: internal
            Port "eth1"
                Interface "eth1"
        ovs_version: "1.4.0+build0"

As networks are created for each sliver, ports will be added to the br-int bridges on both the control and compute nodes.

On the control node, the quantum plugin will create a "qr-" interface for the network's gateway interface on the quantum router and a port connected to this interface will be added to the br-int bridge. In addition, a "tap" interface for each network will be created for communication with the network's DHCP server on the control node.

On the compute nodes, the quantum plugin will create a "qvo" interface for each VM on the network and a port connected to this interface will be added to the br-int bridge.
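
When reading `ovs-vsctl show` output on a running rack, the port name prefix indicates what a port is for. The helper below summarizes the prefixes mentioned in this section. It is a reading aid only: `PORT_ROLES` and `describe_port` are hypothetical names, and the table covers only the prefixes described in this document.

```python
# Prefix -> role, taken from the descriptions in this section.
PORT_ROLES = [
    ("qg-", "Quantum router interface on the external network"),
    ("qr-", "Quantum router gateway interface for a sliver network"),
    ("tap", "DHCP server interface for a sliver network"),
    ("qvo", "VM interface on a compute node"),
    ("int-br-", "br-int side of the link to a physical bridge"),
    ("phy-br-", "physical-bridge side of the link to br-int"),
]


def describe_port(name):
    """Return a short description of an OVS port based on its name prefix."""
    for prefix, role in PORT_ROLES:
        if name.startswith(prefix):
            return role
    return "other (physical interface or bridge-internal port)"


if __name__ == "__main__":
    for port in ("qg-9816149f-9c", "int-br-eth1", "phy-br-eth3", "eth1"):
        print(port, "->", describe_port(port))
```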

Software Architecture

GRAM is a body of software services that sits on top of two supporting layers: Linux and OpenStack.

The following diagram shows the different layers of software on which GRAM resides:

We will describe these underlying layers and then GRAM itself.

Linux

Linux is an open-source family of Unix-like operating systems. GRAM has been developed to work on Ubuntu 12+ flavors of Linux, with other versions of Linux (Fedora, notably) planned for the near future. Some points of note about Linux and Ubuntu as a basis for GRAM:

- In Ubuntu, software is maintained/updated using the debian/apt-get/dpkg suite of tools.

- Networking services are provided through Linux in a number of ways. GRAM disables the NetworkManager suite in favor of the suite managed by /etc/network/interfaces and the networking service.

- Ubuntu manages services through configurations placed in the /etc/init directory. Where /etc/init.d contains links to upstart, the services are managed by the upstart package and their logs are written to /var/log/upstart/<service_name>.log.

- Python is an Open-source scripting/programming language that is the basis of the whole GRAM software stack.

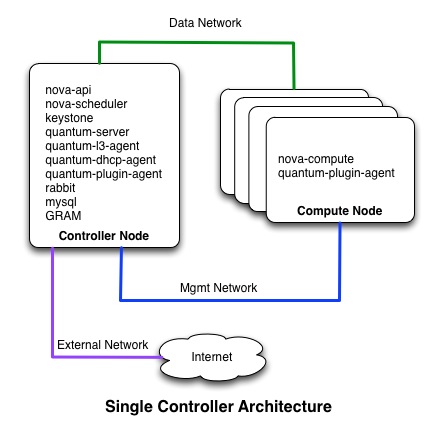

OpenStack

OpenStack is an Open-source suite of software services to support creation and management of virtual topologies composed of networks, storage and compute resources. OpenStack services include:

- Nova: A service for creating and controlling virtual machines. Nova is, itself, a layer on top of other virtualization technologies such as KVM, Qemu or Xen.

- Quantum: A service for creating and controlling virtual networks. Quantum provides a common API on top of Plugins that implement these services in particular ways, such as Linux Bridging, GRE tunnelling, IP subnets or VLANs. GRAM uses a plugin providing VLAN-based segregation using OpenVSwitch.

- Keystone: A service to manage identity credentials for users and services

- Glance: A service to manage registration of OS images for loading into virtual machines

An OpenStack installation consists of two flavors of nodes:

- Control Node. There should be one of these per rack. It provides the public facing OpenStack and GRAM interfaces.

- Compute Nodes. There should be one or more of these per rack. These provide raw compute, network and storage resources from which the control node can carve virtual resources on demand.

The following diagram illustrates the kinds of nodes and the OpenStack services typically resident on each.

POX

POX is a Python-based OpenFlow controller toolkit for developing and deploying OpenFlow-compliant controllers. VMOC uses this toolkit, as does the default controller. Experimenters are free to build controllers on POX or on one of several other Python toolkits. More information about POX can be found at http://www.noxrepo.org/pox/documentation/.

GRAM

GRAM (GENI Rack Aggregate Manager) consists of a set of coordinated services that run on the rack control node. Together these services provide an aggregate manager capability that communicates with the underlying OpenStack infrastructure to manage virtual and physical resources. In addition, it works with an OpenFlow controller to manage interactions with an OpenFlow switch and user/experimenter-provided controllers.

GRAM Services and Interfaces

The following services comprise GRAM:

- gram-am: The aggregate manager, speaking the GENI AM API and providing virtual resources and topologies on request.

- gram-amv2: A version of the aggregate manager that speaks the "V2" version of the AM API for purposes of communicating with tools that speak this dialect.

- gram-vmoc: VMOC (VLAN-based Multiplexed OpenFlow Controller) is a controller that manages OpenFlow traffic between multiple OpenFlow switches and multiple user/experimenter controllers.

- gram-ctrl: A default controller for experiments and topologies that have not specified a distinct OpenFlow controller. This controller performs simple L2 MAC<=>Port learning.

- gram-ch: A rack-internal clearinghouse. This is provided for testing and standalone rack configurations. Once a rack is federated with the GENI federation, it should speak to the GENI Clearinghouse and not this clearinghouse.

- gram-mon: A monitoring process that is expected to provide information to the GMOC.
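
The "simple L2 MAC<=>Port learning" behavior attributed to gram-ctrl above follows a well-known pattern, sketched below in plain Python. This is a generic illustration and not the gram-ctrl source or the POX API: the class `L2LearningController` and its `packet_in` method are hypothetical, and a real controller would also install flow mods and track one table per switch.

```python
class L2LearningController:
    """Toy L2 learning logic for a single switch."""

    def __init__(self):
        self.mac_to_port = {}  # source MAC address -> port it was last seen on

    def packet_in(self, in_port, src_mac, dst_mac):
        """Learn where src_mac lives, then decide where to send the packet."""
        self.mac_to_port[src_mac] = in_port
        out_port = self.mac_to_port.get(dst_mac)
        if out_port is None:
            # Destination not learned yet: send out all ports.
            return "flood"
        return out_port


if __name__ == "__main__":
    ctrl = L2LearningController()
    print(ctrl.packet_in(1, "aa:aa", "bb:bb"))  # destination unknown
    print(ctrl.packet_in(2, "bb:bb", "aa:aa"))  # aa:aa was learned on port 1
    print(ctrl.packet_in(1, "aa:aa", "bb:bb"))  # bb:bb was learned on port 2
```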

The diagram below describes the interactions and interfaces between these services:

- The gram-am and gram-amv2 speak the GENI AM API to external clients and tools.

- The VMOC speaks the controller side of the OpenFlow interface to switches, and the switch side of the OpenFlow interface to controllers. That is, it is a controller to switches and a switch to controllers.

- The VMOC provides a management interface through which GRAM informs it about adding, deleting or modifying slice/sliver topologies and controller directives.

- The gram-am and gram-amv2 speak the OpenStack APIs to invoke OpenStack services from the Keystone, Nova, Quantum and Glance suites.

- The gram-mon reads the snapshot files written by the gram-am and assembles monitoring information to be sent to the GMOC.

GRAM Storage

GRAM keeps the state of slices and their associated resources in two synchronized stores:

- OpenStack holds information about resources in a MySQL database

- GRAM holds information about slices/slivers in JSON snapshots (typically on the control node in /etc/gram/snapshots)
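
The snapshot idea can be illustrated with a short sketch that writes timestamped JSON files and reloads the most recent one. The actual GRAM snapshot schema and file naming are not described in this document, so everything here is an assumption made for illustration: the function names, the `snapshot_<timestamp>.json` naming and the `{"timestamp", "slices"}` layout are all hypothetical.

```python
import json
import os
import tempfile
import time


def write_snapshot(directory, slices, timestamp=None):
    """Write one JSON snapshot (hypothetical schema) and return its path."""
    ts = time.time() if timestamp is None else timestamp
    # Zero-padded timestamp so that lexical order equals chronological order.
    path = os.path.join(directory, "snapshot_%017.6f.json" % ts)
    with open(path, "w") as f:
        json.dump({"timestamp": ts, "slices": slices}, f, indent=2)
    return path


def load_latest_snapshot(directory):
    """Return the contents of the newest snapshot, or None if there is none."""
    names = sorted(n for n in os.listdir(directory) if n.endswith(".json"))
    if not names:
        return None
    with open(os.path.join(directory, names[-1])) as f:
        return json.load(f)


if __name__ == "__main__":
    # A temporary directory stands in for /etc/gram/snapshots.
    snapshot_dir = tempfile.mkdtemp()
    write_snapshot(snapshot_dir, {"slice-a": {"slivers": []}}, timestamp=1.0)
    write_snapshot(snapshot_dir, {"slice-a": {"slivers": ["vm1"]}}, timestamp=2.0)
    print(load_latest_snapshot(snapshot_dir)["slices"])
```

A reader such as a monitoring process only needs `load_latest_snapshot`, which keeps it decoupled from the writer.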

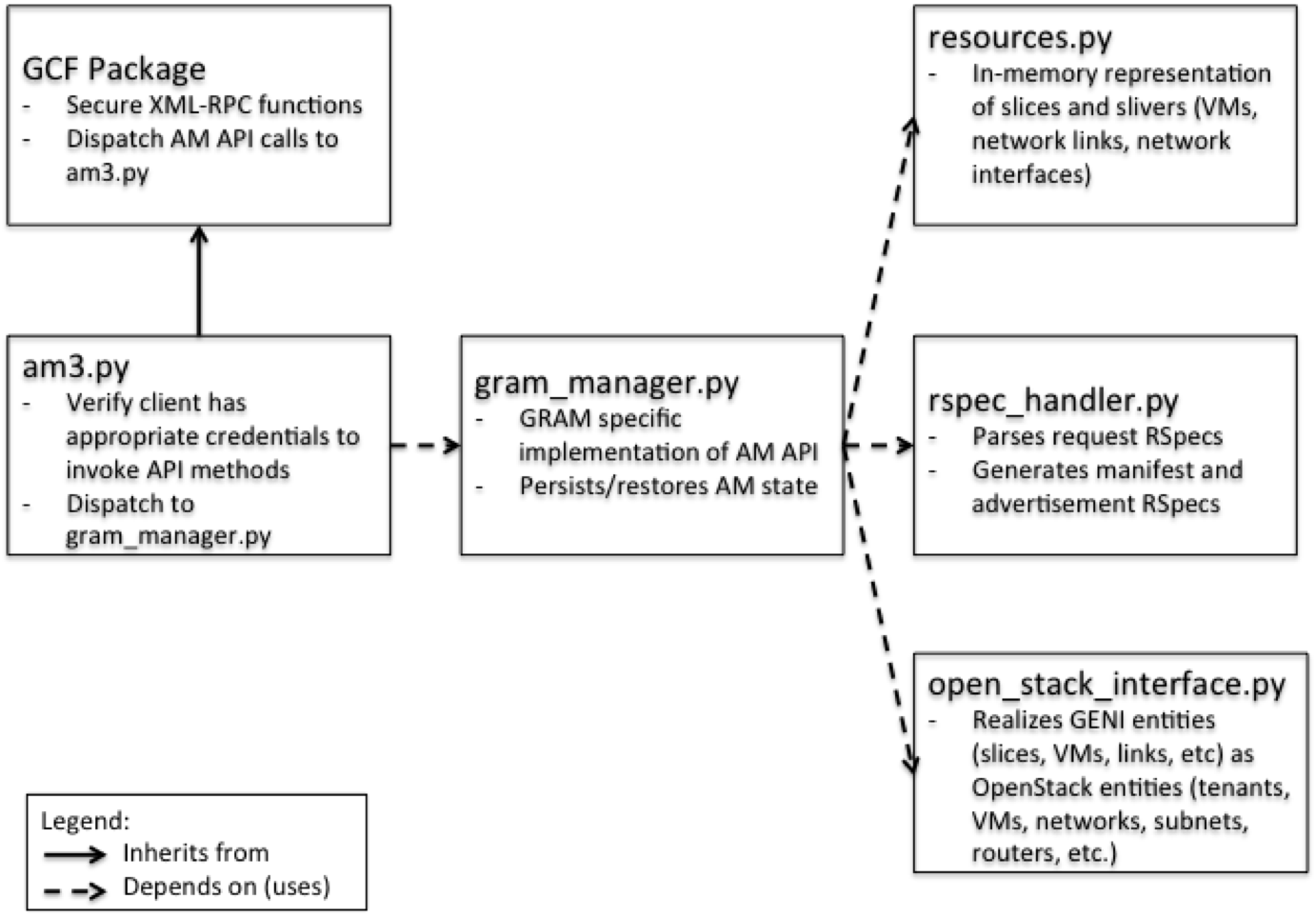

GRAM AM

The GRAM AM software is based on the reference aggregate manager software distributed with the GCF package (http://trac.gpolab.bbn.com/gcf). GRAM relies on GCF primarily for xml-rpc related functions. GCF provides GRAM with a secure xml-rpc listener that dispatches AM API calls to the GRAM module called am3.py. The following figure shows the major modules of the GRAM software:

VMOC

GRAM provides a particular controller called VMOC (VLAN-based Multiplexing OpenFlow Controller).

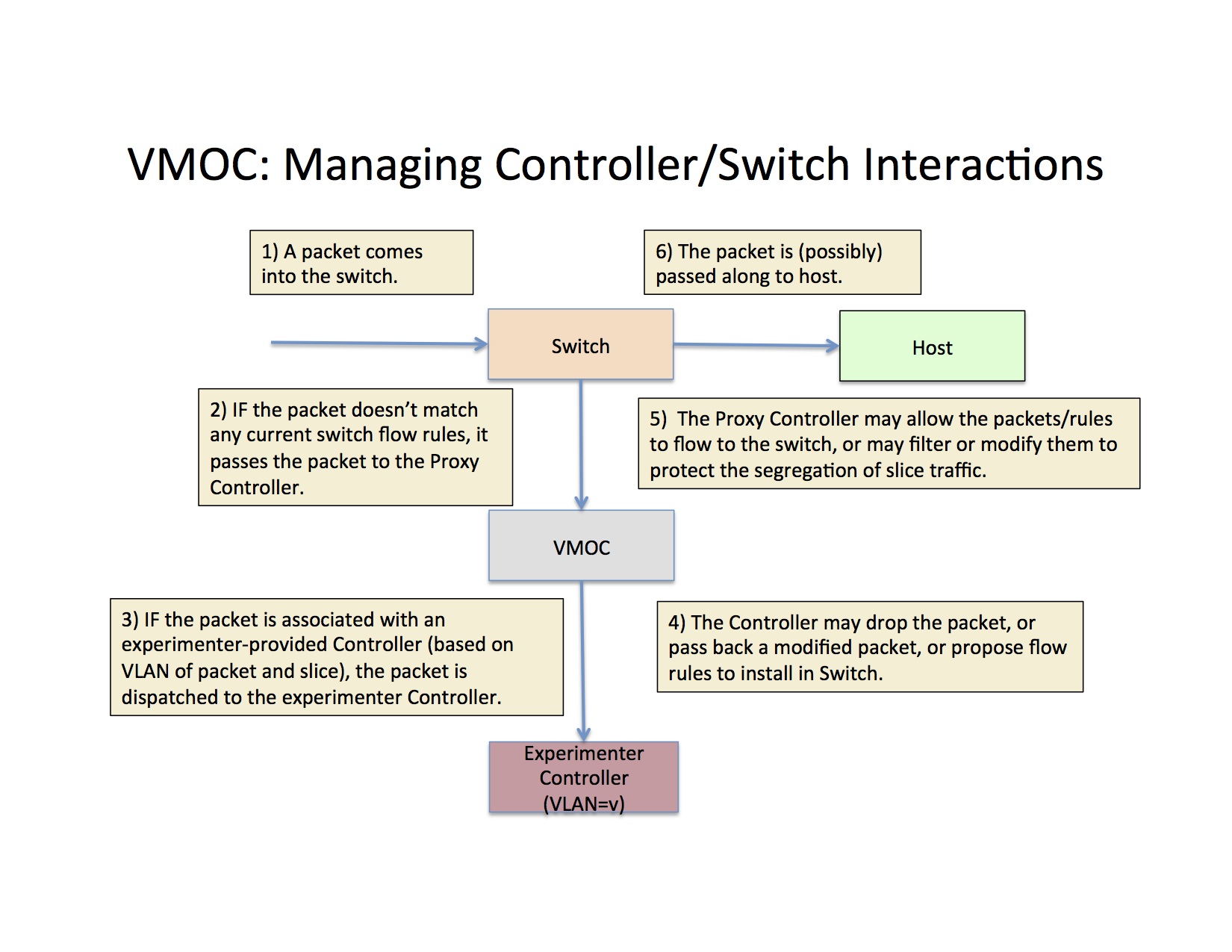

As the figure indicates, VMOC serves as a controller to switches, but as a switch to user/experimenter-provided controllers, multiplexing based on VLAN tag and switch DPID/port. GRAM provides a default L2-learning controller behavior for those slices that do not specify their own controller.

When packets flow to the OpenFlow-enabled switch, they are tested against the current set of flow mods. If the packet matches the "match" portion of a flow mod, the associated actions (e.g. modify, drop, route) are taken. If not, the packet is sent to VMOC. VMOC then dispatches the packet to the controllers registered with VMOC based on VLAN, which in turn corresponds to the slice with which the controller is associated. The controller responds to the packet with some action (e.g. modify, drop, install flow mod). VMOC then vets the response to make sure it maintains the VLAN-based boundaries of that controller and, if appropriate, forwards the response back to the switch.

VMOC can handle multiple OpenFlow switches simultaneously, as part of the OpenFlow vision is managing a network of switches with a single controller.

The diagram below shows the essential flows of VMOC. VMOC serves as a controller for one or more OpenFlow switches, and as a switch to one or more controllers. It ensures that traffic from switches goes to the proper controller and that responses from controllers go to the proper switch. It uses a combination of VLAN and switch DPID to determine the proper two-way flow of traffic.
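
The dispatch-and-vet cycle described in this section can be sketched as follows. This is a simplified model and not the VMOC implementation: packets and responses are plain dictionaries, controllers are Python callables, and the names `VlanMultiplexer`, `register`, `packet_in` and `vet` are hypothetical. Vetting is reduced to a single check that a response does not match on a VLAN other than the controller's own.

```python
class VlanMultiplexer:
    """Toy VLAN-based multiplexer between switches and slice controllers."""

    def __init__(self, default_controller):
        self.default_controller = default_controller
        self.controllers = {}  # VLAN tag -> controller callable(dpid, packet)

    def register(self, vlan, controller=None):
        """Register a slice VLAN; slices without a controller get the default."""
        self.controllers[vlan] = controller or self.default_controller

    @staticmethod
    def vet(vlan, response):
        """Accept a response only if it stays inside the controller's VLAN."""
        match = response.get("match", {})
        return match.get("vlan", vlan) == vlan

    def packet_in(self, dpid, packet):
        """Dispatch a packet from switch `dpid`; return (dpid, response) or None."""
        vlan = packet.get("vlan")
        controller = self.controllers.get(vlan)
        if controller is None:
            return None  # VLAN does not belong to any known slice
        response = controller(dpid, packet)
        if not self.vet(vlan, response):
            return None  # response crosses the VLAN boundary, so discard it
        return (dpid, response)


def well_behaved(dpid, packet):
    return {"type": "flow_mod", "match": {"vlan": packet["vlan"]}, "actions": ["output:2"]}


def rogue(dpid, packet):
    # Tries to install a rule for a VLAN it does not own.
    return {"type": "flow_mod", "match": {"vlan": 200}, "actions": ["output:2"]}


if __name__ == "__main__":
    mux = VlanMultiplexer(default_controller=well_behaved)
    mux.register(100, well_behaved)
    mux.register(300, rogue)
    mux.register(400)  # no controller specified, so the default is used
    print(mux.packet_in("switch-1", {"vlan": 100}))
    print(mux.packet_in("switch-1", {"vlan": 300}))
    print(mux.packet_in("switch-1", {"vlan": 400}))
```

Returning the DPID alongside the response reflects the point made above: the VLAN selects the controller, and the DPID selects the switch that receives the answer.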

Additional Services

Virtual Machine Configuration. When GRAM creates virtual machines for experimenters, it configures the virtual machines with user accounts, installs ssh keys for these users, installs and executes scripts specified in the request rspec, sets the hostname to that specified in the request rspec, adds /etc/hosts entries so other virtual machines on the aggregate that belong to the same slice can be reached by name, and sets the default gateway to be the GRAM management network. All of this configuration of the virtual machine is accomplished using OpenStack config drive services.
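
As a small illustration of the hosts-file step, the sketch below renders one line per virtual machine in a slice. The function name `hosts_entries`, the input shape and the example addresses are hypothetical; how GRAM actually generates these entries is not described here.

```python
def hosts_entries(slice_vms):
    """Render hosts-file lines for the VMs of one slice.

    slice_vms is a list of (hostname, ip_address) pairs.
    """
    return ["%s\t%s" % (ip, hostname) for hostname, ip in sorted(slice_vms)]


if __name__ == "__main__":
    vms = [("server", "10.0.1.2"), ("client", "10.0.1.3")]
    print("\n".join(hosts_entries(vms)))
```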

SSH access to Virtual Machines. GRAM uses IP port forwarding to allow experimenters to ssh from Internet-connected hosts to their virtual machines on GRAM aggregates. When a virtual machine is created, it is assigned a port number. GRAM sets up IP forwarding rules on the controller node so ssh connections to this port on the controller get forwarded to the virtual machine. These forwarding rules are removed when the virtual machine is deleted. Since changes to iptables require root privileges, GRAM invokes a binary executable with setuid root permissions to make these changes.
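
The port assignment and forwarding described above can be sketched as below. The exact rules GRAM installs are not given in this document, so this is a generic destination-NAT example under stated assumptions: the port range 3000-3999, the names `PortAllocator` and `forwarding_rule`, and the example addresses are all hypothetical. The sketch only builds the `iptables` argument list and does not run it.

```python
class PortAllocator:
    """Hand out one controller-side port per virtual machine (range is assumed)."""

    def __init__(self, first=3000, last=3999):
        self.first, self.last = first, last
        self.in_use = {}  # port -> VM address

    def allocate(self, vm_ip):
        for port in range(self.first, self.last + 1):
            if port not in self.in_use:
                self.in_use[port] = vm_ip
                return port
        raise RuntimeError("no free SSH forwarding ports")

    def release(self, port):
        return self.in_use.pop(port, None)


def forwarding_rule(action, controller_ip, public_port, vm_ip, vm_port=22):
    """Build an iptables DNAT rule that forwards controller:public_port to the VM.

    action is 'add' when the VM is created and 'delete' when it is removed.
    """
    if action not in ("add", "delete"):
        raise ValueError("action must be 'add' or 'delete'")
    flag = "-A" if action == "add" else "-D"
    return [
        "iptables", "-t", "nat", flag, "PREROUTING",
        "-d", controller_ip, "-p", "tcp", "--dport", str(public_port),
        "-j", "DNAT", "--to-destination", "%s:%d" % (vm_ip, vm_port),
    ]


if __name__ == "__main__":
    ports = PortAllocator()
    port = ports.allocate("10.10.8.101")
    print(" ".join(forwarding_rule("add", "192.0.2.10", port, "10.10.8.101")))
    print(" ".join(forwarding_rule("delete", "192.0.2.10", port, "10.10.8.101")))
    ports.release(port)
```

With such a rule in place, an experimenter would connect with something like `ssh -p <port> <user>@<controller address>`.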
