
VirginiaTech InstaGENI Confirmation Tests

For details about the tests in this page, see the InstaGENI Confirmation Tests page.

For site status see the InstaGENI New Site Confirmation Tests Status page.

Note: The Omni nick_names for the site aggregates used in these tests are:

vt-ig=urn:publicid:IDN+instageni.arc.vt.edu+authority+cm,https://instageni.arc.vt.edu:12369/protogeni/xmlrpc/am
vt-ig-of-=urn:publicid:IDN+openflow:foam:foam.instageni.arc.vt.edu+authority+am,https://foam.instageni.arc.vt.edu:3626/foam/gapi/2
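Each nickname entry has the form nickname=URN,URL. As a minimal sketch (the helper name `parse_nickname` is hypothetical and not part of Omni), the compute aggregate entry above can be split into its parts like this:

```python
def parse_nickname(entry):
    """Split an entry of the form 'nick=urn,url' into (nick, urn, url)."""
    # The nickname is everything before the first '='.
    nick, _, rest = entry.partition("=")
    # The URN and the URL are separated by the first ','.
    urn, _, url = rest.partition(",")
    return nick.strip(), urn.strip(), url.strip()


entry = (
    "vt-ig=urn:publicid:IDN+instageni.arc.vt.edu+authority+cm,"
    "https://instageni.arc.vt.edu:12369/protogeni/xmlrpc/am"
)
nick, urn, url = parse_nickname(entry)
print(nick)
print(urn)
print(url)
```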

IG-CT-1 - Access to New Site VM resources

Got Aggregate version, which showed AM API V1, V2, and V3 are supported and V2 is default:

$ omni.py getversion -a vt-ig

The InstaGENI version reported in 'code_tag' ('99a2b1f03656cb665918eebd2b95434a6d3e50f9') is the same as at the other two available InstaGENI sites (GPO and Utah):

IG GPO: { 'code_tag': '99a2b1f03656cb665918eebd2b95434a6d3e50f9'

IG VirginiaTech: { 'code_tag': '99a2b1f03656cb665918eebd2b95434a6d3e50f9',

IG Utah: { 'code_tag': '99a2b1f03656cb665918eebd2b95434a6d3e50f9'
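The comparison can also be done programmatically. The following is a sketch only: it assumes the 'code_tag' values have already been copied out of each site's getversion output into a dictionary, and the helper name `all_same_code_tag` is hypothetical.

```python
# code_tag values as reported by each site in the getversion output above.
code_tags = {
    "IG GPO": "99a2b1f03656cb665918eebd2b95434a6d3e50f9",
    "IG VirginiaTech": "99a2b1f03656cb665918eebd2b95434a6d3e50f9",
    "IG Utah": "99a2b1f03656cb665918eebd2b95434a6d3e50f9",
}


def all_same_code_tag(tags):
    """Return True when every site reports the same code_tag."""
    return len(set(tags.values())) == 1


print(all_same_code_tag(code_tags))
```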

Get list of "available" compute resources:

$ omni.py -a vt-ig listresources --available -o

Verified that Advertisement RSpec only includes available resources, as requested:

$ egrep "node comp|available now" rspec-xxx
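The same check can be sketched in Python instead of egrep. This assumes the advertisement uses the GENI RSpec v3 namespace and marks availability with an `<available now="..."/>` child of each node. The sample document and the function name `unavailable_nodes` are made up for illustration and are not output from this site.

```python
import xml.etree.ElementTree as ET

# Assumption: the advertisement uses the GENI RSpec v3 namespace.
RSPEC_NS = "http://www.geni.net/resources/rspec/3"


def unavailable_nodes(rspec_xml):
    """Return component_ids of nodes not marked available now="true"."""
    root = ET.fromstring(rspec_xml)
    flagged = []
    for node in root.findall("{%s}node" % RSPEC_NS):
        avail = node.find("{%s}available" % RSPEC_NS)
        if avail is None or avail.get("now") != "true":
            flagged.append(node.get("component_id"))
    return flagged


# Illustrative sample only, not a real advertisement.
SAMPLE = """<rspec xmlns="http://www.geni.net/resources/rspec/3" type="advertisement">
  <node component_id="urn:publicid:IDN+example.net+node+pc1"><available now="true"/></node>
  <node component_id="urn:publicid:IDN+example.net+node+pc2"><available now="false"/></node>
</rspec>"""

print(unavailable_nodes(SAMPLE))
```

An empty list would indicate that every advertised node is marked as available, which is what a `--available` listing is expected to contain.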

Created a slice:

$ omni.py createslice IG-CT-1

Created a sliver with 4 VMs using the RSpec IG-CT-1-gatech.rspec:

$ omni.py createsliver -a vt-ig IG-CT-1 IG-CT-1-gatech.rspec

The following is login information for the sliver:

$ readyToLogin.py -a vt-ig IG-CT-1 <...>

Measurements

Log into specified host and collect iperf and ping statistics. All measurements are collected over 60 seconds, using default images and default link bandwidth:

Iperf InstaGENI VirginiaTech VM-2 to VM-1 (TCP) - TCP window size: 16.0 KB

Collected: 2015-05-XX

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech VM-2 to the VM-1 (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech VM-2 to the VM-1
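The page does not record the exact command lines used for these measurements. As a hedged sketch, the one, five, and ten client runs could be driven with iperf's `-P` (parallel clients) and `-t` (duration) options, and the UDP run with `-u`. The helper names and the use of `ping -c 60` to approximate a 60 second run are assumptions, and `VM-1` stands in for the server address.

```python
def iperf_client_cmd(server, clients=1, seconds=60, udp=False):
    """Build an iperf client command line as a list of arguments."""
    cmd = ["iperf", "-c", server, "-t", str(seconds)]
    if udp:
        cmd.append("-u")  # UDP test instead of the default TCP
    if clients > 1:
        cmd += ["-P", str(clients)]  # number of parallel client streams
    return cmd


def ping_cmd(host, count=60):
    """Build a ping command; one packet per second is assumed."""
    return ["ping", "-c", str(count), host]


# TCP runs with one, five, and ten clients, then UDP and ping.
for n in (1, 5, 10):
    print(" ".join(iperf_client_cmd("VM-1", clients=n)))
print(" ".join(iperf_client_cmd("VM-1", udp=True)))
print(" ".join(ping_cmd("VM-1")))
```

The matching server side would be `iperf -s` for TCP and `iperf -s -u` for UDP on the receiving host.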

IG-CT-2 - Access to New Site bare metal and VM resources

Created a slice:

$ omni.py createslice IG-CT-2

Created a sliver with one VM and one raw PC using RSpec IG-CT-2-gatech.rspec:

$ omni.py createsliver -a vt-ig IG-CT-2 IG-CT-2-gatech.rspec

Determined login information:

$ readyToLogin.py -a vt-ig IG-CT-2 <...>

Measurements

Log into specified host and collect iperf and ping statistics. All measurements are collected over 60 seconds, using default images and default link bandwidth:

Iperf InstaGENI VirginiaTech PC to VM (TCP) - TCP window size: 16.0 KB

Collected: 2015-05-XX

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech PC to the VM (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech PC to VM

Iperf InstaGENI VirginiaTech PC to PC (TCP) - TCP window size: 16.0 KB

Although not part of this test, an experiment with 2 raw PCs was also run to capture performance between dedicated devices.

Collected: 2013-02-20

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech PC to the PC (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech PC to PC

IG-CT-3 - Multiple sites experiment

Created a slice:

$ omni.py createslice IG-CT-3

Created a sliver with one VM at VirginiaTech and one VM at GPO using RSpec IG-CT-3-gatech.rspec. First created the InstaGENI VirginiaTech sliver:

$ omni.py createsliver IG-CT-3 -a vt-ig IG-CT-3-gatech.rspec

Then created the InstaGENI GPO sliver:

$ omni.py createsliver IG-CT-3 -a ig-gpo IG-CT-3-gatech.rspec

Determined login information at each of the VirginiaTech and GPO aggregates:

$ readyToLogin.py IG-CT-3 -a vt-ig ....
$ readyToLogin.py IG-CT-3 -a ig-gpo ....

Measurements

Iperf InstaGENI GPO VM-2 to VirginiaTech VM-1 (TCP) - TCP window size: 16.0 KB

Collected: 2015-05-XX

One Client

Five Clients

Ten Clients

Iperf InstaGENI GPO VM-2 to VirginiaTech VM-1 (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI GPO VM-2 to VirginiaTech VM-1

Iperf InstaGENI VirginiaTech VM-1 to GPO VM-2 (TCP) - TCP window size: 16.0 KB

Collected: 2015-05-XX

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech VM-1 to GPO VM-2 (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech VM-1 to GPO VM-2

IG-CT-4 - Multiple sites OpenFlow experiment and interoperability

First create a slice:

$ omni.py createslice IG-CT-4

Then create slivers at all OpenFlow aggregates. This confirmation test creates a sliver at each of the following aggregates using the RSpec specified. RSpecs can be found here.

$ omni.py createsliver -a of-nlr IG-CT-4 IG-CT-4-openflow-nlr.rspec
$ omni.py createsliver -a of-rutgers IG-CT-4 IG-CT-4-openflow-rutgers.rspec
$ omni.py createsliver -a of-i2 IG-CT-4 IG-CT-4-openflow-i2.rspec
$ omni.py createsliver -a of-gpo IG-CT-4 IG-CT-4-openflow-gpo.rspec
$ omni.py createsliver -a eg-of-gpo IG-CT-4 IG-CT-4-openflow-eg-gpo.rspec
$ omni.py createsliver -a of-uen IG-CT-4 ./IG-CT-4-openflow-uen.rspec
$ omni.py createsliver -a ig-of-gatech IG-CT-4 IG-CT-4-openflow-vt-ig.rspec
$ omni.py createsliver -a ig-of-gpo IG-CT-4 IG-CT-4-openflow-ig-gpo.rspec
$ omni.py createsliver -a ig-of-utah IG-CT-4 ./IG-CT-4-openflow-ig-utah.rspec

Then create a sliver at each of the compute resource aggregates. The RSpecs used can be found here.

$ omni.py createsliver -a pg-utah IG-CT-4 IG-CT-4-rutgers-wapg-pg-utah.rspec
$ omni.py createsliver -a ig-utah IG-CT-4 IG-CT-4-ig-utah.rspec
$ omni.py createsliver -a ig-gpo IG-CT-4 IG-CT-4-ig-gpo.rspec
$ omni.py createsliver -a eg-gpo IG-CT-4 IG-CT-4-eg-gpo.rspec
$ omni.py createsliver -a vt-ig IG-CT-4 IG-CT-4-vt-ig.rspec
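Because every sliver request follows the same pattern, the command lines for the compute aggregates can be generated from a list of (aggregate nickname, RSpec file) pairs. This is only a sketch: the pairs are copied from the commands above, and the helper name `createsliver_cmds` is hypothetical. It prints the commands and does not run Omni.

```python
# (aggregate nickname, RSpec file) pairs taken from the commands above.
COMPUTE_RSPECS = [
    ("pg-utah", "IG-CT-4-rutgers-wapg-pg-utah.rspec"),
    ("ig-utah", "IG-CT-4-ig-utah.rspec"),
    ("ig-gpo", "IG-CT-4-ig-gpo.rspec"),
    ("eg-gpo", "IG-CT-4-eg-gpo.rspec"),
    ("vt-ig", "IG-CT-4-vt-ig.rspec"),
]


def createsliver_cmds(slice_name, pairs):
    """Return one 'omni.py createsliver' command line per aggregate."""
    return [
        "omni.py createsliver -a %s %s %s" % (aggregate, slice_name, rspec)
        for aggregate, rspec in pairs
    ]


for line in createsliver_cmds("IG-CT-4", COMPUTE_RSPECS):
    print(line)
```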

Determine login information for each of the compute resources:

$ readyToLogin.py -a vt-ig IG-CT-4 <...>
$ readyToLogin.py -a ig-gpo IG-CT-4 <...>
$ readyToLogin.py -a pg-utah IG-CT-4 <...>
$ readyToLogin.py -a ig-utah IG-CT-4 <...>
$ readyToLogin.py -a eg-gpo IG-CT-4 <...>

Measurements

This section captures measurements collected between the following endpoints:

1. InstaGENI VirginiaTech VM and InstaGENI VirginiaTech VM
2. InstaGENI VirginiaTech VM and InstaGENI GPO VM
3. InstaGENI VirginiaTech VM and the PG Utah VM
4. InstaGENI VirginiaTech VM and ExoGENI GPO VM
5. InstaGENI VirginiaTech VM and the WAPG node at Rutgers

- The measurements collected for InstaGENI VirginiaTech VM to the InstaGENI VirginiaTech VM:

Iperf InstaGENI VirginiaTech VM to the InstaGENI VirginiaTech VM (TCP) - TCP window size: 16.0 KB

Collected: 2015-05-XX

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech VM to the InstaGENI VirginiaTech VM (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech VM to the InstaGENI VirginiaTech VM

- The measurements collected for InstaGENI VirginiaTech VM to the InstaGENI GPO VM:

Iperf InstaGENI VirginiaTech VM to the InstaGENI GPO VM (TCP) - TCP window size: 16.0 KB

Collected: 2015-05-XX

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech VM to the InstaGENI GPO VM (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech VM to the InstaGENI GPO VM

- The measurements collected for InstaGENI VirginiaTech VM to the PG Utah VM

Collected: 2015-05-XX

Iperf InstaGENI VirginiaTech VM to the PG Utah VM (TCP) - TCP window size: 16.0 KB

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech VM to the PG Utah VM (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech VM to the PG Utah VM

- The measurements collected for InstaGENI VirginiaTech VM to the ExoGENI GPO VM:

Iperf InstaGENI VirginiaTech VM to the ExoGENI GPO VM (TCP) - TCP window size: 16.0 KB

Collected: 2015-05-XX

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech VM to the ExoGENI GPO VM (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech VM to the ExoGENI GPO VM

- The measurements collected for InstaGENI VirginiaTech VM to the WAPG Rutgers:

Collected: 2015-05-XX

Iperf InstaGENI VirginiaTech VM to the WAPG Rutgers (TCP) - TCP window size: 16.0 KB

One Client

Five Clients

Ten Clients

Iperf InstaGENI VirginiaTech VM to the WAPG Rutgers (UDP) - 1470 byte datagrams & UDP buffer size: 136 KByte

Ping from InstaGENI VirginiaTech VM to the WAPG Rutgers

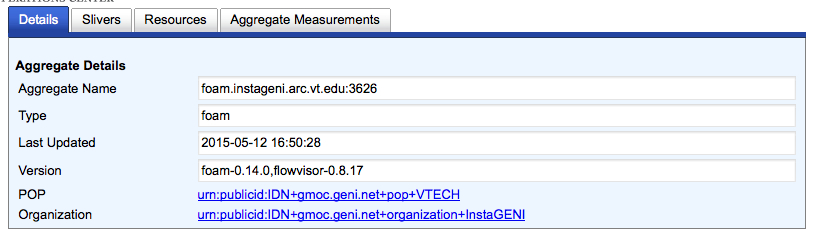

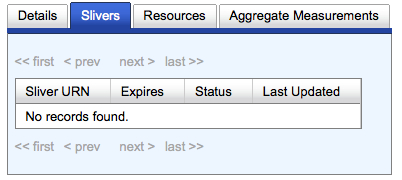

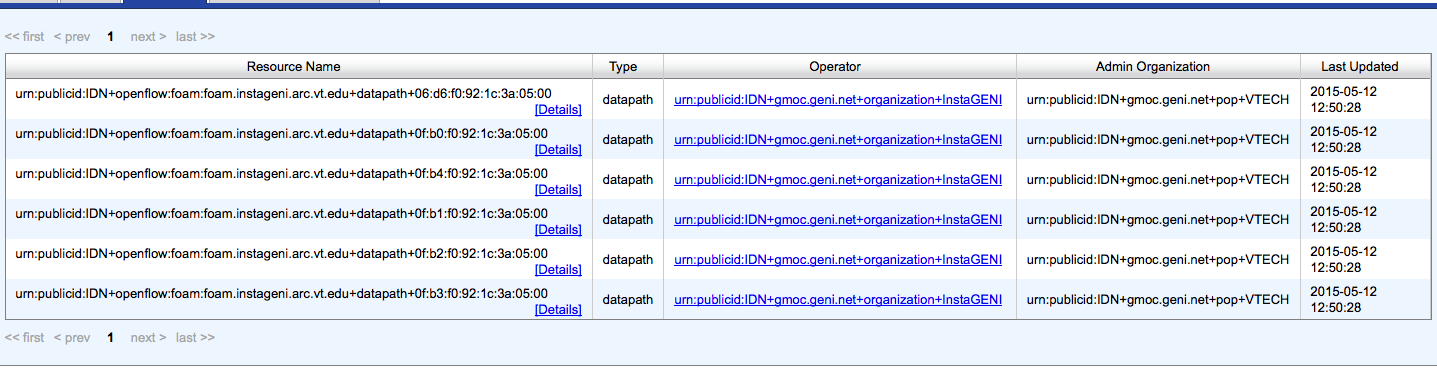

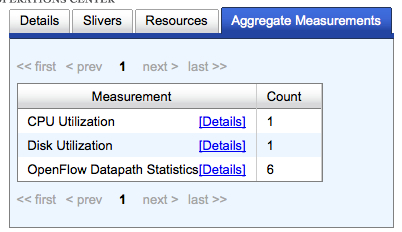

IG-CT-5 - Experiment Monitoring

Reviewed content of the GMOC Monitoring page for aggregates, and found/not found both Compute Resources and FOAM aggregates.

Verified that the VirginiaTech compute resources aggregate shows up in the list of aggregates and provides Aggregate Name, Type, Last Update, Version, POP, and Organization:

Active slivers:

List of resources:

Aggregate measurements:


The VirginiaTech InstaGENI FOAM resources aggregate details:

Active OpenFlow Slivers:

List of OpenFlow Resources in use:

Monitoring should show Aggregate measurement for CPU utilization, Disk Utilization, Network Statistics and OF Datapath and Sliver Statistics. At the time of this test these measurements were not available.

IG-CT-6 - Administrative Tests

Sent a request for an administrative account to the site contact from the VirginiaTech InstaGENI aggregate page, following the instructions on the Admin Accounts on InstaGeni Racks page. A local admin account was created, and the account also had to join the emulab-ops group at https://www.instageni.arc.vt.edu/joinproject.php3?target_pid=emulab-ops. Once the account was created and membership in emulab-ops was approved, proceeded to execute the administrative tests.

LNM:~$ ssh lnevers@control.instageni.arc.vt.edu

Also accessed the node via the PG Boss alias:

LNM:~$ ssh boss.instageni.arc.vt.edu

Further verified access by ssh from ops.instageni.arc.vt.edu to boss.instageni.arc.vt.edu, which is usually restricted for non-admin users:

LNM:~$ ssh ops.instageni.arc.vt.edu

From boss node accessed each of the experiment nodes that support VMs:

[lnevers@boss ~]$ for i in pc1 pc2; do ssh $i "echo -n '===> Host: ';hostname;sudo whoami;uname -a;echo"; done

To access dedicated nodes, an experiment must be running on the raw-pc device. At the time of this capture two raw-pc nodes were in use (pcX and pcY):

[lnevers@boss ~]$ sudo ssh pcX
[root@pcX ~]# sudo whoami
root
[root@pcX ~]# exit
logout
Connection to pcX.instageni.arc.vt.edu
[lnevers@boss ~]$ sudo ssh pcY
[root@pc ~]# sudo whoami
root
[root@pc ~]#

Access the infrastructure switches using the documented password. First connect to the switch named procurve1, the control network switch:

[lnevers@boss ~]$ sudo more /usr/testbed/etc/switch.pswd
XXXXXXXXX
[lnevers@boss ~]$ telnet procurve1

Connect to the switch named procurve2, the dataplane network switch, via ssh using the documented password:

[lnevers@boss ~]$ sudo more /usr/testbed/etc/switch.pswd
xxxxxxx
[lnevers@boss ~]$ ssh manager@procurve2

Access the FOAM VM and gather version information:

LNM:~$ ssh lnevers@foam.instageni.arc.vt.edu
sudo foamctl admin:get-version --passwd-file=/etc/foam.passwd

Check FOAM configuration for site.admin.email, geni.site-tag, email.from settings:

foamctl config:get-value --key="site.admin.email" --passwd-file=/etc/foam.passwd
foamctl config:get-value --key="geni.site-tag" --passwd-file=/etc/foam.passwd
foamctl config:get-value --key="email.from" --passwd-file=/etc/foam.passwd
# check if FOAM auto-approve is on. Value 2 = auto-approve is on.
foamctl config:get-value --key="geni.approval.approve-on-creation" --passwd-file=/etc/foam.passwd
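The four configuration lookups differ only in the key, so they can be generated from a list. The sketch below builds the command lines and interprets the auto-approve value using the rule stated above (2 means auto-approve is on). The helper names are hypothetical, and the sketch does not call foamctl itself.

```python
# Configuration keys checked in this test, taken from the commands above.
FOAM_KEYS = [
    "site.admin.email",
    "geni.site-tag",
    "email.from",
    "geni.approval.approve-on-creation",
]


def foamctl_get_value(key, passwd_file="/etc/foam.passwd"):
    """Build the foamctl command line that reads one configuration key."""
    return [
        "foamctl",
        "config:get-value",
        "--key=%s" % key,
        "--passwd-file=%s" % passwd_file,
    ]


def auto_approve_enabled(value):
    """Per the note above, a value of 2 means auto-approve is on."""
    return str(value).strip() == "2"


for key in FOAM_KEYS:
    print(" ".join(foamctl_get_value(key)))
print(auto_approve_enabled("2"))
```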

Show FOAM slivers and details for one sliver:

foamctl geni:list-slivers --passwd-file=/etc/foam.passwd

Access the FlowVisor VM and gather version information:

ssh lnevers@flowvisor.instageni.arc.vt.edu

Check the FlowVisor version, list of devices, get details for a device, list of active slices, and details for one of the slices:

fvctl --passwd-file=/etc/flowvisor.passwd ping hello
# Devices
fvctl --passwd-file=/etc/flowvisor.passwd listDevices
fvctl --passwd-file=/etc/flowvisor.passwd getDeviceInfo 06:d6:6c:3b:e5:68:00:00
# Slices
fvctl --passwd-file=/etc/flowvisor.passwd listSlices
fvctl --passwd-file=/etc/flowvisor.passwd getSliceInfo 5c956f94-5e05-40b5-948f-34d0149d9182

Check the FlowVisor settings:

fvctl --passwd-file=/etc/flowvisor.passwd dumpConfig /tmp/flowvisor-config
more /tmp/flowvisor-config

Verify alerts for the compute resource Aggregate Manager are being reported to the GPO Tango GENI Nagios monitoring and that all alerts have status OK.

Verify alerts for the FOAM Aggregate Manager are being reported to the GPO Tango GENI Nagios monitoring and that all alerts have status OK.

