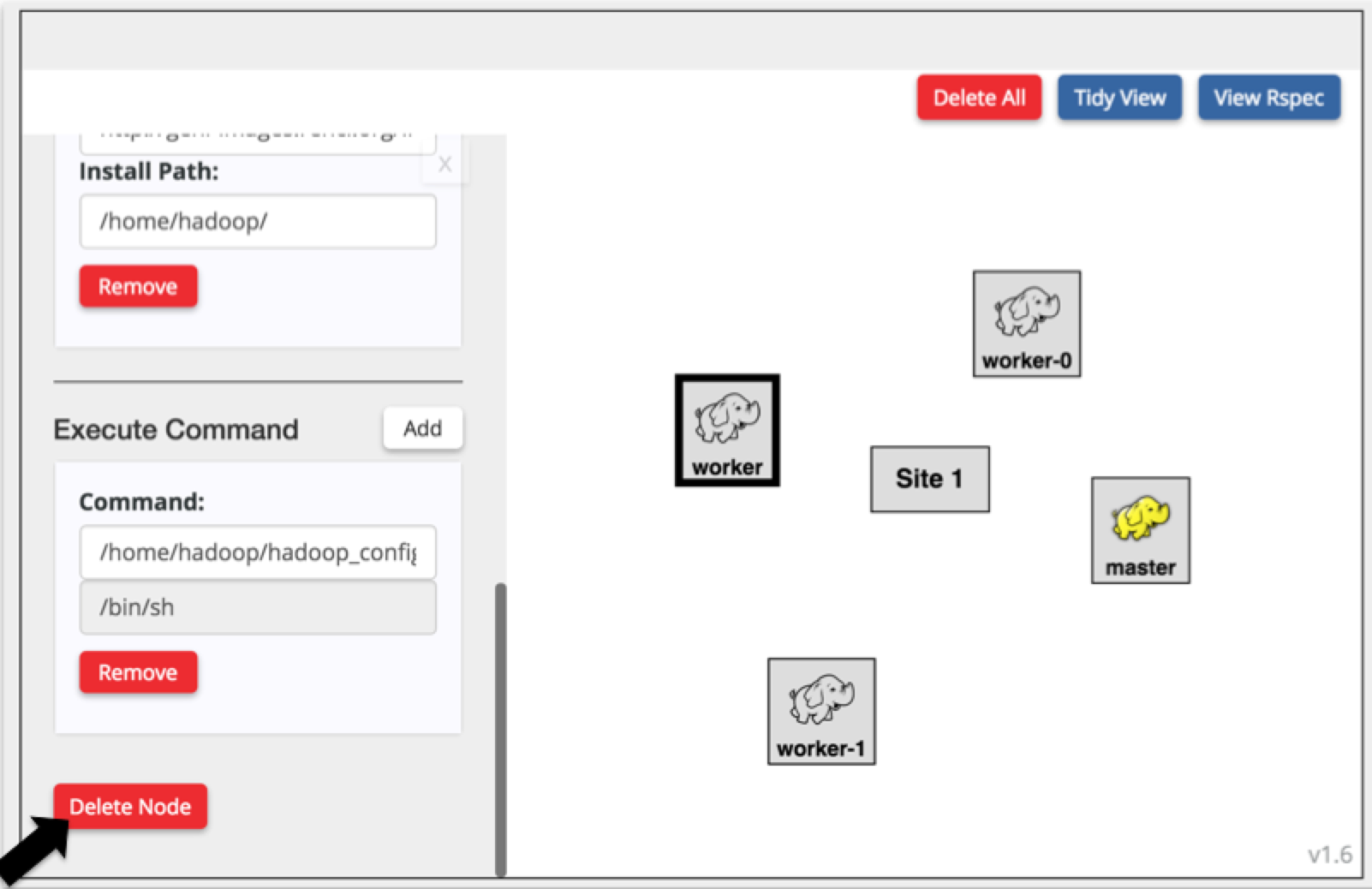

- Select the worker node.

- Press the "Duplicate Nodes only" button.

- Repeat the above two steps until you have at least three workers but no more than six (please be respectful to others and limit your cluster size, especially if you are doing this in the context of a tutorial).

- Delete the original worker node (select it, then click the Delete button in the attributes panel on the left).

DO NOT press the delete/backspace key since this is equivalent to pressing the Back button on the browser.

We delete the original worker only to keep the naming of our workers consistent, since the install scripts are sensitive to the node names.

- Ensure that all your workers are named in the pattern `worker-i` with i = 0, 1, 2, ... (e.g. `worker-0`, `worker-1`, `worker-2`)

WARNING: This step is important. If the workers are not named according to the above convention, your cluster WILL NOT be configured correctly.

- On the master node, make sure that the number passed to the Execute script matches the number of workers, e.g. if you have 3 workers the entry should be `/home/hadoop/hadoop_config_dynamic.sh 3`

- Connect all your nodes in one LAN

- Drag a line between two of your nodes

- From each unconnected node draw a line from that node to the middle of the link where there is a small square

- Assign IPs to your nodes:

Click on the small square in the middle of your LAN and set the IPs and netmask for each interface according to this list:

- the netmask for all interfaces should be 255.255.255.0

- master IP: 172.16.1.1

- worker-i IP: 172.16.1.<10+i>, e.g. worker-0: 172.16.1.10, worker-1: 172.16.1.11, worker-5: 172.16.1.15, etc

WARNING: Make sure the IPs are assigned according to the above pattern; otherwise your cluster will not be configured correctly.
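The naming and addressing rules above can be written out as a short Python sketch, which may help when double-checking the values you type into the interface dialog. The function names `address_plan` and `execute_command` are hypothetical helpers introduced here for illustration; only the node names, IPs, netmask, and script path come from the steps above.

```python
import ipaddress

NETMASK = "255.255.255.0"   # netmask for every interface
MASTER_IP = "172.16.1.1"    # fixed address of the master node


def address_plan(num_workers):
    """Return {node name: IP} for the master and worker-0 .. worker-(n-1)."""
    if not 3 <= num_workers <= 6:
        raise ValueError("the tutorial asks for at least 3 and at most 6 workers")
    plan = {"master": MASTER_IP}
    for i in range(num_workers):
        # worker-i gets 172.16.1.<10+i>
        plan[f"worker-{i}"] = f"172.16.1.{10 + i}"
    return plan


def execute_command(num_workers):
    """Return the Execute entry for the master node."""
    return f"/home/hadoop/hadoop_config_dynamic.sh {num_workers}"


if __name__ == "__main__":
    workers = 3
    network = ipaddress.ip_network(f"172.16.1.0/{NETMASK}")
    for name, ip in address_plan(workers).items():
        # Every address must fall inside the single LAN.
        assert ipaddress.ip_address(ip) in network
        print(f"{name}: {ip} netmask {NETMASK}")
    print(execute_command(workers))
```

Changing `workers` to the size of your own cluster prints the table to copy into the LAN settings and the matching Execute entry for the master.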

- Inspect your topology to ensure that all the configurations are correct, then download your rspec.

NOTE: The rspec will be saved in your default download folder (usually ~/Downloads) under a name similar to slicename_request_rspec.xml
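As an optional sanity check, the downloaded file can be inspected with a small script before it is used. The sketch below is a rough illustration, not part of the tutorial's tooling. It assumes the rspec stores each node as a `node` element with a `client_id` attribute and each address as a nested `ip` element with `address` and `netmask` attributes; verify this against your own file, since the exact layout is an assumption here. The `SAMPLE` document and the `check_rspec` helper are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical, heavily trimmed rspec used only to exercise the checker.
SAMPLE = """<rspec xmlns="http://www.geni.net/resources/rspec/3">
  <node client_id="master">
    <interface client_id="master:if0">
      <ip address="172.16.1.1" netmask="255.255.255.0"/>
    </interface>
  </node>
  <node client_id="worker-0">
    <interface client_id="worker-0:if0">
      <ip address="172.16.1.10" netmask="255.255.255.0"/>
    </interface>
  </node>
  <node client_id="worker-1">
    <interface client_id="worker-1:if0">
      <ip address="172.16.1.11" netmask="255.255.255.0"/>
    </interface>
  </node>
</rspec>"""


def local(tag):
    """Strip the XML namespace from a tag name."""
    return tag.rsplit("}", 1)[-1]


def check_rspec(xml_text):
    """Return a list of problems found; an empty list means the checks passed."""
    problems, indices = [], []
    for node in ET.fromstring(xml_text).iter():
        if local(node.tag) != "node":
            continue
        name = node.get("client_id", "")
        ips = [(e.get("address"), e.get("netmask"))
               for e in node.iter() if local(e.tag) == "ip"]
        if name == "master":
            expected = "172.16.1.1"
        else:
            match = re.fullmatch(r"worker-(\d+)", name)
            if not match:
                problems.append(f"unexpected node name: {name!r}")
                continue
            indices.append(int(match.group(1)))
            expected = f"172.16.1.{10 + indices[-1]}"
        if (expected, "255.255.255.0") not in ips:
            problems.append(f"{name}: expected {expected}/255.255.255.0, found {ips}")
    # Workers must be numbered consecutively starting from 0.
    if sorted(indices) != list(range(len(indices))):
        problems.append(f"worker indices are not consecutive from 0: {sorted(indices)}")
    return problems


if __name__ == "__main__":
    print(check_rspec(SAMPLE) or "no problems found")
```

To check a real file, read it with `open(path).read()` and pass the text to `check_rspec`. The sample above has only two workers to stay short, so the script does not enforce the three-to-six worker limit.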
